DIGITAL BIZ TECH

Workflows by DIGITAL BIZ TECH

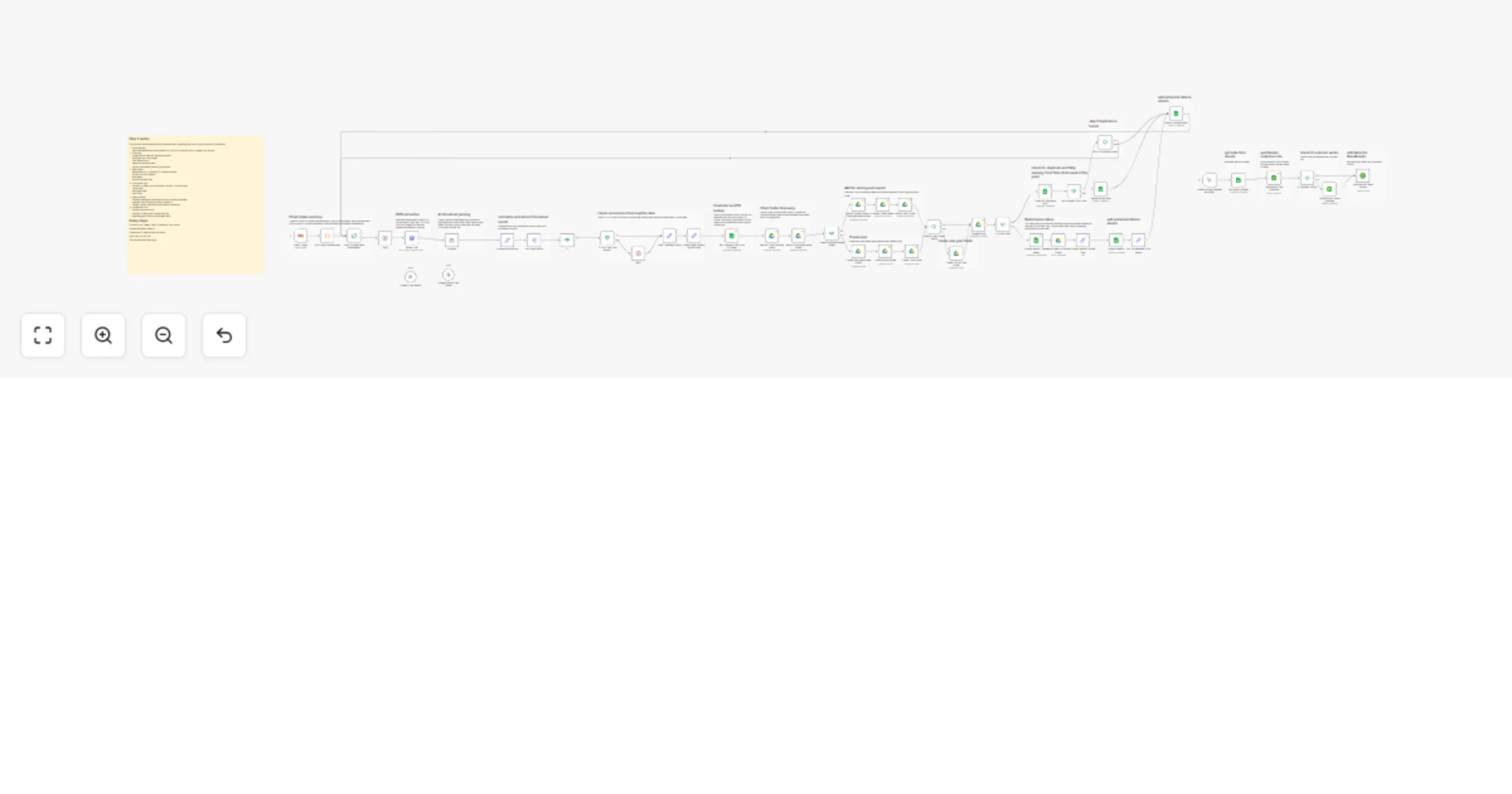

Convert emailed timesheets into QuickBooks invoices with OCR, AI, Gmail and Sheets

AI-Powered Timesheet → Invoice Automation (Gmail + OCR + AI + Google Sheets + QuickBooks). Note: This workflow us...

Automate timesheet to invoice conversion with OpenAI, Gmail & Google Workspace

This workflow converts emailed timesheets into structured invoice rows in Google Sheets and stores them in the correc...

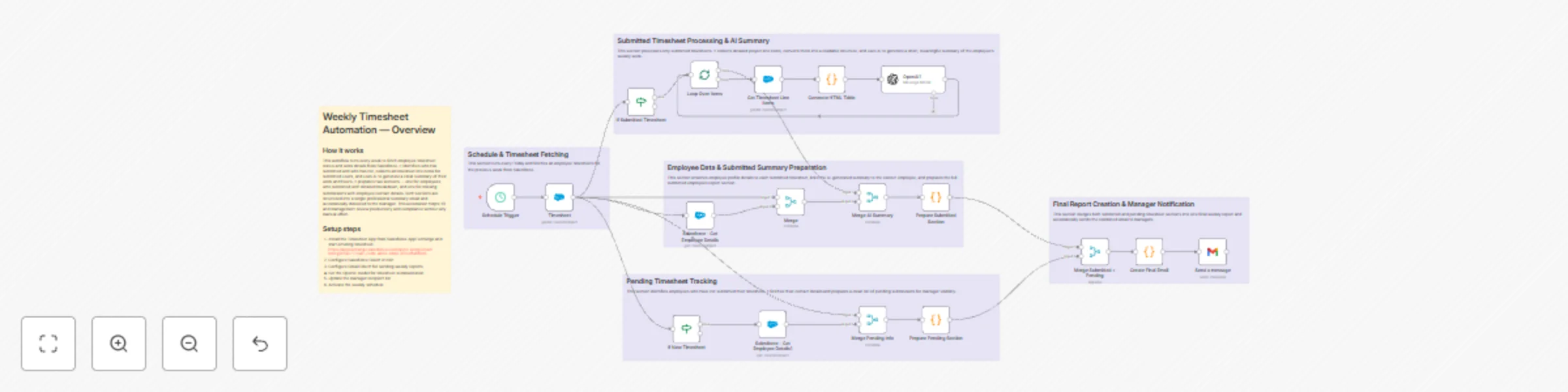

Automate weekly timesheet reporting with Salesforce, OpenAI and Gmail

Weekly Timesheet Report + Pending Submissions Workflow. Overview: This workflow automates the entire weekly timesheet r...

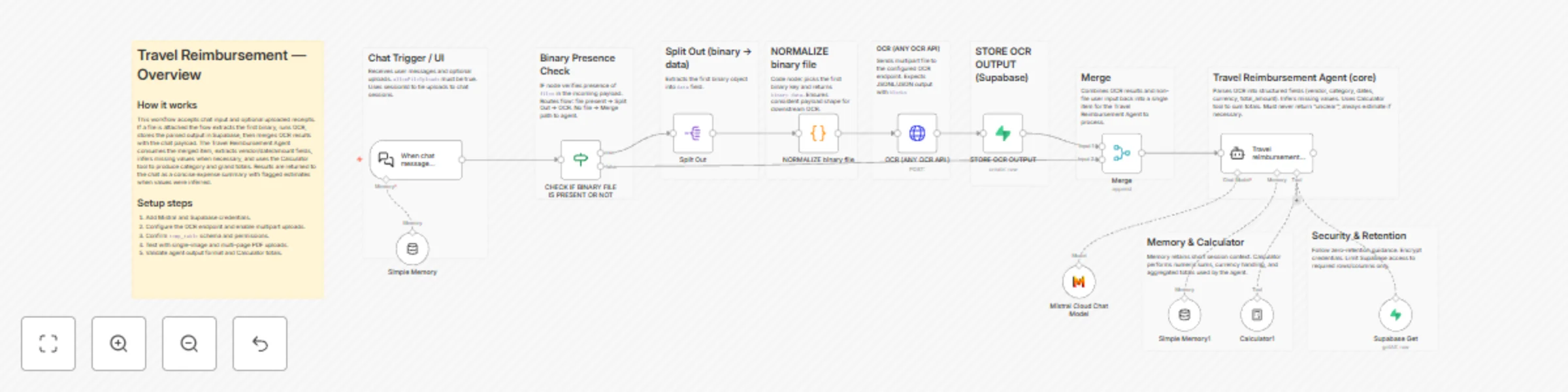

Automate travel expense extraction with OCR, Mistral AI and Supabase

Travel Reimbursement OCR & Expense Extraction Workflow. Overview: This is a lightweight n8n workflow that accepts chat...

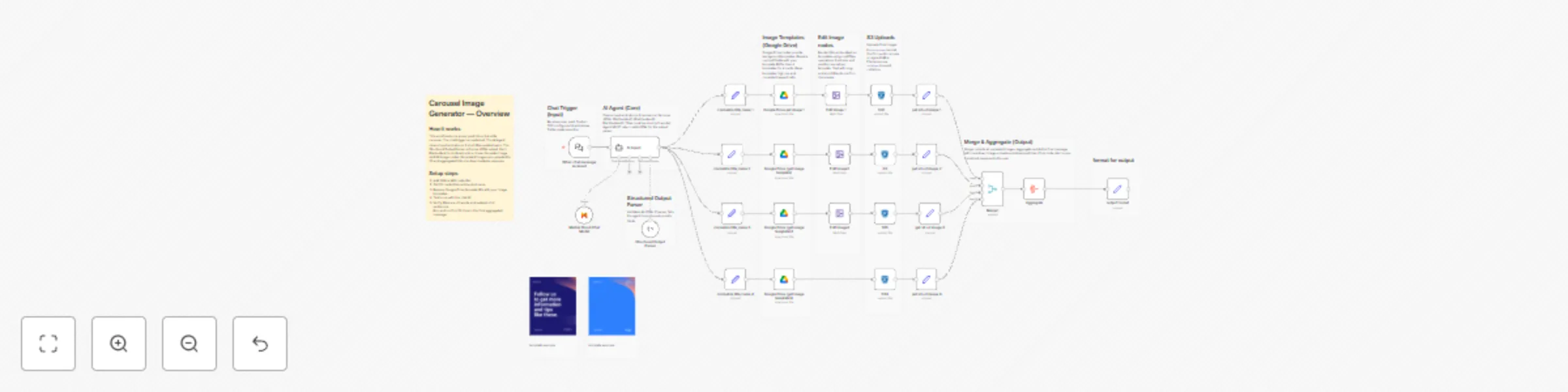

Generate LinkedIn carousel images from text with Mistral AI & S3 Storage

AI Carousel Caption & Template Editor Workflow. Overview: This workflow is a caption-only carousel text generator built...

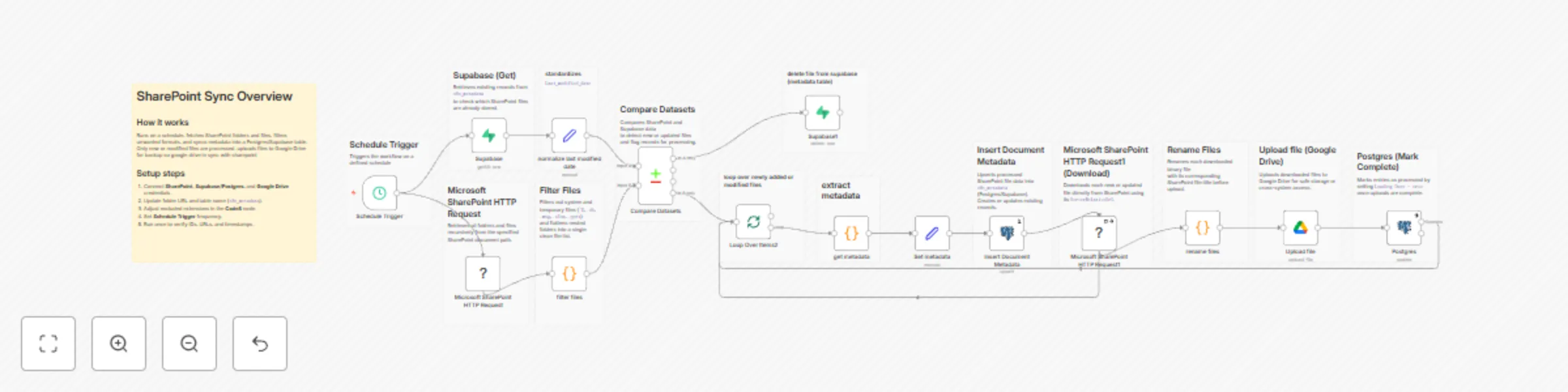

Automated document sync between SharePoint and Google Drive with Supabase

SharePoint → Supabase → Google Drive Sync Workflow. Overview: This workflow is a multi-system document synchronization...
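One common way to structure a sync that tracks state in a database is a diff between the source listing and a tracking table. The following minimal sketch assumes two hypothetical inputs: `source`, mapping SharePoint file IDs to their last-modified timestamps, and `tracked`, mapping file IDs to the timestamp recorded at the last sync (for example, rows in a Supabase table). It is an illustration of that general technique, not the workflow's confirmed design.

```python
def plan_sync(source: dict[str, str], tracked: dict[str, str]):
    """Return (to_upload, to_delete) file IDs.

    A file is uploaded if it is new or its modified timestamp differs from
    the tracked one; it is deleted if it is tracked but no longer in source.
    """
    to_upload = sorted(fid for fid, mtime in source.items() if tracked.get(fid) != mtime)
    to_delete = sorted(fid for fid in tracked if fid not in source)
    return to_upload, to_delete


source = {"a": "2024-01-02", "b": "2024-01-01", "c": "2024-01-05"}
tracked = {"a": "2024-01-01", "b": "2024-01-01", "d": "2023-12-31"}
to_upload, to_delete = plan_sync(source, tracked)
```

Comparing timestamps for equality, rather than ordering, also catches files whose timestamps moved backwards (for example, after a restore).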

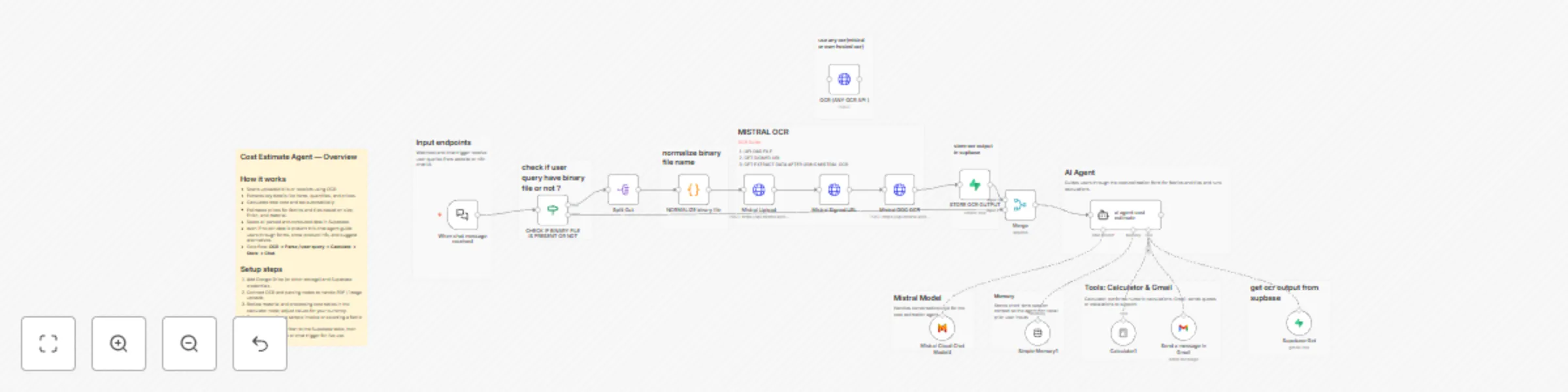

Build a cost estimation chatbot with Mistral AI, OCR & Supabase

AI Cost Estimation Chatbot (Conversational Dual Agent + OCR Workflow). Overview: This workflow introduces a conversatio...

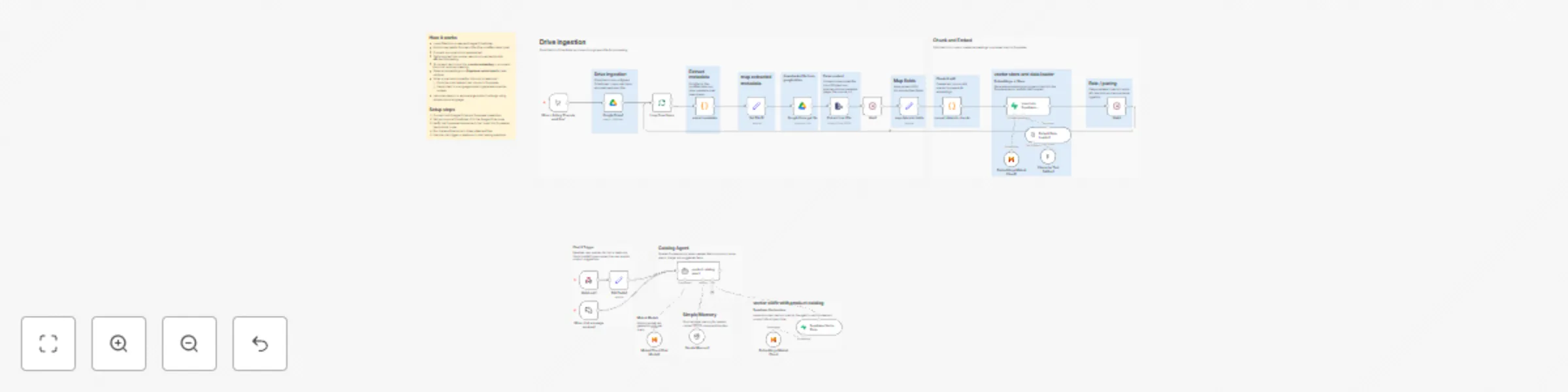

Build a product catalog chatbot with Mistral AI, Google Drive & Supabase RAG

AI Product Catalog Chatbot with Google Drive Ingestion & Supabase RAG. Overview: This workflow builds a dual system tha...

Generate LinkedIn posts with Mistral AI using 7 dynamic content templates

AI-Powered LinkedIn Post Generator Workflow. Overview: This workflow is a two-part intelligent content creation system...

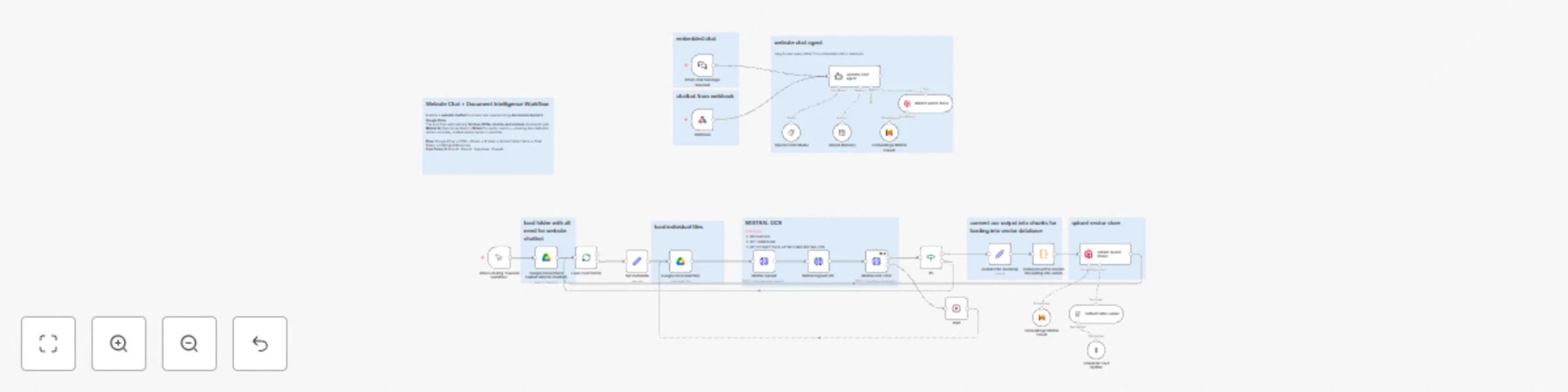

Website chatbot with Google Drive knowledge base using GPT-4 and Mistral AI

AI-Powered Website Chatbot with Google Drive Knowledge Base. Overview: This workflow combines website chatbot intellige...

AI website scraper & company intelligence

AI Website Scraper & Company Intelligence. Description: This workflow automates the process of transforming any website...

Document RAG & chat agent: Google Drive to Qdrant with Mistral OCR

Knowledge RAG & AI Chat Agent: Google Drive to Qdrant. Description: This workflow transforms a Google Drive folder into...