Track AI model executions with Langfuse observability for better performance insights

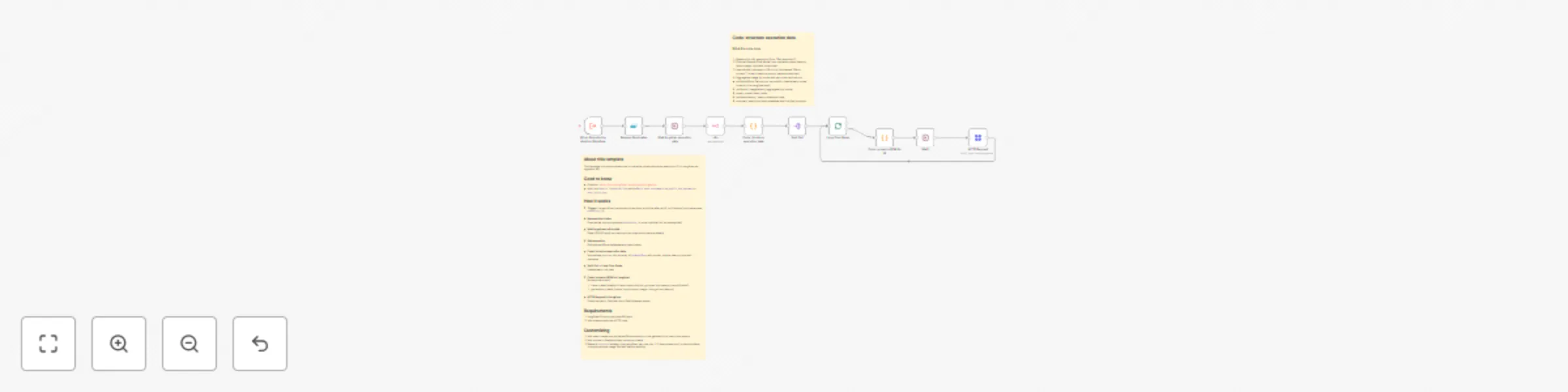

Workflow preview


Important notice

This workflow is provided as-is. Please review and test before using in production.

Overview

About this template

This template demonstrates how to trace observations per execution ID in Langfuse via the ingestion API.

Good to know

- Endpoint: https://cloud.langfuse.com/api/public/ingestion

- Auth is a Generic Credential Type with Basic Auth: username = your_public_key, password = your_secret_key.
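As a rough illustration of what the Basic Auth credential amounts to outside n8n, the sketch below builds (but does not send) a POST request to the ingestion endpoint using only the Python standard library. The function name `build_ingestion_request` and the demo keys are hypothetical, and the `{"batch": [...]}` body shape is an assumption about the ingestion API that should be checked against the Langfuse documentation.

```python
import base64
import json
import urllib.request

# Endpoint taken from the template notes above.
LANGFUSE_INGESTION_URL = "https://cloud.langfuse.com/api/public/ingestion"


def build_ingestion_request(public_key: str, secret_key: str, payload: dict) -> urllib.request.Request:
    """Build a POST request with HTTP Basic Auth (public key as username, secret key as password)."""
    # Basic Auth is "username:password", base64-encoded, behind the "Basic " prefix.
    token = base64.b64encode(f"{public_key}:{secret_key}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        LANGFUSE_INGESTION_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder keys; replace with the keys from your own Langfuse project.
    request = build_ingestion_request("your_public_key", "your_secret_key", {"batch": []})
    print(request.get_method(), request.full_url)
```

Passing the returned object to `urllib.request.urlopen` would actually send it; in the workflow itself the HTTP Request node and its credential do the same job.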

How it works

- Trigger. The workflow is executed by another workflow after an AI run finishes (input parameter execution_id).

- Remove duplicates. Ensures we only process each execution_id once (optional but recommended).

- Wait to get execution data. Delay (60-80 seconds) so totals and per-step metrics are available.

- Get execution. Fetches workflow metadata and token totals.

- Code: structure execution data. Normalizes your run into an array of perModelRuns with model, tokens, latency, and text previews.

- Split Out → Loop Over Items. Iterates over each run step.

- Code: prepare JSON for Langfuse. Builds a batch with:

  - trace-create (stable id trace-<executionId>, grouped into session-<workflowId>)

  - generation-create (model, input/output, usage, timings from latency)

- HTTP Request to Langfuse. Posts the batch. Optional short Wait between sends.
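The batch-building step described above can be sketched roughly as follows. This is not the template's actual Code node: the function `build_batch` and the per-run field names (`model`, `promptTokens`, `completionTokens`, `latencyMs`, `inputPreview`, `outputPreview`) are hypothetical, and the event envelope (`id`, `timestamp`, `type`, `body`) and the `usage` keys are assumptions about the ingestion API. Only the `trace-<executionId>` and `session-<workflowId>` conventions come from the description.

```python
import uuid
from datetime import datetime, timedelta, timezone


def iso(ts: datetime) -> str:
    """Format an aware datetime as a UTC ISO 8601 string with millisecond precision."""
    return ts.astimezone(timezone.utc).isoformat(timespec="milliseconds").replace("+00:00", "Z")


def build_batch(execution_id, workflow_id, run, index, end_time=None):
    """Build one ingestion payload (trace-create + generation-create) for a single run step."""
    end = end_time or datetime.now(timezone.utc)
    # Timings are derived from latency: the start is the end minus the reported latency.
    start = end - timedelta(milliseconds=run.get("latencyMs", 0))
    trace_id = f"trace-{execution_id}"  # stable id, so repeated sends target the same trace
    prompt_tokens = run.get("promptTokens", 0)
    completion_tokens = run.get("completionTokens", 0)

    trace_event = {
        "id": str(uuid.uuid4()),  # event id (assumed to be unique per event)
        "timestamp": iso(end),
        "type": "trace-create",
        "body": {
            "id": trace_id,
            "sessionId": f"session-{workflow_id}",
            "name": f"execution-{execution_id}",  # hypothetical naming choice
        },
    }
    generation_event = {
        "id": str(uuid.uuid4()),
        "timestamp": iso(end),
        "type": "generation-create",
        "body": {
            "id": f"gen-{execution_id}-{index}",  # hypothetical id scheme
            "traceId": trace_id,
            "model": run.get("model"),
            "input": run.get("inputPreview"),
            "output": run.get("outputPreview"),
            "usage": {  # assumed usage shape
                "input": prompt_tokens,
                "output": completion_tokens,
                "total": prompt_tokens + completion_tokens,
            },
            "startTime": iso(start),
            "endTime": iso(end),
        },
    }
    return {"batch": [trace_event, generation_event]}
```

Because the trace id is derived from the execution ID, every step of the same execution lands in the same trace, even though each step is posted as its own batch.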

Requirements

- Langfuse Cloud project and API keys

- n8n instance with the HTTP node

Customizing

- Add span-create and set parentObservationId on the generation to nest under spans.

- Add scores or feedback later via score-create.

- Replace the sessionId strategy (per workflow, per user, etc.).

- If some steps don’t produce tokens, compute and set usage yourself before sending.
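A minimal sketch of the first two customizations, assuming the same event envelope (`id`, `timestamp`, `type`, `body`) as the other events. The helper names (`span_event`, `nest_under_span`, `score_event`) are hypothetical, and the body fields other than `parentObservationId` are assumptions to verify against the Langfuse API reference.

```python
import uuid
from datetime import datetime, timezone


def _envelope(event_type, body):
    """Wrap a body in the assumed ingestion event envelope."""
    return {
        "id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "type": event_type,
        "body": body,
    }


def span_event(trace_id, span_id, name, start_time, end_time):
    """Create a span-create event that generations can be nested under."""
    return _envelope("span-create", {
        "id": span_id,
        "traceId": trace_id,
        "name": name,
        "startTime": start_time,
        "endTime": end_time,
    })


def nest_under_span(generation_event, span_id):
    """Return a copy of a generation-create event with parentObservationId set to the span."""
    nested = dict(generation_event)
    nested["body"] = {**generation_event["body"], "parentObservationId": span_id}
    return nested


def score_event(trace_id, name, value, comment=None):
    """Create a score-create event attached to an existing trace."""
    body = {"traceId": trace_id, "name": name, "value": value}
    if comment is not None:
        body["comment"] = comment
    return _envelope("score-create", body)
```

A span and its nested generations can go in the same batch, while a score can be posted later in a separate request since it only needs the stable trace id.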