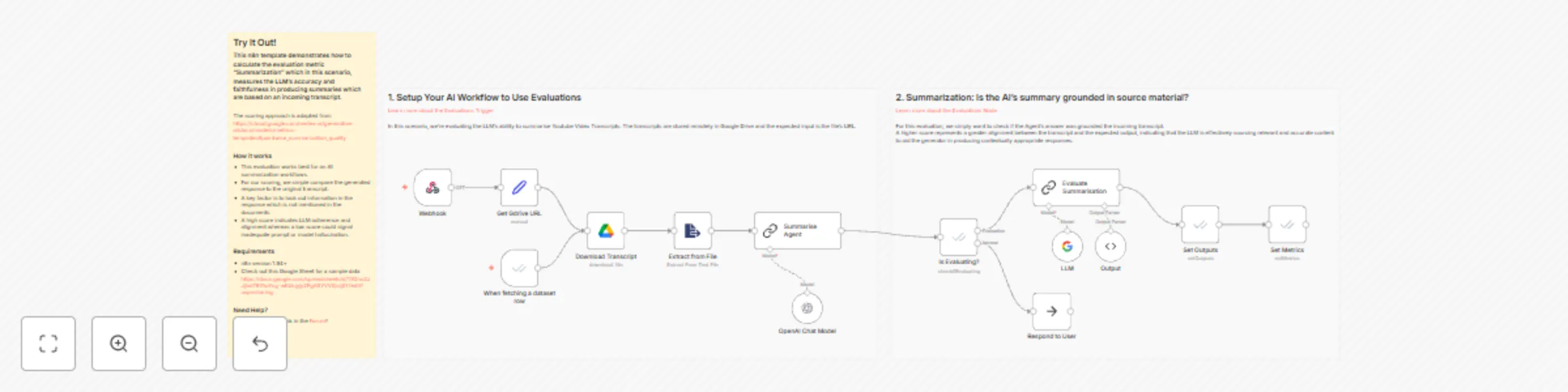

Evaluation metric: summarization


Important notice

This workflow is provided as-is. Please review and test before using in production.

Overview

This n8n template demonstrates how to calculate the evaluation metric "Summarization", which, in this scenario, measures how accurately and faithfully an LLM summarizes an incoming YouTube transcript.

The scoring approach is adapted from https://cloud.google.com/vertex-ai/generative-ai/docs/models/metrics-templates#pointwise_summarization_quality
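The Vertex AI page linked above describes rubric-based metric templates that an LLM applies as a judge. Below is a minimal sketch of how such a judge prompt could be assembled and its numeric score parsed. It assumes a 1 to 5 scale and a reply ending in `Score: <n>`. The rubric wording, the reply format, and the names `RUBRIC`, `build_judge_prompt` and `parse_score` are hypothetical. They are not the template's or Vertex AI's actual text.

```python
import re
from typing import Optional

# Hypothetical rubric paraphrase (assumption): a 1-5 scale in the spirit of the
# Vertex AI pointwise summarization quality template, NOT its exact wording.
RUBRIC = """Rate the summary of the transcript from 1 to 5:
5: fully grounded in the transcript, concise, and fluent
3: mostly grounded, with minor omissions or unsupported details
1: largely unsupported by the transcript or off-topic
End your reply with: Score: <1-5>"""


def build_judge_prompt(transcript: str, summary: str) -> str:
    """Assemble the prompt sent to the judge LLM (hypothetical layout)."""
    return (
        f"{RUBRIC}\n\n"
        f"## Transcript\n{transcript.strip()}\n\n"
        f"## Summary\n{summary.strip()}\n"
    )


def parse_score(judge_reply: str) -> Optional[int]:
    """Extract a 1-5 score from the judge's reply; None if missing or out of range."""
    match = re.search(r"Score:\s*([1-5])\b", judge_reply)
    return int(match.group(1)) if match else None
```

For example, `parse_score("The summary is faithful. Score: 4")` returns `4`. A malformed reply such as `"Score: 10"` yields `None` rather than a misleading value.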

How it works

- This evaluation works best for AI summarization workflows.

- For scoring, we simply compare the generated summary to the original transcript.

- A key factor is to look out for information in the summary that is not mentioned in the transcript.

- A high score indicates that the LLM's output adheres to and aligns with the source, whereas a low score could signal an inadequate prompt or model hallucination.
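The check for "information not mentioned in the transcript" can be illustrated with a crude lexical heuristic. This is not the template's scoring method. It is a minimal sketch that flags summary content words never appearing in the transcript. The tiny stopword list and the names `content_words`, `unsupported_words` and `grounding_ratio` are hypothetical. Paraphrases and synonyms will be wrongly flagged, so an LLM judge remains the better tool for judging faithfulness.

```python
import re
from typing import Set

# Hypothetical, deliberately tiny stopword list for illustration only.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
             "it", "that", "this", "for", "on", "with", "as", "was", "be"}


def content_words(text: str) -> Set[str]:
    """Lowercased word tokens with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9']+", text.lower()) if w not in STOPWORDS}


def unsupported_words(transcript: str, summary: str) -> Set[str]:
    """Content words in the summary that never appear in the transcript."""
    return content_words(summary) - content_words(transcript)


def grounding_ratio(transcript: str, summary: str) -> float:
    """Share of the summary's content words found in the transcript (1.0 = all found)."""
    words = content_words(summary)
    if not words:
        return 1.0
    return 1 - len(unsupported_words(transcript, summary)) / len(words)
```

Consider a transcript about solar panels converting sunlight into electricity and a summary that adds "store it in batteries". Here `unsupported_words` returns `{"store", "batteries"}`, and the ratio drops below 1.0. That is the kind of unsupported detail the low-score case above describes.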

Requirements

- n8n version 1.94+

- Check out this Google Sheet for sample data: https://docs.google.com/spreadsheets/d/1YOnu2JJjlxd787AuYcg-wKbkjyjyZFgASYVV0jsij5Y/edit?usp=sharing