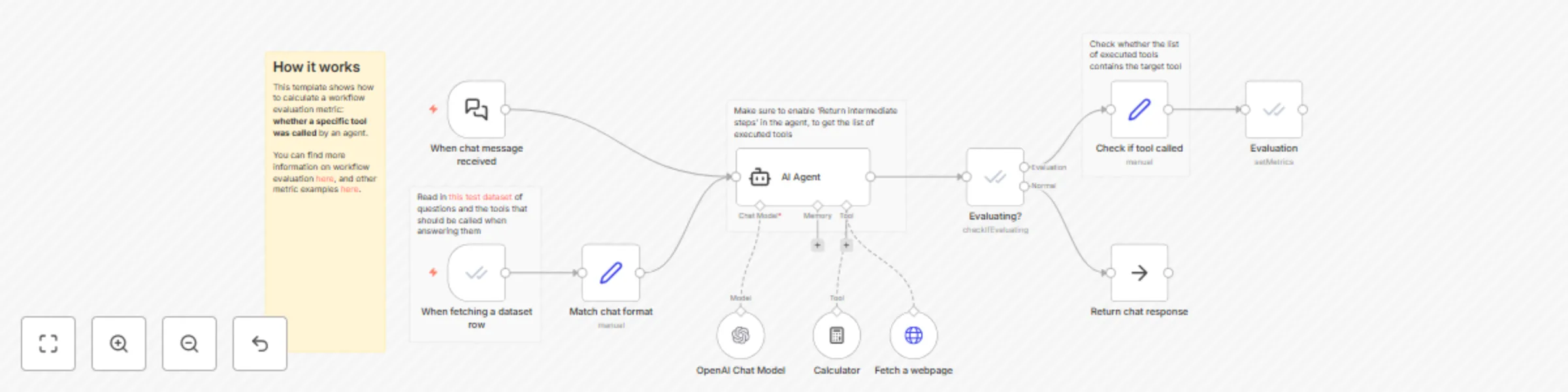

Evaluation metric example: Check if tool was called

Important notice

This workflow is provided as-is. Please review and test before using in production.

Overview

AI evaluation in n8n

This is a template for n8n's evaluation feature.

Evaluation is a technique for building confidence that your AI workflow performs reliably: you run a test dataset containing different inputs through the workflow.

By calculating a metric (score) for each input, you can see where the workflow is performing well and where it isn't.
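To make the idea concrete, here is a minimal sketch in plain Python, outside n8n. The names `evaluate`, `toy_workflow`, and `exact_match` are hypothetical and only stand in for the workflow and the metric; n8n's evaluation feature handles this loop for you.

```python
def evaluate(dataset, workflow, metric):
    """Run every dataset row through the workflow and score the result."""
    results = []
    for row in dataset:
        output = workflow(row["input"])
        score = metric(output, row["expected"])
        results.append({"input": row["input"], "score": score})
    return results


def toy_workflow(text):
    # Hypothetical stand-in for an AI workflow: trims and lowercases the input.
    return text.strip().lower()


def exact_match(actual, expected):
    # Simple metric: 1.0 when the output matches the expected value, else 0.0.
    return 1.0 if actual == expected else 0.0


dataset = [
    {"input": "  Hello ", "expected": "hello"},
    {"input": "World", "expected": "World"},
]

scores = evaluate(dataset, toy_workflow, exact_match)
for result in scores:
    print(result)
```

The per-row scores show which inputs the workflow handles well (the first row) and which it does not (the second row, where the expected casing is lost).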

How it works

This template shows how to calculate a workflow evaluation metric: whether a specific tool was called by an agent.

- We use an evaluation trigger to read in our dataset

- It is wired up in parallel with the regular trigger so that the workflow can be started from either one

- We make sure that the agent outputs the list of tools that it used

- We then check whether the expected tool (from the dataset) is in that list

- Finally we pass this information back to n8n as a metric
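The check in the last three steps can be sketched as follows. This is an illustration in plain Python, not the template's actual node configuration: the shape of `agent_output` (an `intermediate_steps` list whose entries carry a `tool` name) and the dataset field `expected_tool` are assumptions made for the example.

```python
def extract_tools_used(agent_output):
    """Collect the names of the tools the agent called (assumed output shape)."""
    steps = agent_output.get("intermediate_steps", [])
    return [step["tool"] for step in steps]


def tool_called_metric(tools_used, expected_tool):
    """Return 1 if the expected tool appears in the list of tools used, else 0."""
    return 1 if expected_tool in tools_used else 0


# Hypothetical agent output for one dataset row.
agent_output = {
    "output": "The answer is 42.",
    "intermediate_steps": [
        {"tool": "calculator", "input": "6 * 7"},
    ],
}

# Hypothetical dataset rows: each names the tool the agent is expected to call.
row_hit = {"expected_tool": "calculator"}
row_miss = {"expected_tool": "web_search"}

tools_used = extract_tools_used(agent_output)
metric_hit = tool_called_metric(tools_used, row_hit["expected_tool"])
metric_miss = tool_called_metric(tools_used, row_miss["expected_tool"])
print(tools_used, metric_hit, metric_miss)
```

Returning a number rather than a boolean keeps the metric easy to average across the dataset, so the overall score reads as the fraction of rows where the expected tool was called.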