🤖 Build resilient AI workflows with automatic GPT and Gemini failover chain


Important notice

This workflow is provided as-is. Please review and test before using in production.

Overview

This workflow contains community nodes that are only compatible with the self-hosted version of n8n.

How it works

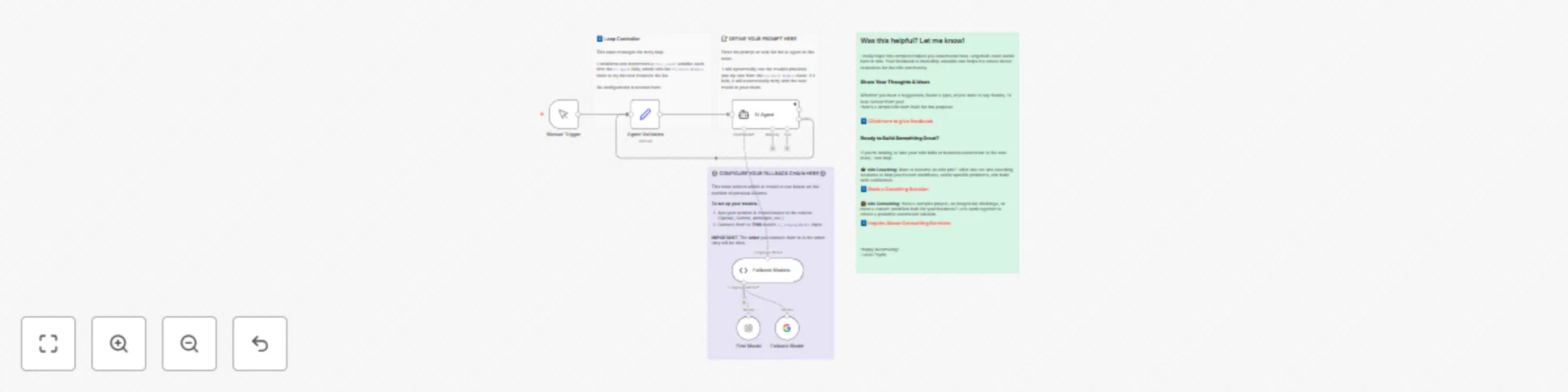

This workflow demonstrates how to create a resilient AI Agent that automatically falls back to a different language model if the primary one fails. This is useful for handling API errors, rate limits, or model outages without interrupting your process.

- State Initialization: The `Agent Variables` node initializes a `fail_count` to 0. This counter tracks how many models have been attempted.
- Dynamic Model Selection: The `Fallback Models` node (a LangChain Code node) acts as a router. It receives a list of all connected AI models and, based on the current `fail_count`, selects which one to use for this attempt (0 for the first model, 1 for the second, etc.).
- Agent Execution: The `AI Agent` node attempts to run your prompt using the model selected by the router.
- The Fallback Loop:
  - On Success: The workflow completes successfully.
  - On Error: If the `AI Agent` node fails, its "On Error" output is triggered. This path loops back to the `Agent Variables` node, which increments the `fail_count` by 1. The process then repeats, causing the `Fallback Models` router to select the next model in the list.
  - Final Failure: If all connected models are tried and fail, the workflow will stop with an error.
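The routing rule described above can be sketched in plain TypeScript. This is only an illustration of the index-based selection, not the code inside the `Fallback Models` node: the `ModelStub` type, the `selectModel` function, and the model names are hypothetical, and no n8n or LangChain API is used.

```typescript
// Hypothetical stand-in for a connected model; not an n8n or LangChain type.
interface ModelStub {
  name: string;
}

// Pick the model for the current attempt: index 0 first, then 1, and so on.
function selectModel(models: ModelStub[], failCount: number): ModelStub {
  if (!Number.isInteger(failCount) || failCount < 0) {
    throw new Error(`Invalid fail_count: ${failCount}`);
  }
  if (failCount >= models.length) {
    // Every connected model has been tried, so stop with an error.
    throw new Error(`All ${models.length} models failed`);
  }
  return models[failCount];
}

// Assumed example chain; the names are placeholders.
const chain: ModelStub[] = [{ name: "gpt" }, { name: "gemini" }];
console.log(selectModel(chain, 0).name); // first attempt
console.log(selectModel(chain, 1).name); // after one failure
```

Because the selection depends only on the counter and the list order, changing the order of the list is enough to change the fallback order.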

Set up steps

Setup time: ~3-5 minutes

- Configure Credentials: Ensure you have the necessary credentials (e.g., for OpenAI, Google AI) configured in your n8n instance.
- Define Your Model Chain:
  - Add the AI model nodes you want to use to the canvas (e.g., OpenAI, Google Gemini, Anthropic).
  - Connect them to the `Fallback Models` node.
  - Important: The order in which you connect the models determines the fallback order. The model nodes created or connected first will be tried first.
- Set Your Prompt: Open the `AI Agent` node and enter the prompt you want to execute.
- Test: Run the workflow. To test the fallback logic, you can temporarily disable the `First Model` node or configure it with invalid credentials to force an error.
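To see what a forced failure of the first model should look like, the test step can be simulated outside n8n. The sketch below assumes a hypothetical `FakeModel` shape and a hypothetical `runWithFallback` helper; the stubbed error message and model names are made up for illustration and do not come from the workflow.

```typescript
// Hypothetical model shape for illustration; real model nodes are configured in n8n.
interface FakeModel {
  name: string;
  invoke: (prompt: string) => Promise<string>;
}

interface FallbackResult {
  output: string;
  modelUsed: string;
  failCount: number;
}

// Try each model in order, counting failures, until one succeeds.
async function runWithFallback(
  models: FakeModel[],
  prompt: string
): Promise<FallbackResult> {
  let failCount = 0;
  while (failCount < models.length) {
    const model = models[failCount];
    try {
      const output = await model.invoke(prompt);
      return { output, modelUsed: model.name, failCount };
    } catch {
      // Mirrors the "On Error" path: increment the counter and try the next model.
      failCount += 1;
    }
  }
  throw new Error(`All ${models.length} models failed`);
}

// Stub that always fails, standing in for a model with invalid credentials.
const brokenFirst: FakeModel = {
  name: "first-model",
  invoke: async () => {
    throw new Error("simulated credential error");
  },
};

// Stub that always succeeds by echoing the prompt.
const workingSecond: FakeModel = {
  name: "second-model",
  invoke: async (prompt) => `echo: ${prompt}`,
};

runWithFallback([brokenFirst, workingSecond], "hello").then((result) => {
  console.log(result.modelUsed, result.failCount);
});
```

In a real run, the equivalent check is that the execution log shows the second model handling the prompt after the first one errors.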