🔐🦙🤖 Private & local Ollama self-hosted AI assistant

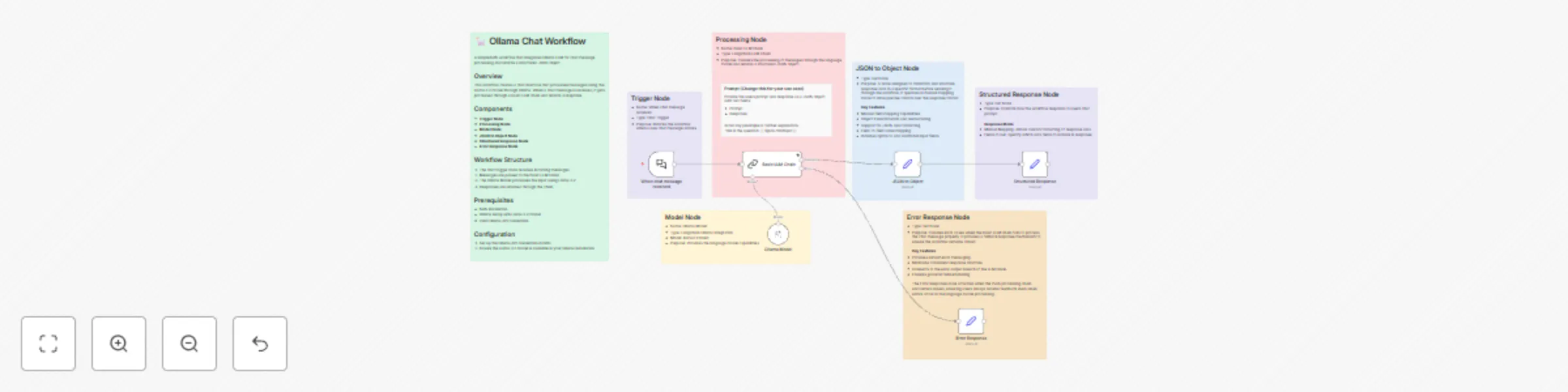

Important notice

This workflow is provided as-is. Please review and test before using in production.

Overview

Turn your local n8n instance into a chat interface backed by any local, private Ollama model, with zero cloud dependencies ☁️. The workflow processes each chat message locally through a language model chain and returns a formatted response 💬.

How it works 🔄

- 💭 Chat messages trigger the workflow

- 🧠 Messages are processed through Llama 3.2 via Ollama (or any other Ollama-compatible model)

- 📊 Responses are formatted as structured JSON

- ⚡ Error handling ensures robust operation
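
To make the flow above concrete outside of n8n, here is a minimal Python sketch of the same idea: send a chat message to a local Ollama server, ask for JSON output, and fall back to an error object when anything goes wrong. It is not the workflow's actual implementation. The address `http://localhost:11434`, the model tag `llama3.2`, the system prompt, and the `prompt`/`response` keys are assumptions chosen for illustration.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its usual local address.
OLLAMA_URL = "http://localhost:11434/api/chat"


def build_payload(user_message: str, model: str = "llama3.2") -> dict:
    """Build a non-streaming chat request that asks the model for JSON output."""
    return {
        "model": model,
        "messages": [
            # Hypothetical system prompt; the template's own prompt may differ.
            {"role": "system",
             "content": "Reply as a JSON object with the keys 'prompt' and 'response'."},
            {"role": "user", "content": user_message},
        ],
        "format": "json",
        "stream": False,
    }


def parse_reply(raw_body: str) -> dict:
    """Extract the model's JSON answer, or return an error object if parsing fails."""
    try:
        content = json.loads(raw_body)["message"]["content"]
        parsed = json.loads(content)
        if not isinstance(parsed, dict):
            raise ValueError("model output is not a JSON object")
        return parsed
    except (KeyError, TypeError, ValueError) as exc:
        return {"error": f"could not parse model output: {exc}"}


def chat(user_message: str, model: str = "llama3.2", timeout: float = 60.0) -> dict:
    """Send one message to the local Ollama server and return structured output."""
    data = json.dumps(build_payload(user_message, model)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL, data=data, headers={"Content-Type": "application/json"}
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return parse_reply(response.read().decode("utf-8"))
    except OSError as exc:  # covers connection errors, HTTP errors, and timeouts
        return {"error": f"Ollama request failed: {exc}"}
```

With Ollama running locally, `chat("Hello!")` returns either the parsed JSON object from the model or a dictionary with a single `error` key, so the caller always receives structured data.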

Set up steps 🛠️

- 📥 Install n8n and Ollama

- ⚙️ Download the Llama 3.2 model (or another model) with Ollama

- 🔑 Configure Ollama API credentials

- ✨ Import and activate workflow
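
Before activating the workflow, it can help to confirm that Ollama is reachable and that the model has been downloaded (for example with `ollama pull llama3.2`). The sketch below queries Ollama's model-listing endpoint and checks for a model by name. The address `http://localhost:11434` is an assumption based on Ollama's usual local default, and the helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its usual local address.
TAGS_URL = "http://localhost:11434/api/tags"


def model_available(tags: dict, wanted: str) -> bool:
    """Return True if `wanted` matches a listed model, with or without a tag suffix."""
    for entry in tags.get("models", []):
        name = entry.get("name", "")
        # "llama3.2" should match "llama3.2:latest" as well as an exact name.
        if name == wanted or name.split(":", 1)[0] == wanted:
            return True
    return False


def fetch_tags(timeout: float = 5.0) -> dict:
    """Fetch the list of locally installed models from the Ollama server."""
    with urllib.request.urlopen(TAGS_URL, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `model_available(fetch_tags(), "llama3.2")` on the machine that runs Ollama reports whether the model is installed. The same base address is what you would typically enter in the n8n Ollama credentials when n8n and Ollama run on the same host.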

This template provides a foundation for building AI-powered chat applications while maintaining full control over your data and infrastructure 🚀.