Advanced Workflows

For experienced users. Complex workflows with advanced logic, error handling, and optimizations.

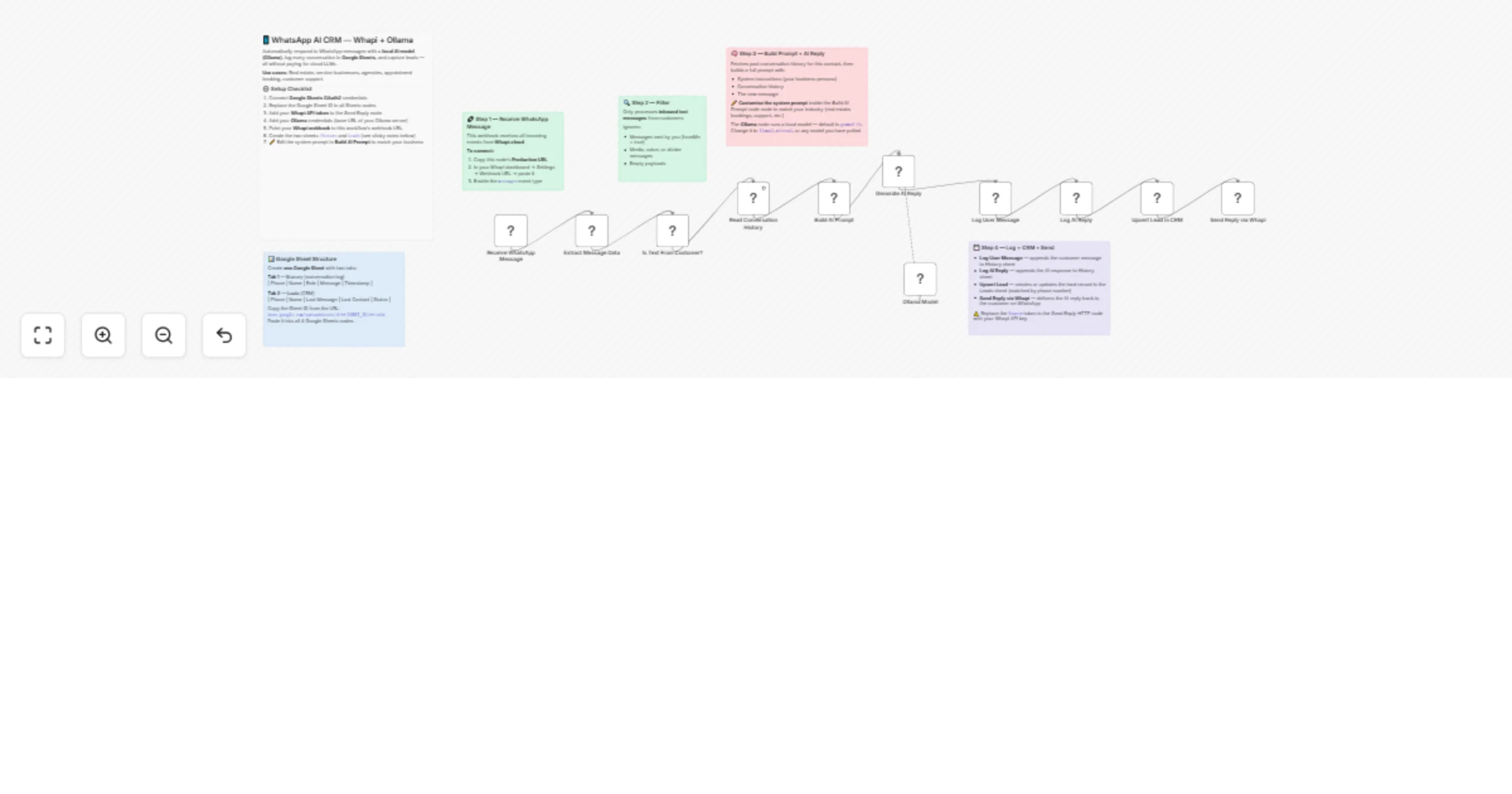

Automate WhatsApp lead capture and replies with Whapi, Ollama and Sheets

Receive WhatsApp messages via Whapi, generate AI replies with a local Ollama model, log conversations in Google Sheet...

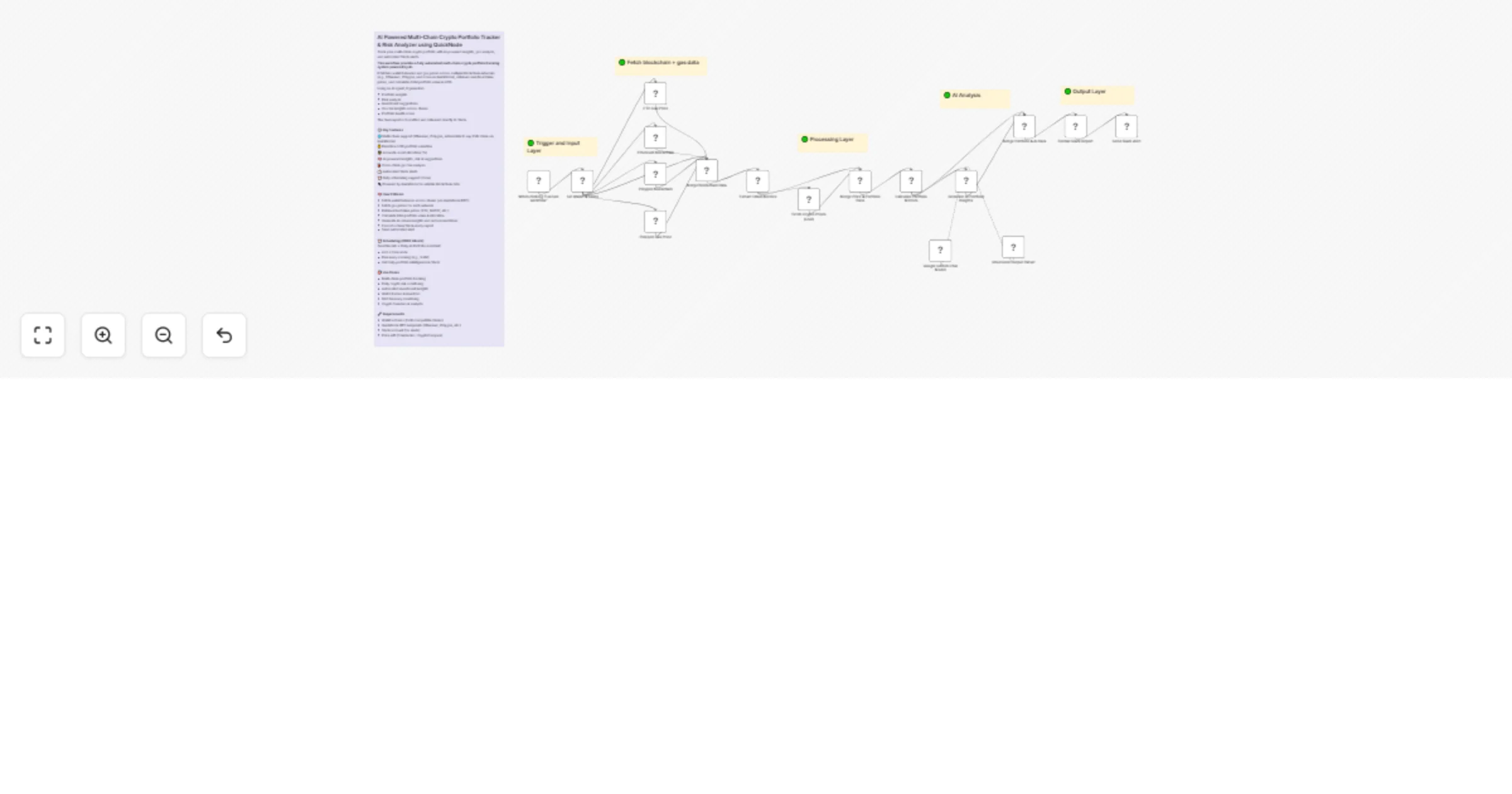

Track multi-chain crypto portfolios and analyze risk with Gemini and QuickNode

This workflow provides a fully automated multi-chain crypto portfolio tracking system powered by AI. It fetches walle...

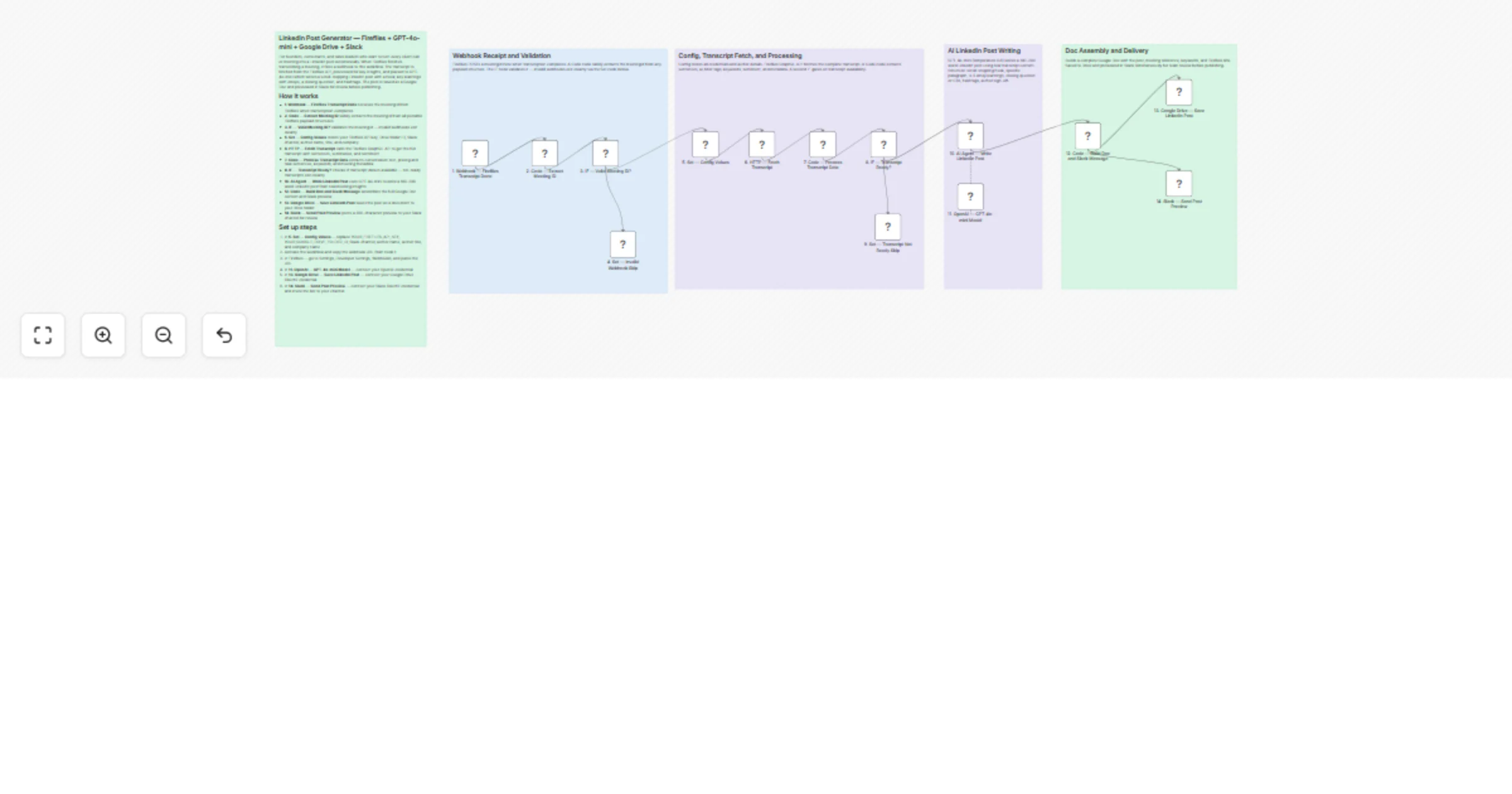

Create LinkedIn post drafts from Fireflies meetings with GPT-4o-mini, Drive and Slack

Description: Connect Fireflies to this workflow once and every meeting you record becomes a LinkedIn post draft automa...

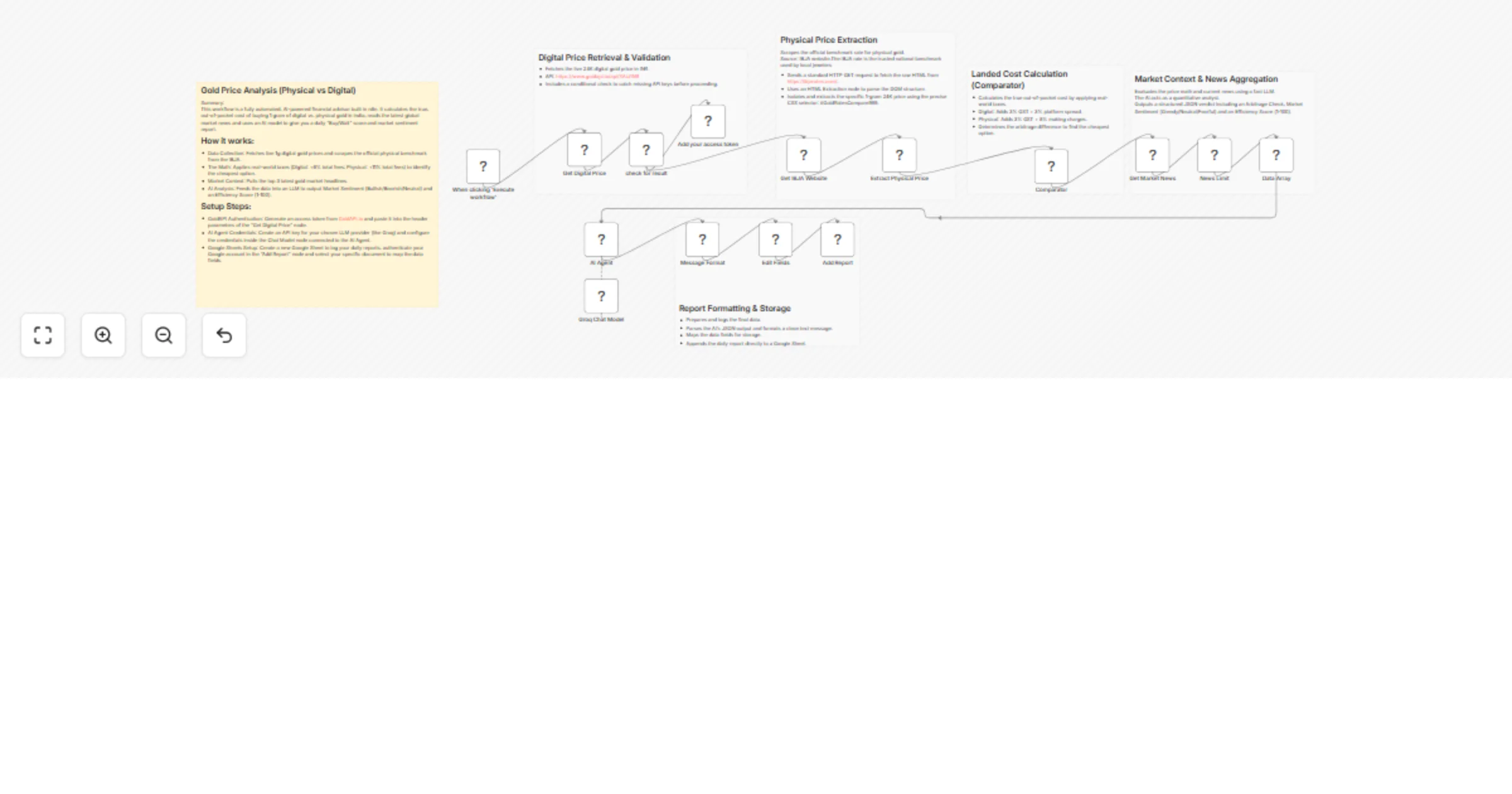

Compare physical vs digital 24K gold costs and returns with GoldAPI, IBJA, Groq and Google Sheets

Gold Investment Intelligence: Physical vs. Digital 24K Cost & Return Analysis. This high-performance n8n workflow auto...

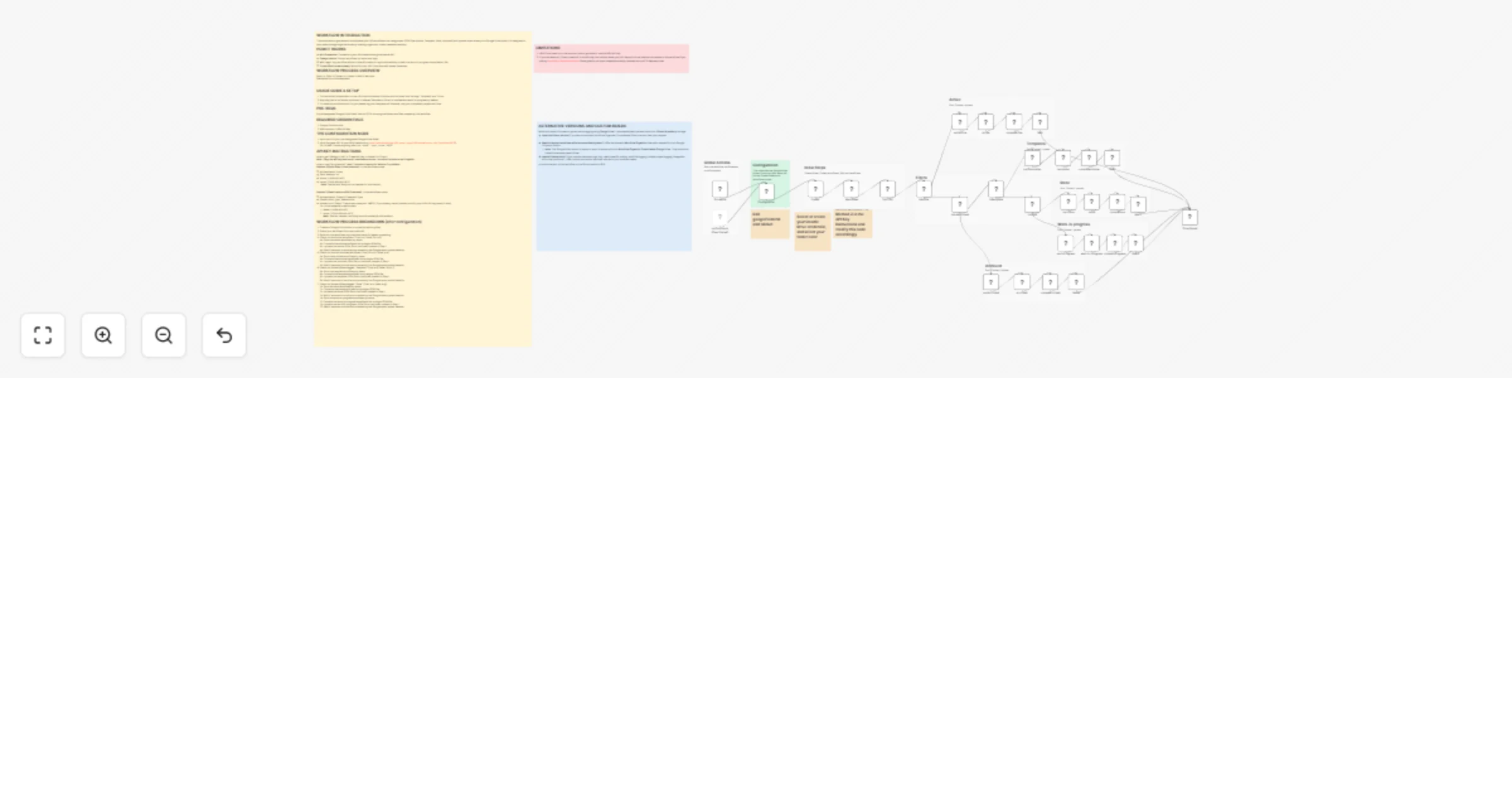

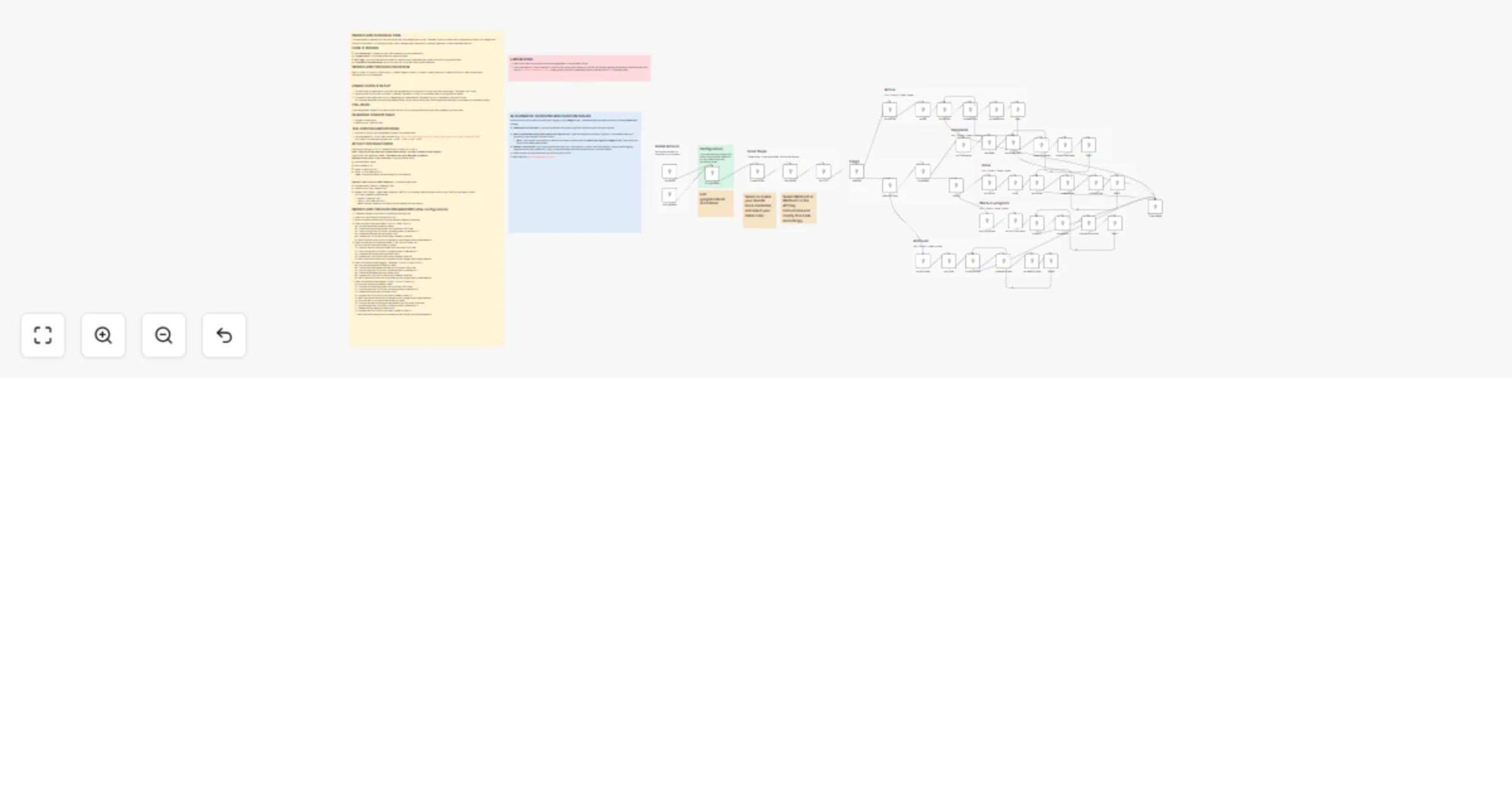

Organize and back up n8n workflows to Google Drive as consolidated JSON

WORKFLOW INTRODUCTION: This automation organizes and consolidates your n8n workflows into categorized JSON files (Acti...

Organize and back up n8n workflows to Google Drive folders

WORKFLOW INTRODUCTION: This automation organizes your n8n workflow files into categories (Active, Template, Done, Ar...

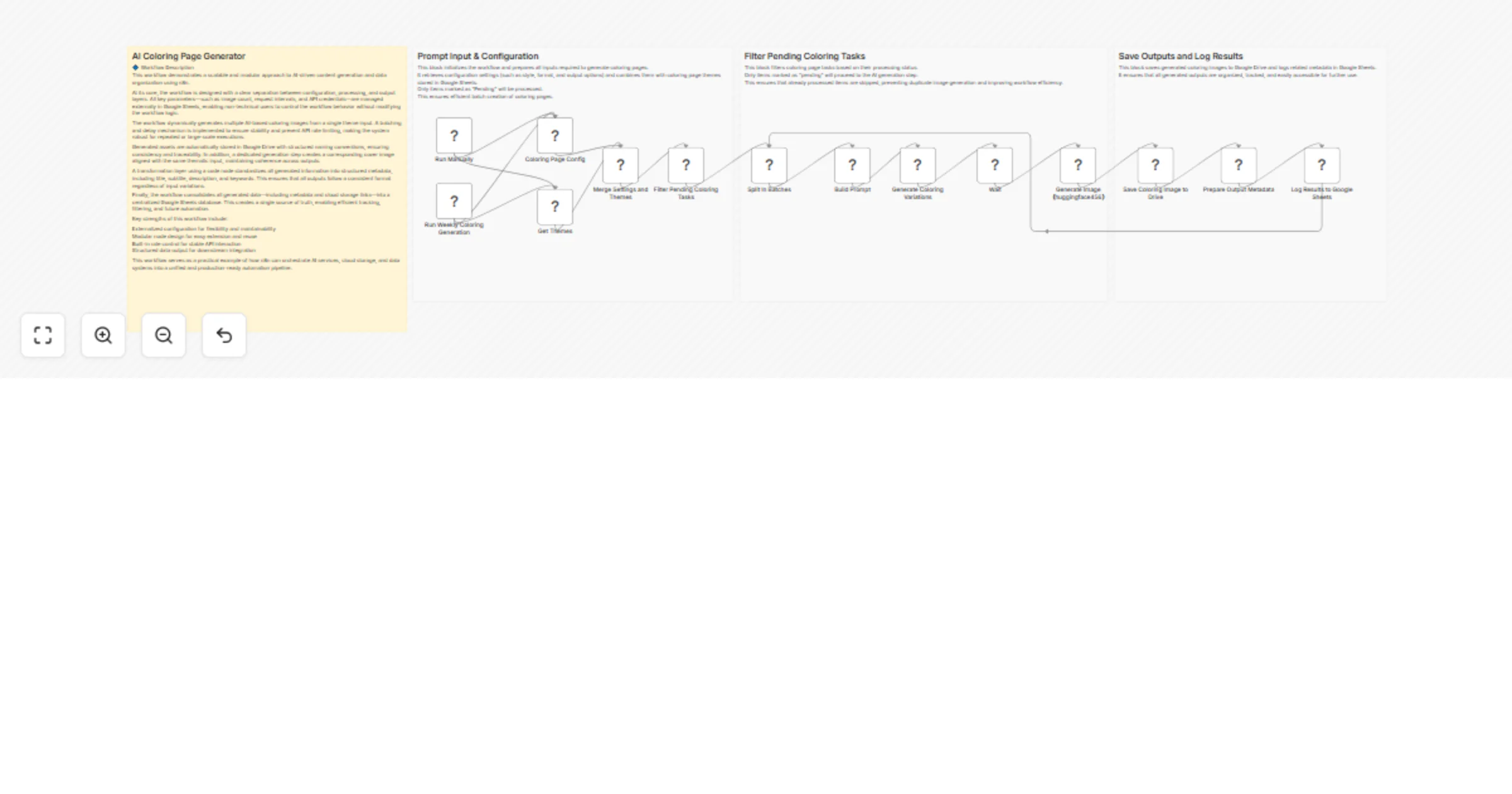

Create AI coloring book pages from Google Sheets and save to Google Drive with Stable Diffusion

This workflow automatically generates printable coloring pages from themes stored in Google Sheets. It reads configur...

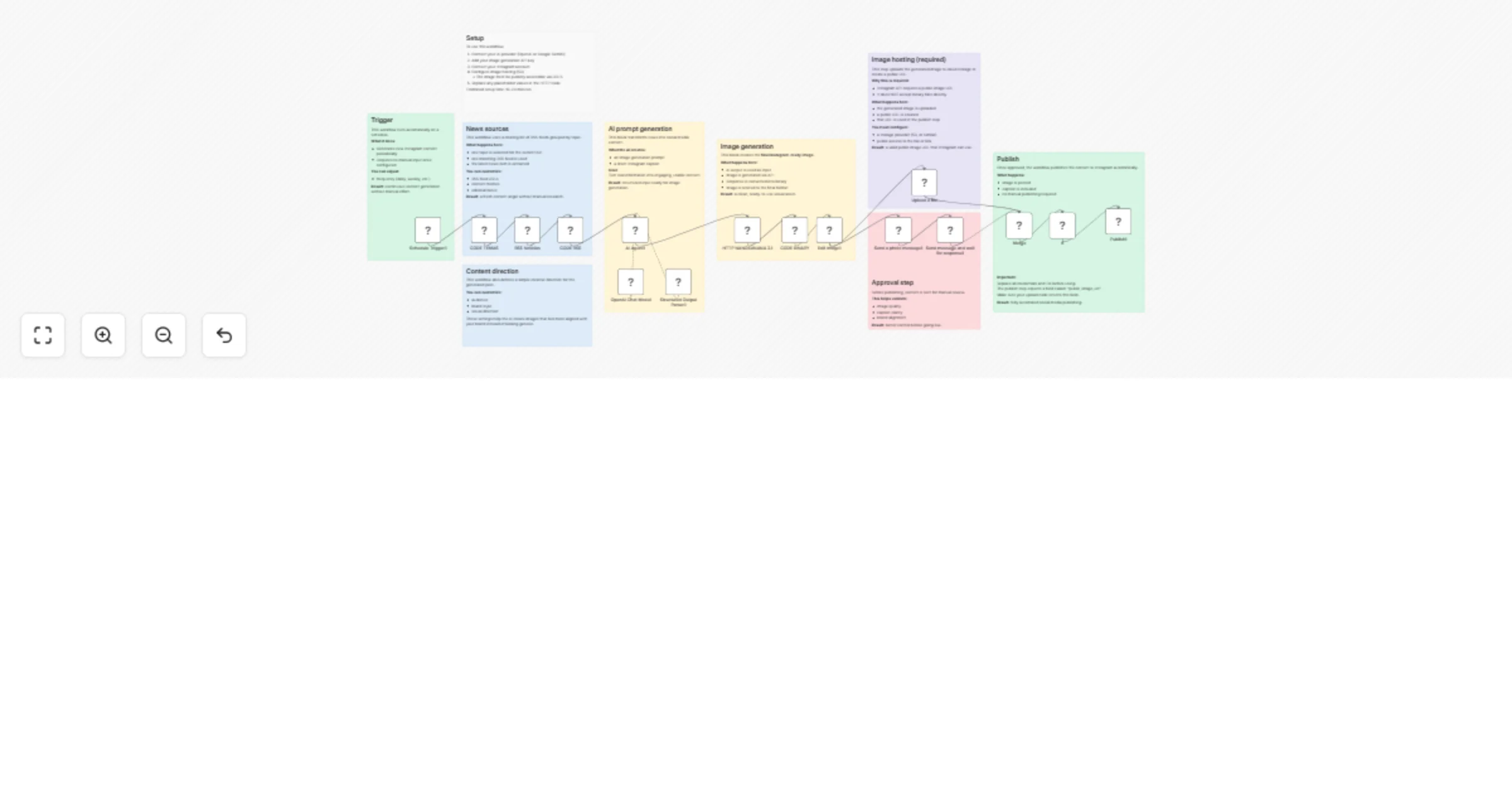

Generate Instagram posts with OpenAI, RSS news, and auto image posting

What this workflow does: This workflow automatically generates and publishes Instagram content using AI. It: Generates...

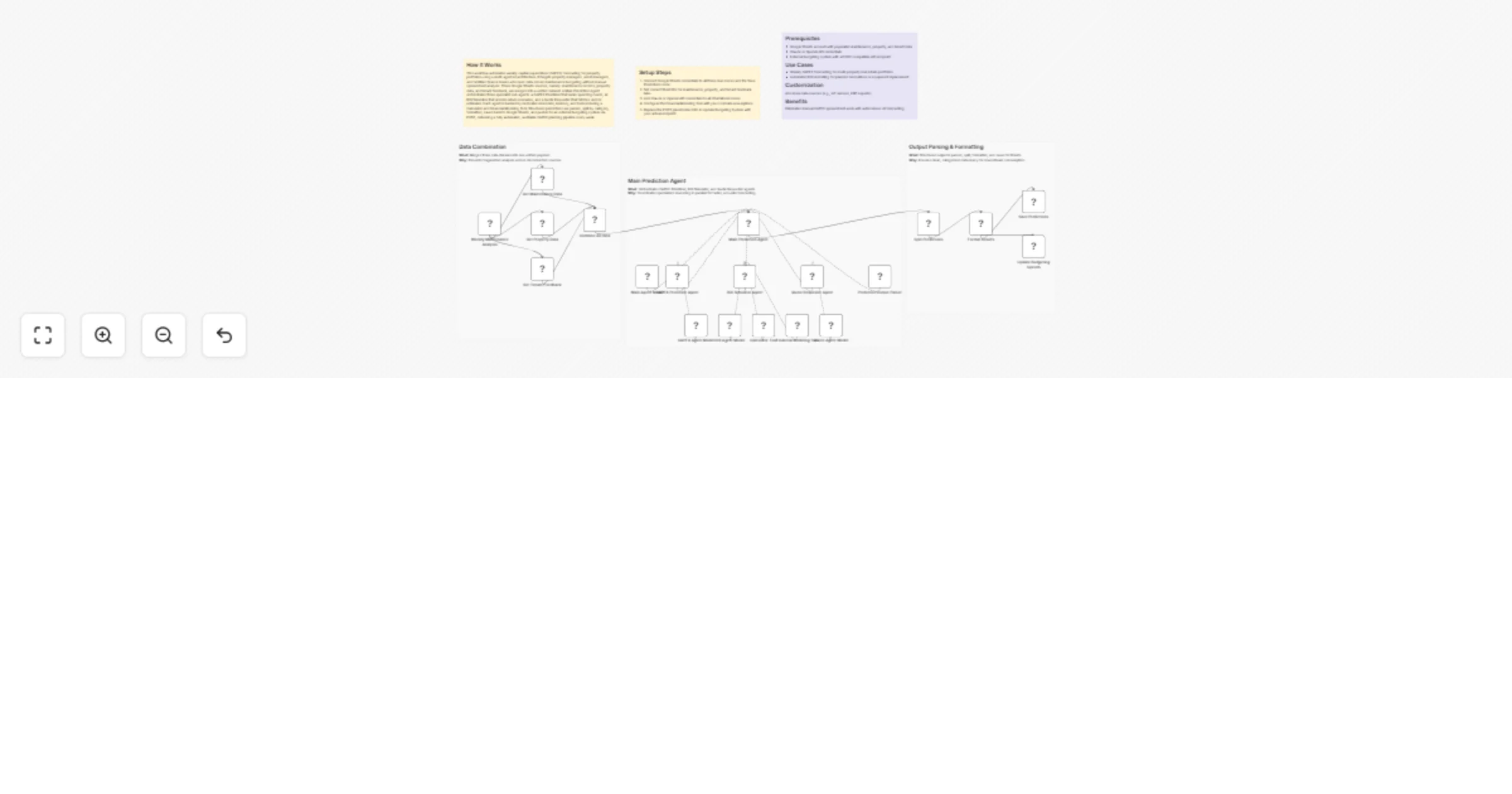

Forecast property CAPEX and ROI weekly using Google Sheets and GPT-4o

How It Works: This workflow automates weekly capital expenditure (CAPEX) forecasting for property portfolios using a m...

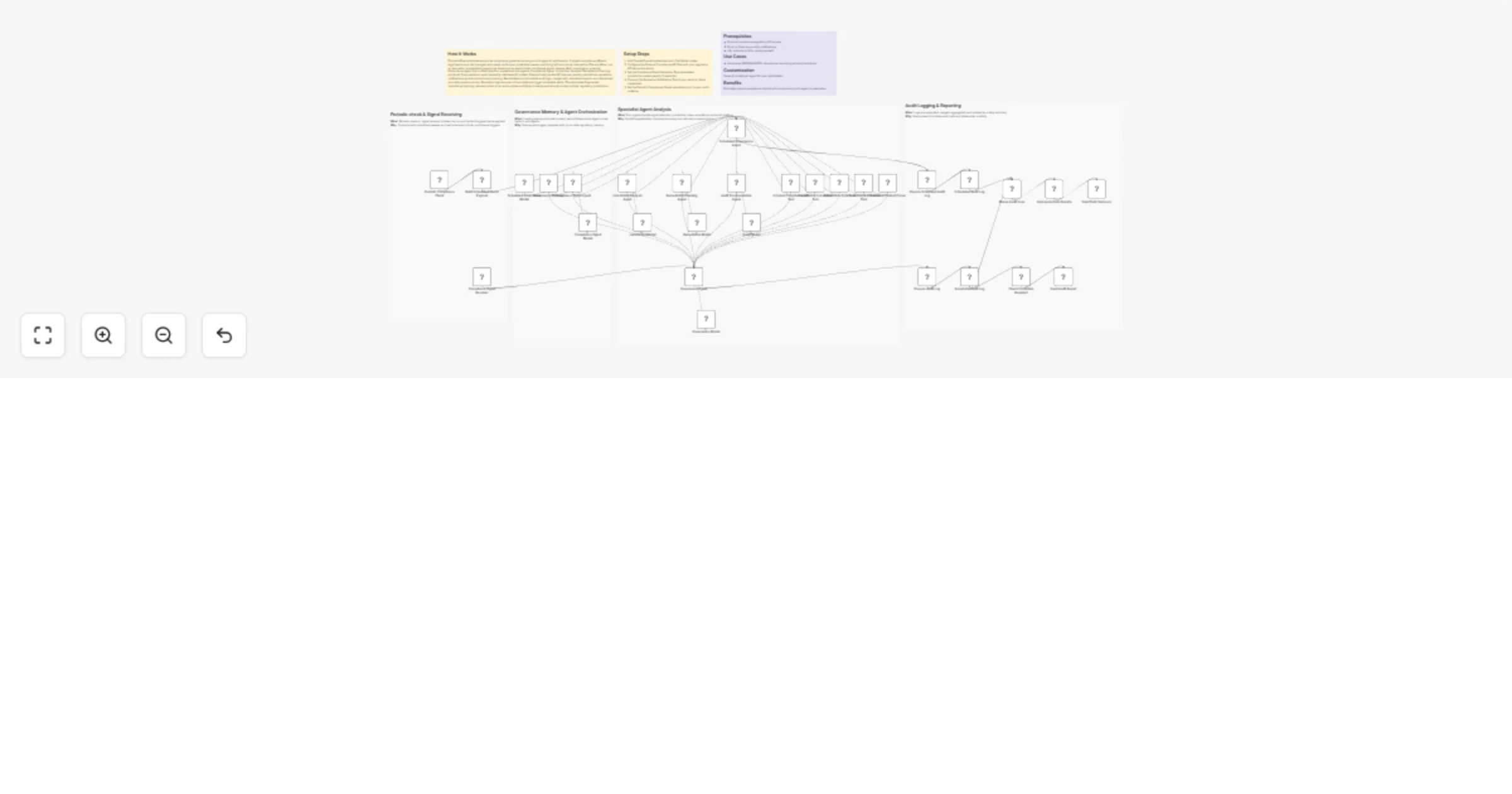

Orchestrate multi-agent compliance monitoring and audit logging with GPT-4o and Slack

How It Works: This workflow automates enterprise compliance governance using a multi-agent AI architecture. It targets...

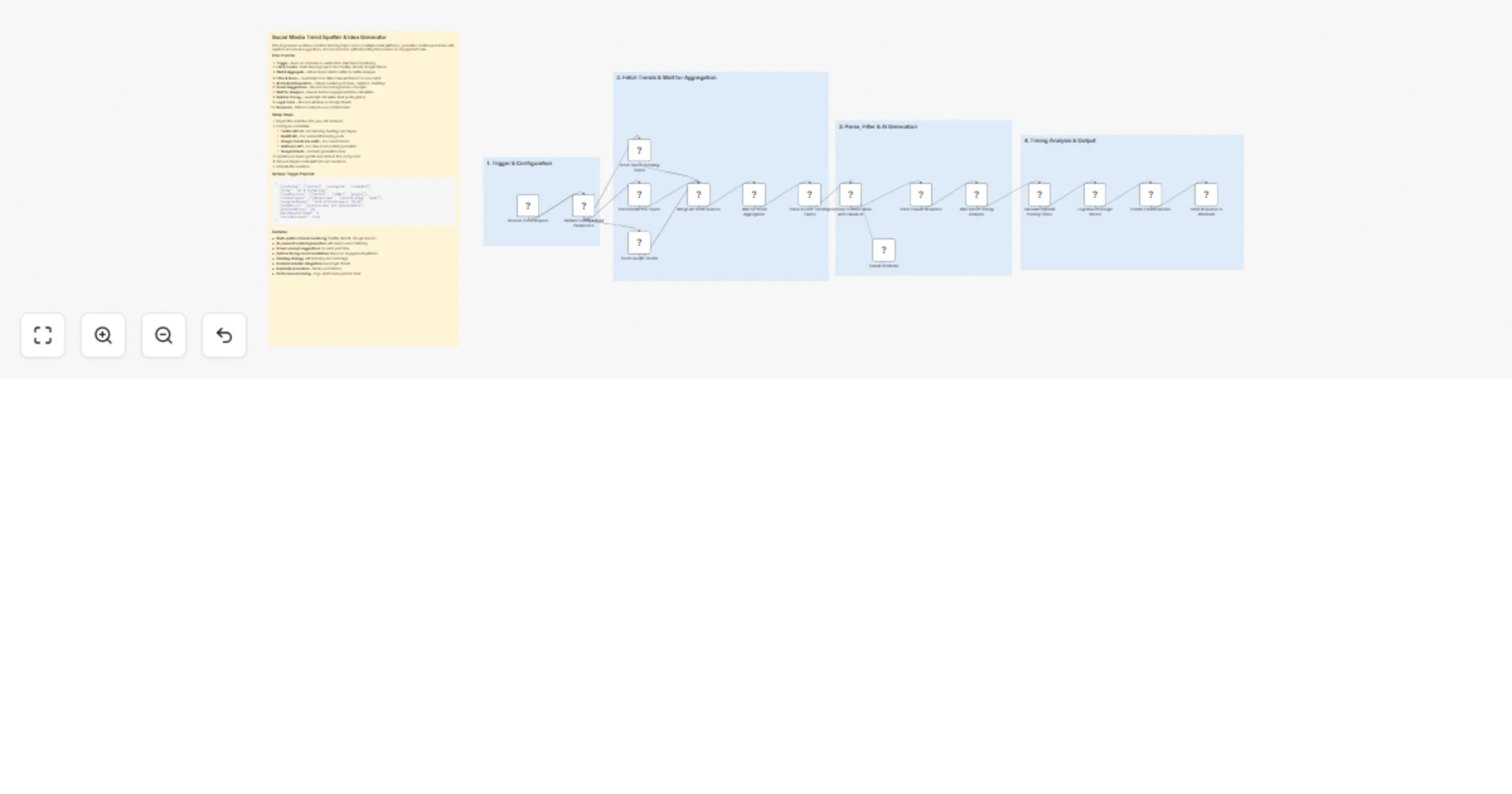

Spot social media trends and generate post ideas with Claude and Google Sheets

This AI-powered workflow monitors trending topics across multiple social platforms, generates creative post ideas wit...

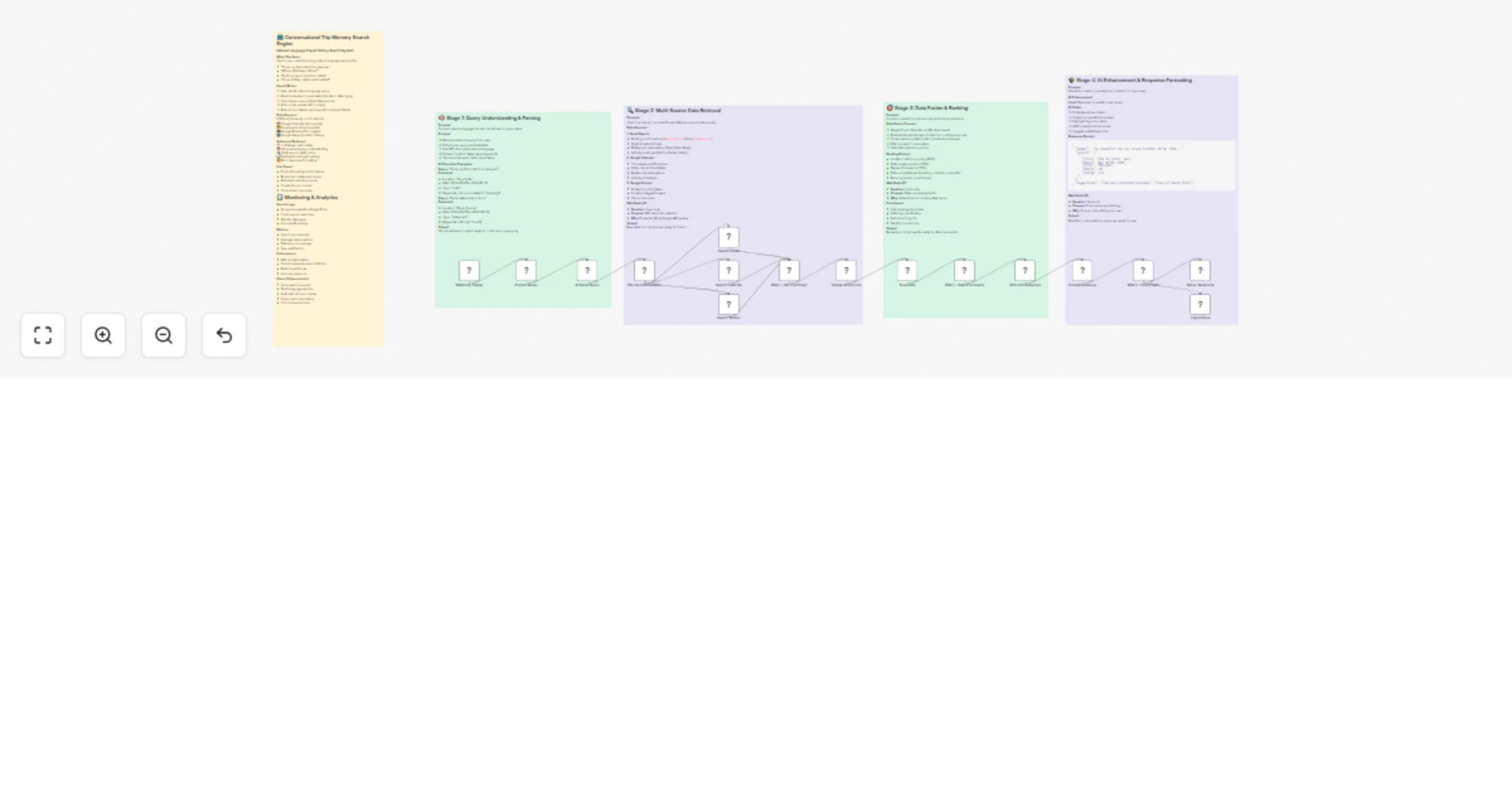

Search your travel memories with Gmail, Google Photos, GPT-4 and Claude

What This Does: Search your past trips using natural language queries like: "Show my Goa hotel from last year" "Where...

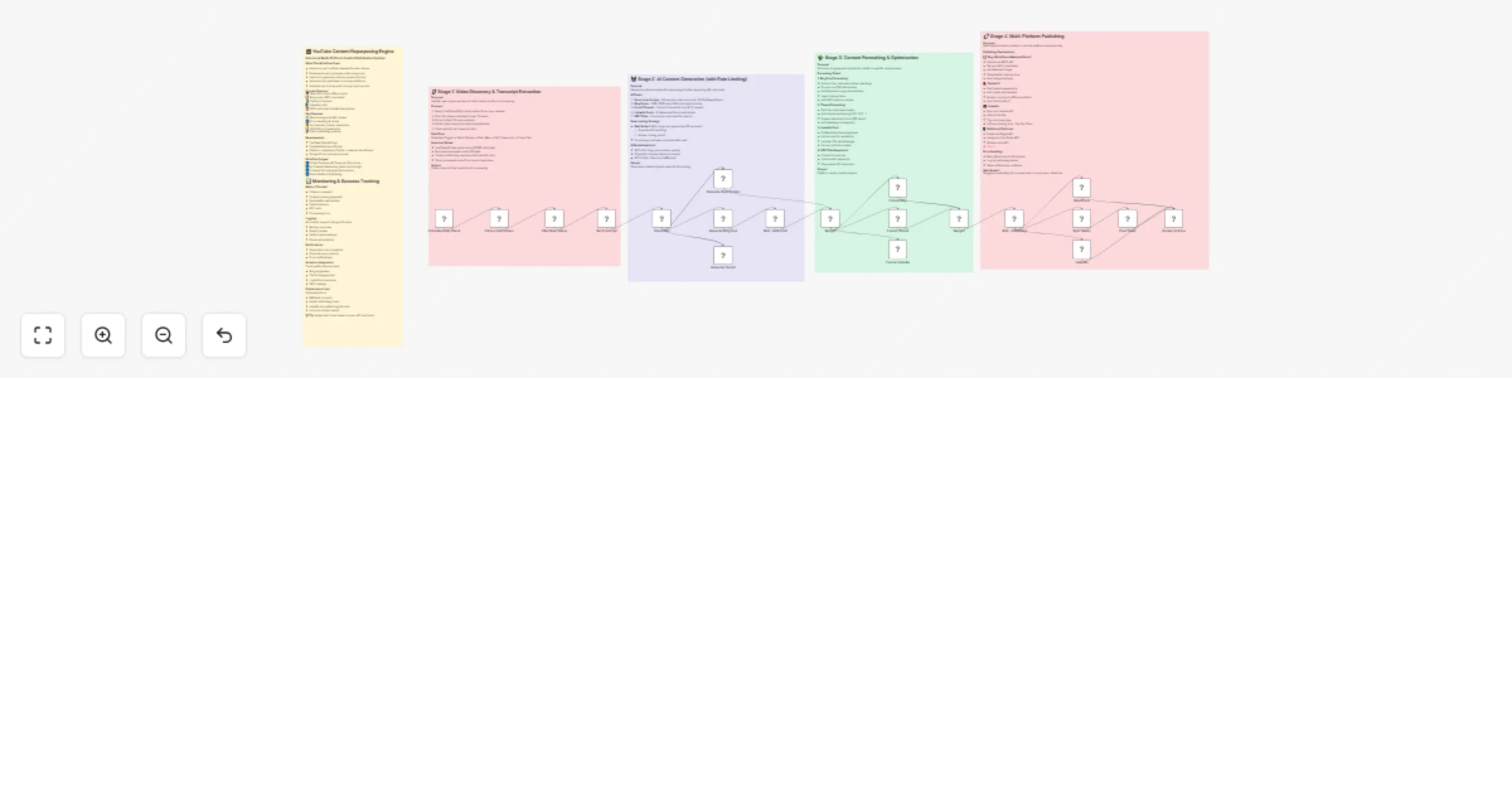

Repurpose YouTube videos into multi-platform content with OpenAI and Anthropic

Advanced Multi-Platform Content Distribution System. What This Workflow Does: Monitors your YouTube channel for new vi...

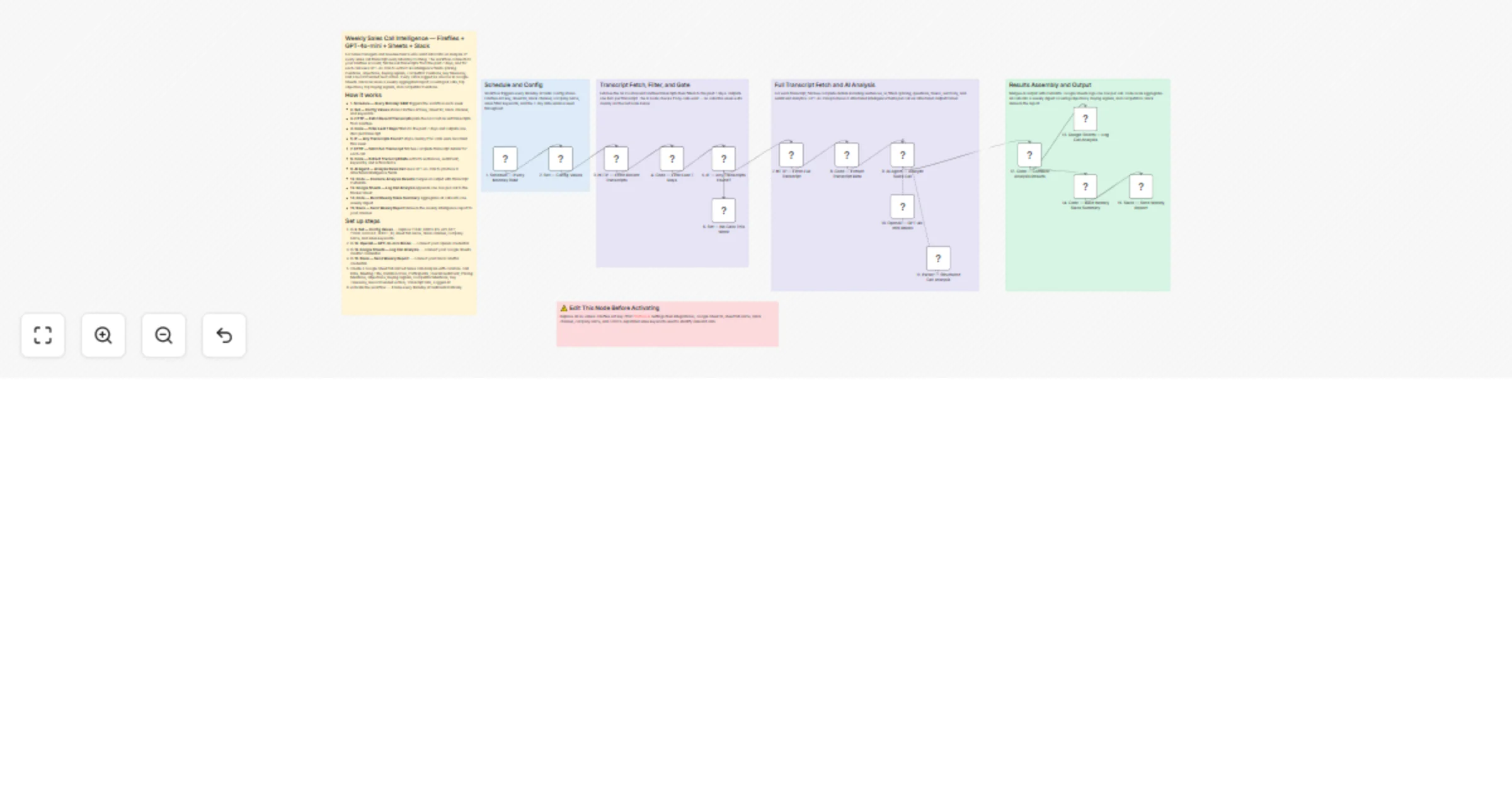

Analyze weekly Fireflies sales calls with GPT-4o-mini, Google Sheets and Slack

Description: Connect your Fireflies account once and activate the workflow; every Monday at 9 AM it runs automatically...

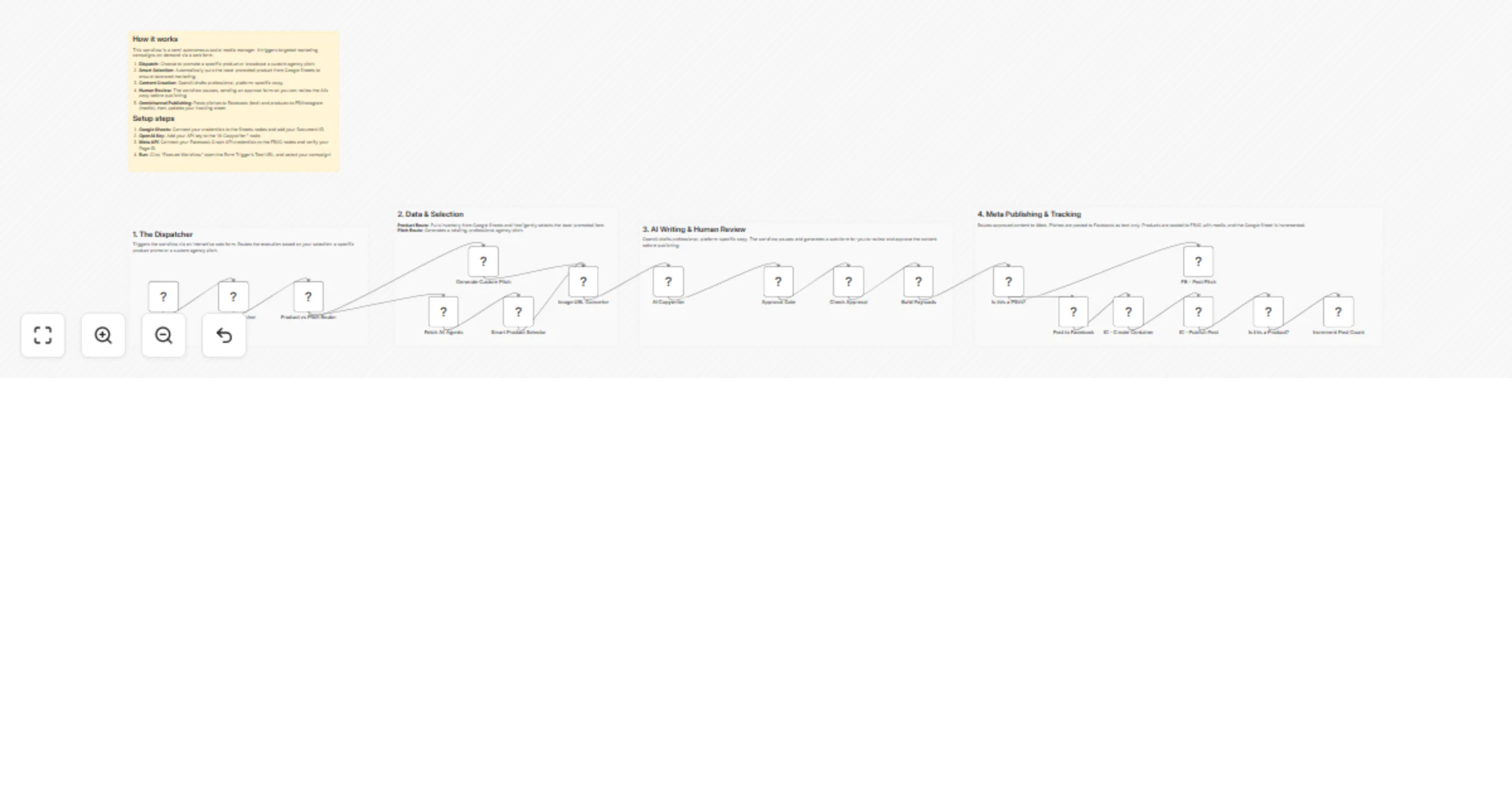

Auto-post Facebook and Instagram content with OpenAI, Google Sheets and review

Who is this for? This workflow is designed for social media managers, marketing agencies, and business owners who wan...

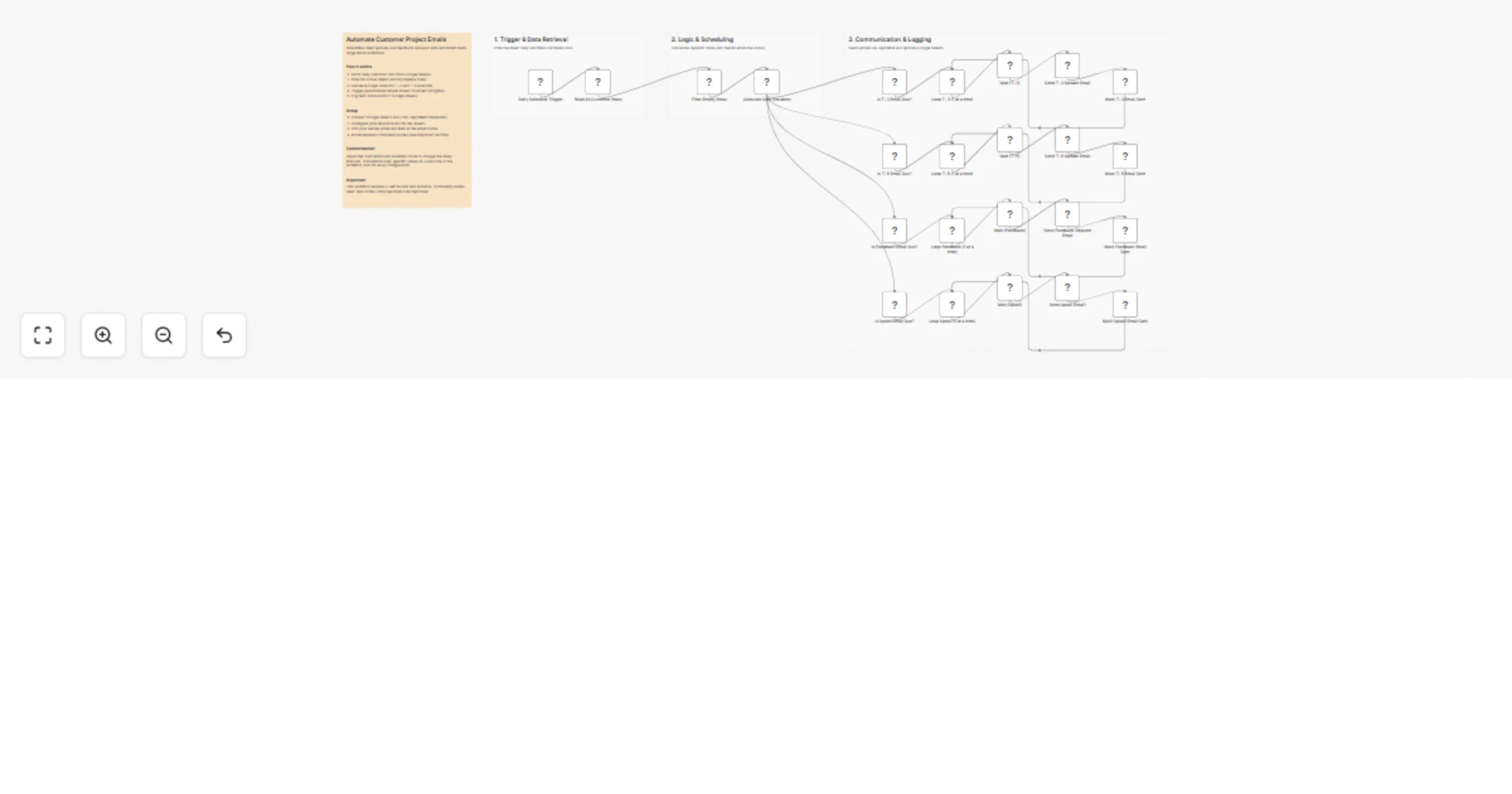

Send multi-stage customer project emails from Google Sheets with Zoho ZeptoMail

Keep your clients informed at every stage of their project without lifting a finger. This workflow runs daily from Go...

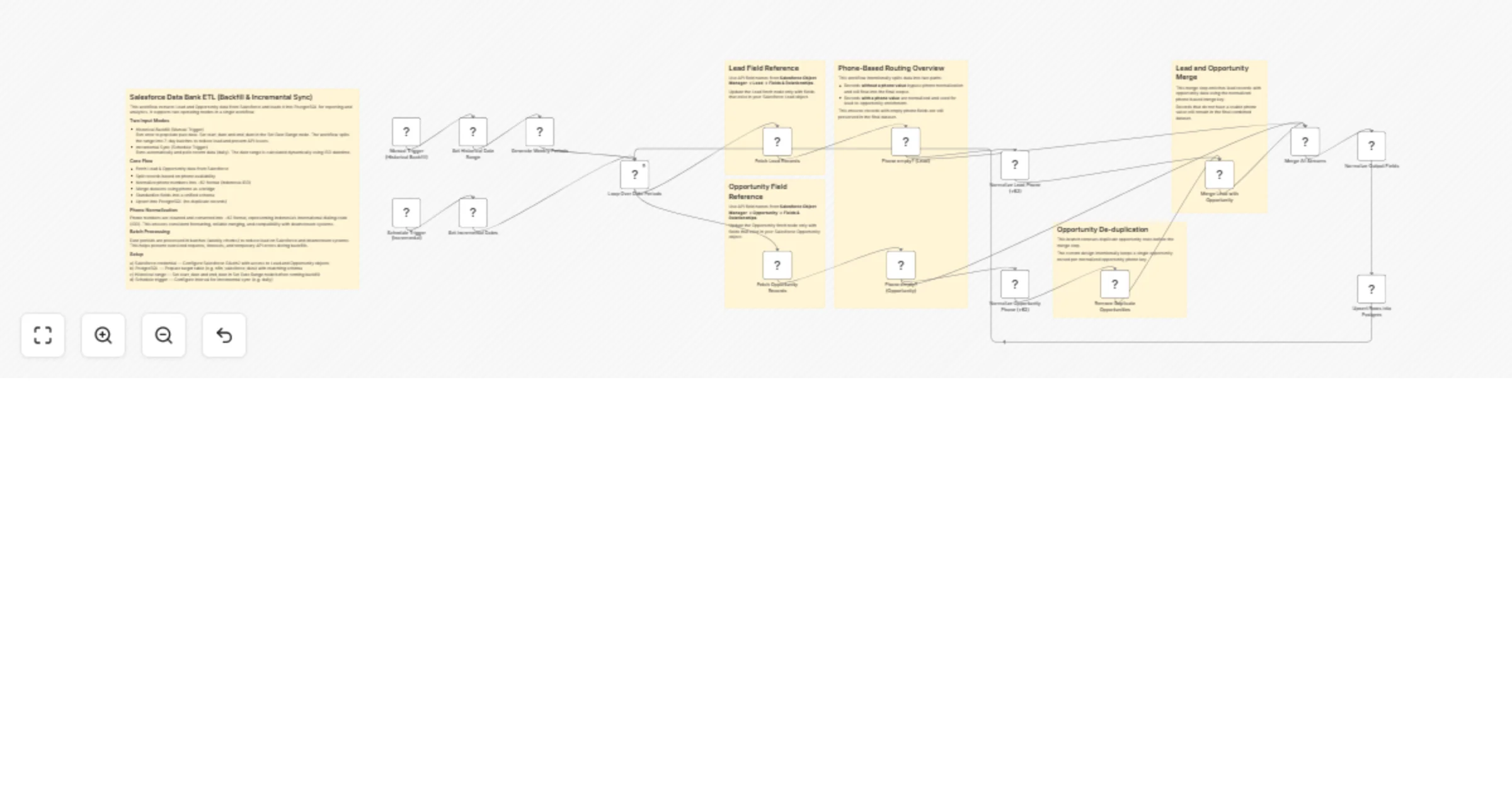

Sync Salesforce leads and opportunities to PostgreSQL with backfill and incremental ETL

Salesforce Leads & Opportunities to PostgreSQL (Backfill & Incremental Sync ETL): This workflow extracts Lead and Oppo...

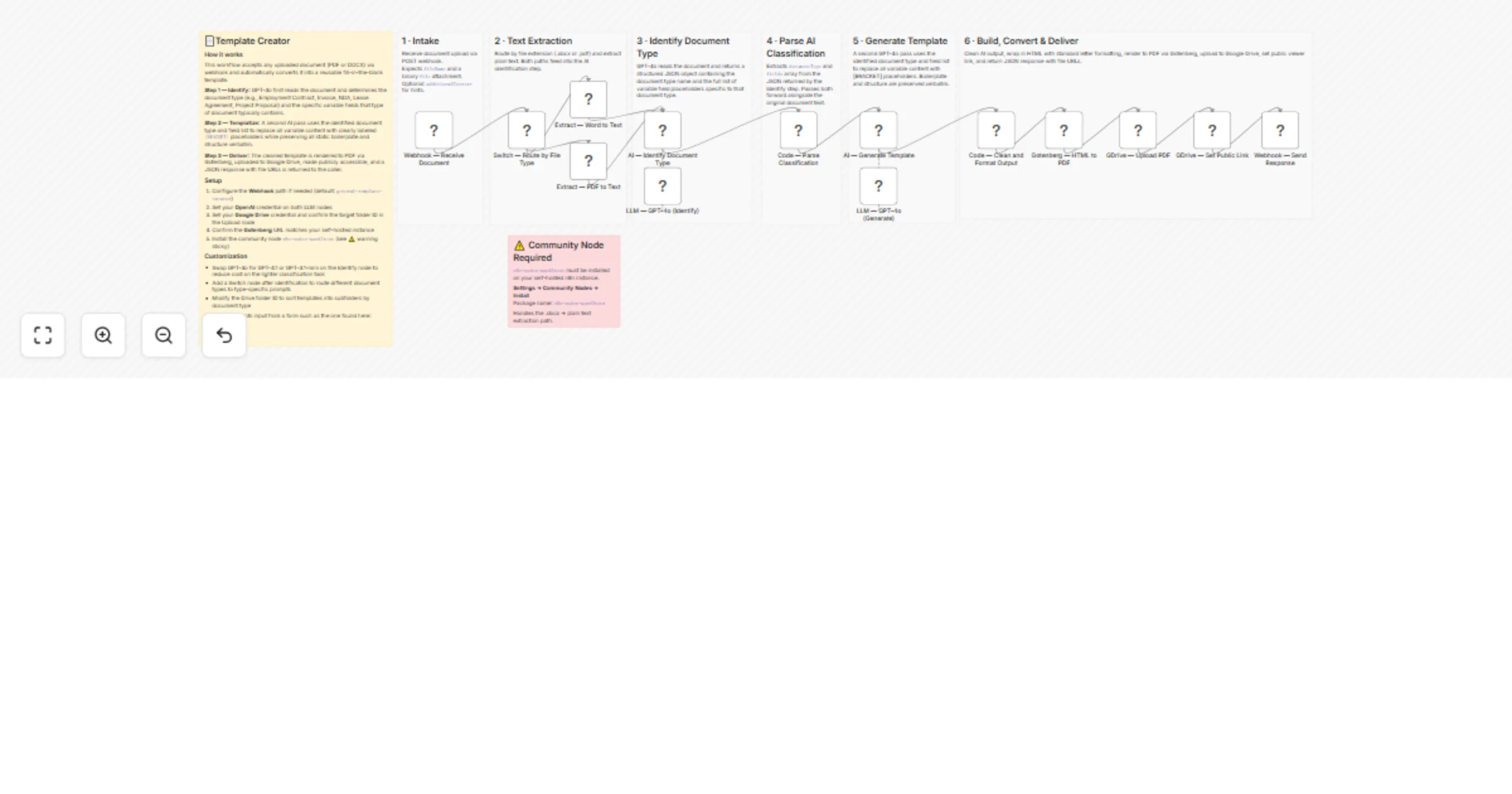

Create fillable document templates from PDF or DOCX with GPT-4o and Google Drive

📄 Template Creator. How it works: This workflow accepts any uploaded document (PDF or DOCX) via webhook and automatical...

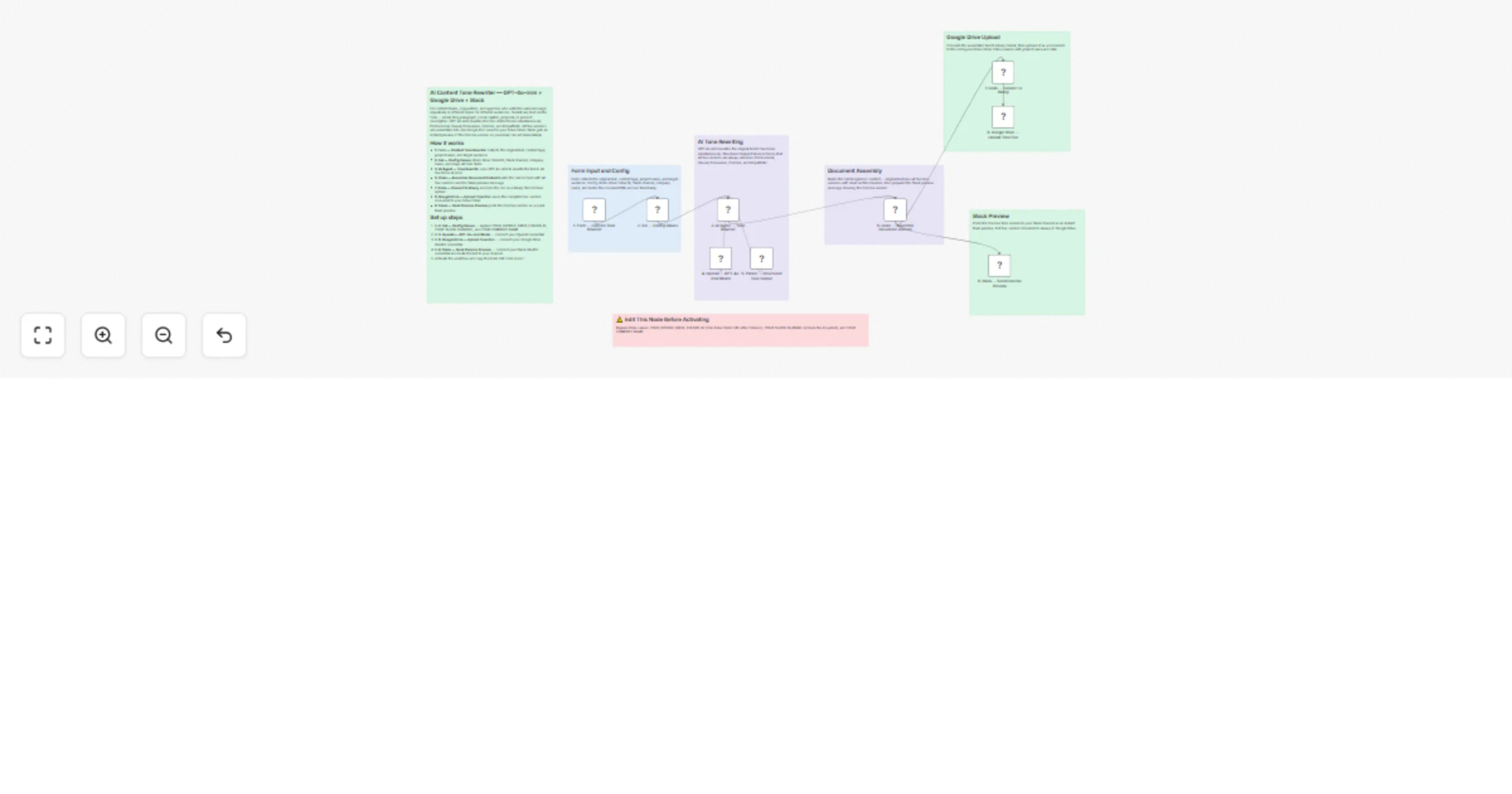

Rewrite content in 5 tones using GPT-4o-mini, Google Drive and Slack

Description: Paste any text (an email, social caption, blog paragraph, or proposal) into a simple form and submit. G...

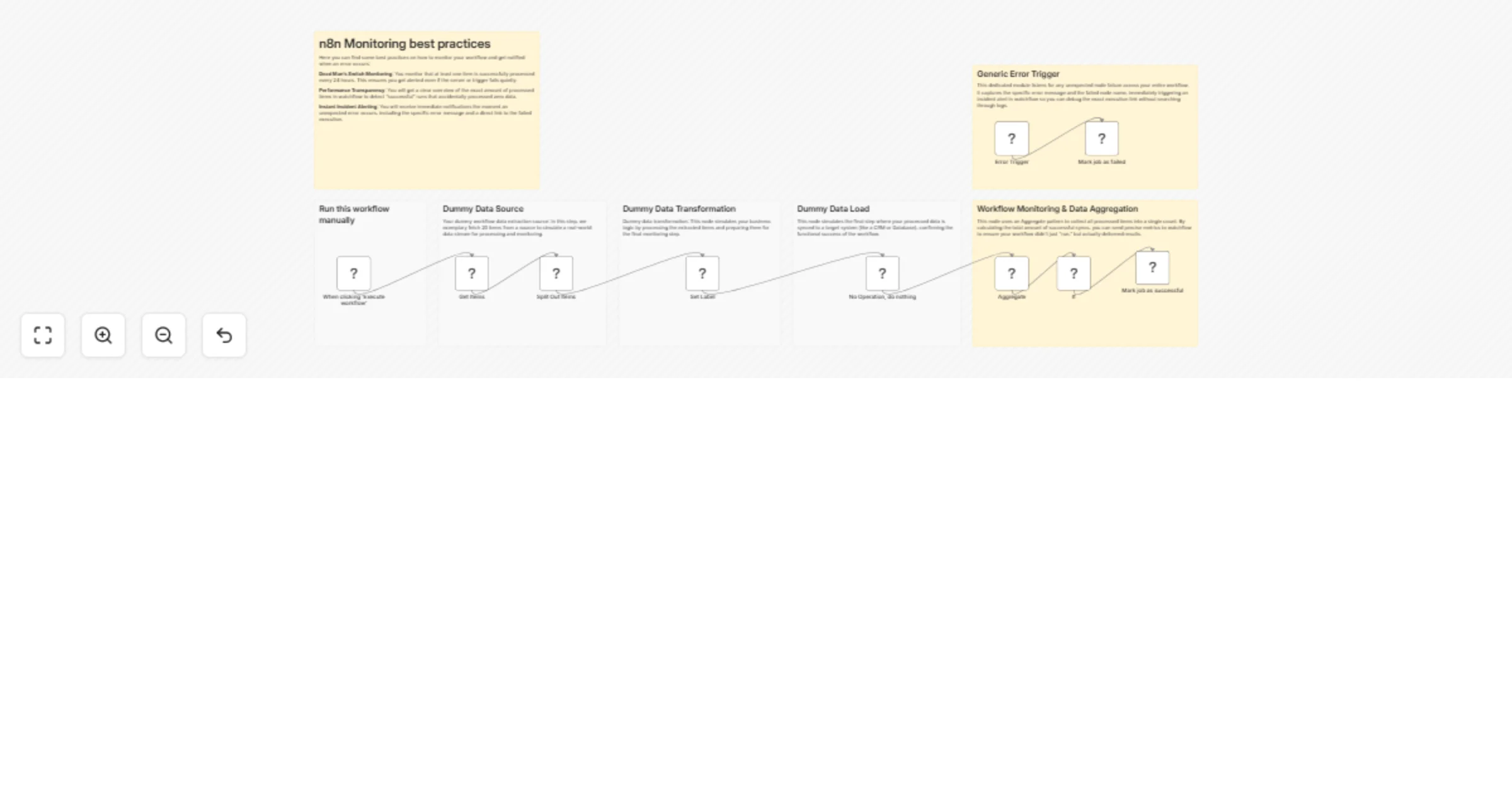

Monitor n8n workflows with Watchflow dead man’s switch and error alerts

🚀 Your n8n Workflows Monitoring Best Practices Template. Are you running critical processes in n8n and relying on hop...

Research LinkedIn prospects before sales calls with Bright Data and GPT-5.4

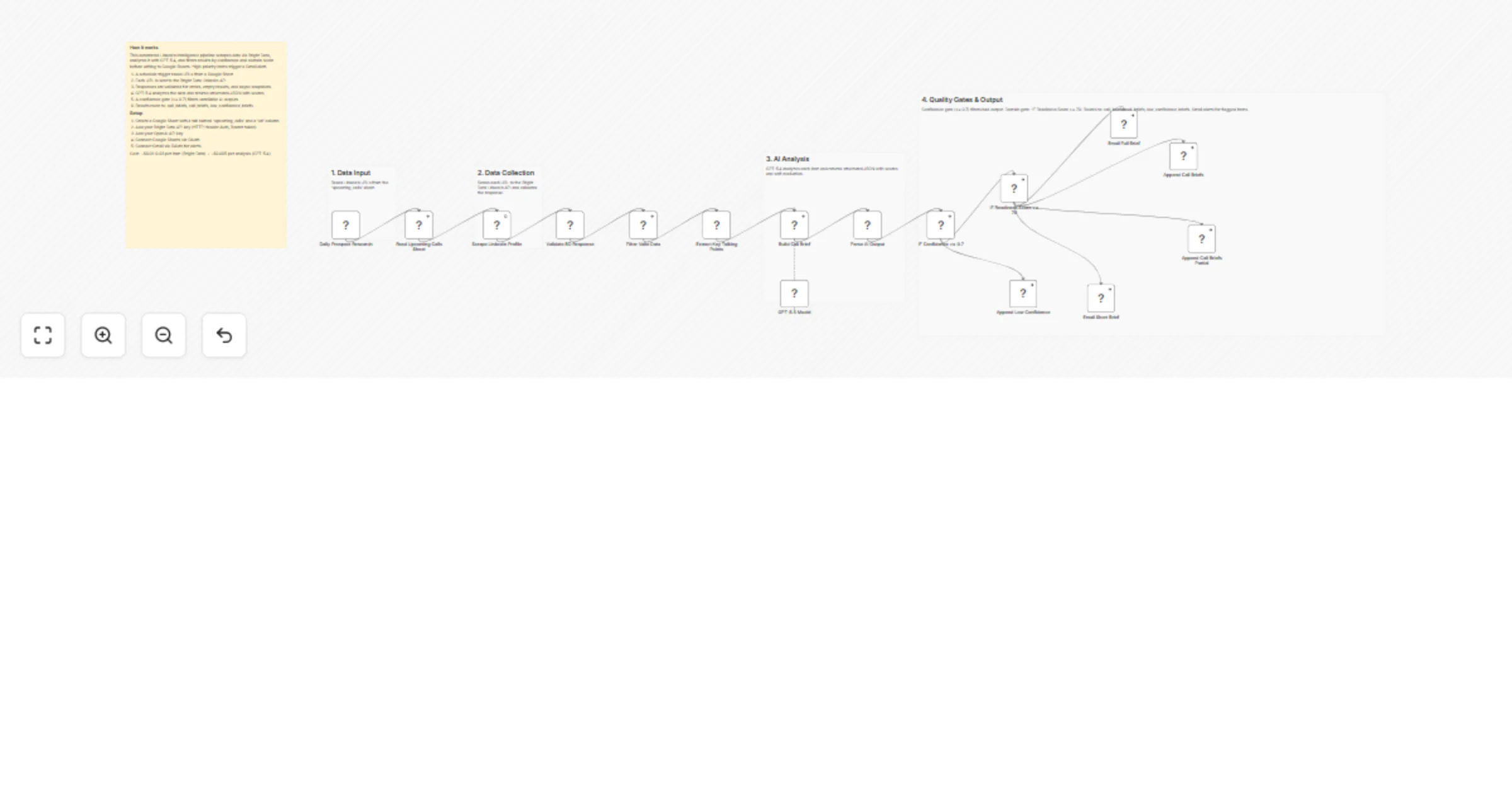

Build AI-powered pre-call intelligence briefs from LinkedIn profiles. Automatically scrape LinkedIn profiles for upcom...

Generate AI music and publish YouTube videos automatically with Blotato, OpenAI, ElevenLabs, and Shotstack

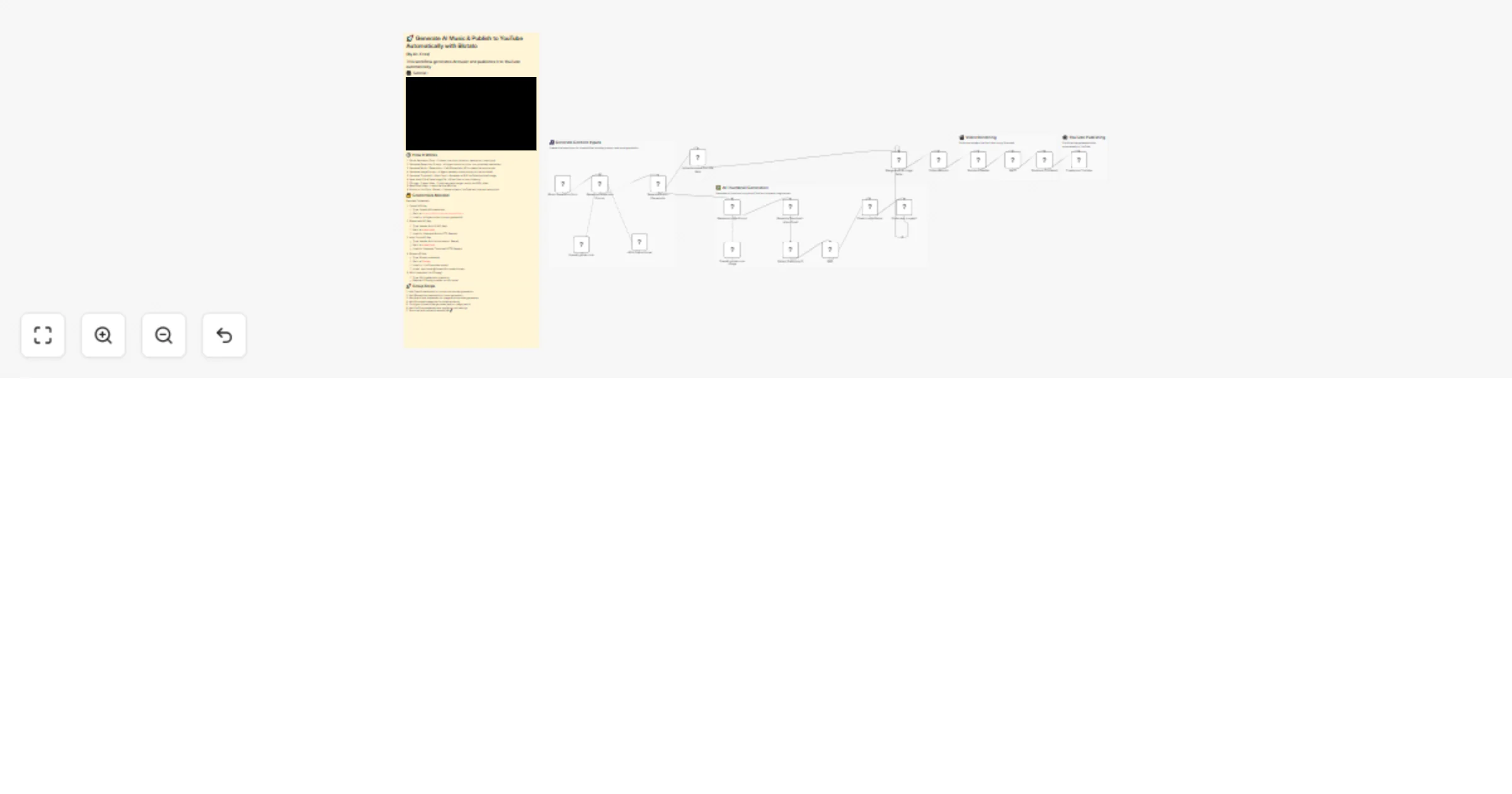

💥 Generate AI Music & Publish to YouTube Automatically with Blotato. This workflow automatically generates AI music,...

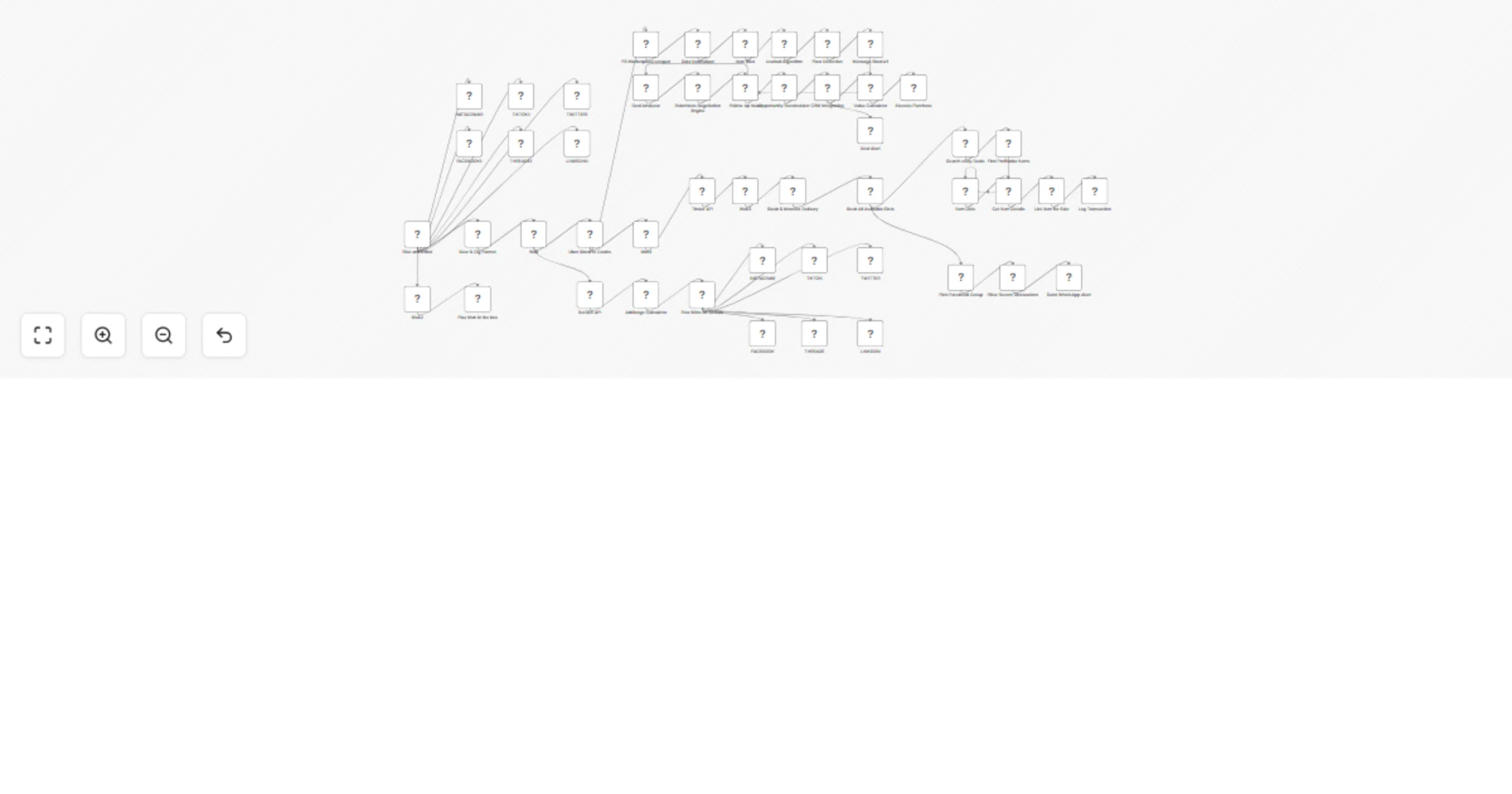

Automate social fan-out, marketplace outreach, and CRM alerts with Salesforce

⚠️ Heads up: this is satire. The "Hell Yeah!" workflow is a parody of "automate your whole life with AI agents" grind...

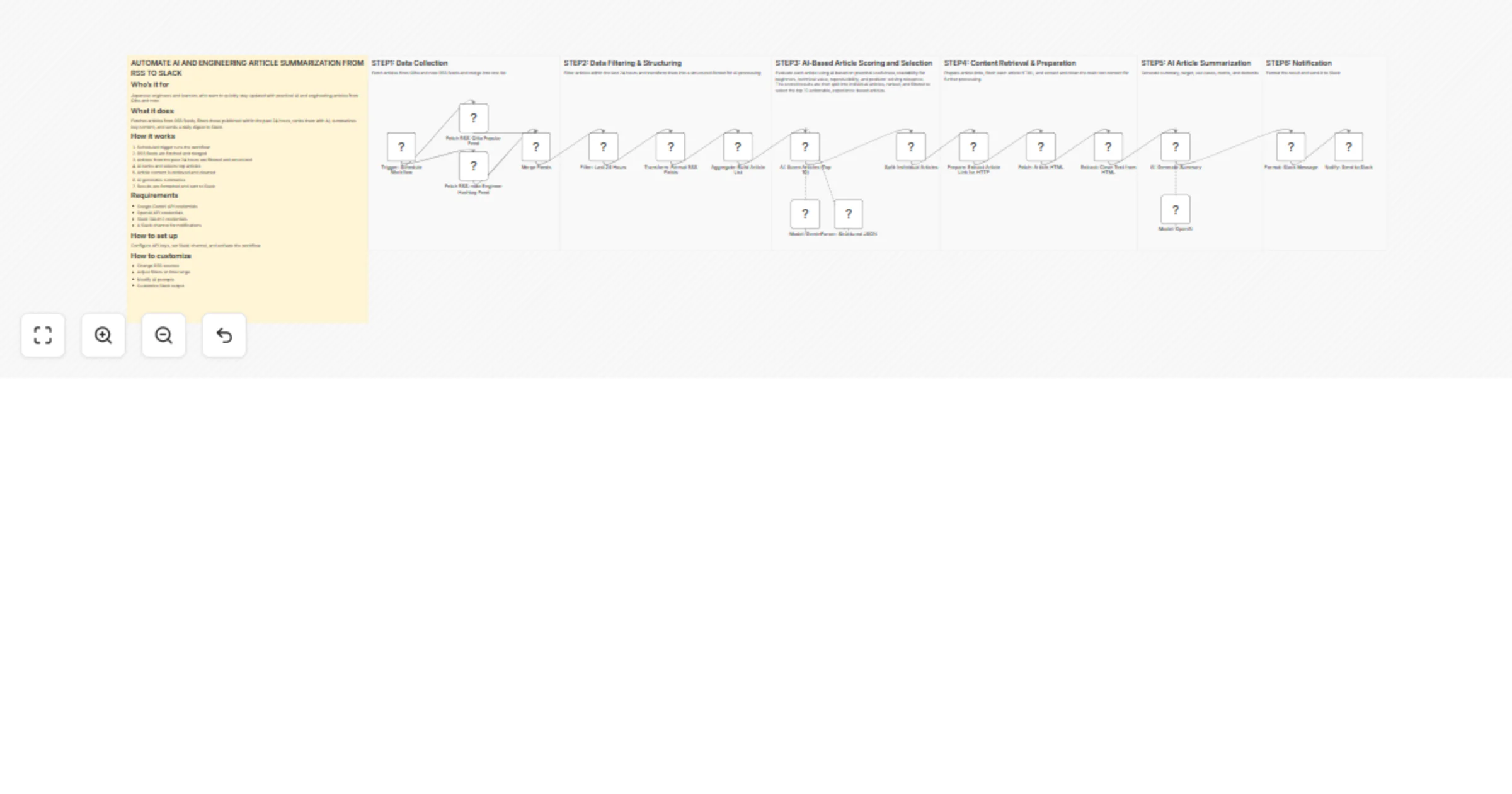

Summarize Japanese AI engineering articles from Qiita and note RSS to Slack

Who’s it for: This workflow is designed for Japanese-speaking individuals who want to efficiently stay up to date with...