Document Extraction Workflows

Create fillable document templates from PDF or DOCX with GPT-4o and Google Drive

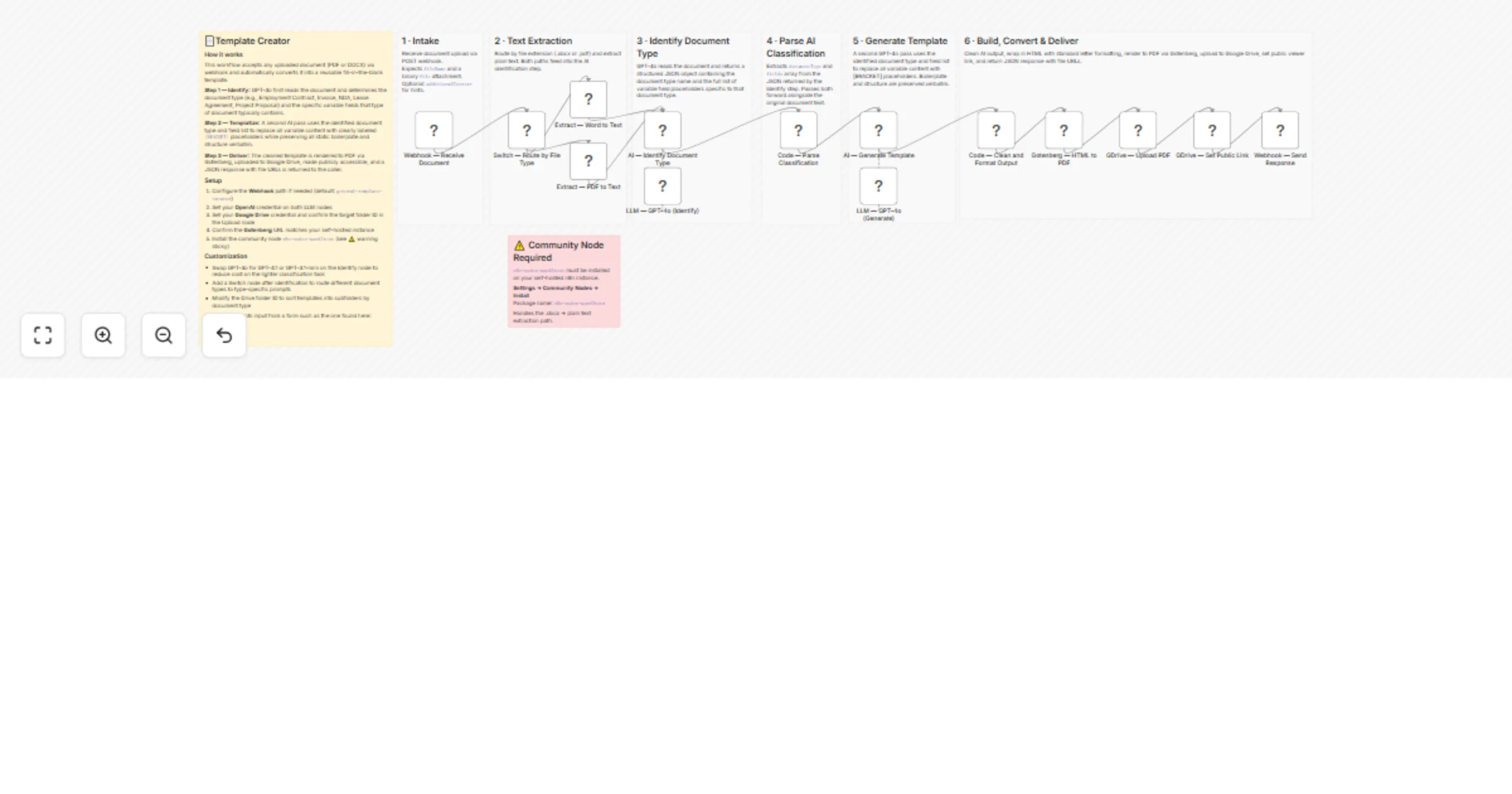

📄 Template Creator. How it works: This workflow accepts any uploaded document (PDF or DOCX) via webhook and automatical...

Generate PDF pricing proposals from Excel with Gotenberg and Outlook

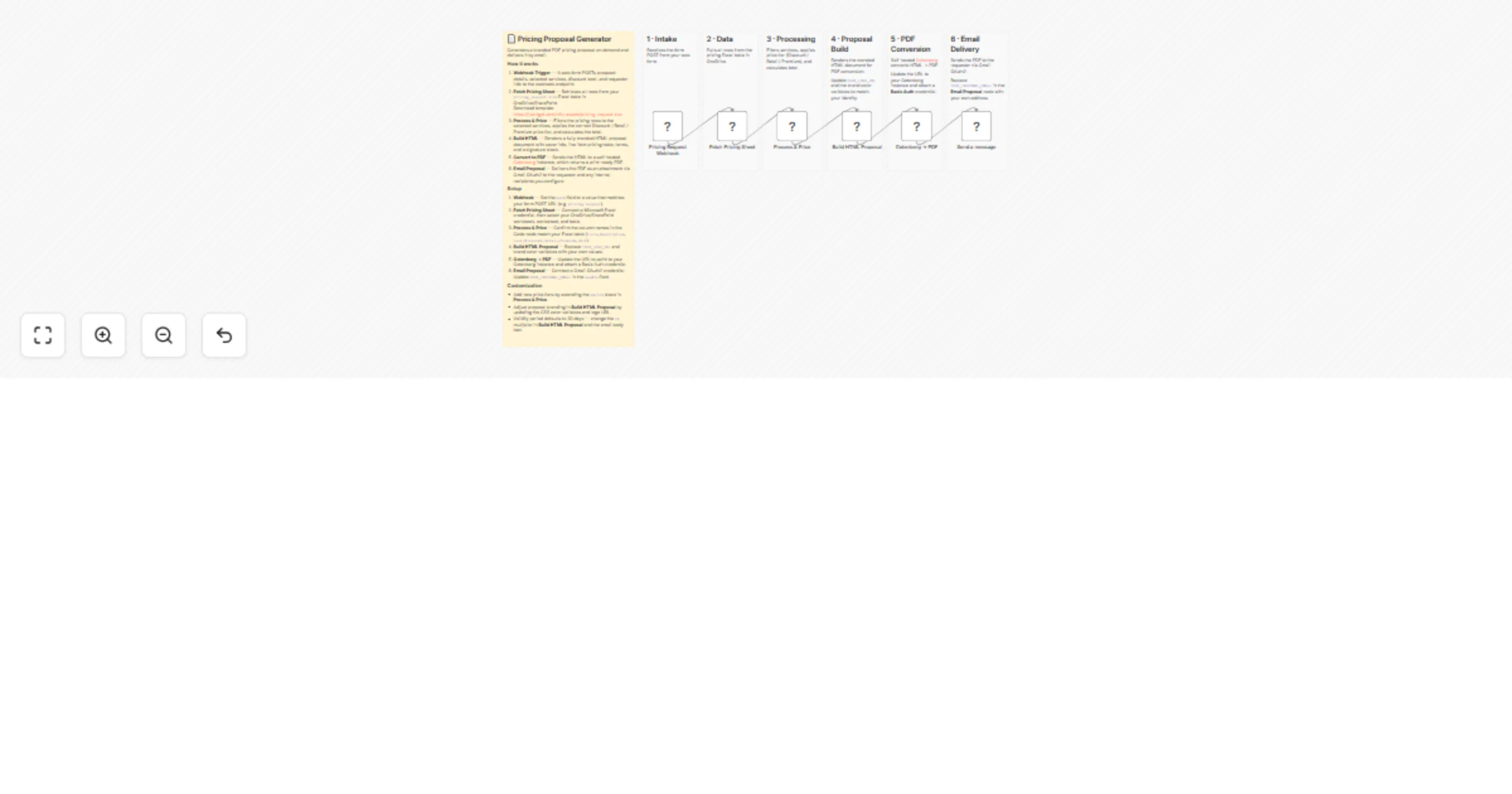

How it works: 1. Webhook Trigger: A web form POSTs prospect details, selected services, discount level, and requestor...

Classify and route email attachments with easybits, Gmail and Google Drive

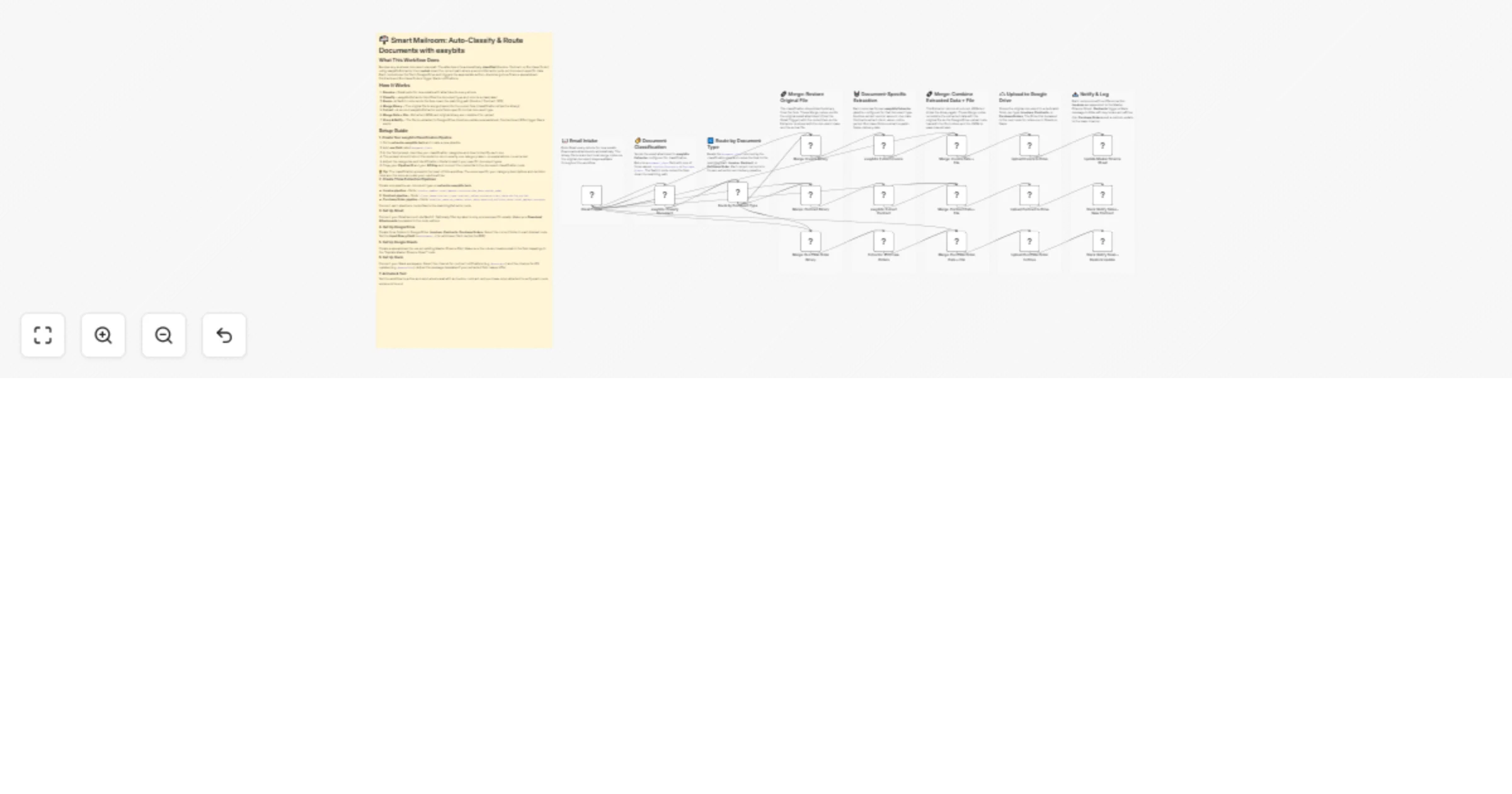

What This Workflow Does: Receive any business document via email. The attachment is automatically classified (Invoice,...

Generate a weekly business health report from Google Sheets with Claude

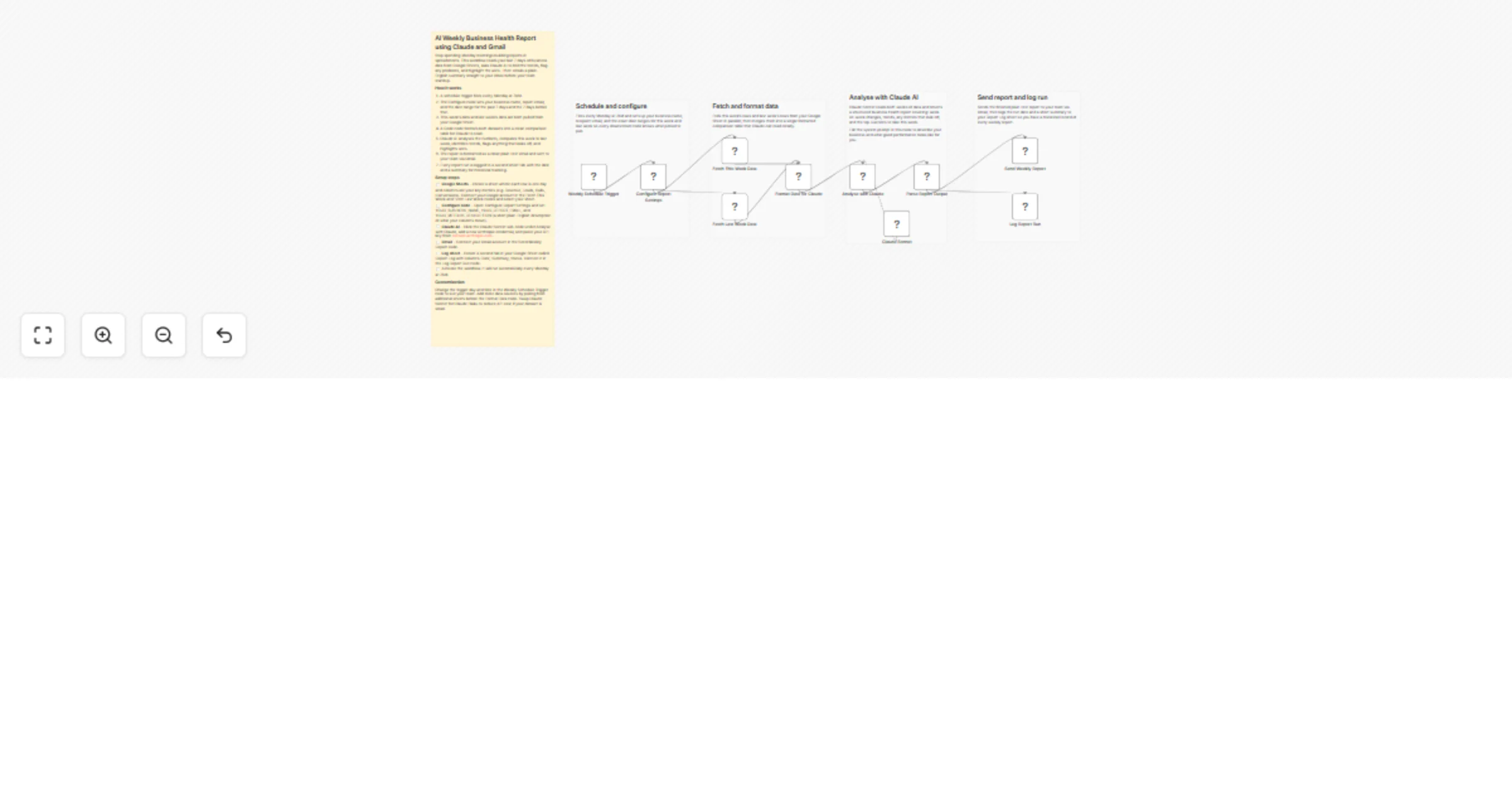

Overview: Stop spending Monday mornings manually pulling numbers and building reports. This workflow reads your last 7...

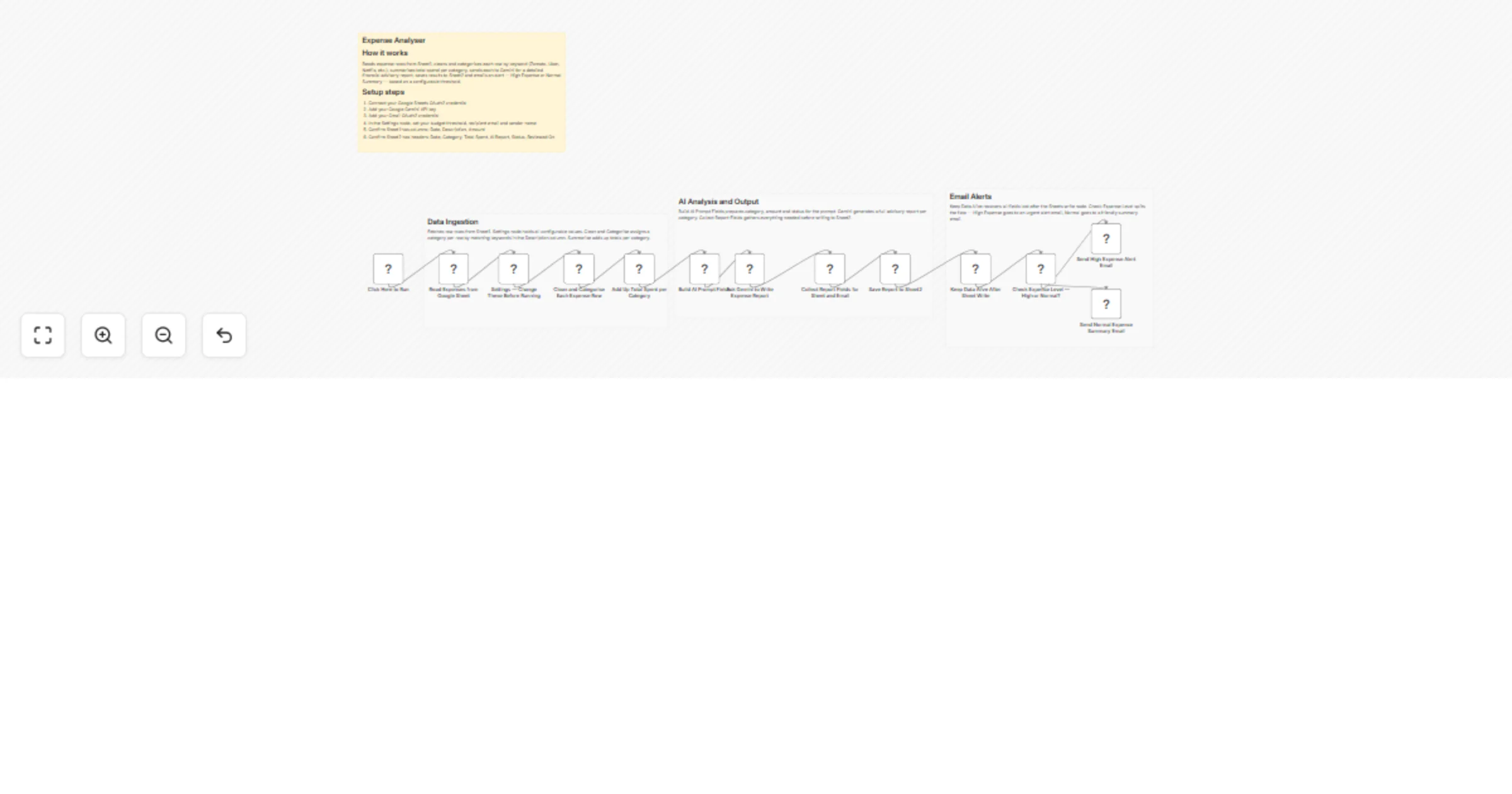

Track and analyze Google Sheets expenses with Gemini and Gmail alerts

Expense Leak Detector: Smart Expense Monitoring in Minutes. This n8n workflow reads your expenses from Google Sheets, c...

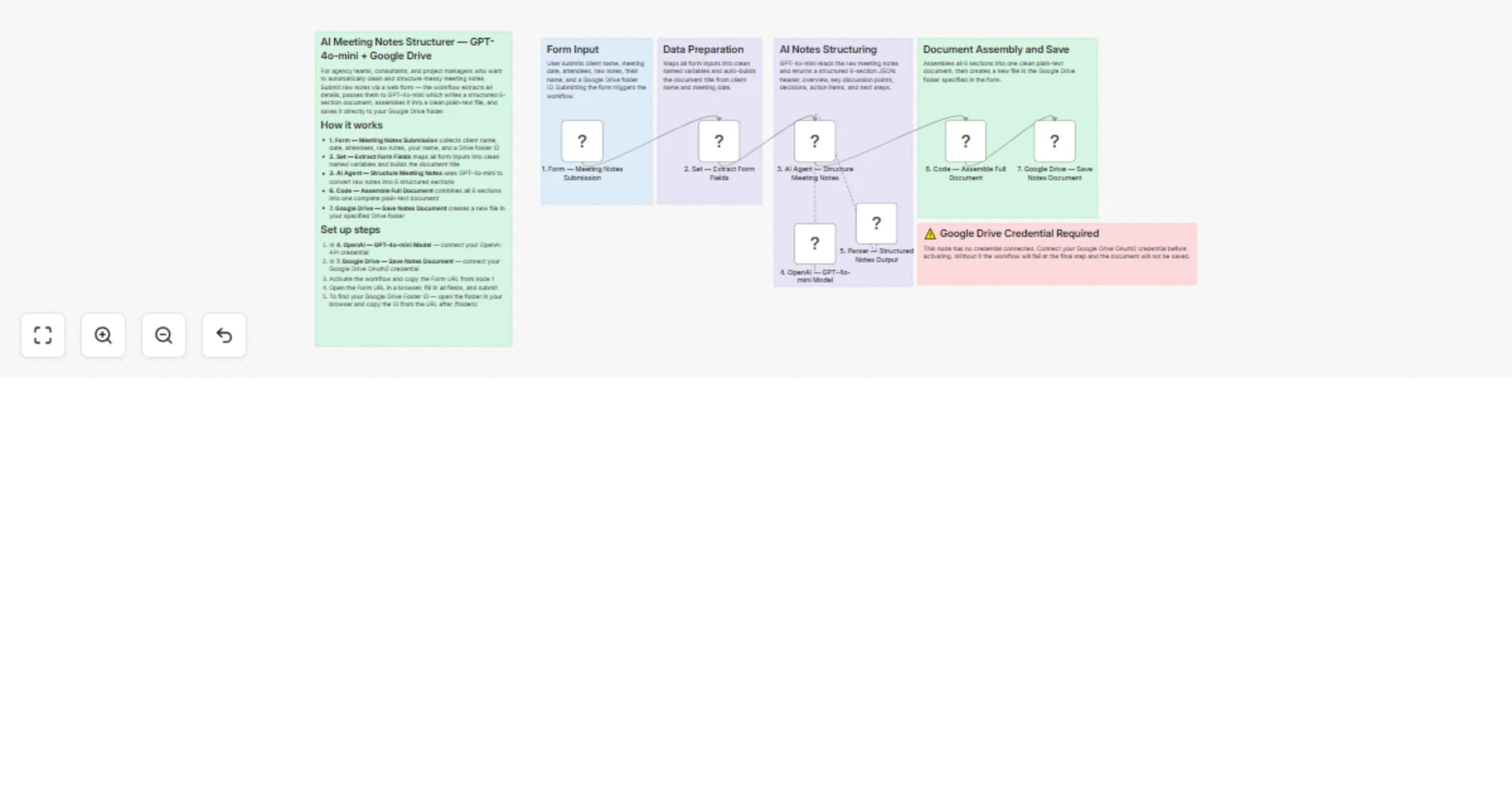

Structure AI meeting notes with GPT-4o-mini and save to Google Drive

Paste your raw meeting notes into a simple web form and submit. The workflow sends your notes to GPT-4o-mini, which c...

Analyze contract PDFs and score risk with Claude 3.5, Postgres, email and Slack alerts

Overview: This workflow automates end-to-end contract analysis using AI. It extracts clauses, evaluates risks, tracks...

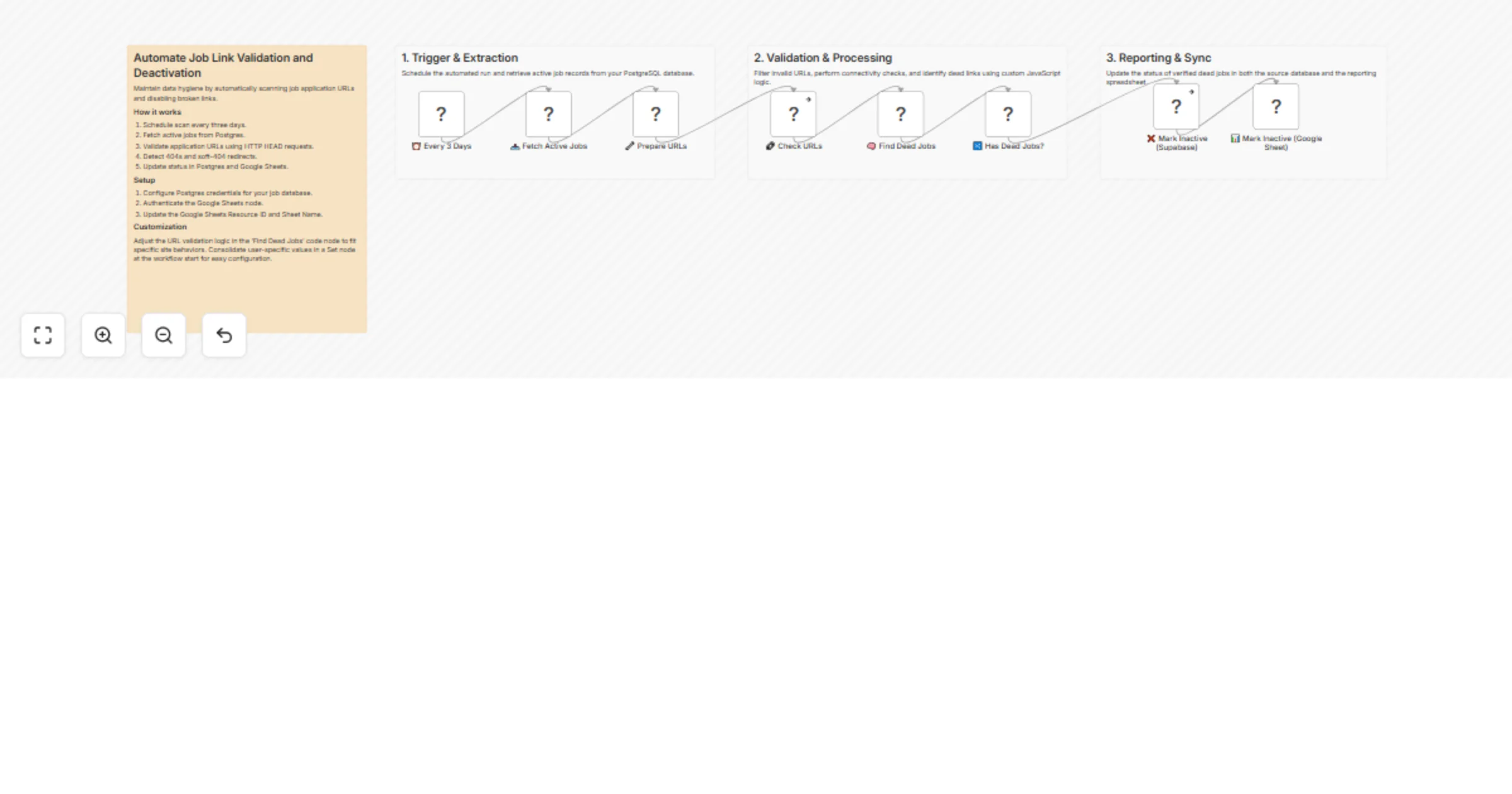

Check job apply URLs and deactivate dead links in Postgres and Google Sheets

Keep your job listings database clean without manual checks. Every three days, this workflow fetches all active jobs...

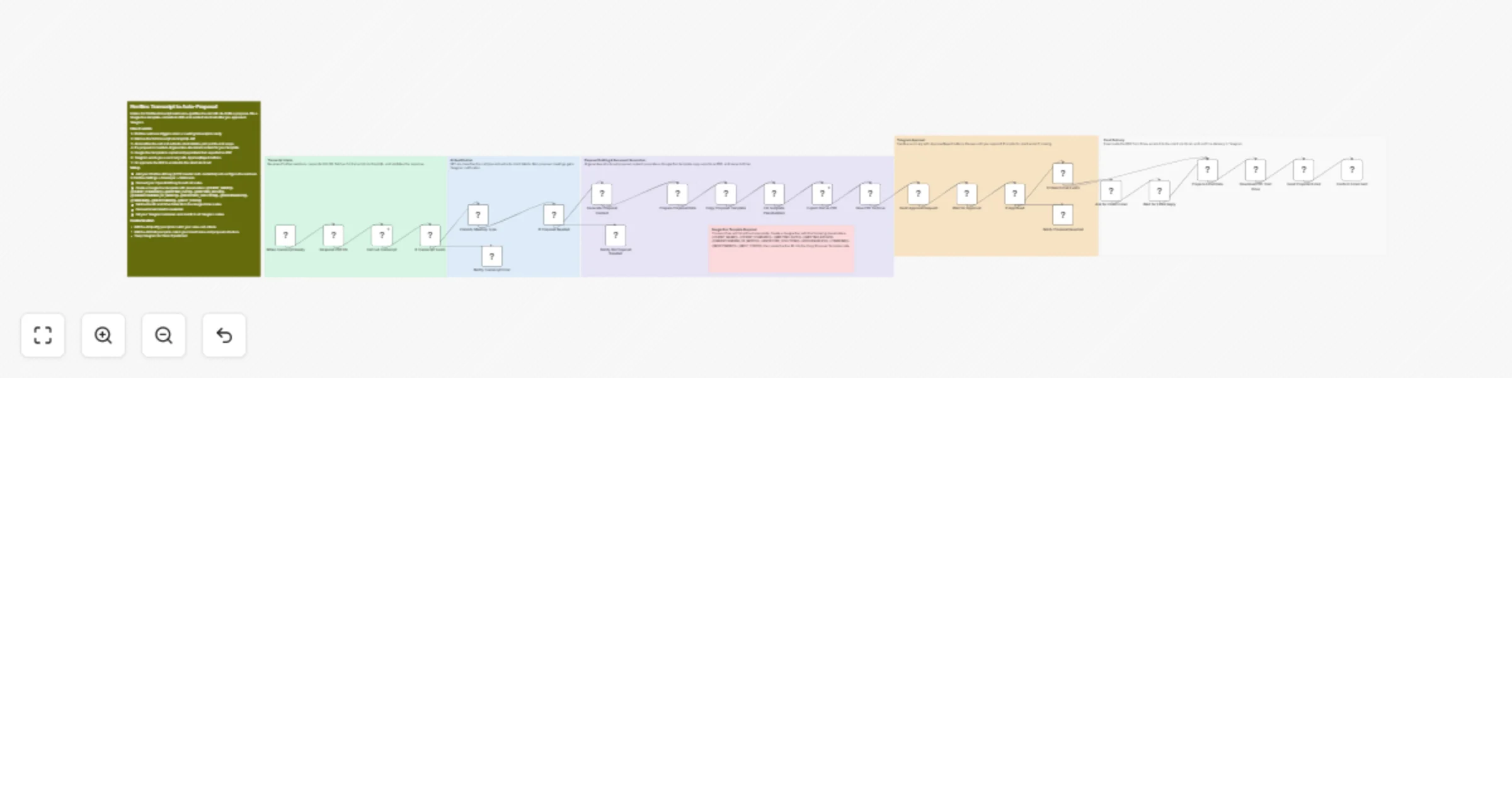

Create AI proposals from Fireflies transcripts with GPT-4o, Google Docs, Gmail and Telegram approval

Fireflies AI Meeting Proposal Automation: Listens for completed Fireflies transcripts, qualifies whether a proposal is...

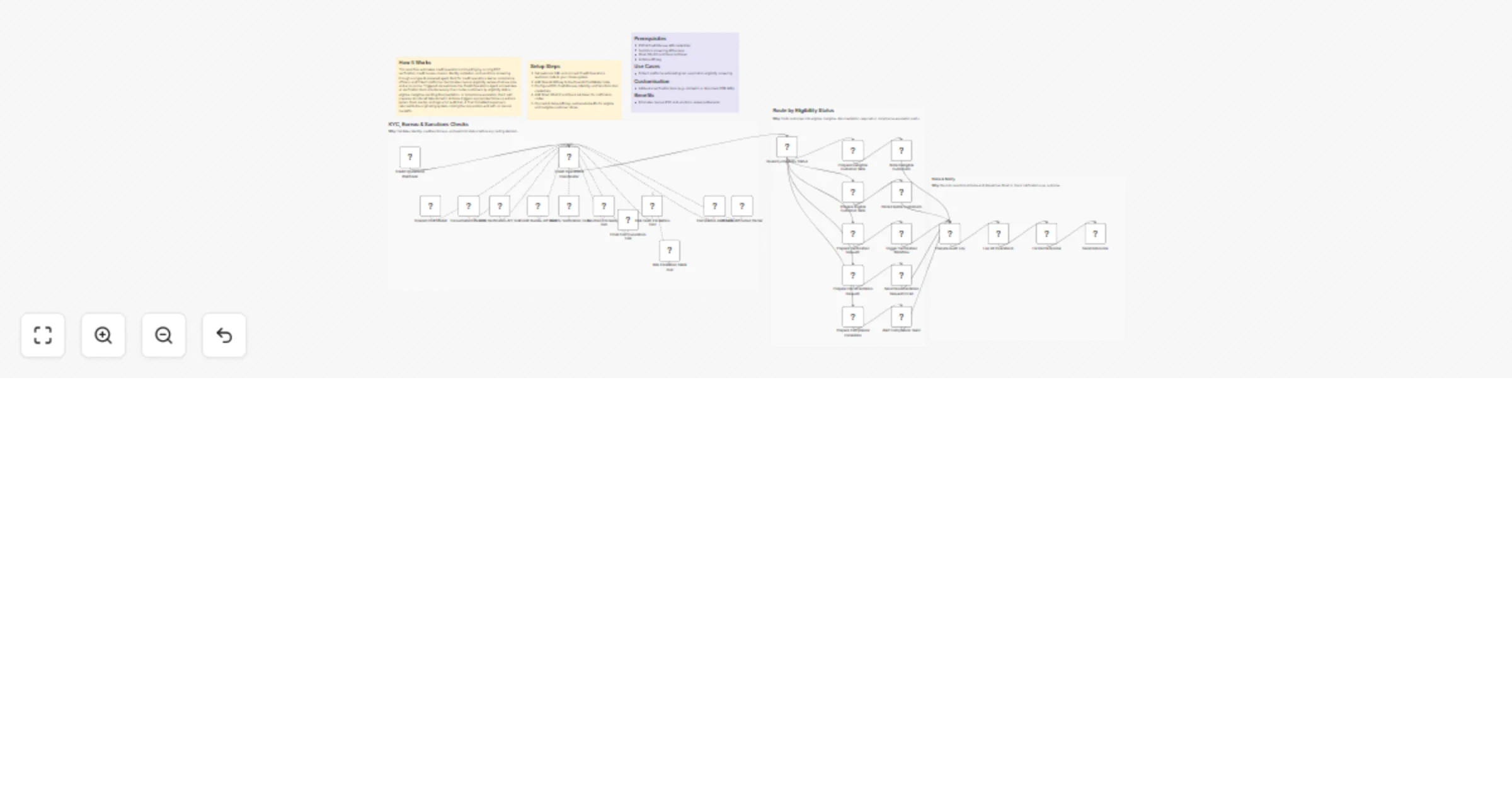

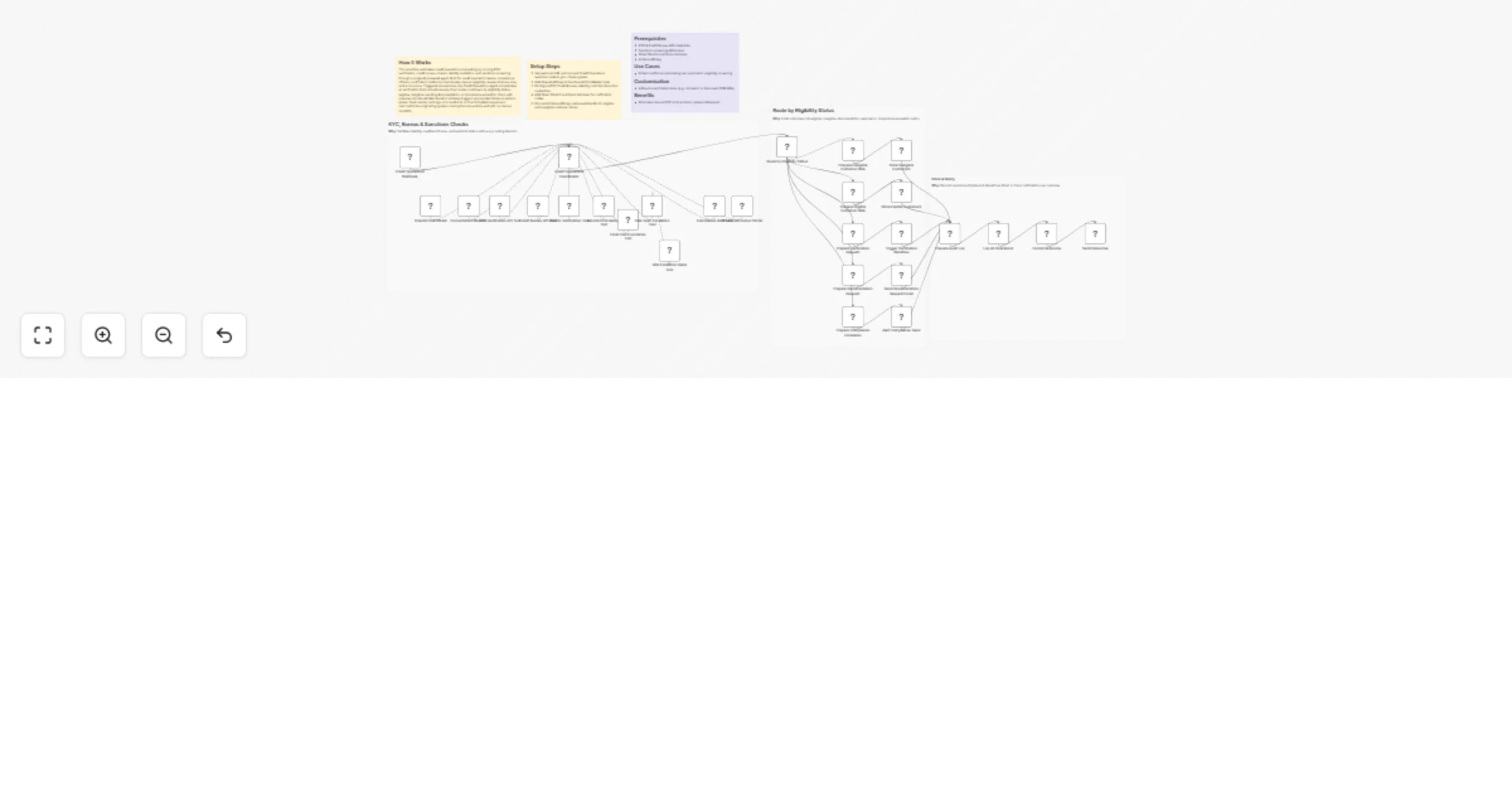

Orchestrate credit onboarding checks with GPT-4o, KYC APIs, Gmail, Slack and Airtable

How It Works: This workflow automates credit operations onboarding by running KYC verification, credit bureau checks,...

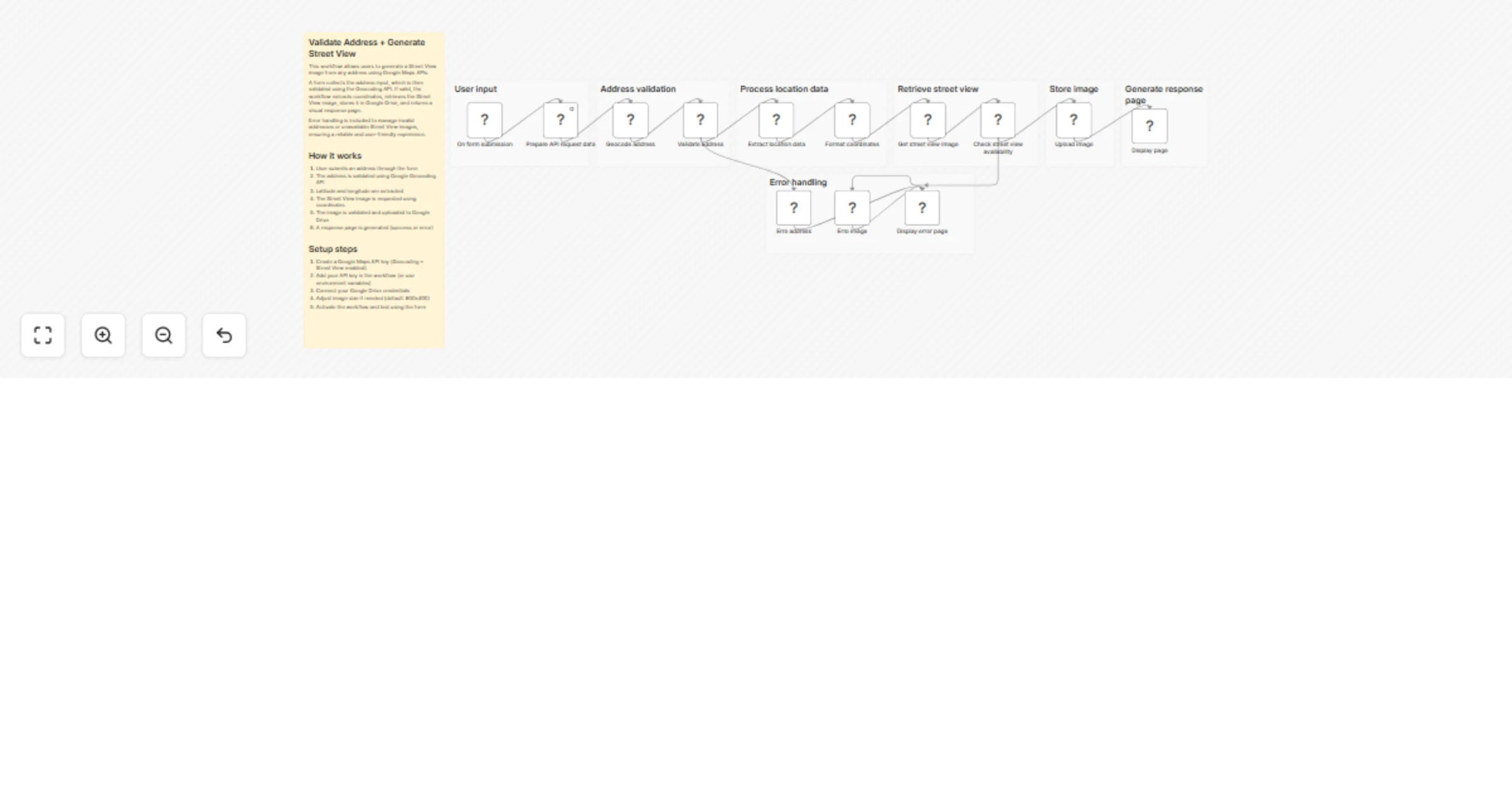

Validate addresses and generate Street View images with Google Maps and Drive

This workflow allows users to validate an address and generate a Street View image using Google Maps APIs. It starts...

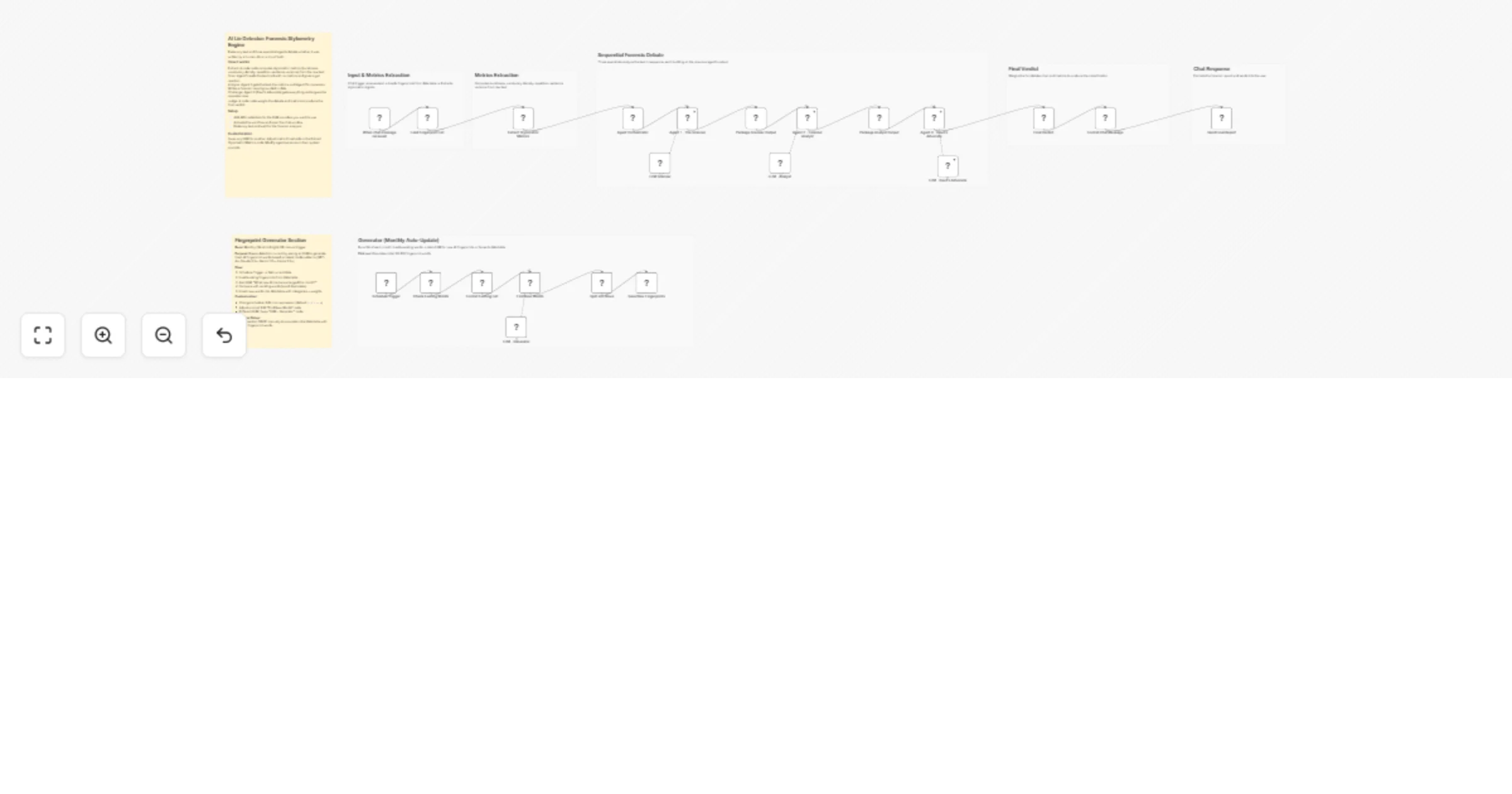

Detect human vs AI text using stylometric metrics and multi-agent LLM debate

Stop guessing if text came from ChatGPT. Let three AI agents argue about it using forensic data. Paste any text and g...

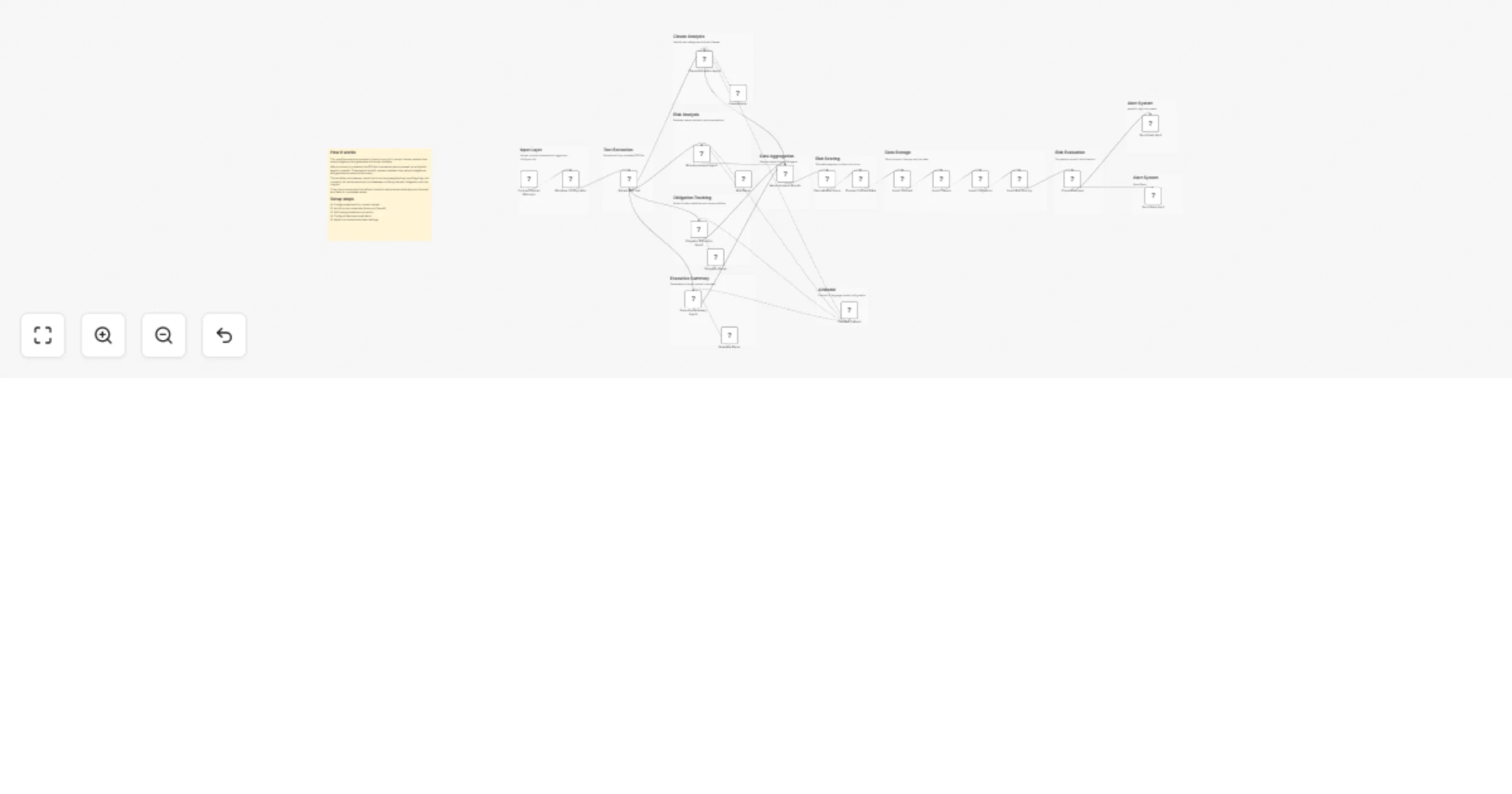

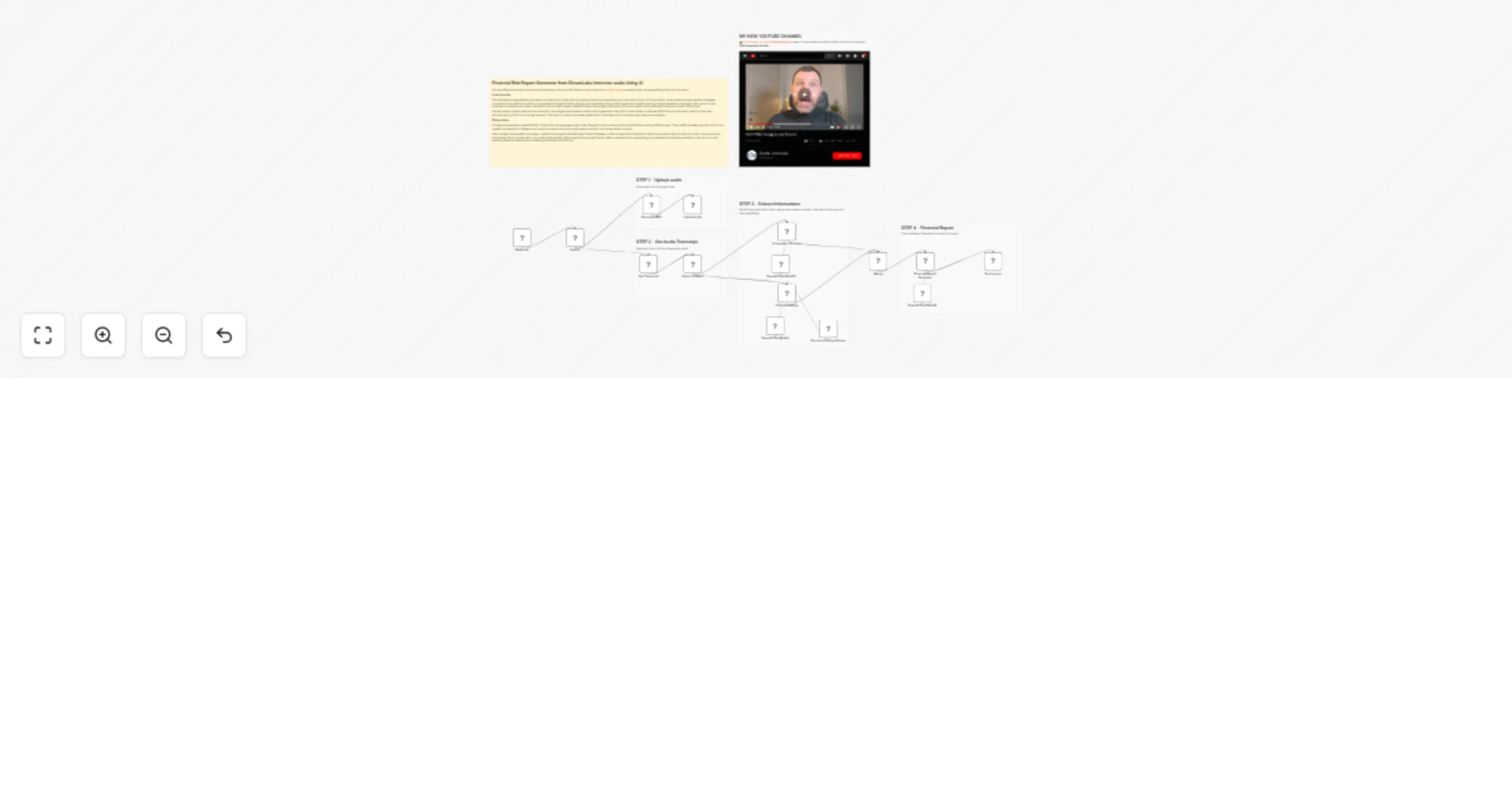

Generate Financial Risk Reports 📈 from ElevenLabs interviews 🎙️ using OpenAI

This workflow automates the process of receiving a post-call audio file and transcription from ElevenLabs, processing...

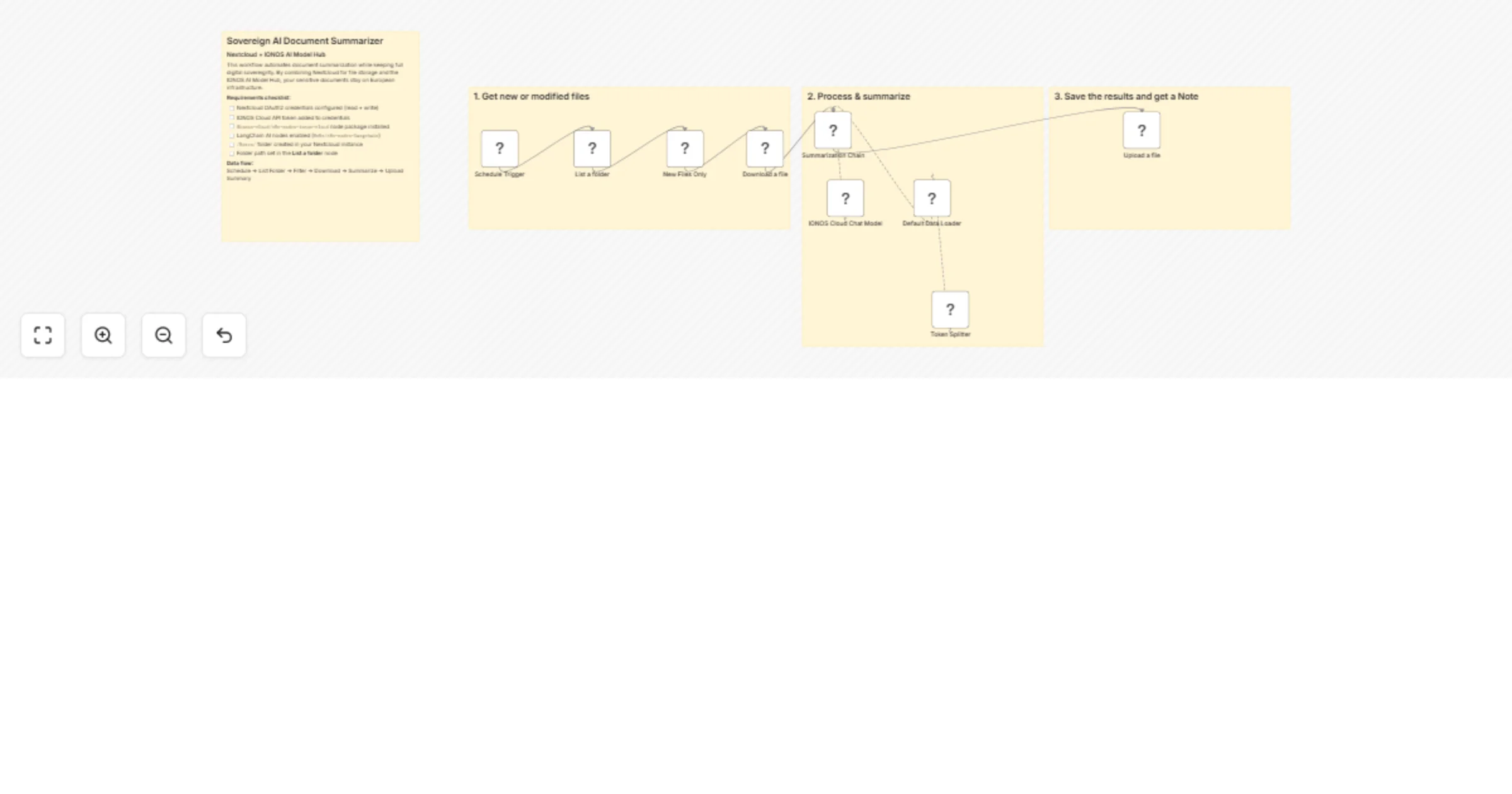

Summarize Nextcloud documents with IONOS AI Model Hub for sovereign AI

This n8n template shows you how to automate document summarization while keeping full digital sovereignty. By combini...

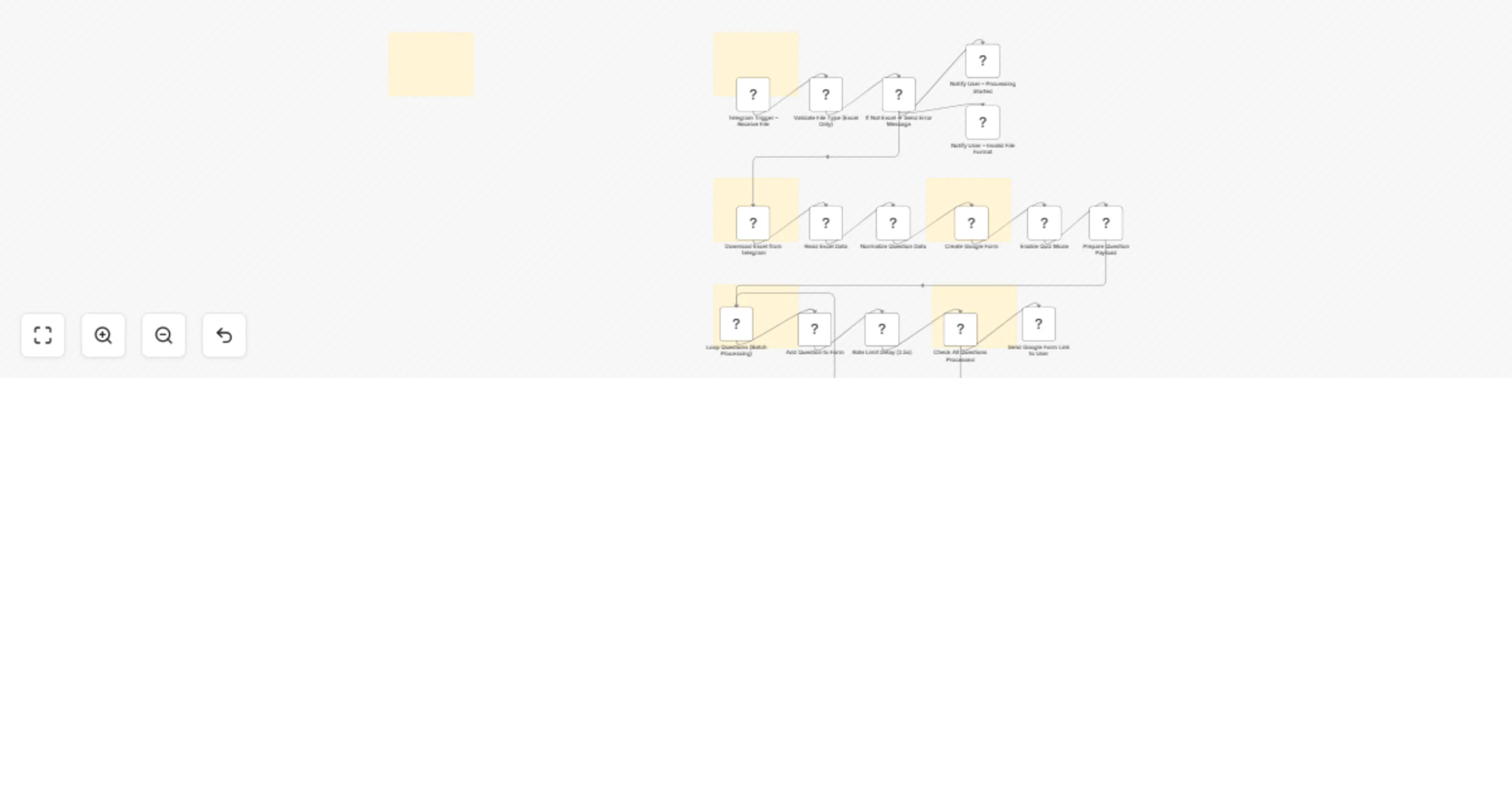

Generate Google Forms quizzes from Excel files sent via Telegram

📊 Generate Google Forms Quiz from Excel via Telegram (n8n Workflow): Turn your Excel question banks into fully functi...

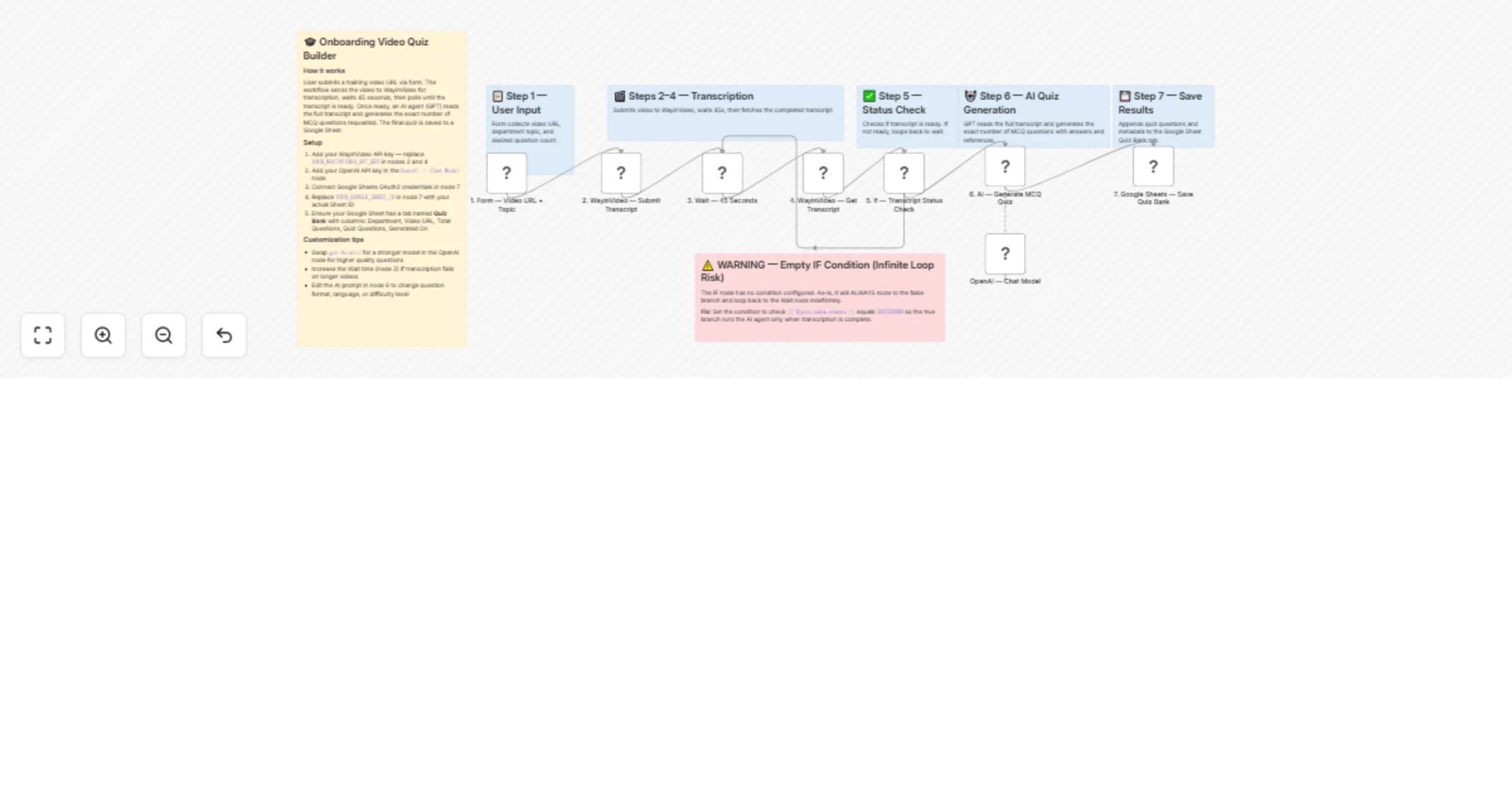

Create onboarding video quizzes using WayinVideo, GPT-4o-mini and Google Sheets

Description: Paste any onboarding video URL into a simple form and the workflow handles everything automatically. It t...

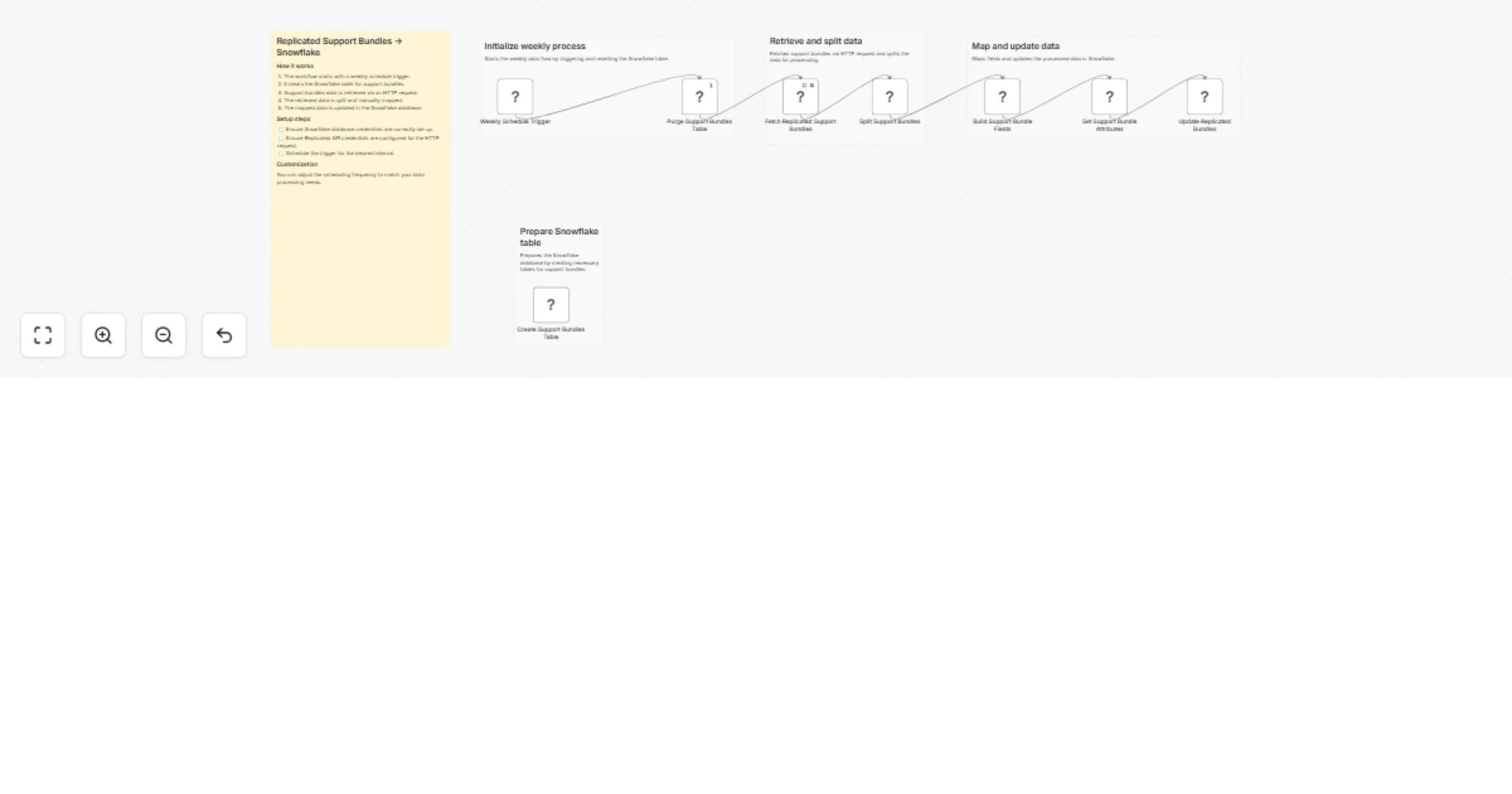

Sync Replicated support bundles into Snowflake on a schedule

Replicated Support Bundles → Snowflake: Syncs all Replicated support bundles from your vendor account into a Snowflake...

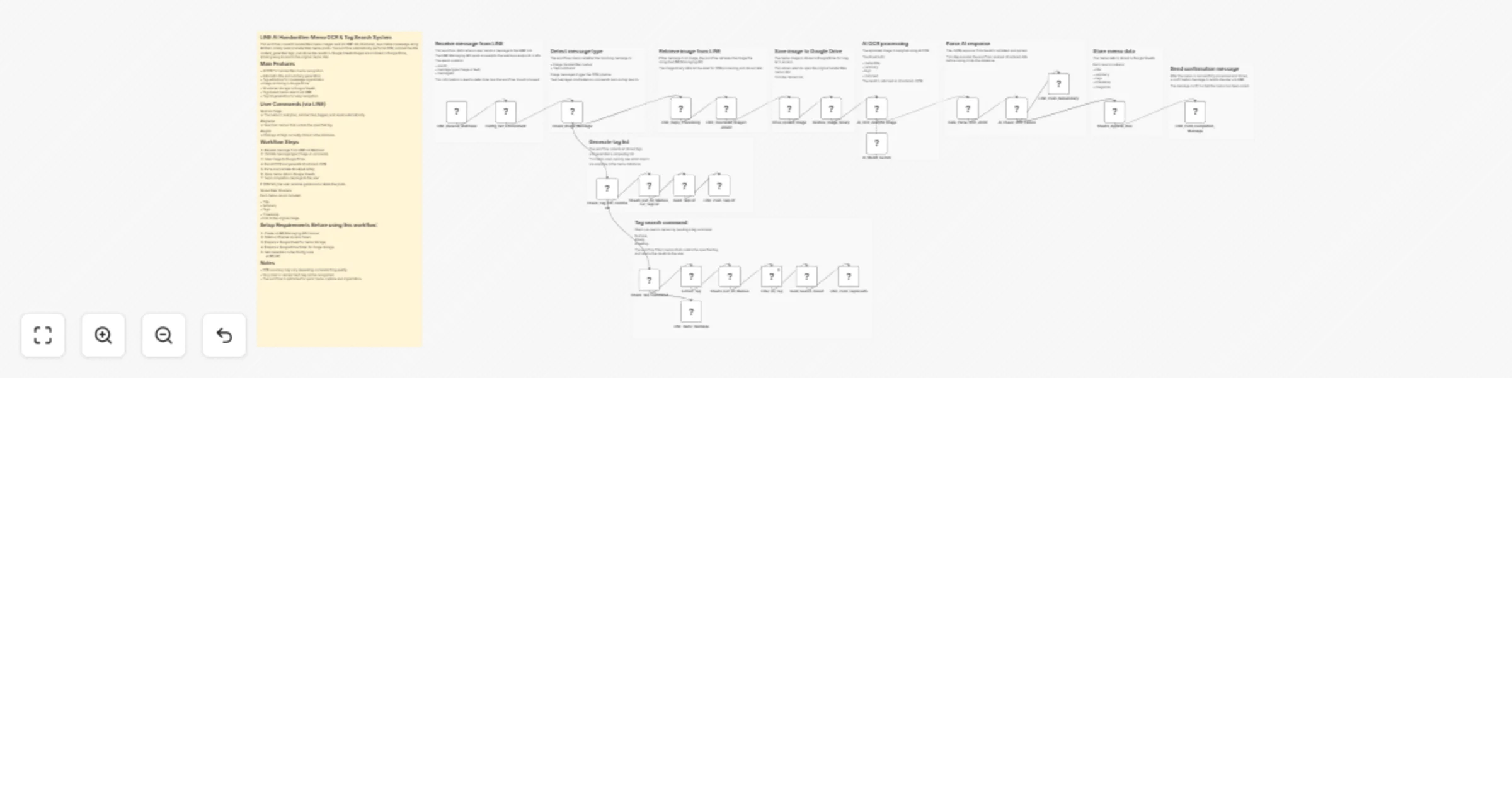

Convert LINE handwritten memo images to tagged, searchable notes with Gemini, Google Drive and Google Sheets

LINE AI Handwritten Memo OCR & Tag Search System: This workflow converts handwritten memo images sent via LINE into st...

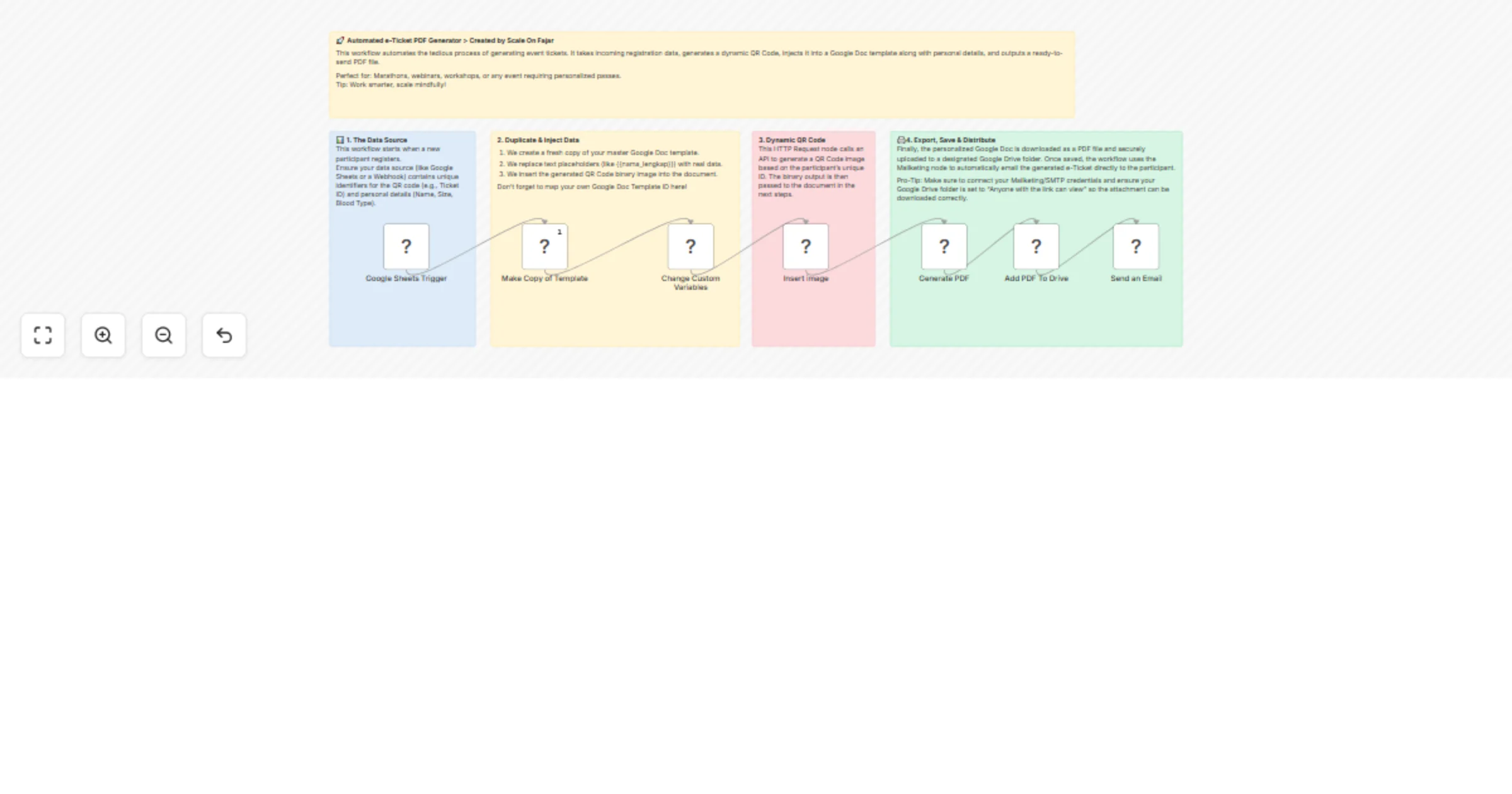

Generate and email event e-tickets with QR codes using Google Workspace

End-to-End Event E-Ticket Generator & Email Dispatcher: This workflow provides a complete, hands-off solution for mana...

Record Odoo accounting entries from Telegram using ChatGPT (GPT-4o-mini)

What Problem Does It Solve? Business owners, managers, and accountants waste valuable time manually entering daily ex...

Manage Strapi CMS v5 content types via webhook using HTTP requests

n8n workflow template: Strapi CMS v5 content API. An importable n8n workflow that creates, updates, and lists entrie...

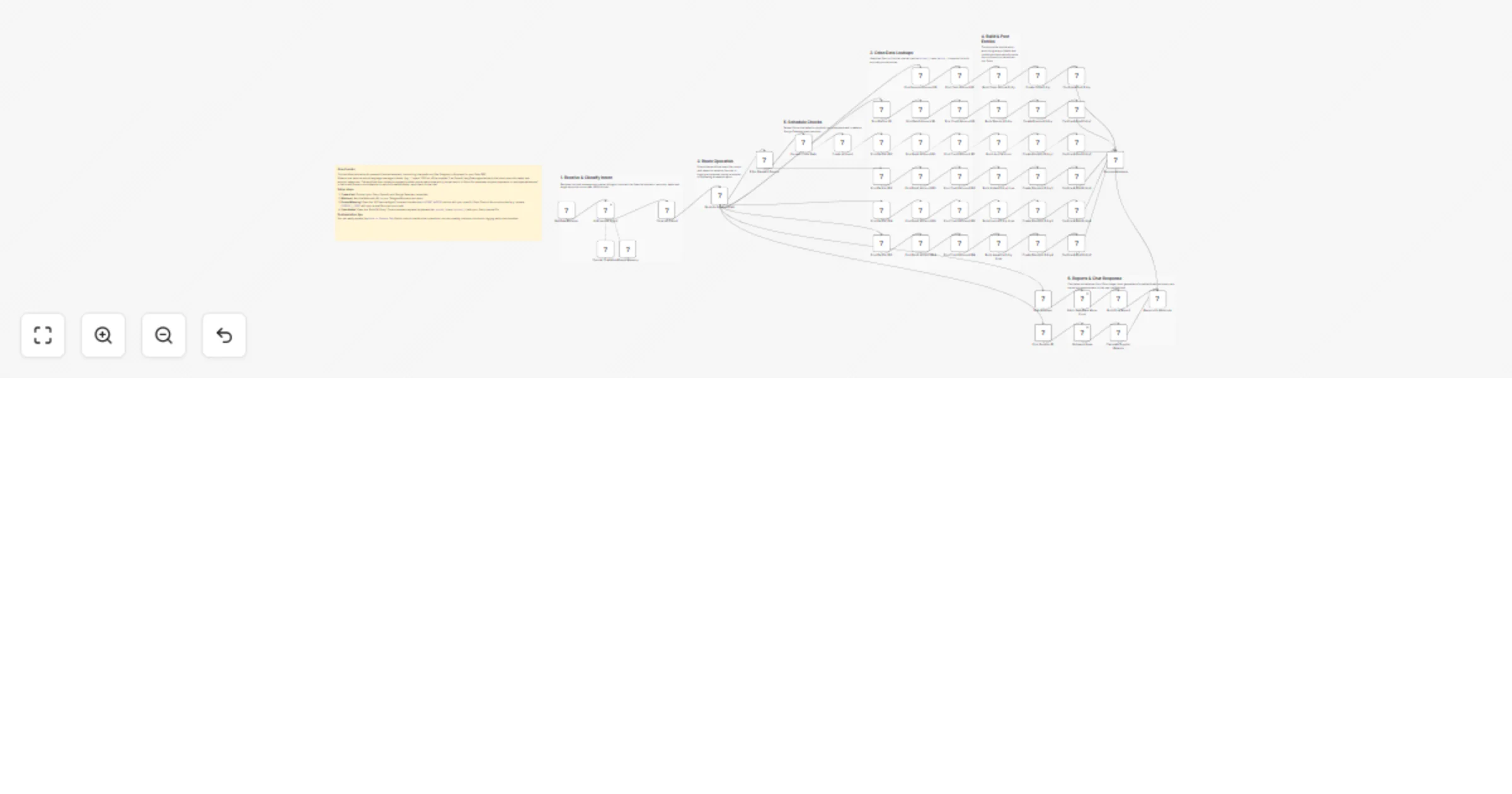

Orchestrate credit onboarding checks with GPT-4o, Airtable, Gmail and Slack

How It Works: This workflow automates credit operations onboarding by running KYC verification, credit bureau checks,...

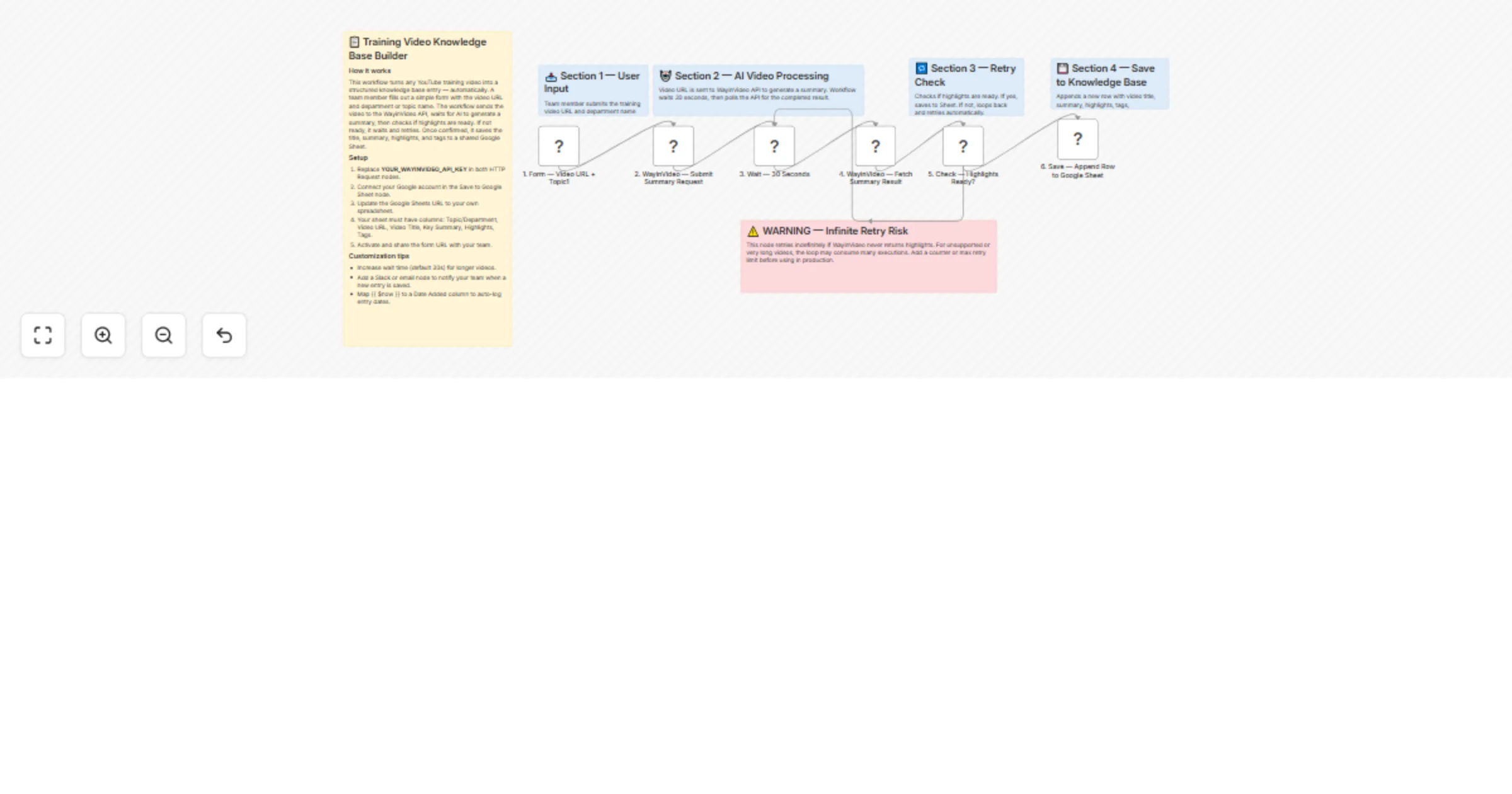

Build an employee training video knowledge base using the WayinVideo summaries API

Description: Paste any training video URL in the form. This n8n workflow automatically extracts AI-generated key summ...

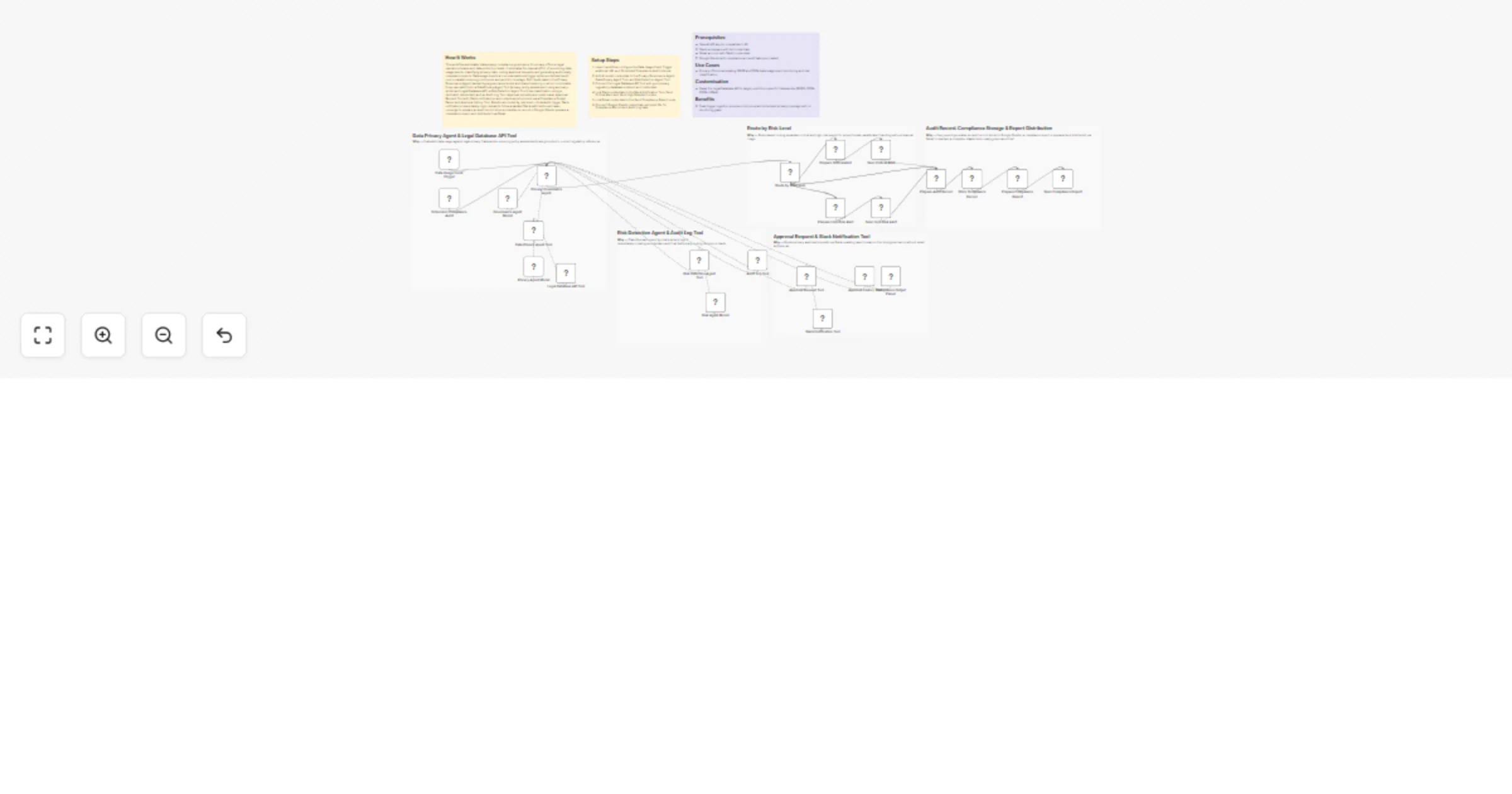

Automate privacy risk detection, approvals, and audit reports with GPT-4o, Slack, Gmail, and Google Sheets

How It Works: This workflow automates data privacy compliance governance for privacy officers, legal operations teams,...