Robert Breen

Workflows by Robert Breen

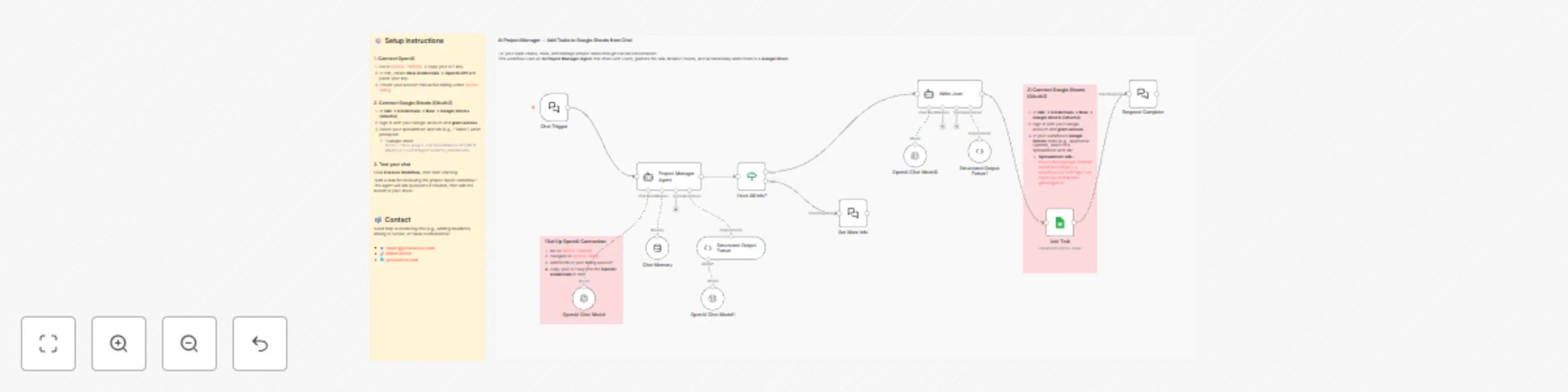

Add Project Tasks to Google Sheets with GPT-4.1-mini Chat Assistant

Let your team create, track, and manage project tasks through natural conversation. This workflow uses an AI Project...

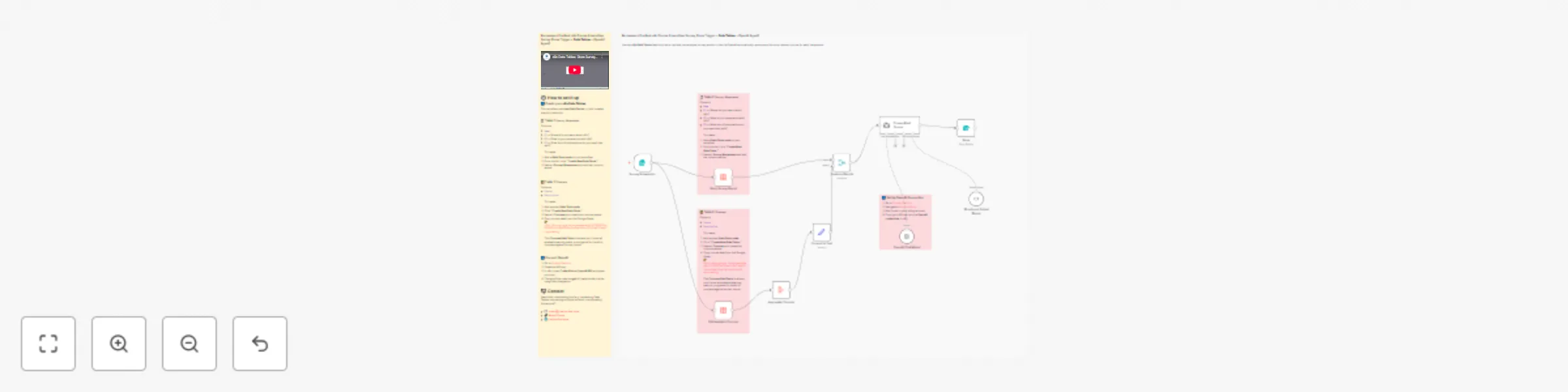

Course recommendation system for surveys with data tables and GPT-4.1-Mini

Use the n8n Data Tables feature to store, retrieve, and analyze survey results — then let OpenAI automatically recomm...

Enrich Google Sheets with Dun & Bradstreet data blocks

Automate company enrichment directly in Google Sheets using Dun & Bradstreet (D&B) Data Blocks. This workflow reads...

Extract structured data from D&B company reports with GPT-4o

Pull a Dun & Bradstreet Business Information Report (PDF) by DUNS, convert the response into a binary PDF file, extr...

Analyze images with OpenAI Vision while preserving binary data for reuse

Use this template to upload an image, run a first-pass OpenAI Vision analysis, then re-attach the original file (bi...

Voice-driven AI assistant using VAPI and GPT-4.1-mini with memory

Send VAPI voice requests into n8n with memory and OpenAI for conversational automation. This template shows how to cap...

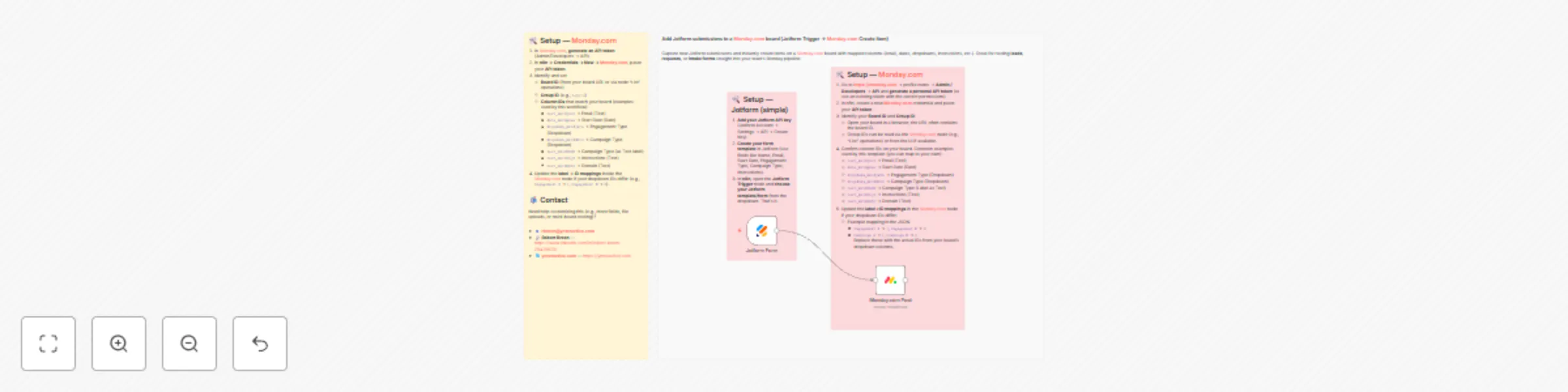

Create Monday.com board items from Jotform submissions with field mapping

Capture new Jotform submissions and instantly create items on a Monday.com board with mapped columns (email, date, dr...

Generate and split sample data records using JavaScript and Python

A minimal, plug-and-play workflow that generates sample data using n8n’s Code node (both JavaScript and Python versio...

Research business leads with Perplexity AI & save to Google Sheets using OpenAI

Automatically research new leads in your target area, structure the results with AI, and append them into Google Shee...

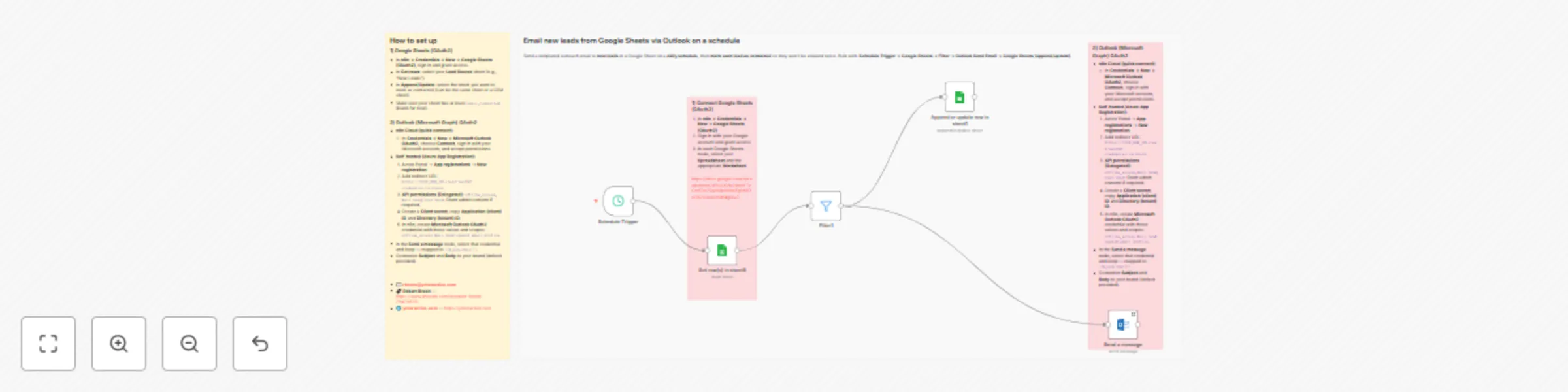

Email new leads from Google Sheets via Outlook on a schedule

Send a templated outreach email to new leads in a Google Sheet on a daily schedule, then mark each lead as contacted...

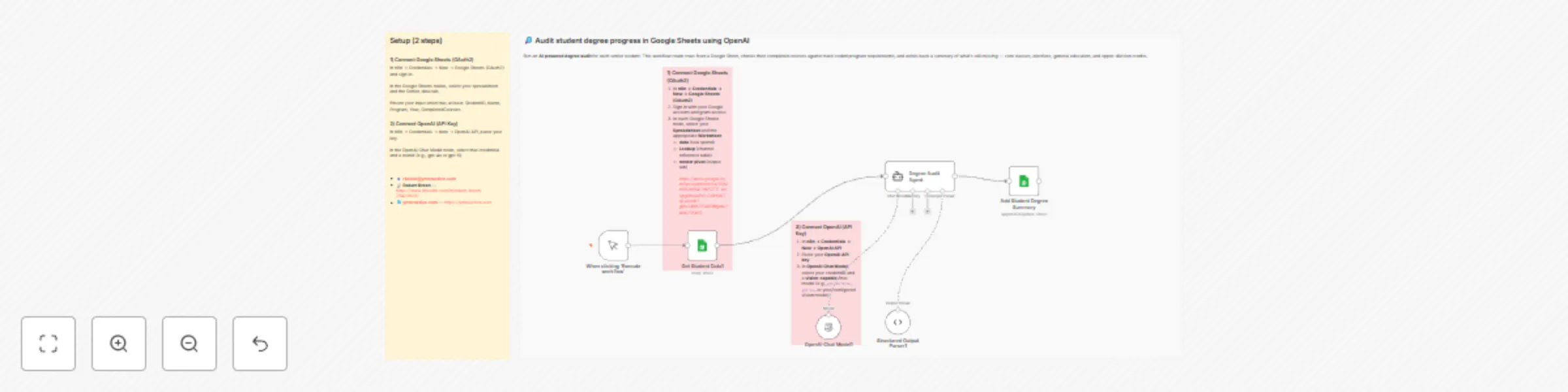

AI-powered degree audit system with Google Sheets and GPT-5

Run an AI-powered degree audit for each senior student. This template reads student rows from Google Sheets, evaluate...

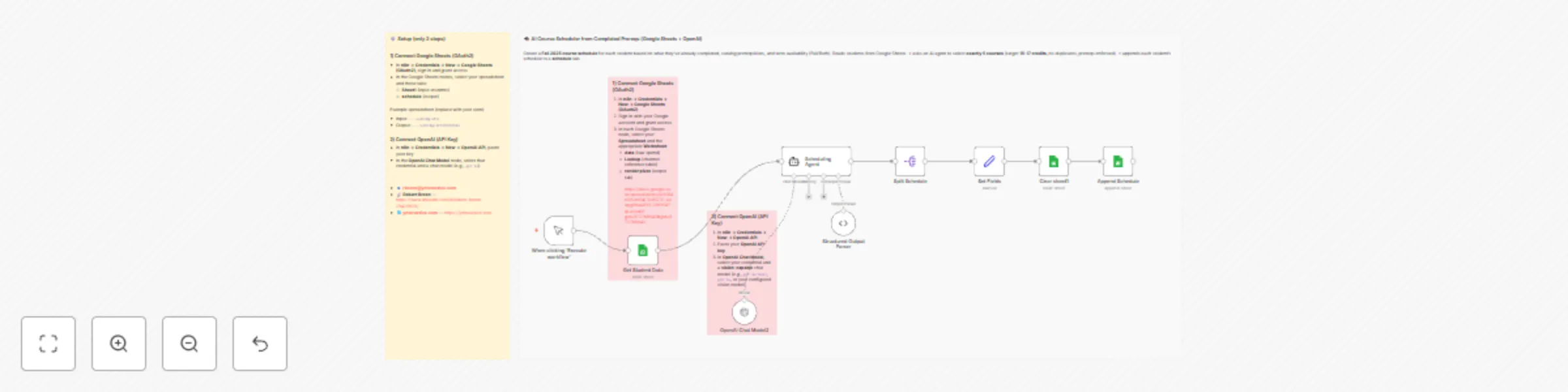

Generate student course schedules based on prerequisites with GPT and Google Sheets

Create a Fall 2025 course schedule for each student based on what they’ve already completed, catalog prerequisites, a...

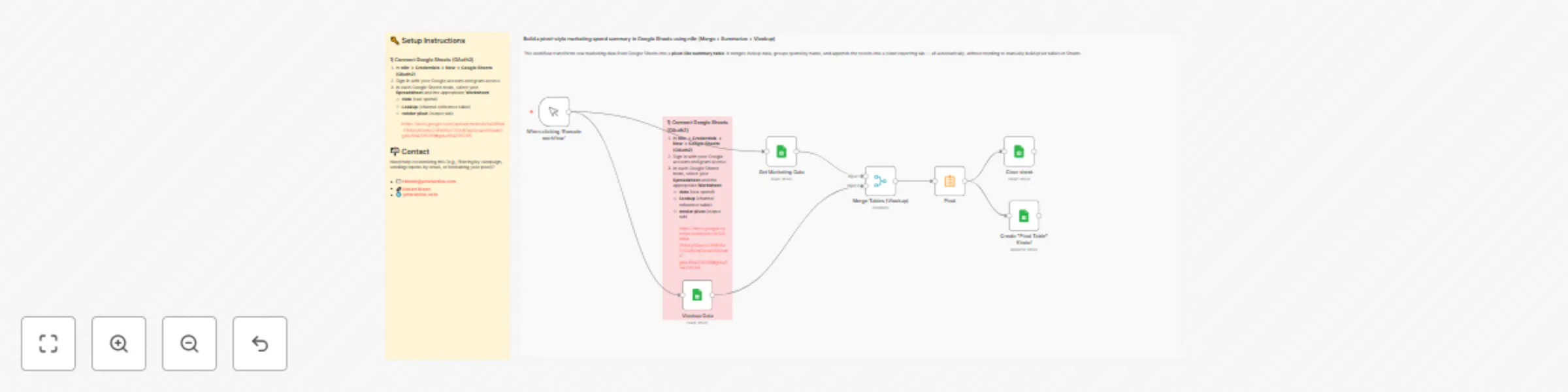

Aggregate marketing spend data with custom pivots & VLOOKUPs in Google Sheets

This workflow transforms raw marketing data from Google Sheets into a pivot-like summary table. It merges lookup dat...
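As a rough illustration of the pivot-plus-lookup idea described above, here is a minimal Python sketch. It is not taken from the workflow itself: the field names (`channel`, `spend`), the lookup table, and the `pivot_spend` helper are all hypothetical, and the real template may structure its data differently.

```python
from collections import defaultdict


def pivot_spend(rows, channel_lookup):
    """Sum spend per channel group, resolving groups VLOOKUP-style.

    `rows` and `channel_lookup` are hypothetical shapes chosen for
    illustration; they are not the workflow's actual schema.
    """
    totals = defaultdict(float)
    for row in rows:
        # VLOOKUP equivalent: map the raw channel to its group,
        # falling back to "Unmapped" when the key is missing.
        group = channel_lookup.get(row["channel"], "Unmapped")
        totals[group] += float(row["spend"])
    # Sort by group name so the summary table has a stable row order.
    return dict(sorted(totals.items()))


rows = [
    {"channel": "google_ads", "spend": 120.0},
    {"channel": "facebook", "spend": 80.0},
    {"channel": "google_ads", "spend": 30.0},
    {"channel": "billboard", "spend": 50.0},
]
channel_lookup = {"google_ads": "Paid Search", "facebook": "Paid Social"}

summary = pivot_spend(rows, channel_lookup)
```

Keeping the lookup as a plain dictionary with an explicit fallback makes unmatched channels visible in the summary instead of silently dropping them.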

Instagram visual analysis with Apify scraping, OpenAI GPT-5 & Google Sheets

Pull recent Instagram post media for any username, fetch the image binaries, and run automated visual analysis with O...

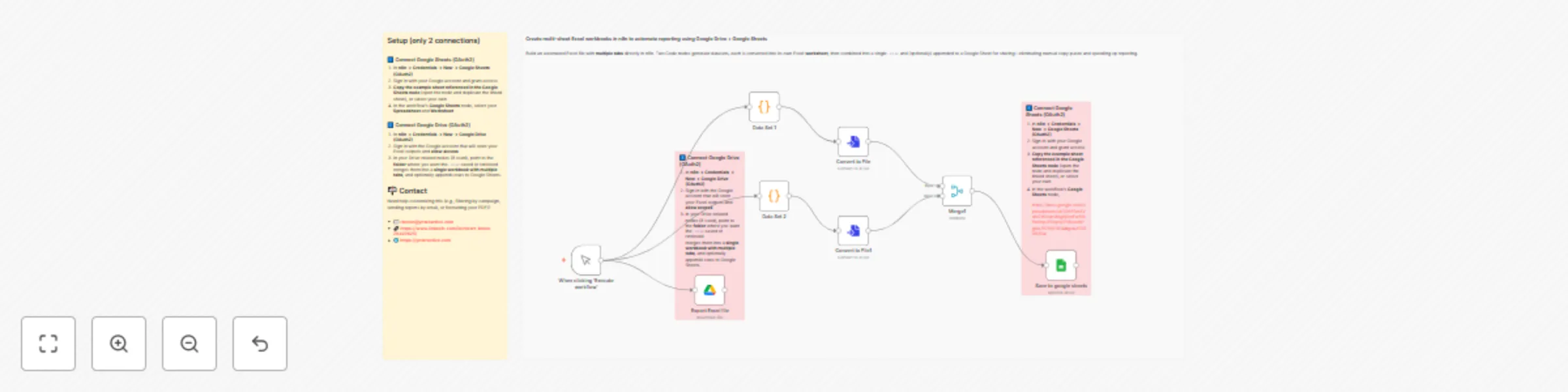

Create multi-sheet Excel workbooks by merging datasets with Google Drive & Sheets

Create multi-sheet Excel workbooks in n8n to automate reporting using Google Drive + Google Sheets. Build an automated...

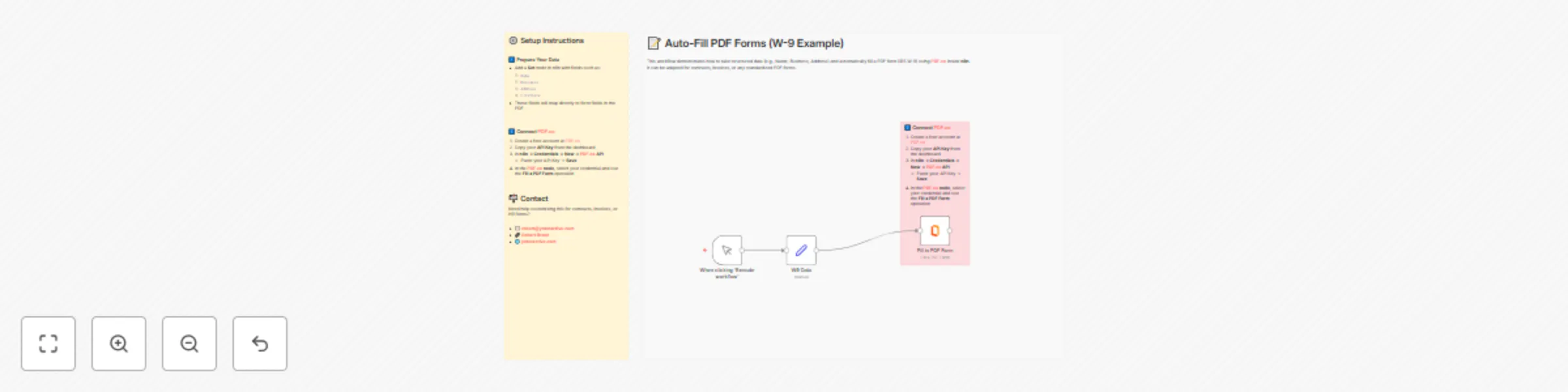

Automated PDF form filling for W-9 and more with PDF.co

This workflow demonstrates how to take structured data (e.g., Name, Business, Address) and automati...

Extract invoice data from Google Drive to Sheets using PDF.co AI parser

This workflow looks inside a Google Drive folder, parses each PDF invoice with PDF.co’s AI Invoice Parser, and appe...

Generate marketing reports from Google Sheets with GPT-4 insights and PDF.co

This workflow pulls marketing data from Google Sheets, aggregates spend by channel, generates an AI-written summary...

Generate PDF invoices from Google Sheets with PDF.co

This workflow automatically pulls invoice rows from Google Sheets and generates a PDF invoice using a PDF.co template...

Log new Gmail messages automatically in Google Sheets

This workflow automatically fetches new Gmail messages since the last run and appends them into a G...

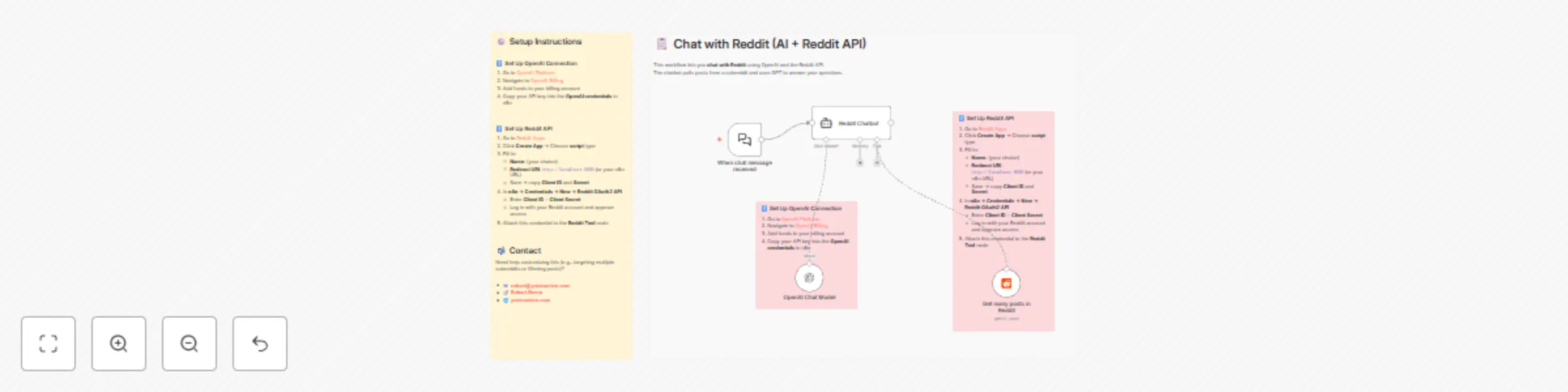

Reddit content Q&A chatbot with GPT-4o and Reddit API

This workflow lets you chat with Reddit using OpenAI and the Reddit API. The chatbot pulls posts from a subreddit and...

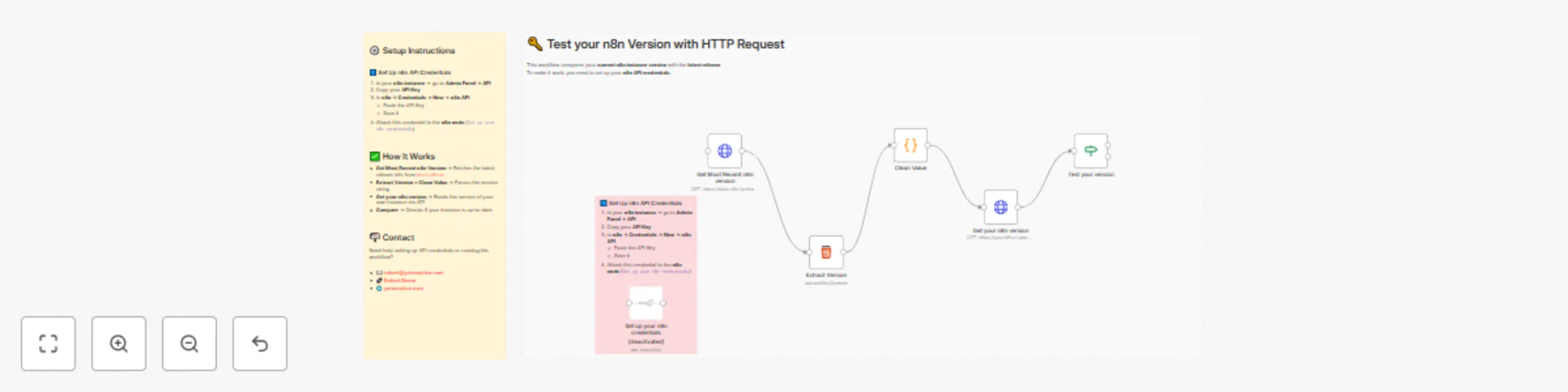

Compare your n8n version with latest release using n8n API

This workflow automatically compares the version of your n8n instance with the latest release avail...
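The comparison step can be sketched in a few lines of Python. This is an assumption-laden illustration rather than the workflow's own logic: `parse_version` and `is_outdated` are hypothetical helpers, the version strings are made up, and pre-release suffixes such as `-beta` are not handled.

```python
def parse_version(version):
    """Turn '1.2.3' or 'v1.2.3' into a tuple of ints for comparison."""
    return tuple(int(part) for part in version.lstrip("v").split("."))


def is_outdated(current, latest):
    """Return True when `current` is strictly older than `latest`."""
    # Tuples compare element by element, so 1.9.0 < 1.10.0 holds,
    # which a plain string comparison would get wrong.
    return parse_version(current) < parse_version(latest)


# Hypothetical version strings, for illustration only.
needs_update = is_outdated("1.9.0", "1.10.0")
```

Comparing integer tuples rather than raw strings avoids the common mistake of ranking `1.9.0` above `1.10.0`.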

Auto-categorize Outlook emails into color categories with GPT-4o

This workflow fetches recent emails from Outlook and uses OpenAI to assign a category (e.g., Red, Yellow, Green)....

Financial data Q&A chatbot with Google Finance, SerpAPI, and OpenAI

Replace with your actual SerpApi key. 2️⃣ Set Up OpenAI Connection: 1. Go to OpenAI Platform. 2. Navigate to Billing an...