Ranjan Dailata

Workflows by Ranjan Dailata

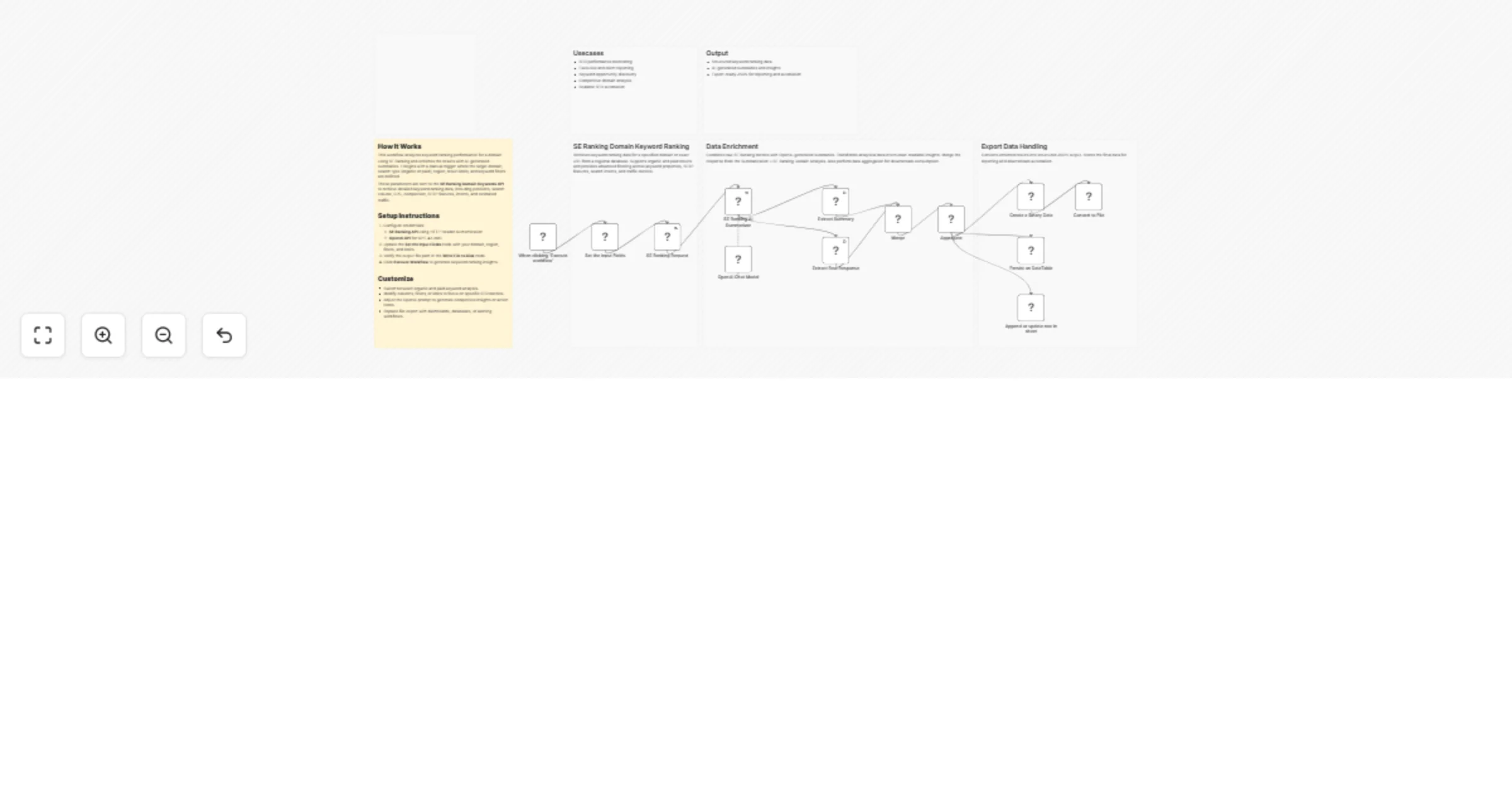

Analyze domain keyword rankings and summarize with SE Ranking and GPT-4.1-mini

This n8n workflow automates domain-level keyword ranking analysis and enriches raw SEO metrics with AI-generated summ...

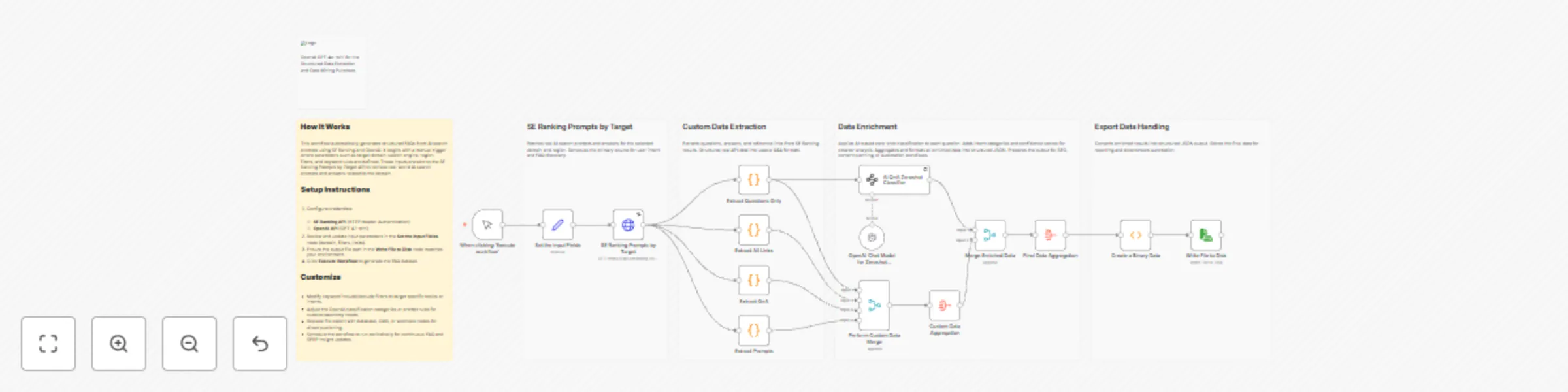

Monitor backlinks and generate SEO insights with SE Ranking and GPT-4.1-mini

This n8n workflow automates backlink monitoring, analysis, and AI-driven interpretation for any domain or URL. It com...

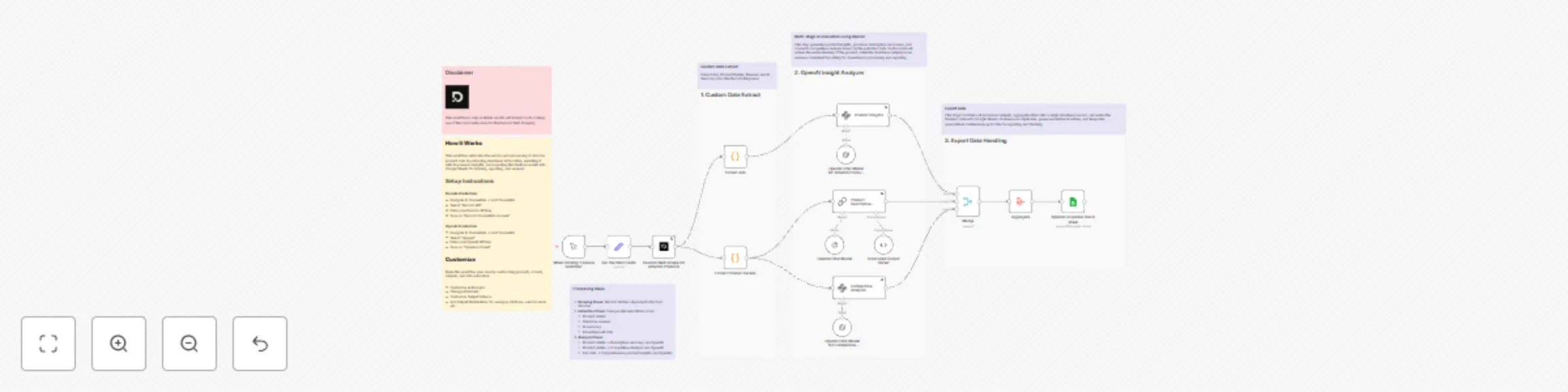

Analyze brand visibility in AI SERPs with SE Ranking and OpenAI GPT-4.1 mini

This workflow automates brand intelligence analysis across AI-powered search results by combining SE Ranking’s AI Sea...

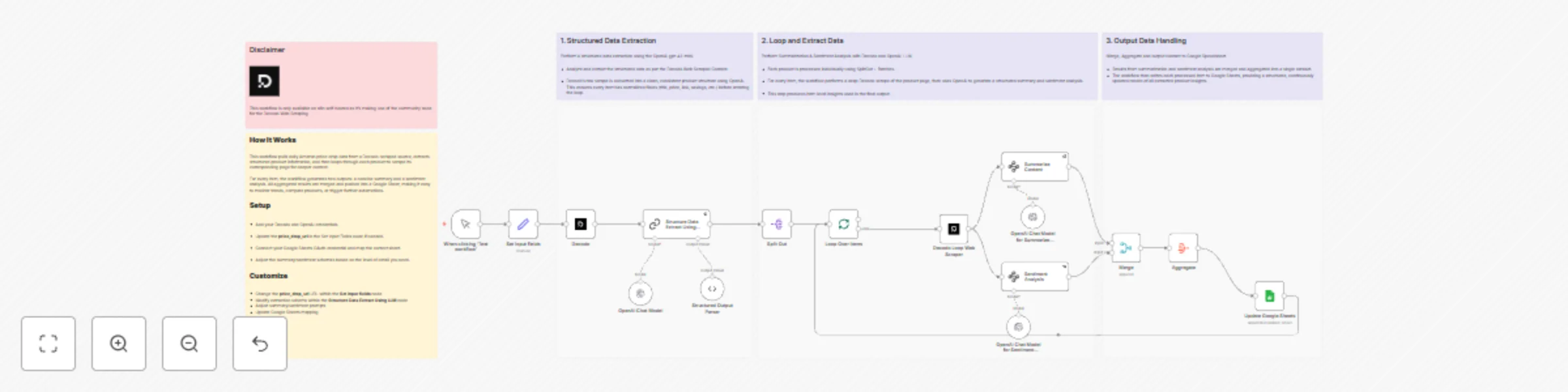

Generate AI search–driven FAQ insights for SEO with SE Ranking and OpenAI GPT-4.1-mini

This workflow automates the discovery and structuring of FAQs from real AI search behavior using SE Ranking and OpenA...

Summarize SE Ranking AI search visibility using OpenAI GPT-4.1-mini

This workflow automates AI-powered search insights by combining SE Ranking AI Search data with OpenAI summarization...

Scrape and analyze Amazon product info with Decodo + OpenAI

The Scrape and Analyze Amazon Product Info with Decodo + OpenAI workflow automates the process of extracting product...

Amazon price drop analysis with Decodo, GPT-4.1-mini & Google Sheets integration

This workflow automatically scrapes Amazon price drop data via Decodo, extracts structured product details with OpenA...

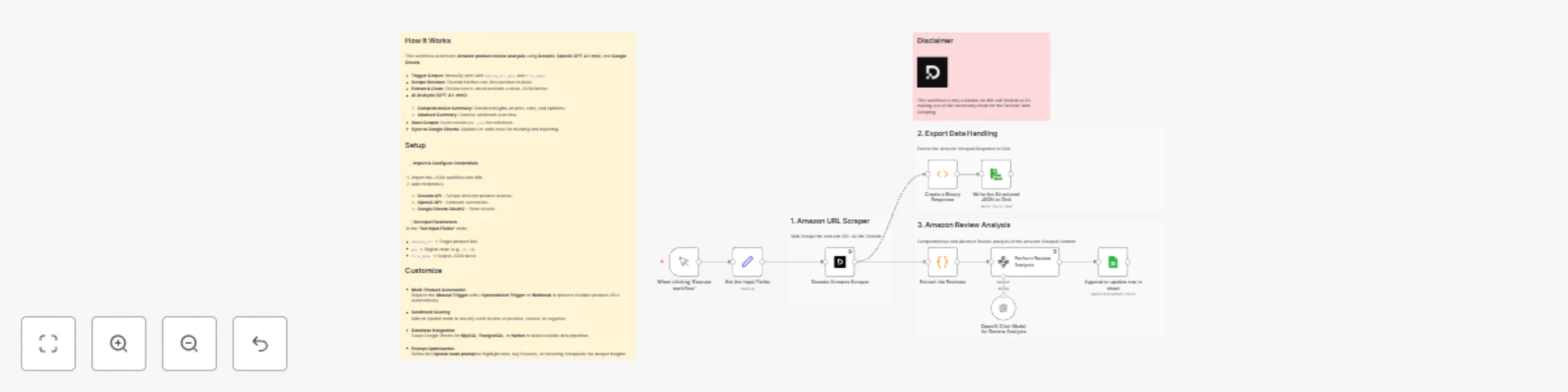

Analyze & summarize Amazon product reviews with Decodo, OpenAI and Google Sheets

Disclaimer: Please note that this workflow is only available on self-hosted n8n, as it makes use of the community node fo...

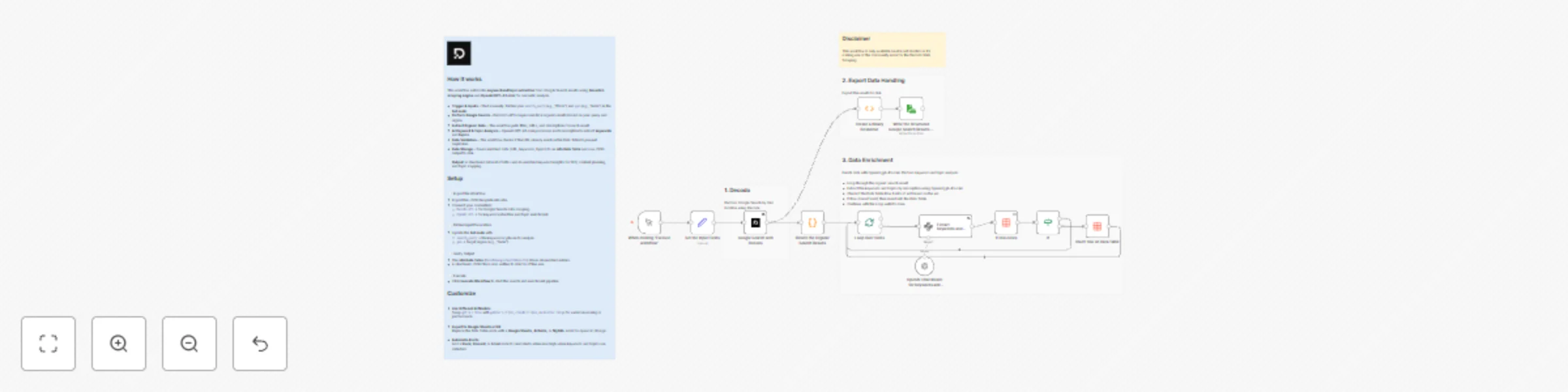

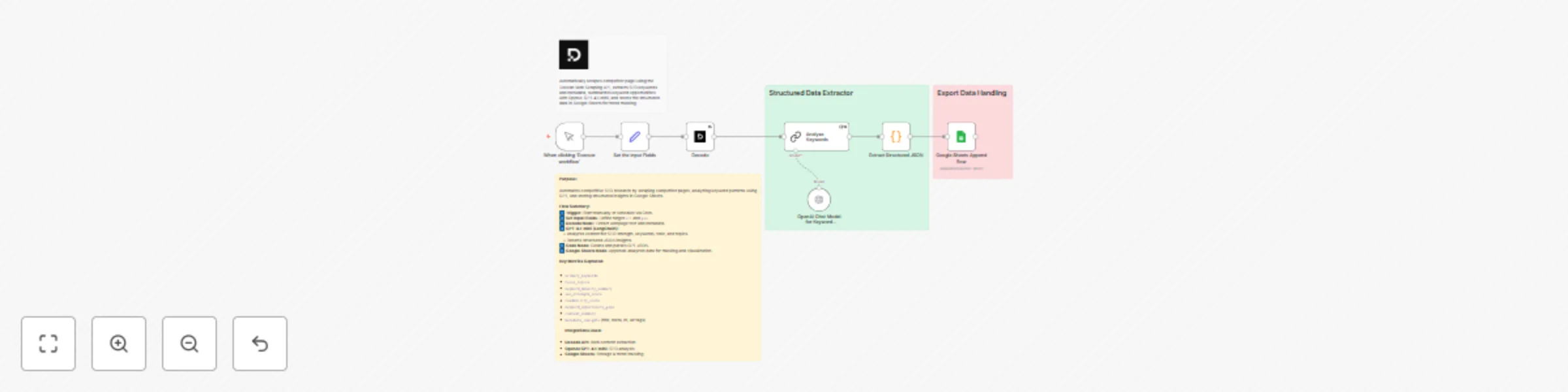

Search & enrich: Smart keyword analysis with Decodo + OpenAI GPT-4.1-mini

Disclaimer: Please note that this workflow is only available on self-hosted n8n, as it makes use of the community node fo...

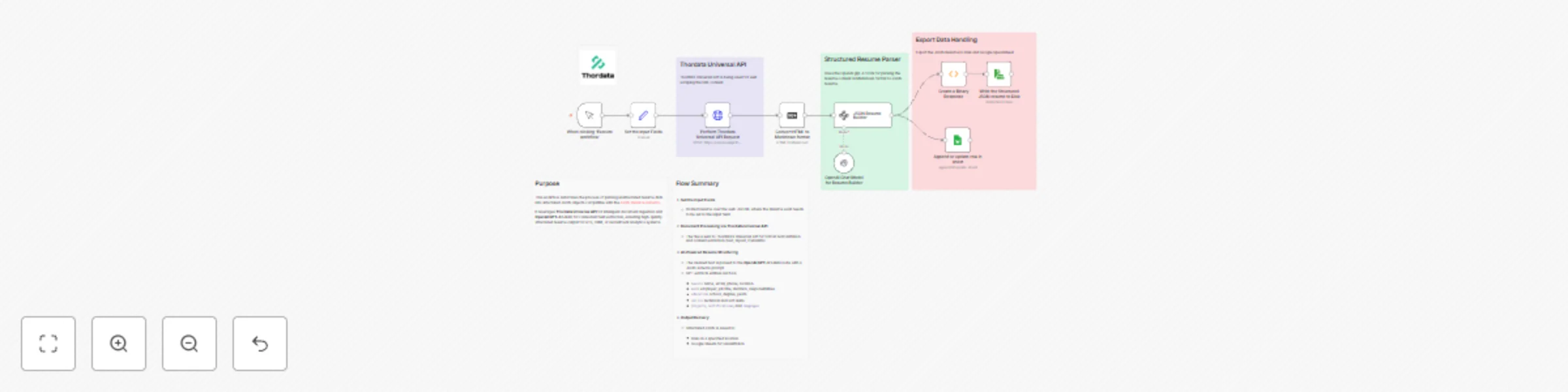

Unstructured resume parser with Thordata Universal API + OpenAI GPT-4.1-mini

Who this is for: This workflow is designed for recruiters, talent intelligence teams, and HR tech builders automating...

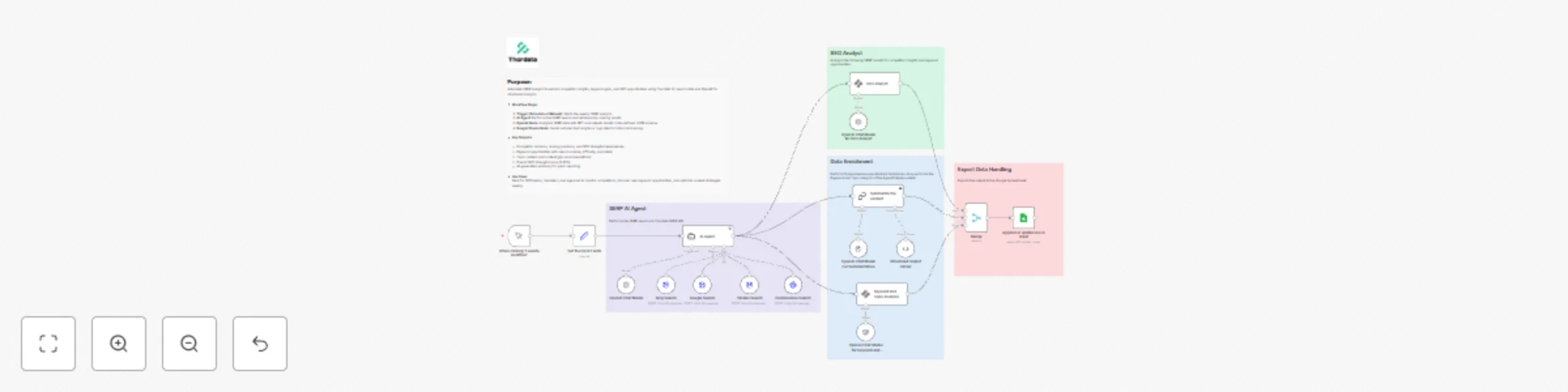

Competitor intelligence agent: SERP monitoring + summary with Thordata + OpenAI

Who this is for: This workflow is designed for marketing analysts, SEO specialists, and content strategists who wa...

Track competitor SEO keywords with Decodo + GPT-4.1-mini + Google Sheets

This workflow automates competitor keyword research using an OpenAI LLM and Decodo for intelligent web scraping. Who thi...

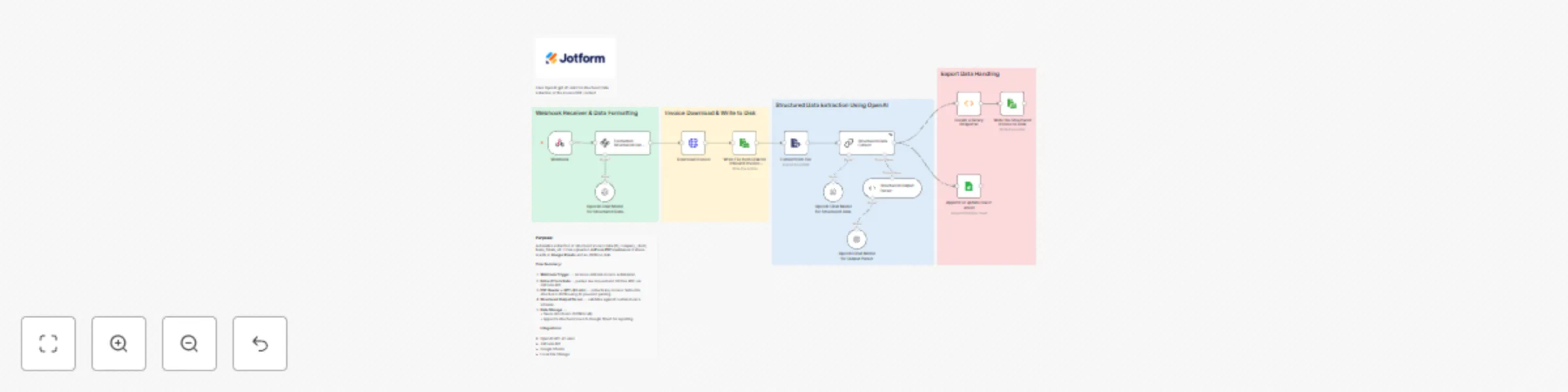

Extract structured invoice data from JotForm PDFs with GPT-4.1-mini & Sheets

Who this is for: This workflow is designed for finance teams, accounting professionals, and automation engineers. Use...

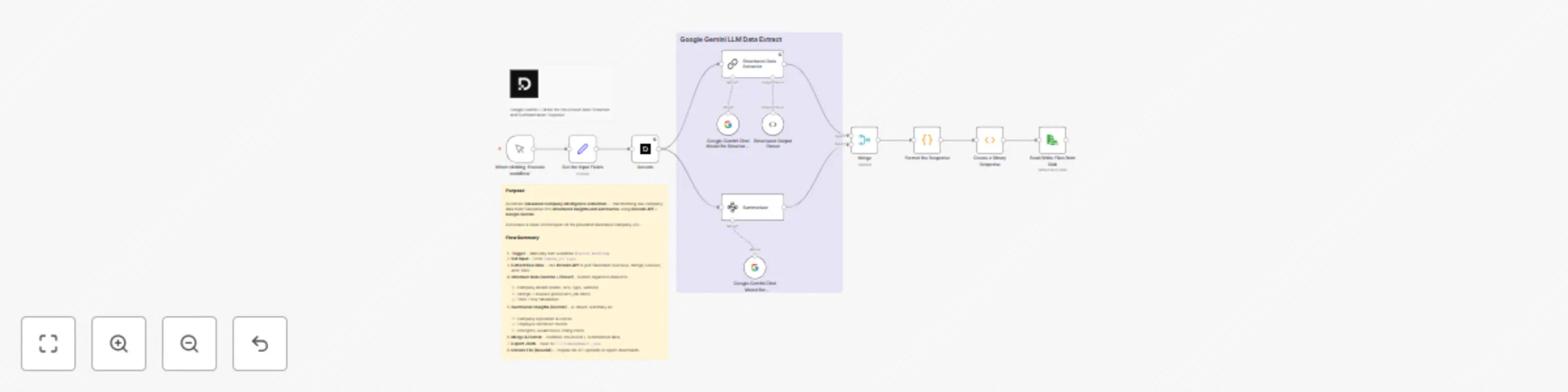

Summarize & extract Glassdoor company info with Google Gemini and Decodo

This workflow automates company research and intelligence extraction from Glassdoor using the Decodo API for data retriev...

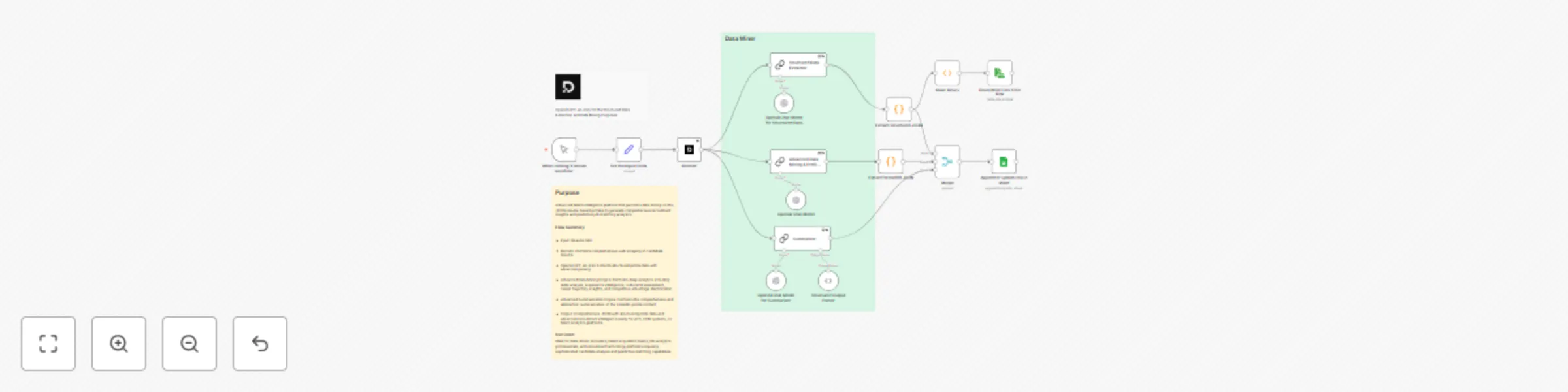

Resume intelligence and data mining using Decodo with GPT-4o-mini

1. Who this is for: This workflow is specifically designed for recruiters, HR analytics teams, and data-driven talent...

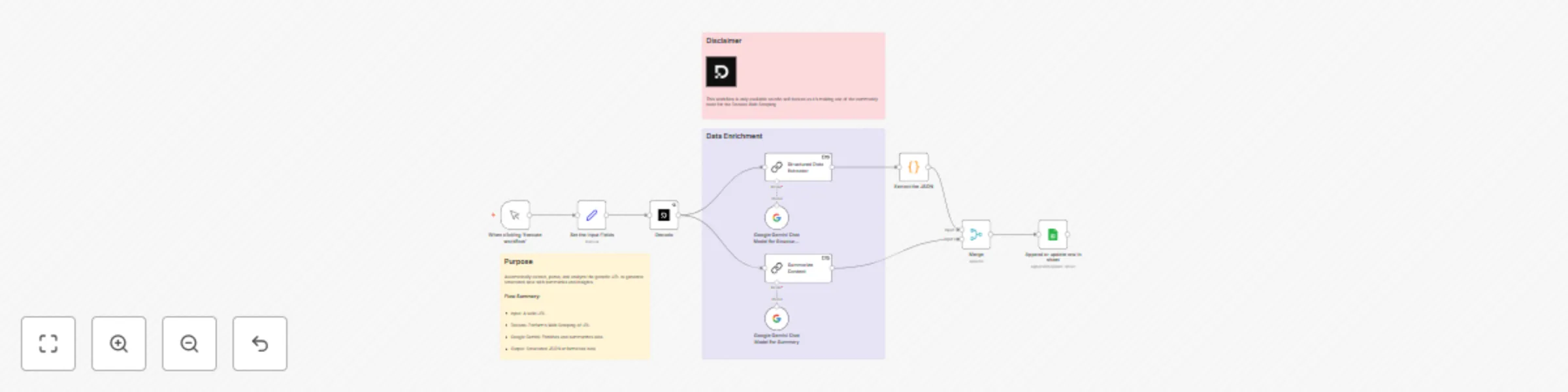

Automated structured data extract & summary via Decodo + Gemini & Google Sheets

Who this is for: This workflow is designed for automation engineers building AI-powered data pipelines, product manage...

Generate comprehensive & abstract summaries from Jotform data with Gemini AI

Who this is for: This workflow is designed for researchers, marketing teams, customer success managers, and survey ana...

Automatic Topic & Sentiment Extraction from Jotform Responses with Google Gemini

Who this is for: This workflow is designed for teams that collect feedback or survey responses via Jotform and want to...

Extract & summarize LinkedIn profiles with Bright Data, Google Gemini & Supabase

This workflow contains community nodes that are only compatible with the self-hosted version of n8n. Overview: This wo...

Create structured eBooks in minutes with Google Gemini Flash 2.0 to Google Docs

This workflow contains community nodes that are only compatible with the self-hosted version of n8n. Description: This...

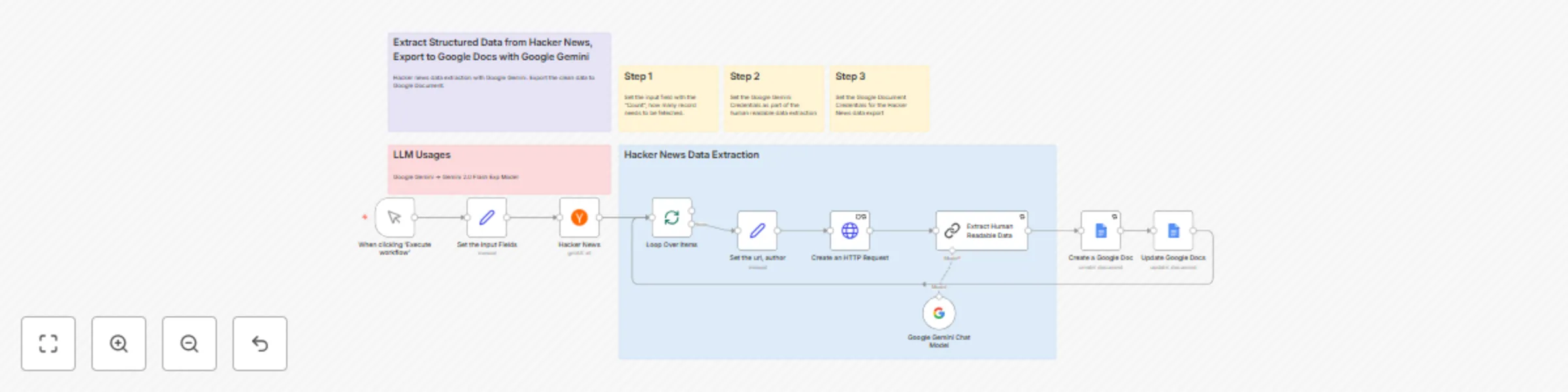

Extract & transform HackerNews data to Google Docs using Gemini 2.0 Flash

Description: This workflow automates the process of scraping the latest discussions from HackerNews, transforming raw...

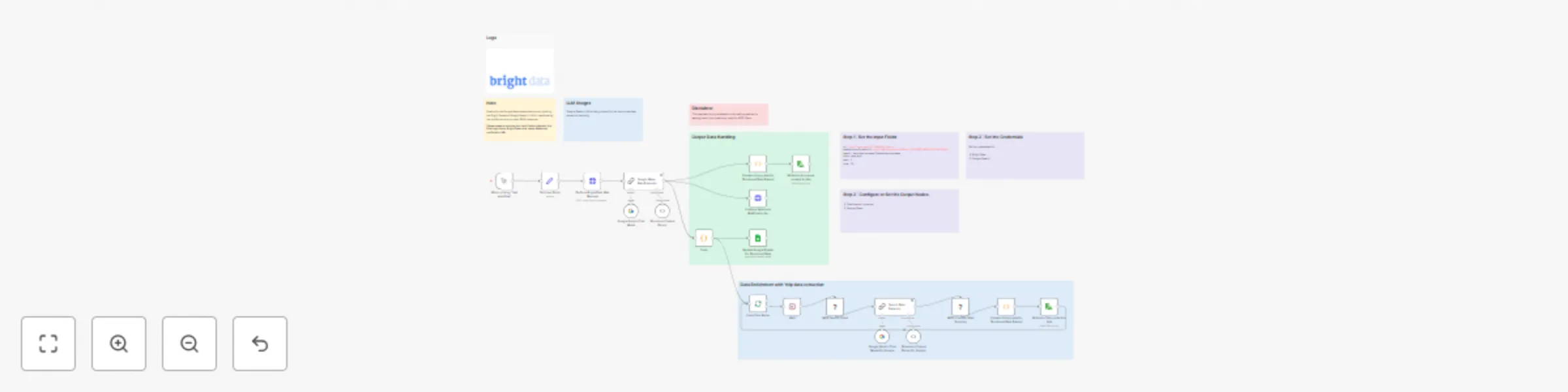

Google Maps business scraper & lead enricher with Bright Data & Google Gemini

Notice: Community nodes can only be installed on self-hosted instances of n8n. Description: This workflow...

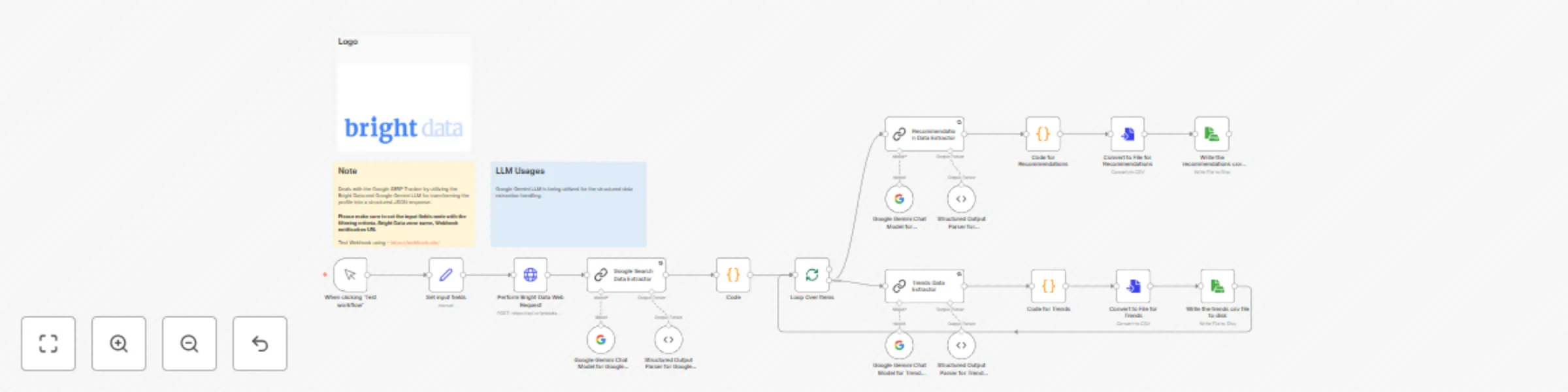

Google SERP + trends and recommendations with Bright Data & Google Gemini

Who this is for: Google SERP Tracker + Trends and Recommendations is an AI-powered n8n workflow that extracts Google...

Real-time extract of job, company, salary details via Bright Data MCP & OpenAI

Notice: Community nodes can only be installed on self-hosted instances of n8n. Who this is ...