Jez

6

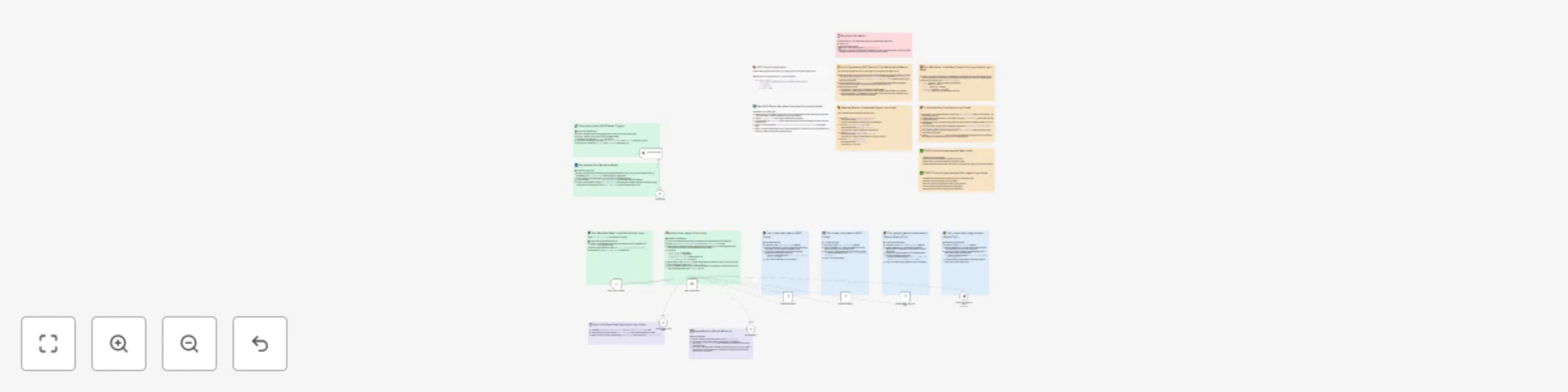

Workflows by Jez

Free advanced

Process documents & build semantic search with OpenAI, Gemini & Qdrant

This n8n workflow automates the process of ingesting documents from multiple sources (Google Drive and web forms) int...

Jez AI RAG

26 Aug 2025

856

0

Free advanced

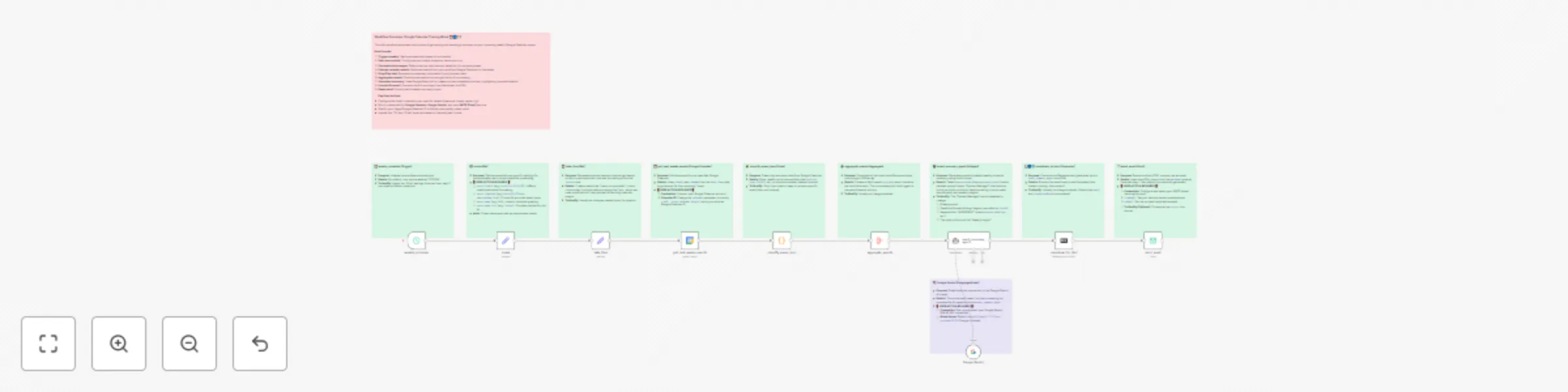

Discover business leads with Gemini, Brave Search and web scraping

This workflow contains community nodes that are only compatible with the self-hosted version of n8n. Uncover new busi...

Jez Lead Generation

13 Jun 2025

3363

0

Free advanced

AI-powered local event finder with multi-tool search

Summary: This n8n workflow implements an AI-powered "Local Event Finder" agent. It takes user criteria (like event typ...

Jez Personal Productivity

9 Jun 2025

765

0

Free advanced

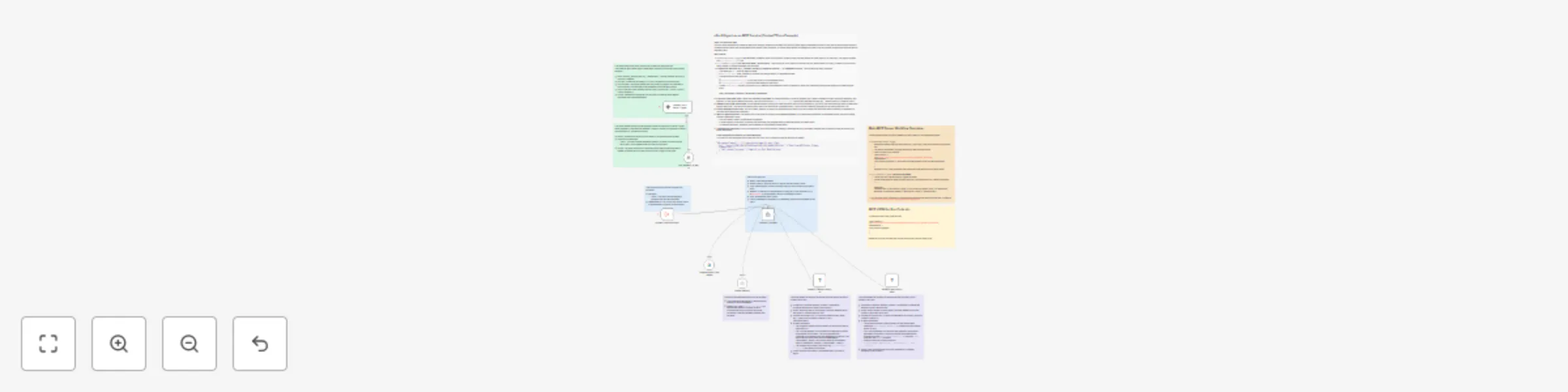

Automated weekly Google Calendar summary via email with AI ✨🗓️📧

Workflow: Automated Weekly Google Calendar Summary via Email with AI ✨🗓️📧 Get a personalized, AI-powered summary of...

Jez Personal Productivity

8 Jun 2025

4706

0

Free advanced

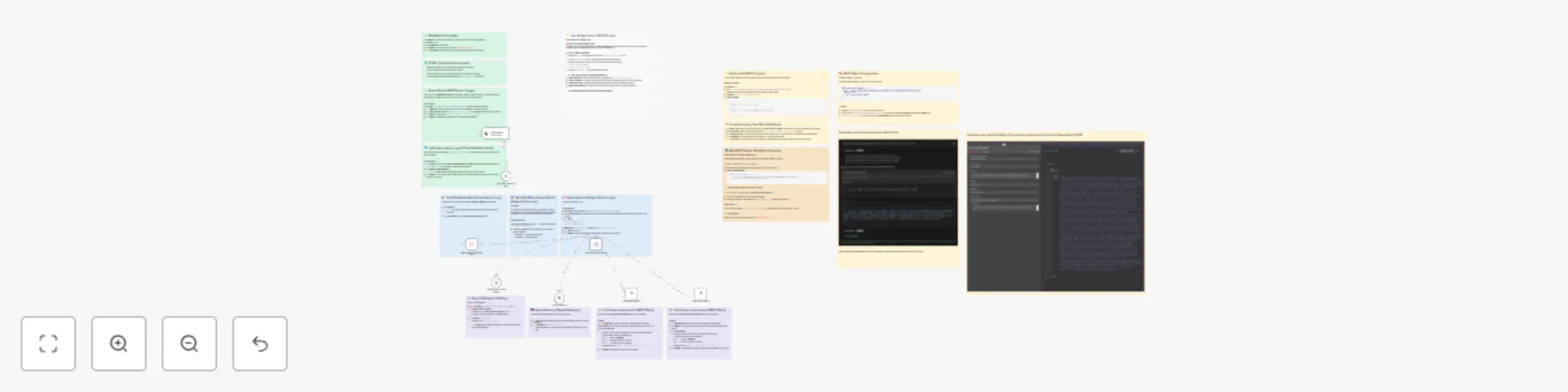

Intelligent web & local search with Brave Search API and Google Gemini MCP Server

Summary: This n8n workflow implements an AI-powered agent that intelligently uses the Brave Search API (via an externa...

Jez Engineering

1 Jun 2025

1701

0

Free advanced

Documentation Lookup AI Agent using Context7 and Gemini

This n8n workflow template uses community nodes and is only compatible with the self-hosted version of n8n. This work...

Jez Internal Wiki

1 Jun 2025

1599

0