explorium

Workflows by explorium

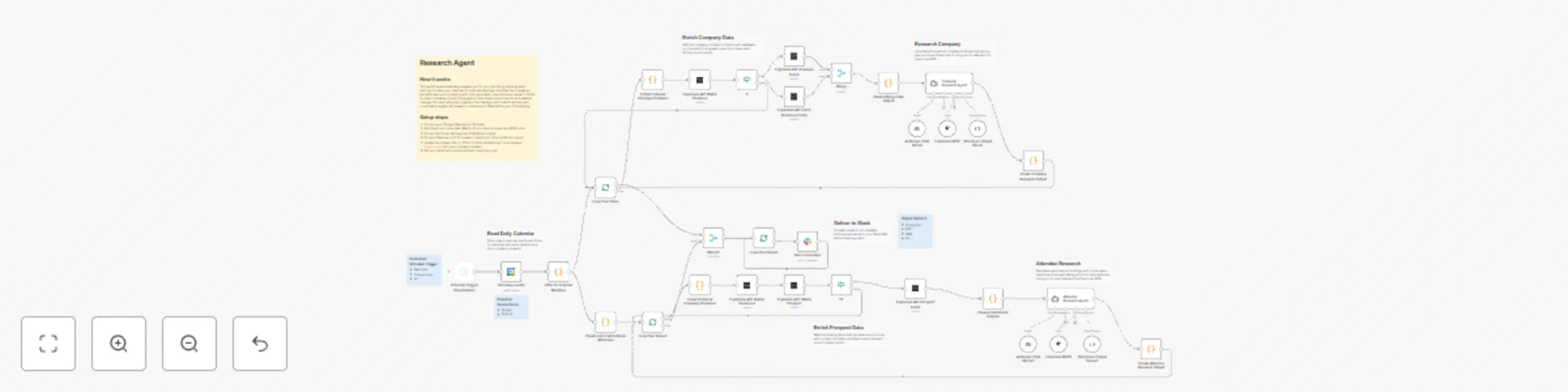

Automate sales meeting prep with Claude AI & Explorium Intelligence

Research Agent: Automated Sales Meeting Intelligence. This n8n workflow automatically prepares comprehensive sales rese...

Generate personalized sales leads with Claude AI & Explorium for Gmail outreach

Outbound Agent: AI-Powered Lead Generation with Natural Language Prospecting. This n8n workflow transforms natural lang...

Qualify leads with Salesforce, Explorium data & Claude AI analysis of API usage

Inbound Agent: AI-Powered Lead Qualification with Product Usage Intelligence. This n8n workflow automatically qualifies...

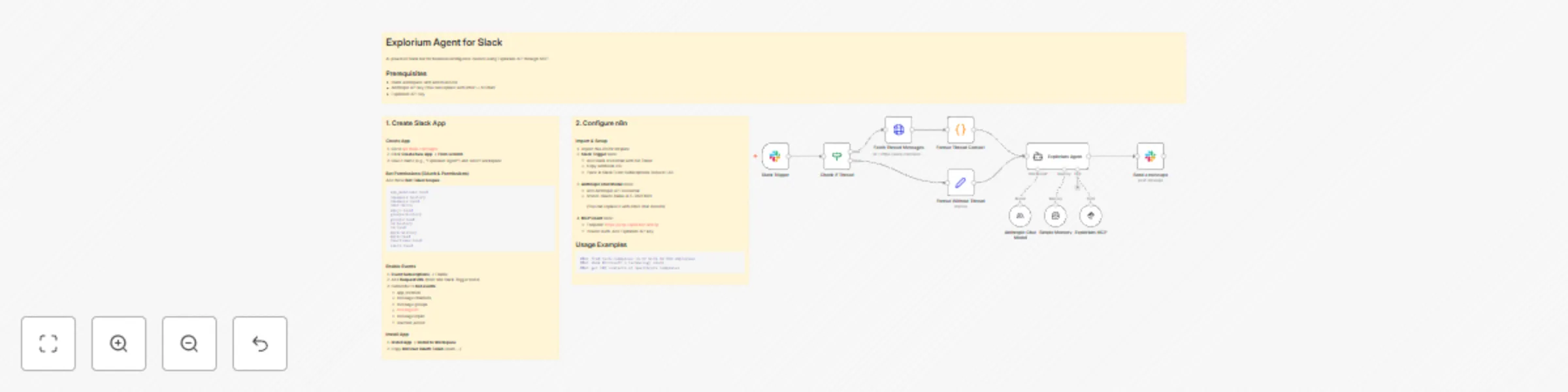

Business intelligence assistant for Slack using Explorium MCP & Claude AI

Explorium Agent for Slack: an AI-powered Slack bot for business intelligence queries using the Explorium API through MCP. Pre...

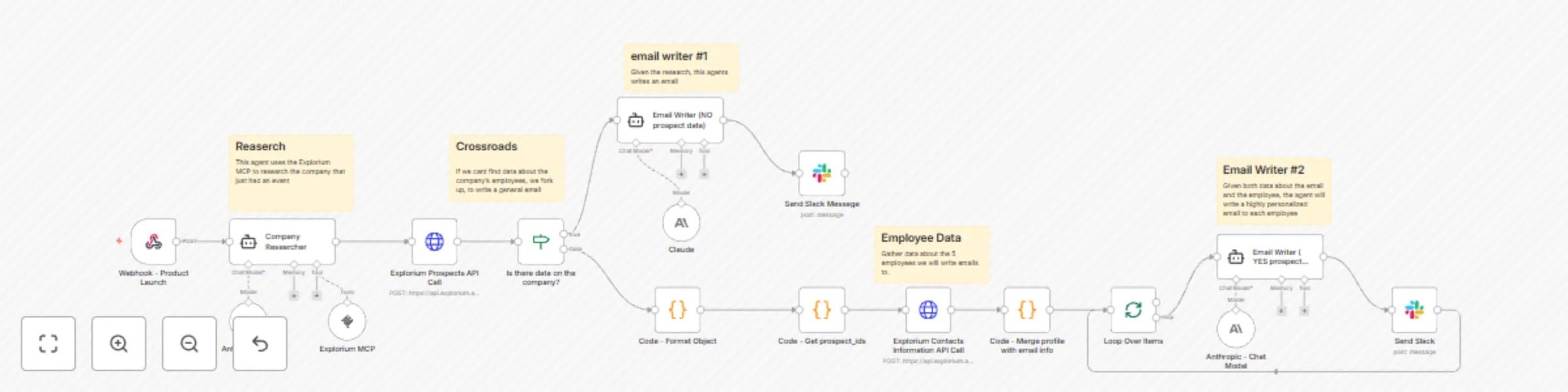

Generate sales emails based on business events with Explorium MCP & Claude

Explorium Event-Triggered Outreach. This n8n and agent-based workflow automates outbound prospecting by monitoring Exp...

Search business prospects with natural language using Claude AI and Explorium MCP

Explorium Prospects Search Chatbot Template. Download the following JSON file and import it to a new n8n workflow: [mc...

Automated AI lead enrichment: HubSpot to Explorium for enhanced prospect data

HubSpot Contact Enrichment with Explorium Template. Download the following JSON file and import it to a new n8n workfl...

Automated AI lead enrichment: Salesforce to Explorium for enhanced prospect data

Salesforce Lead Enrichment with Explorium Template. Download the following JSON file and import it to a new n8n workfl...

Automate HubSpot to Salesforce lead creation with Explorium AI enrichment

Automatically enrich prospect data from HubSpot using Explorium and create leads in Salesforce. This n8n workflow stre...

Enrich company firmographic data in Google Sheets with Explorium MCP

Google Sheets Company Enrichment with Explorium MCP Template. Download the following JSON file and import it to a new...