David Olusola

Workflows by David Olusola

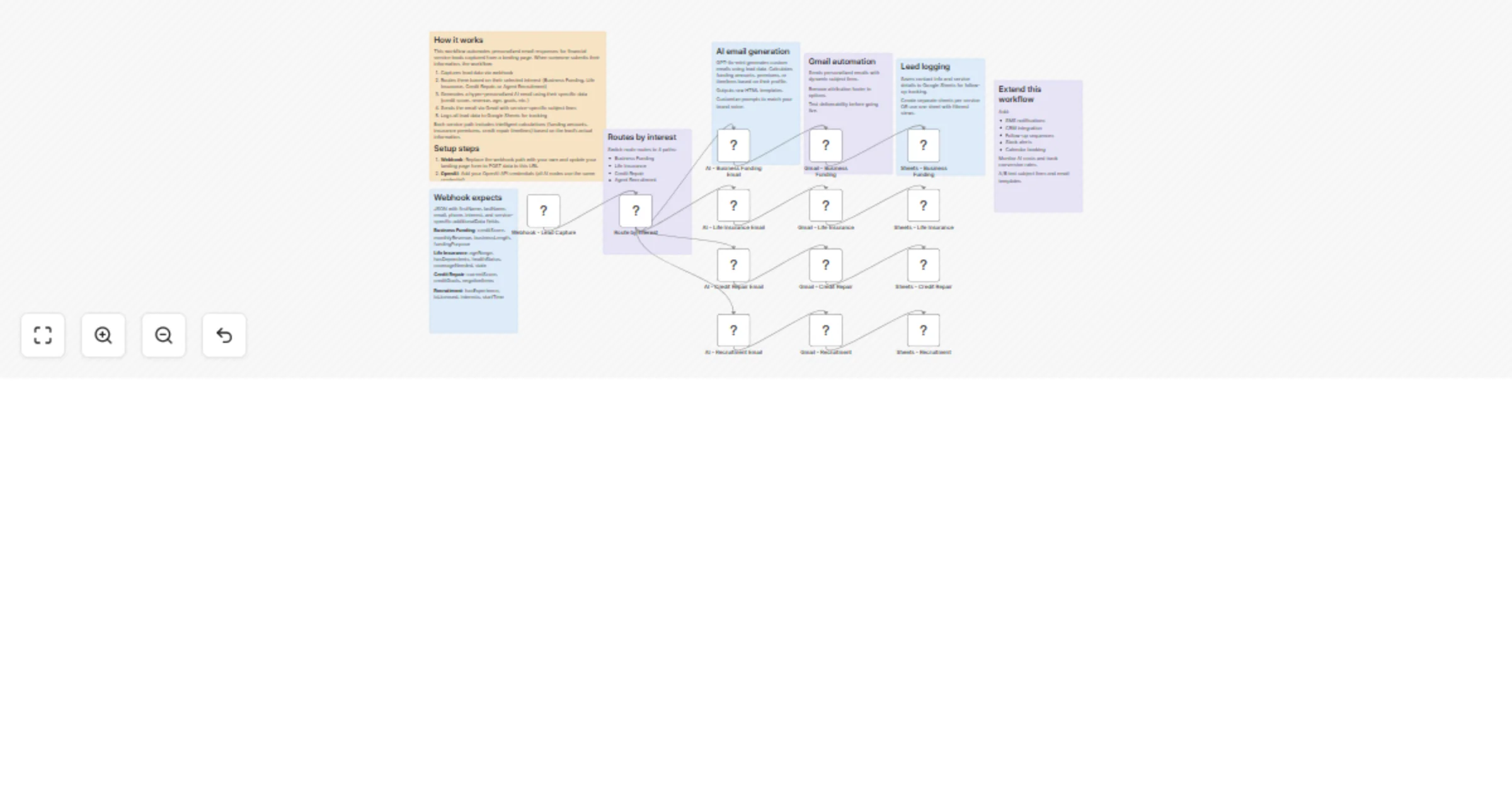

Route and nurture financial services leads with OpenAI, Gmail and Google Sheets

Financial services lead magnet with Lovable/Base44 and n8n. Automatically route and nurture leads for Business Funding...

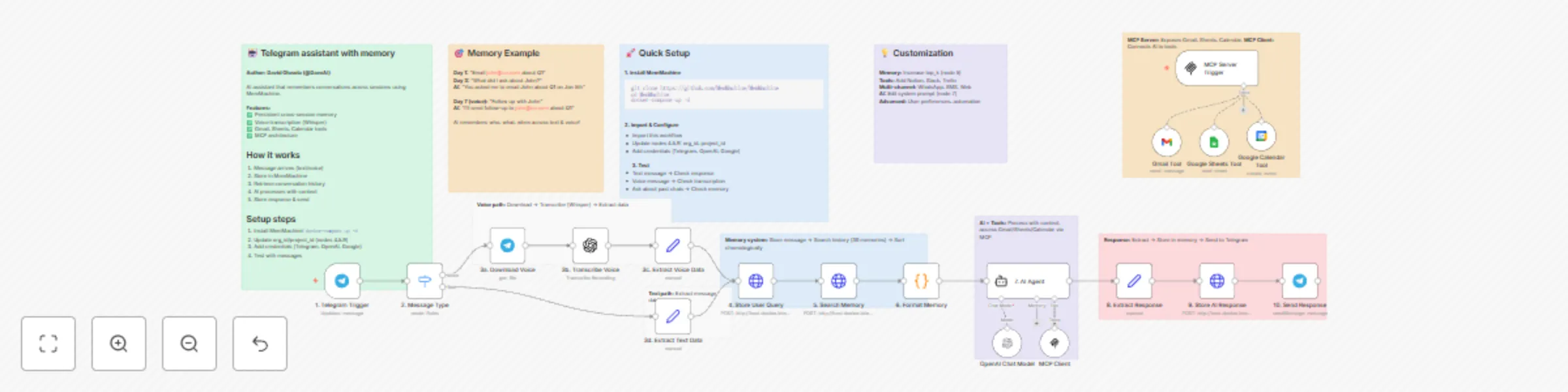

Build a Telegram AI assistant with MemMachine, OpenAI, and voice support

Build a Telegram assistant with MemMachine and voice support. An AI assistant that NEVER forgets, using MemMachine for...

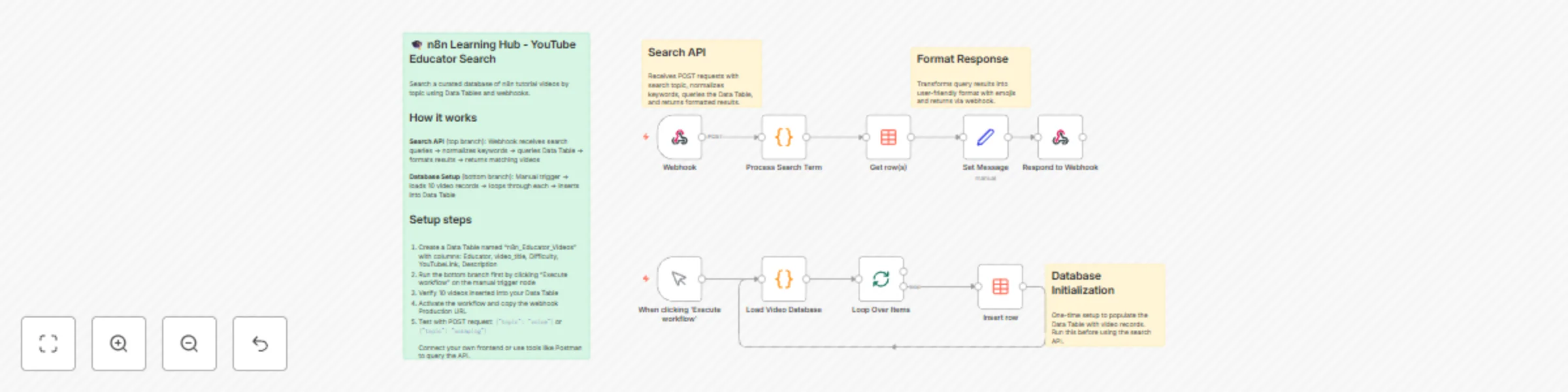

Create a searchable YouTube educator directory with smart keyword matching

🎓 n8n Learning Hub: AI-Powered YouTube Educator Directory. 📋 Overview: This workflow demonstrates how to use n8n Dat...

Sync leads from Google Sheets to Instantly email campaigns with data tables

🚀 Automated Lead Management: Google Sheets → Instantly + n8n Data Tables. 📋 Overview: This workflow automates lead ma...

AI resume screening with GPT-4o & error handling | Google Sheets & Drive flow

GPT-4o Resume Screener with Error Handling: Google Sheets & Drive Pipeline. How it works: Enterprise-grade resume screen...

AI resume screening with Gmail, GPT-4o & Google Sheets - automated hiring pipeline

AI Resume Screening with GPT-4o & Google Drive: Automated Hiring Pipeline. How it works: Transform your hiring process w...

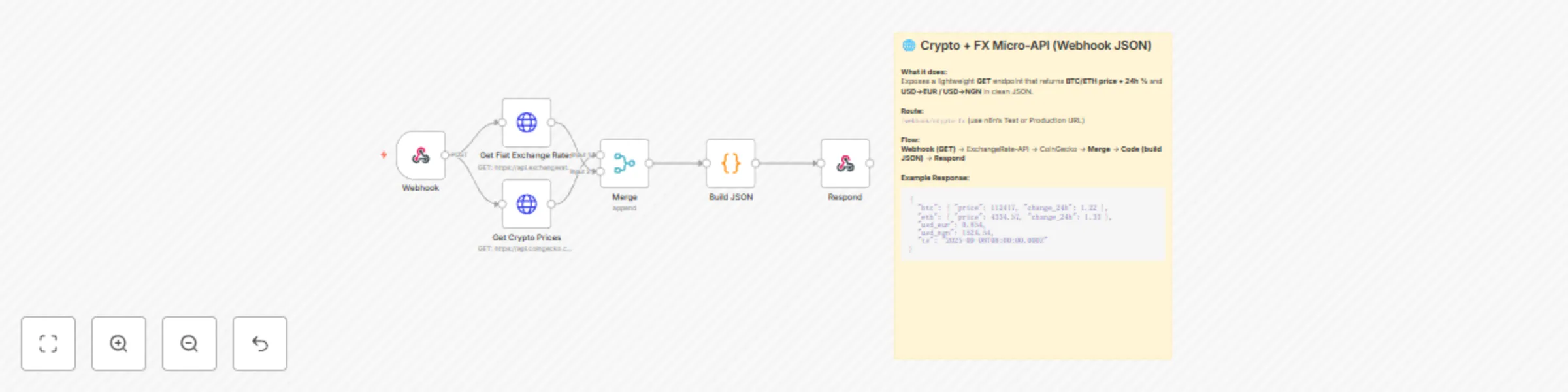

Create a BTC/ETH price & USD exchange rate API with CoinGecko & ExchangeRate-API

🌐 Crypto + FX Micro API (Webhook JSON). 📌 Overview: Spin up a tiny, serverless-style API from n8n that returns BTC/ET...

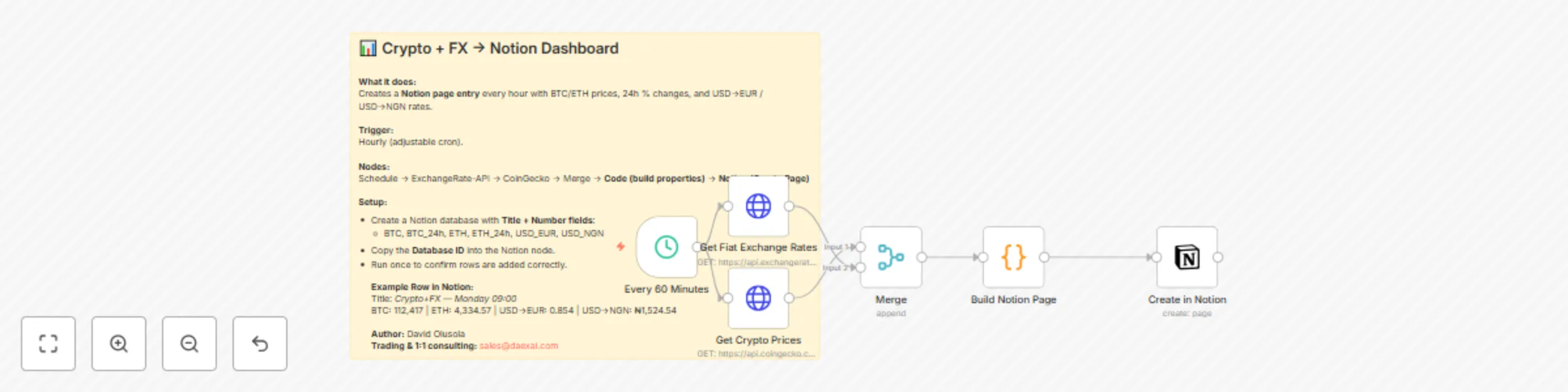

Track crypto prices & FX rates with CoinGecko & ExchangeRate-API to Notion

📊 Log BTC/ETH Prices and USD Exchange Rates to Notion (Hourly). 📌 Overview: This workflow automatically logs live cry...

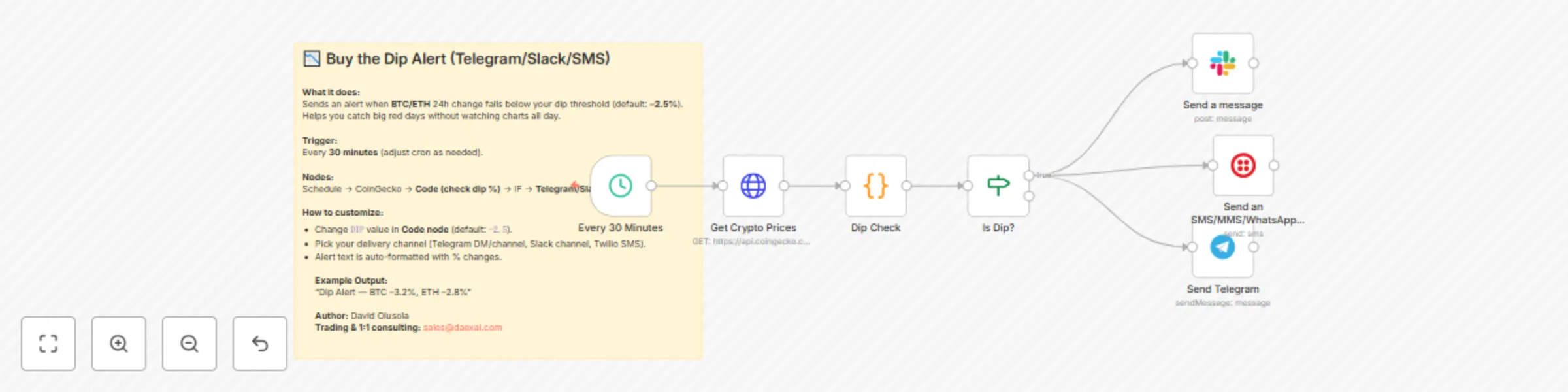

Cryptocurrency dip alerts for Bitcoin & Ethereum via Telegram, Slack & SMS

📉 Buy the Dip Alert (Telegram/Slack/SMS). 📌 Overview: This workflow automatically notifies you when Bitcoin or Ethere...

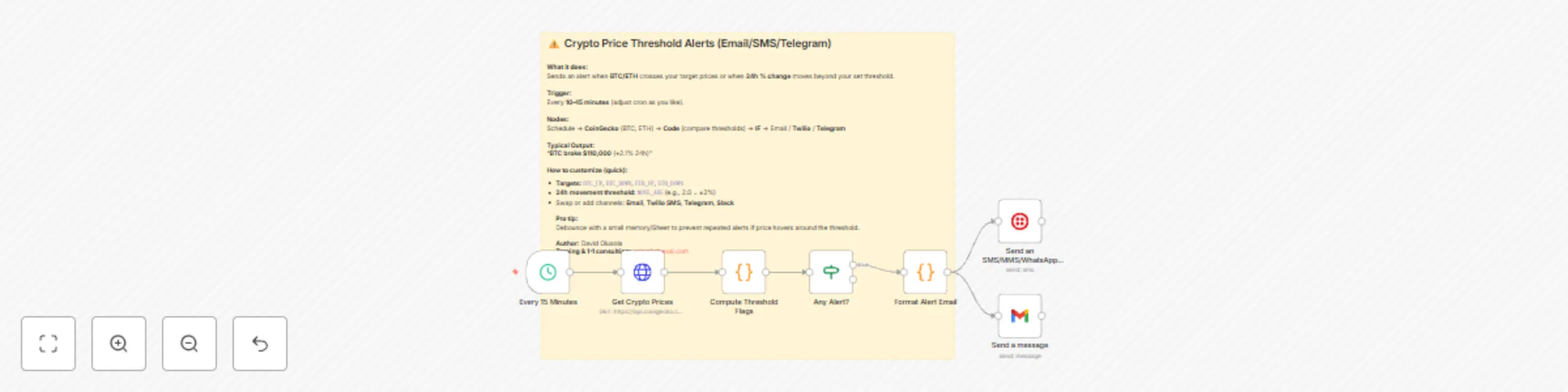

Monitor Bitcoin & Ethereum prices with CoinGecko alerts via Email/SMS

⚠️ Crypto Price Threshold Alerts (Email/SMS/Telegram) 📌 Overview This workflow monitors Bitcoin (BTC) and Ethereum (...

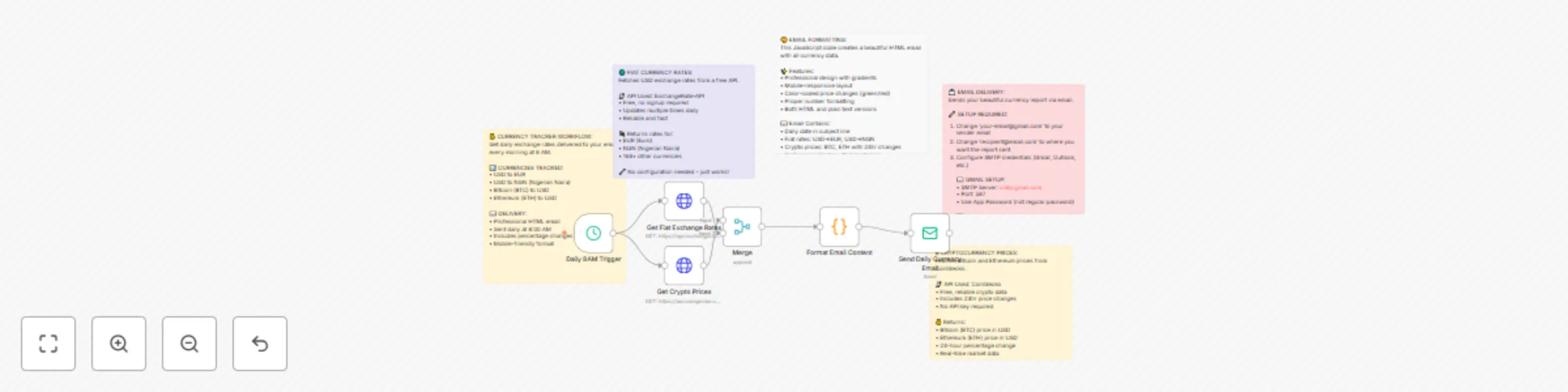

Daily currency rates email report with USD→EUR/NGN & BTC/ETH price tracking

💰 Track Daily Fiat & Crypto Exchange Rates Report with ExchangeRate-API & CoinGecko. A simple, reliable workflow that...

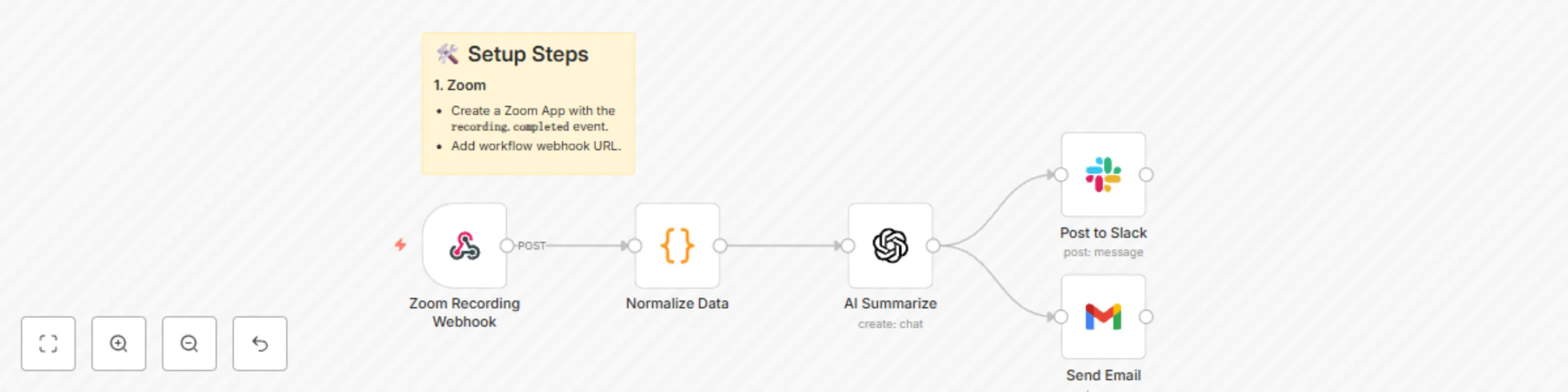

Auto-summarize Zoom recordings with GPT-4 → Slack & email

🎥 Auto-Summarize Zoom Recordings → Slack & Email. Never lose meeting insights again! This workflow automatically summ...

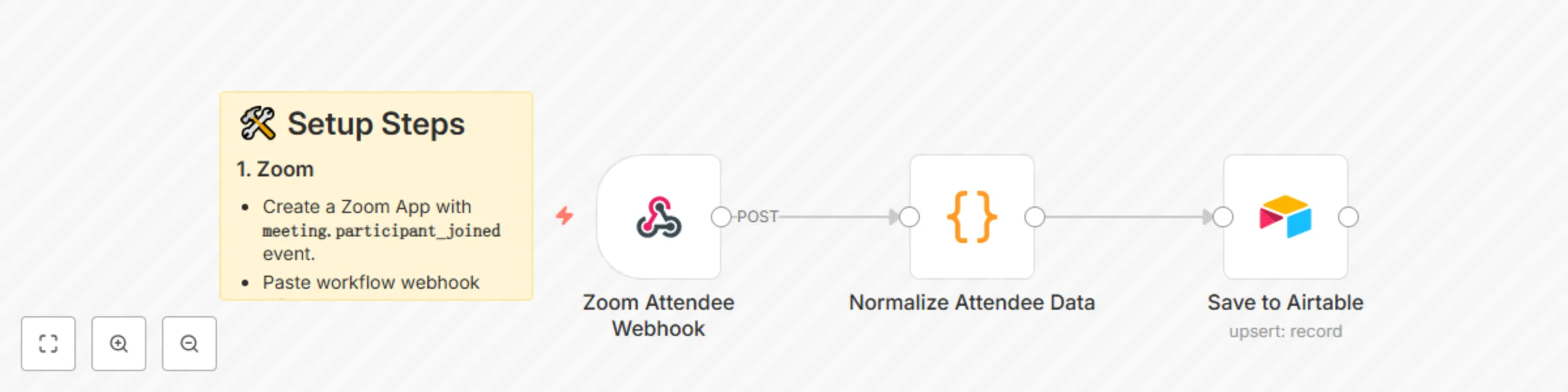

Auto-create Airtable CRM records for Zoom attendees

🗂️ Auto Create Airtable CRM Records for Zoom Attendees This workflow automatically logs every Zoom meeting attendee...

Personalized follow-up emails for Zoom attendees with GPT-4 and Gmail

📧 Auto-Send AI Follow-Up Emails to Zoom Attendees. This workflow automatically emails personalized follow-ups to ever...

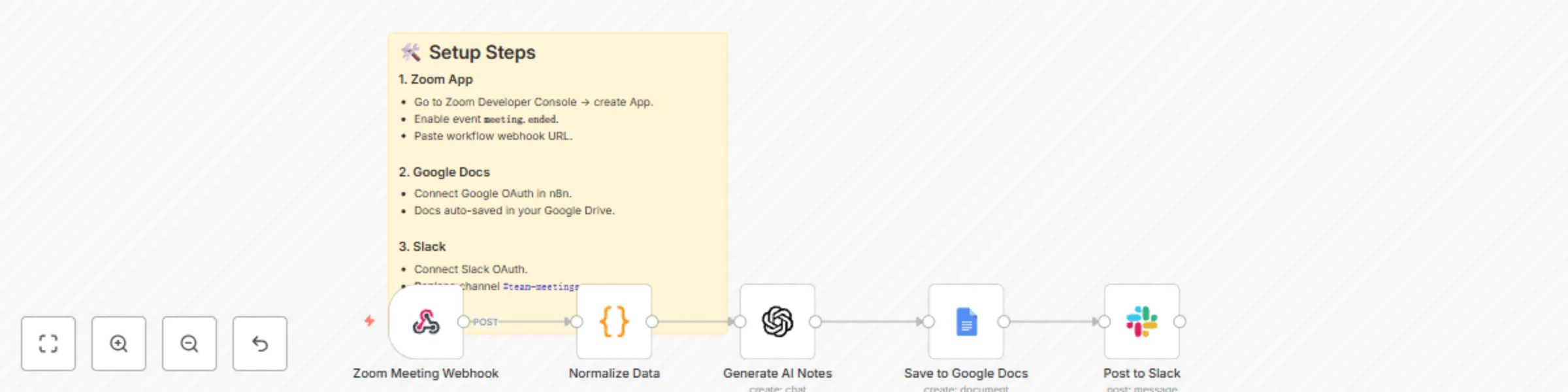

Generate AI meeting notes from Zoom with GPT-4, Google Docs & Slack

📝 Auto-Generate Meeting Notes & Summaries (Zoom → Google Docs + Slack). This workflow automatically captures Zoom mee...

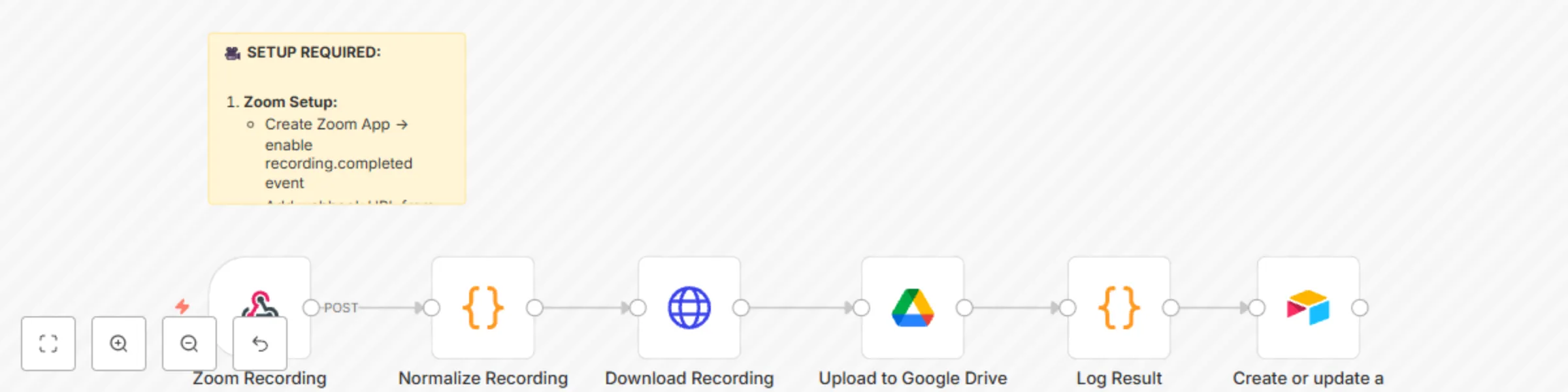

Auto-save Zoom recordings to Google Drive + log meetings in Airtable

🎥 Auto-Save Zoom Recordings to Google Drive + Log Meetings in Airtable. This workflow automatically saves Zoom meetin...

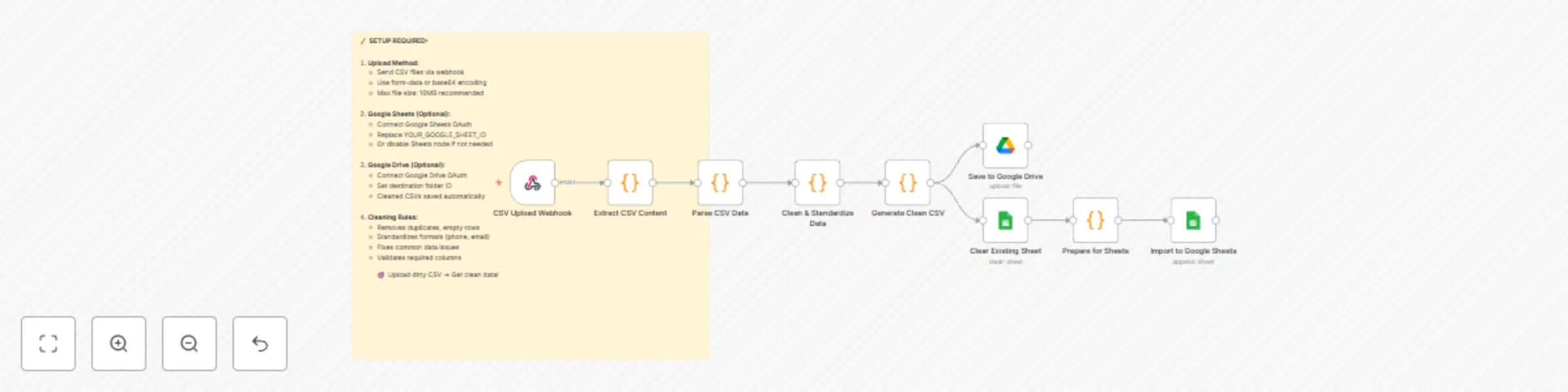

Clean & standardize CSV uploads for Google Sheets and Drive import

🧹 Auto-Clean CSV Uploads Before Import. This workflow automatically cleans, validates, and standardizes any CSV file...

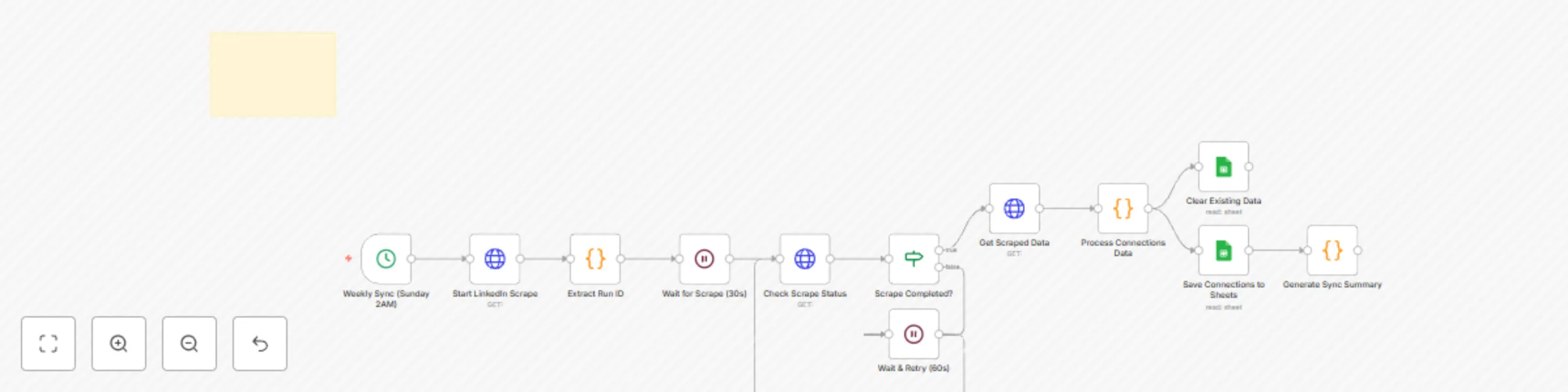

Weekly LinkedIn connections sync & analysis with Apify and Google Sheets

💼 Auto-Sync LinkedIn Connections to Google Sheets (Apify + n8n). This workflow automatically scrapes your LinkedIn co...

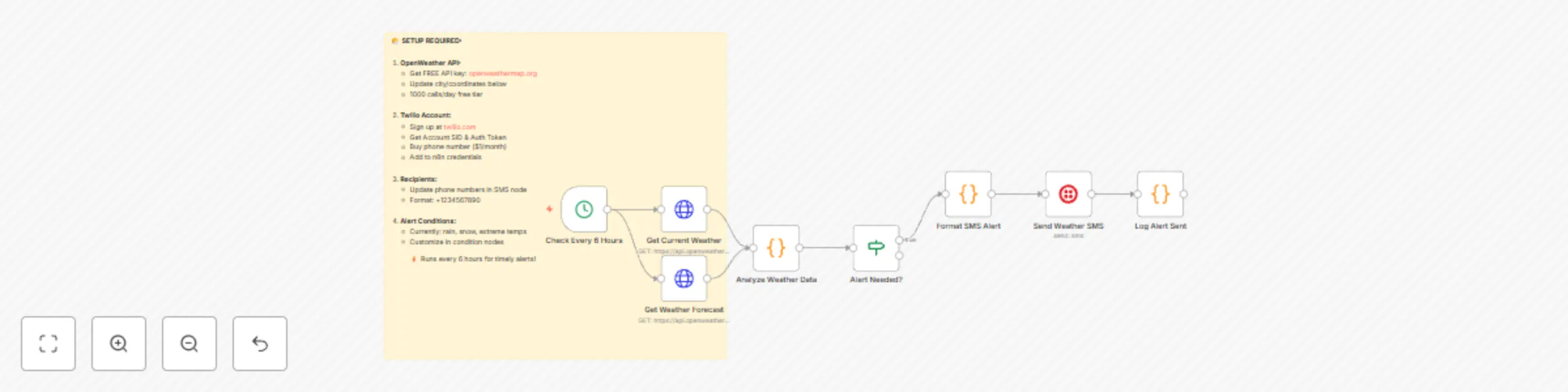

Weather alerts via SMS (OpenWeather + Twilio)

🌤️ Weather Alerts via SMS (OpenWeather + Twilio) This workflow checks the current weather and forecast every 6 hours...

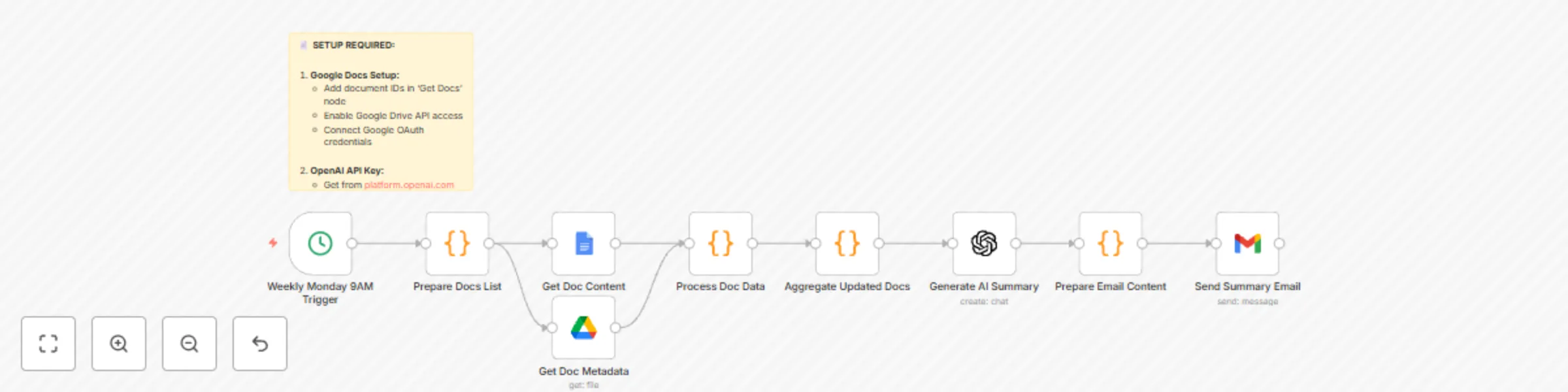

Generate weekly document digests from Google Docs with GPT-4 and email delivery

📄 AI-Summarize Weekly Google Docs Updates → Send Email. This workflow automatically reviews selected Google Docs ever...

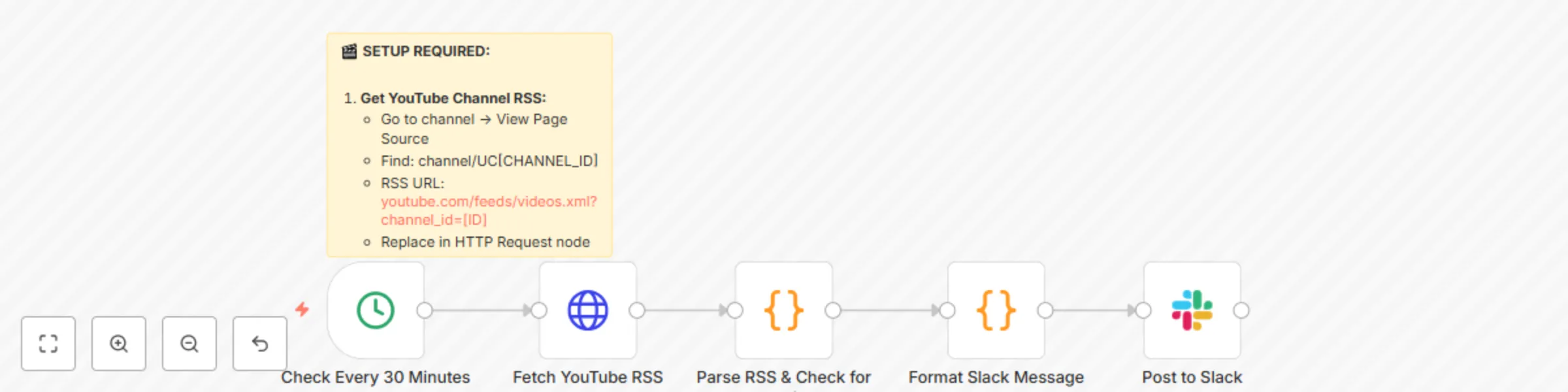

Automate YouTube video notifications to Slack

🎬 YouTube New Video → Auto-Post Link to Slack. This workflow automatically checks your YouTube channel’s RSS feed eve...

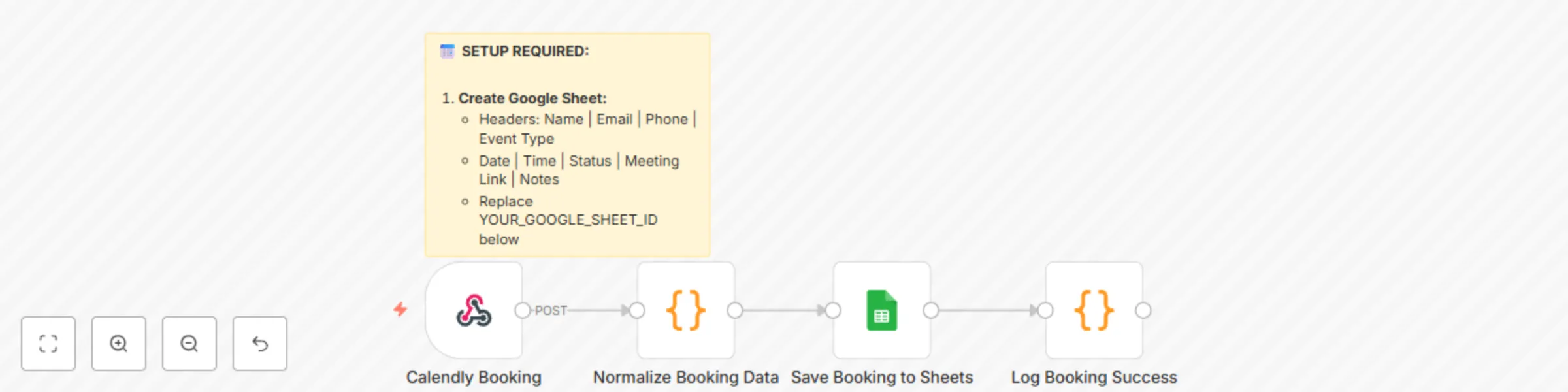

Auto-add new Calendly bookings to Google Sheets

📅 Auto-Log Calendly Bookings to Google Sheets. This workflow automatically captures new Calendly bookings and saves t...

Auto-translate incoming Gmail emails to English with OpenAI GPT-3.5

🌍 Auto-Translate Incoming Emails to English. This workflow automatically detects the language of every new Gmail emai...

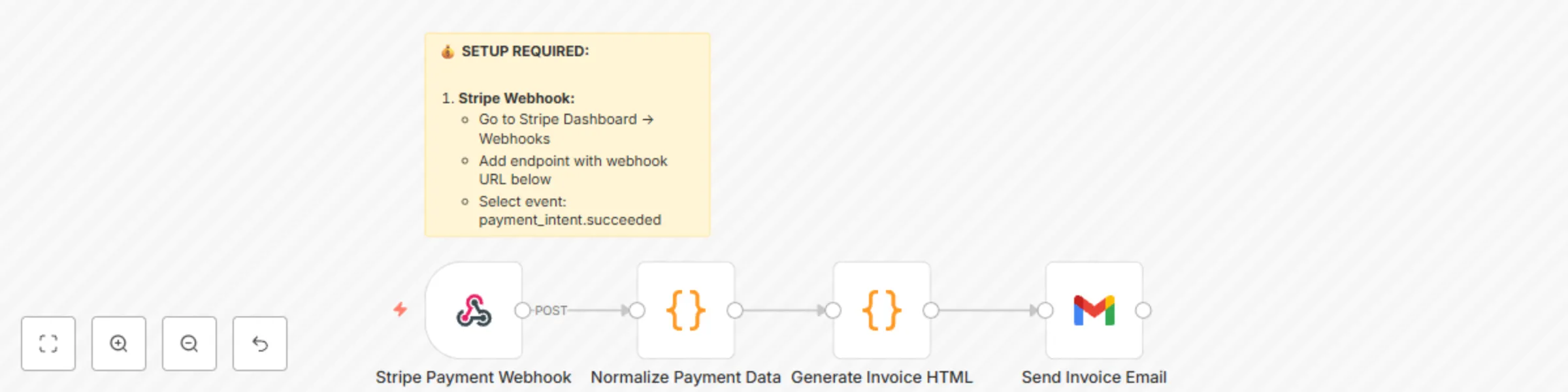

Auto-send PDF invoices with Stripe payment triggers and Gmail

💰 Auto-Send PDF Invoice When Stripe Payment Is Received. This workflow automatically generates a PDF invoice every ti...