Chris Jadama

3 Workflows

Workflows by Chris Jadama

Free advanced

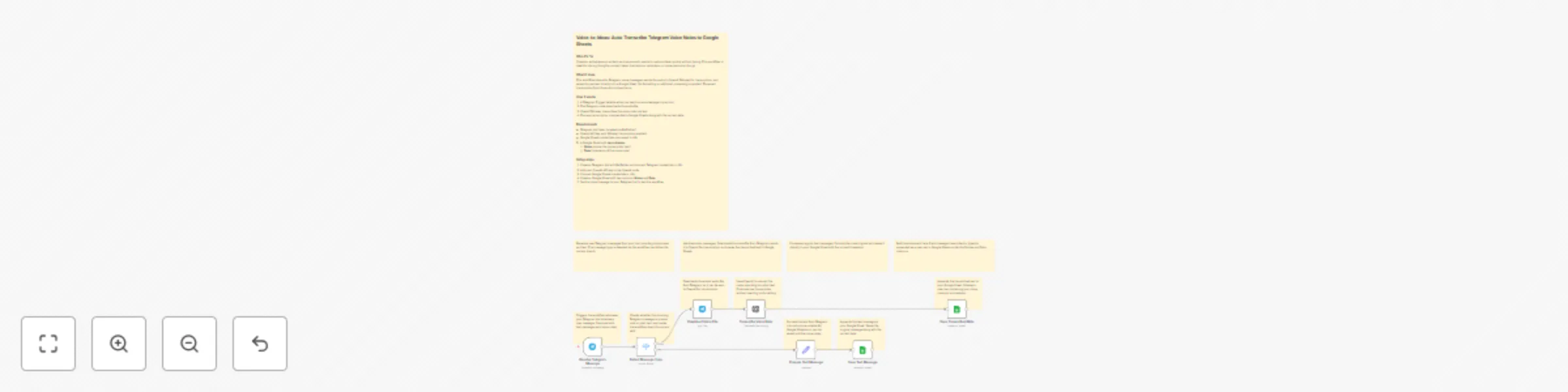

Voice-to-Ideas: Transcribe Telegram Voice Notes with OpenAI Whisper to Google Sheets

Voice to Ideas: Auto-Transcribe Telegram Voice Notes to Google Sheets. Who it's for: Creators, entrepreneurs, writers,...

Chris Jadama | Personal Productivity

14 Nov 2025

Free advanced

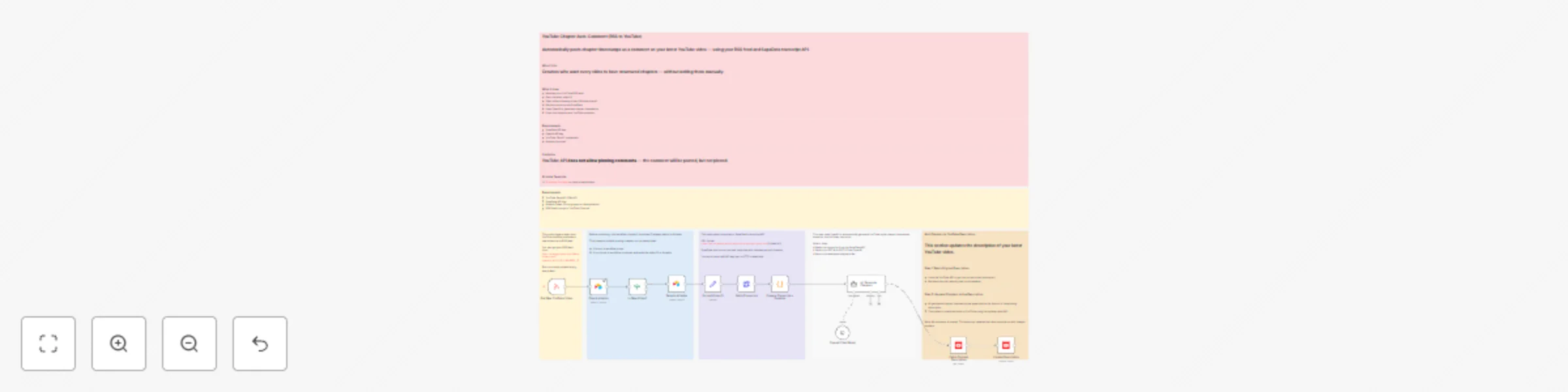

Generate YouTube chapter timestamps with GPT and SupaData transcripts

YouTube Chapter Auto Description with AI. This n8n template automatically adds structured timestamp chapters to your l...

Chris Jadama | Content Creation

11 Nov 2025

Free advanced

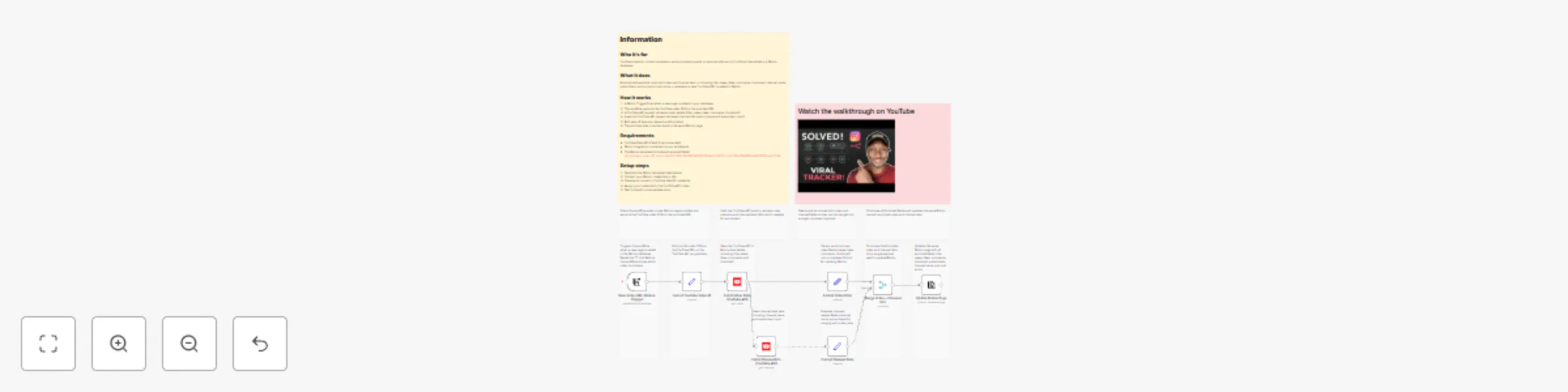

Auto-track YouTube stats & channel data in Notion database

Who it's for: YouTube creators, content marketers, and anyone who wants to automatically enrich YouTube links added to...

Chris Jadama | Market Research

11 Nov 2025
