vinci-king-01

Workflows by vinci-king-01

Sync your HRIS employee directory with Microsoft Teams, Coda, and Slack

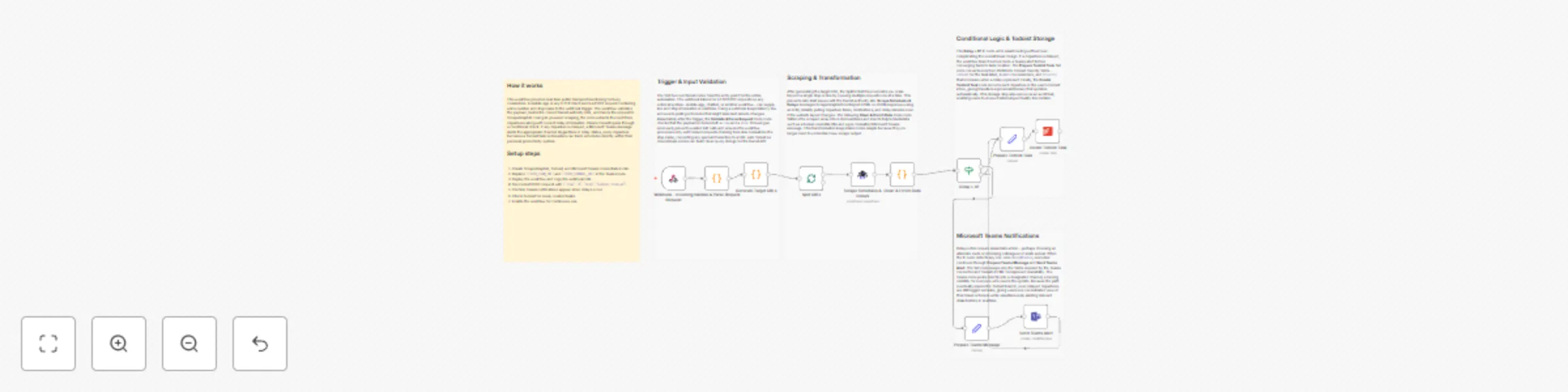

# Employee Directory Sync – Microsoft Teams & Coda

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow keeps your employee directory perfectly synchronized across your HRIS (or any REST-compatible HR database), Microsoft Teams, Coda docs, and Slack channels. It automatically polls the HR system on a schedule, detects additions or updates, and propagates those changes to downstream tools so everyone always has the latest employee information.

## Pre-conditions/Requirements

### Prerequisites

- An active n8n instance (self-hosted or n8n cloud)
- ScrapeGraphAI community node installed
- A reachable HRIS API (BambooHR, Workday, Personio, or any custom REST endpoint)
- Existing Microsoft Teams workspace and a team/channel for announcements
- A Coda account with an employee directory table
- A Slack workspace and channel where directory updates will be posted

### Required Credentials

- **Microsoft Teams OAuth2** – To post adaptive cards or messages
- **Coda API Token** – To insert/update rows in your Coda doc
- **Slack OAuth2** – To push notifications into a Slack channel
- **HTTP Basic / Bearer Token** – For your HRIS REST endpoint
- **ScrapeGraphAI API Key** – (Only required if you scrape public profile data)

### HRIS Field Mapping

| HRIS Field   | Coda Column  | Teams/Slack Field |
|--------------|--------------|-------------------|
| `firstName`  | `First Name` | First Name        |
| `lastName`   | `Last Name`  | Last Name         |
| `email`      | `Email`      | Email             |
| `title`      | `Job Title`  | Job Title         |
| `department` | `Department` | Department        |

*(Adjust the mapping in the Set and Code nodes as needed.)*

## How it works

This workflow keeps your employee directory perfectly synchronized across your HRIS (or any REST-compatible HR database), Microsoft Teams, Coda docs, and Slack channels.
It automatically polls the HR system on a schedule, detects additions or updates, and propagates those changes to downstream tools so everyone always has the latest employee information.

## Key Steps:

- **Schedule Trigger**: Fires daily (or at your chosen interval) to start the sync routine.
- **HTTP Request**: Fetches the full list of employees from your HRIS API.
- **Code (Delta Detector)**: Compares fetched data with a cached snapshot to identify new hires, departures, or updates.
- **IF Node**: Branches based on whether changes were detected.
- **Split In Batches**: Processes employees in manageable sets to respect API rate limits.
- **Set Node**: Maps HRIS fields to Coda columns and Teams/Slack message fields.
- **Coda Node**: Upserts rows in the employee directory table.
- **Microsoft Teams Node**: Posts an adaptive card summarizing changes to a selected channel.
- **Slack Node**: Sends a formatted message with the same update.
- **Sticky Note**: Provides inline documentation within the workflow for maintainers.

## Set up steps

**Setup Time: 10-15 minutes**

1. **Import the workflow** into your n8n instance.
2. **Open Credentials** tab and create:
   - Microsoft Teams OAuth2 credential.
   - Coda API credential.
   - Slack OAuth2 credential.
   - HRIS HTTP credential (Basic or Bearer).
3. **Configure the HRIS HTTP Request node**
   - Replace the placeholder URL with your HRIS endpoint (e.g., `https://api.yourhr.com/v1/employees`).
   - Add query parameters or headers as required by your HRIS.
4. **Map Coda Doc & Table IDs** in the Coda node.
5. **Select Teams & Slack channels** in their respective nodes.
6. **Adjust the Schedule Trigger** to your desired frequency.
7. **Optional**: Edit the Code node to tweak field mapping or add custom delta-comparison logic.
8. **Execute the workflow manually once** to verify proper end-to-end operation.
9. **Activate** the workflow.
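
The delta-detection step described above could be sketched roughly as follows. This is a minimal illustration, not the template's actual Code node: the function name `detectChanges`, the `TRACKED_FIELDS` list, and the choice of `id` as the comparison key are all assumptions made for the example.

```javascript
// Hypothetical sketch of a delta detector; names and key choice are assumptions.
// Fields compared when deciding whether an existing employee was updated.
const TRACKED_FIELDS = ['firstName', 'lastName', 'email', 'title', 'department'];

// Compares the previous snapshot with the freshly fetched employee list.
function detectChanges(previous, current) {
  const previousById = new Map(previous.map((e) => [e.id, e]));
  const currentIds = new Set(current.map((e) => e.id));

  const added = [];
  const updated = [];
  for (const employee of current) {
    const before = previousById.get(employee.id);
    if (!before) {
      added.push(employee); // not in the snapshot: new hire
    } else if (TRACKED_FIELDS.some((f) => before[f] !== employee[f])) {
      updated.push(employee); // at least one tracked field differs
    }
  }
  // In the snapshot but missing from the current list: departure
  const removed = previous.filter((e) => !currentIds.has(e.id));

  const hasChanges = added.length + updated.length + removed.length > 0;
  return { added, updated, removed, hasChanges };
}
```

Inside an n8n Code node, the previous snapshot would typically be read from and written back to `$getWorkflowStaticData('global')`, and `hasChanges` is the kind of flag the IF node could branch on.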
## Node Descriptions

### Core Workflow Nodes:

- **Schedule Trigger** – Initiates the sync routine at set intervals.
- **HTTP Request (Get Employees)** – Pulls the latest employee list from the HRIS.
- **Code (Delta Detector)** – Stores the previous run’s data in workflow static data and identifies changes.
- **IF (Has Changes?)** – Skips downstream steps when no changes were detected, saving resources.
- **Split In Batches** – Iterates through employees in chunks (default 50) to avoid API throttling.
- **Set (Field Mapper)** – Renames and restructures data for Coda, Teams, and Slack.
- **Coda (Upsert Rows)** – Inserts new rows or updates existing ones based on email match.
- **Microsoft Teams (Post Message)** – Sends a rich adaptive card with the update summary.
- **Slack (Post Message)** – Delivers a concise change log to a Slack channel.
- **Sticky Note** – Embedded documentation for quick reference.

### Data Flow:

1. **Schedule Trigger** → **HTTP Request** → **Code (Delta Detector)**
2. **Code** → **IF (Has Changes?)**
   - If **No** → **End**
   - If **Yes** → **Split In Batches** → **Set** → **Coda** → **Teams** → **Slack**

## Customization Examples

### Change Sync Frequency

```javascript
// Inside Schedule Trigger
{
  "mode": "everyDay",
  "hour": 6,
  "minute": 0
}
```

### Extend Field Mapping

```javascript
// Inside the Code node (mode: run once for all items)
// Copy extra HRIS fields onto every item, defaulting to an empty string.
for (const item of items) {
  item.json.phone = item.json.phoneNumber ?? '';
  item.json.location = item.json.officeLocation ?? '';
}
return items;
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "employee": {
    "id": "123",
    "firstName": "Jane",
    "lastName": "Doe",
    "email": "[email protected]",
    "title": "Senior Engineer",
    "department": "R&D",
    "status": "New Hire",
    "syncedAt": "2024-05-08T10:15:23.000Z"
  },
  "destination": {
    "codaRowId": "row_abc123",
    "teamsMessageId": "msg_987654",
    "slackTs": "1715158523.000200"
  }
}
```

## Troubleshooting

### Common Issues

1. **HTTP 401 from HRIS API** – Verify token validity and that the credential is attached to the HTTP Request node.
2. **Coda duplicates rows** – Ensure the key column in Coda is set to “Email” and the Upsert option is enabled.

### Performance Tips

- Cache HRIS responses in static data to minimize API calls.
- Increase the Split In Batches size only if your API rate limits allow.

**Pro Tips:**

- Use n8n’s built-in Version Control to track mapping changes over time.
- Add a second IF node to differentiate between “new hires” and “updates” for tailored announcements.
- Enable Slack’s “threaded replies” to keep your #hr-updates channel tidy.

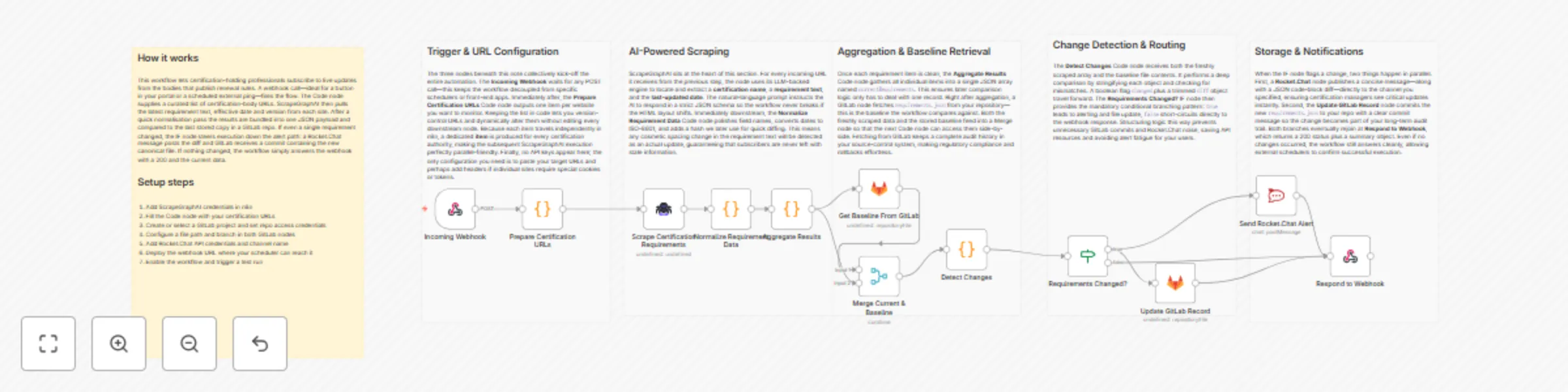

Track certification requirements with ScrapeGraphAI, GitLab and Rocket.Chat

# Certification Requirement Tracker with Rocket.Chat and GitLab

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow automatically monitors websites of certification bodies and industry associations, detects changes in certification requirements, commits the updated information to a GitLab repository, and notifies a Rocket.Chat channel. Ideal for professionals and compliance teams who must stay ahead of annual updates and renewal deadlines.

## Pre-conditions/Requirements

### Prerequisites

- Running n8n instance (self-hosted or n8n.cloud)
- ScrapeGraphAI community node installed and active
- Rocket.Chat workspace (self-hosted or cloud)
- GitLab account and repository for documentation
- Publicly reachable URL for incoming webhooks (use n8n tunnel, Ngrok, or a reverse proxy)

### Required Credentials

- **ScrapeGraphAI API Key** – Enables scraping of certification pages
- **Rocket.Chat Access Token & Server URL** – To post update messages
- **GitLab Personal Access Token** – With `api` and `write_repository` scopes

### Specific Setup Requirements

| Item                           | Example Value                             | Notes                                 |
| ------------------------------ | ----------------------------------------- | ------------------------------------- |
| GitLab Repo                    | `gitlab.com/company/cert-tracker`         | Markdown files will be committed here |
| Rocket.Chat Channel            | `#certification-updates`                  | Receives update alerts                |
| Certification Source URLs file | `/data/sourceList.json` in the repository | List of URLs to scrape                |

## How it works

This workflow automatically monitors websites of certification bodies and industry associations, detects changes in certification requirements, commits the updated information to a GitLab repository, and notifies a Rocket.Chat channel.
Ideal for professionals and compliance teams who must stay ahead of annual updates and renewal deadlines.

## Key Steps:

- **Webhook Trigger**: Fires on a scheduled HTTP call (e.g., via cron) or manual trigger.
- **Code (Prepare Source List)**: Reads/constructs a list of certification URLs to scrape.
- **ScrapeGraphAI**: Fetches HTML content and extracts requirement sections.
- **Merge**: Combines newly scraped data with the last committed snapshot.
- **IF Node**: Determines if a change occurred (hash/length comparison).
- **GitLab**: Creates a branch, commits updated Markdown/JSON files, and opens an MR (optional).
- **Rocket.Chat**: Posts a message summarizing changes and linking to the GitLab diff.
- **Respond to Webhook**: Returns a JSON summary to the requester (useful for monitoring or chained automations).

## Set up steps

**Setup Time: 20-30 minutes**

1. **Install Community Node**: In n8n UI, go to *Settings → Community Nodes* and install `n8n-nodes-scrapegraphai`.
2. **Create Credentials**:
   - ScrapeGraphAI – paste your API key.
   - Rocket.Chat – create a personal access token (`Personal Access Tokens → New Token`) and configure credentials.
   - GitLab – create PAT with `api` + `write_repository` scopes and add to n8n.
3. **Clone the Template**: Import this workflow JSON into your n8n instance.
4. **Edit StickyNote**: Replace placeholder URLs with actual certification-source URLs or point to a repo file.
5. **Configure GitLab Node**: Set your repository, default branch, and commit message template.
6. **Configure Rocket.Chat Node**: Select credential, channel, and message template (markdown supported).
7. **Expose Webhook**: If self-hosting, enable n8n tunnel or configure reverse proxy to make the webhook public.
8. **Test Run**: Trigger the workflow manually; verify GitLab commit/MR and Rocket.Chat notification.
9. **Automate**: Schedule an external cron (or n8n Cron node) to `POST` to the webhook yearly, quarterly, or monthly as needed.
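
The hash comparison mentioned for the IF node could look something like the sketch below. The template does not say which hash it uses, so a small FNV-1a style hash over UTF-16 code units is swapped in here purely for illustration (it is not cryptographic). The function names and the `snapshot` shape (URL mapped to the last committed hash) are assumptions.

```javascript
// Hypothetical sketch of hash-based change detection; not the template's code.
// FNV-1a style 32-bit hash over UTF-16 code units: small and dependency-free,
// but NOT cryptographic.
function fnv1a(text) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return (hash >>> 0).toString(16).padStart(8, '0');
}

// Collapse whitespace so cosmetic re-formatting does not count as a change.
function normalize(text) {
  return text.replace(/\s+/g, ' ').trim();
}

// `scraped` is an array of { url, content }; `snapshot` maps url -> last hash.
function findChangedPages(scraped, snapshot) {
  const changed = [];
  for (const page of scraped) {
    const hash = fnv1a(normalize(page.content));
    if (snapshot[page.url] !== hash) {
      const isNew = !Object.prototype.hasOwnProperty.call(snapshot, page.url);
      changed.push({ url: page.url, hash, isNew });
    }
  }
  return changed;
}
```

An empty result corresponds to the "no changes" branch; a non-empty one carries the new hashes that would be committed alongside the updated files.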
## Node Descriptions

### Core Workflow Nodes:

- **stickyNote** – Human-readable instructions/documentation embedded in the flow.
- **webhook** – Entry point; accepts `POST /cert-tracker` requests.
- **code (Prepare Source List)** – Generates an array of URLs; can pull from GitLab or an environment variable.
- **scrapegraphAi** – Scrapes each URL and extracts certification requirement sections using CSS/XPath selectors.
- **merge (by key)** – Joins new data with previous snapshot for change detection.
- **if (Changes?)** – Branches logic based on whether differences exist.
- **gitlab** – Creates/updates files and opens merge requests containing new requirements.
- **rocketchat** – Sends formatted update to designated channel.
- **respondToWebhook** – Returns `200 OK` with a JSON summary.

### Data Flow:

1. **webhook** → **code** → **scrapegraphAi** → **merge** → **if**
2. **if (true)** → **gitlab** → **rocketchat**
3. **if (false)** → **respondToWebhook**

## Customization Examples

### Change Scraping Frequency

```javascript
// Replace external cron with n8n Cron node
{
  "nodes": [
    {
      "name": "Cron",
      "type": "n8n-nodes-base.cron",
      "parameters": {
        "schedule": {
          "hour": "0",
          "minute": "0",
          "dayOfMonth": "1"
        }
      }
    }
  ]
}
```

### Extend Notification Message

```javascript
// Rocket.Chat node → Message field
// Field names match the Data Output Format below.
const diffUrl = $json["gitlab_diff_url"];
const count = $json["changesCount"];
return `:bell: **${count} Certification Requirement Update(s)**\n\nView diff: ${diffUrl}`;
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "timestamp": "2024-05-15T12:00:00Z",
  "changesDetected": true,
  "changesCount": 3,
  "gitlab_commit_sha": "a1b2c3d4",
  "gitlab_diff_url": "https://gitlab.com/company/cert-tracker/-/merge_requests/42",
  "notifiedChannel": "#certification-updates"
}
```

## Troubleshooting

### Common Issues

1. **ScrapeGraphAI returns empty results** – Verify your CSS/XPath selectors and API key quota.
2. **GitLab commit fails (401 Unauthorized)** – Ensure PAT has `api` and `write_repository` scopes and is not expired.

### Performance Tips

- Limit the number of pages scraped per run to avoid API rate limits.
- Cache last-scraped HTML in an S3 bucket or database to reduce redundant requests.

**Pro Tips:**

- Use GitLab CI to auto-deploy documentation site whenever new certification files are merged.
- Enable Rocket.Chat threading to keep discussions organized per update.
- Tag stakeholders in Rocket.Chat messages with `@cert-team` for instant visibility.

Aggregate commercial property listings with ScrapeGraphAI, Baserow and Teams

# Property Listing Aggregator with Microsoft Teams and Baserow

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow automatically aggregates commercial real-estate listings from multiple broker and marketplace websites, stores the fresh data in Baserow, and pushes weekly availability alerts to Microsoft Teams. Ideal for business owners searching for new retail or office space, it runs on a timetable, scrapes property details, de-duplicates existing entries, and notifies your team of only the newest opportunities.

## Pre-conditions/Requirements

### Prerequisites

- An n8n instance (self-hosted or n8n.cloud)
- ScrapeGraphAI community node installed
- A Baserow workspace & table prepared to store property data
- A Microsoft Teams channel with an incoming webhook URL
- List of target real-estate URLs (CSV, JSON, or hard-coded array)

### Required Credentials

- **ScrapeGraphAI API Key** – Enables headless scraping of listing pages
- **Baserow Personal API Token** – Grants create/read access to your property table
- **Microsoft Teams Webhook URL** – Allows posting messages to your channel

### Baserow Table Schema

| Column Name  | Type   | Notes                           |
|--------------|--------|---------------------------------|
| `listing_id` | Text   | Unique ID or URL slug (primary) |
| `title`      | Text   | Listing headline                |
| `price`      | Number | Monthly or annual rent          |
| `sq_ft`      | Number | Size in square feet             |
| `location`   | Text   | City / neighborhood             |
| `url`        | URL    | Original listing link           |
| `scraped`    | Date   | Timestamp of last scrape        |

## How it works

This workflow automatically aggregates commercial real-estate listings from multiple broker and marketplace websites, stores the fresh data in Baserow, and pushes weekly availability alerts to Microsoft Teams.
Ideal for business owners searching for new retail or office space, it runs on a timetable, scrapes property details, de-duplicates existing entries, and notifies your team of only the newest opportunities.

## Key Steps:

- **Schedule Trigger**: Fires every week (or on demand) to start the aggregation cycle.
- **Load URL List (Code node)**: Returns an array of listing or search-result URLs to be scraped.
- **Split In Batches**: Processes URLs in manageable groups to avoid rate-limits.
- **ScrapeGraphAI**: Extracts title, price, size, and location from each page.
- **Merge**: Reassembles batches into a single dataset.
- **IF Node**: Checks each listing against Baserow to detect new vs. existing entries.
- **Baserow**: Inserts only brand-new listings into the table.
- **Set Node**: Formats a concise Teams message with key details.
- **Microsoft Teams**: Sends the alert to your designated channel.

## Set up steps

**Setup Time: 15-20 minutes**

1. **Install ScrapeGraphAI node**: In n8n, go to “Settings → Community Nodes”, search for “n8n-nodes-scrapegraphai” and install.
2. **Create Baserow table**: Follow the schema above. Copy your Personal API Token from Baserow profile settings.
3. **Generate Teams webhook**: In Microsoft Teams, open channel → “Connectors” → “Incoming Webhook”, name it, and copy the URL.
4. **Open the workflow** in n8n and set the following credentials:
   - ScrapeGraphAI API Key
   - Baserow token (Baserow node)
   - Teams webhook (Microsoft Teams node)
5. **Define target URLs**: Edit the “Load URL List” Code node and add your marketplace or broker URLs.
6. **Adjust schedule**: Double-click the “Schedule Trigger” and set the cron expression (default: weekly Monday 08:00).
7. **Test-run** the workflow manually to verify scraping and data insertion.
8. **Activate** the workflow once results look correct.

## Node Descriptions

### Core Workflow Nodes:

- **stickyNote** – Provides inline documentation and reminders inside the canvas.
- **Schedule Trigger** – Triggers the workflow on a weekly cron schedule.
- **Code** – Holds an array of URLs and can implement dynamic logic (e.g., API calls to get URLs).
- **SplitInBatches** – Splits URL list into configurable batch sizes (default: 5) to stay polite.
- **ScrapeGraphAI** – Scrapes each URL and returns structured JSON for price, size, etc.
- **Merge** – Combines batch outputs back into one array.
- **IF** – Performs existence check against Baserow’s `listing_id` to prevent duplicates.
- **Baserow** – Writes new records or updates existing ones.
- **Set** – Builds a human-readable message string for Teams.
- **Microsoft Teams** – Posts the summary into your channel.

### Data Flow:

1. Schedule Trigger → Code → Split In Batches → ScrapeGraphAI → Merge → IF → Baserow
2. IF (new listings) → Set → Microsoft Teams

## Customization Examples

### Add additional data points (e.g., number of parking spaces)

```javascript
// In ScrapeGraphAI "Selectors" field
{
  "title": ".listing-title",
  "price": ".price",
  "sq_ft": ".size",
  "parking": ".parking span" // new selector
}
```

### Change Teams message formatting

```javascript
// In Set node
// Teams renders Markdown, so the link uses [label](url) rather than Slack's <url|label>.
return items.map(item => {
  const l = item.json;
  item.json = {
    text: `🏢 *${l.title}* — ${l.price} USD\n📍 ${l.location} | ${l.sq_ft} ft²\n🔗 [View Listing](${l.url})`
  };
  return item;
});
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "listing_id": "12345-main-street-suite-200",
  "title": "Downtown Office Space – Suite 200",
  "price": 4500,
  "sq_ft": 2300,
  "location": "Austin, TX",
  "url": "https://broker.com/listings/12345",
  "scraped": "2024-05-01T08:00:00.000Z"
}
```

## Troubleshooting

### Common Issues

1. **ScrapeGraphAI returns empty fields** – Update CSS selectors or switch to XPath; run in headless:true mode.
2. **Duplicate records still appear** – Ensure `listing_id` is truly unique (use URL slug) and that the IF node compares correctly.
3. **Teams message not delivered** – Verify webhook URL and that the Teams connector is enabled for the channel.

### Performance Tips

- Reduce batch size if websites block rapid requests.
- Cache previous URLs to skip unchanged search-result pages.

**Pro Tips:**

- Rotate proxies in ScrapeGraphAI for larger scraping volumes.
- Use environment variables for credentials to simplify migrations.
- Add a second Schedule Trigger for daily “hot deal” checks by duplicating the workflow and narrowing the URL list.
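
The de-duplication step of this template (keeping only listings whose `listing_id` is not yet in Baserow) might be sketched as below. Both helper names are hypothetical, and deriving the ID from the URL path is just one possible reading of the "use URL slug" advice, not the template's confirmed logic.

```javascript
// Hypothetical helpers; names and slug rules are assumptions for illustration.
// Builds a slug from the URL path, ignoring the host and any query string.
function listingIdFromUrl(url) {
  const { pathname } = new URL(url);
  return pathname
    .replace(/^\/+|\/+$/g, '')       // trim leading/trailing slashes
    .replace(/[^a-zA-Z0-9]+/g, '-')  // collapse other characters into dashes
    .toLowerCase();
}

// Returns only listings whose listing_id is not already stored.
// `existingIds` would come from a Baserow lookup in the real workflow.
function selectNewListings(scraped, existingIds) {
  const seen = new Set(existingIds);
  const fresh = [];
  for (const listing of scraped) {
    const id = listing.listing_id || listingIdFromUrl(listing.url);
    if (seen.has(id)) continue; // already stored, or repeated in this run
    seen.add(id);
    fresh.push({ ...listing, listing_id: id });
  }
  return fresh;
}
```

Adding each accepted ID to `seen` also drops duplicates that appear twice within the same scrape, which a pure Baserow lookup would miss.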

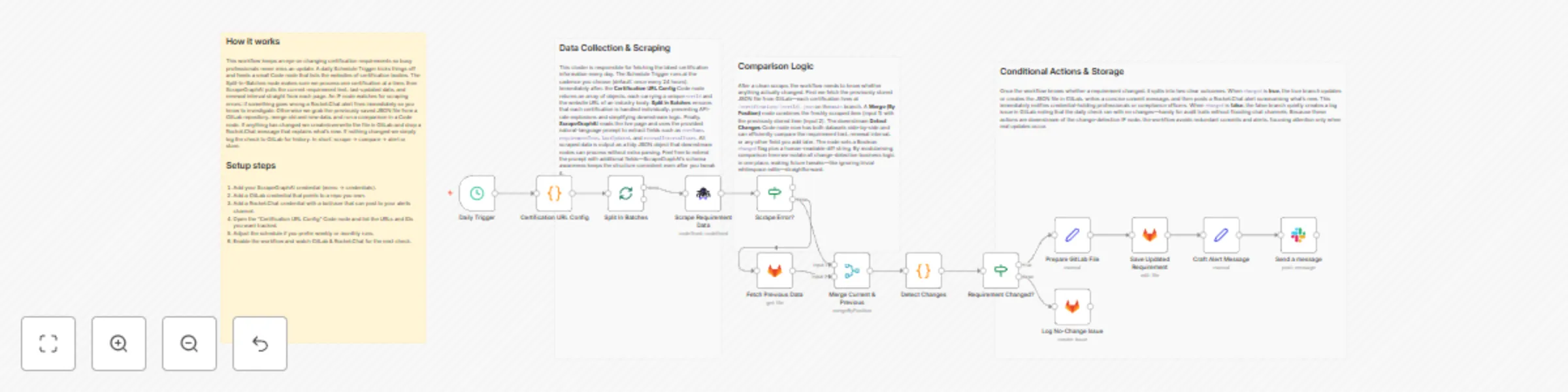

Automatically track certification changes with ScrapeGraphAI, GitLab and Rocket.Chat

# Certification Requirement Tracker with Rocket.Chat and GitLab

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow automatically scrapes certification-issuing bodies once a year, detects any changes in certification or renewal requirements, creates a GitLab issue for the responsible team, and notifies the relevant channel in Rocket.Chat. It helps professionals and compliance teams stay ahead of changing industry requirements and never miss a renewal.

## Pre-conditions/Requirements

### Prerequisites

- An n8n instance (self-hosted or n8n.cloud)
- ScrapeGraphAI community node installed and activated
- Rocket.Chat workspace with Incoming Webhook or user credentials
- GitLab account with at least one repository and a Personal Access Token (PAT)
- Access URLs for all certification bodies or industry associations you want to monitor

### Required Credentials

- **ScrapeGraphAI API Key** – Enables web scraping services
- **Rocket.Chat Credentials** – Either:
  - Webhook URL, or
  - Username & Password / Personal Access Token
- **GitLab Personal Access Token** – To create issues and comments via API

### Specific Setup Requirements

| Service       | Requirement                                   | Example/Notes                                |
| ------------- | --------------------------------------------- | -------------------------------------------- |
| Rocket.Chat   | Incoming Webhook URL OR user credentials      | `https://chat.example.com/hooks/abc123…`     |
| GitLab        | Personal Access Token with `api` scope        | Generate at **Settings → Access Tokens**     |
| ScrapeGraphAI | Domain whitelist (if running behind firewall) | Allow outbound HTTPS traffic to target sites |
| Cron Schedule | Annual (default) or custom interval           | `0 0 1 1 *` for 1-Jan every year             |

## How it works

This workflow automatically scrapes certification-issuing bodies once a year,
detects any changes in certification or renewal requirements, creates a GitLab issue for the responsible team, and notifies the relevant channel in Rocket.Chat. It helps professionals and compliance teams stay ahead of changing industry requirements and never miss a renewal.

## Key Steps:

- **Scheduled Trigger**: Fires annually (or any chosen interval) to start the check.
- **Set Node – URL List**: Stores an array of certification-body URLs to scrape.
- **Split in Batches**: Iterates over the URLs in batches so each one is scraped in turn.
- **ScrapeGraphAI**: Extracts requirement text, effective dates, and renewal info.
- **Code Node – Diff Checker**: Compares the newly scraped data with last year’s GitLab issue (if any) to detect changes.
- **IF Node – Requirements Changed?**: Routes the flow based on change detection.
- **GitLab – Create/Update Issue**: Opens a new issue or comments on an existing one with details of the change.
- **Rocket.Chat – Notify Channel**: Sends a message summarizing any changes and linking to the GitLab issue.
- **Merge Node**: Collects all branch results for a final summary report.

## Set up steps

**Setup Time: 15-25 minutes**

1. **Install Community Node**: In n8n, navigate to **Settings → Community Nodes** and install “ScrapeGraphAI”.
2. **Add Credentials**:
   - In **Credentials**, create “ScrapeGraphAI API”.
   - Add your Rocket.Chat Webhook or PAT.
   - Add your GitLab PAT with `api` scope.
3. **Import Workflow**: Copy the JSON template into n8n (**Workflows → Import**).
4. **Configure URL List**: Open the **Set – URL List** node and replace the sample array with real certification URLs.
5. **Adjust Cron Expression**: Double-click the **Schedule Trigger** node and set your desired frequency.
6. **Customize Rocket.Chat Channel**: In the **Rocket.Chat – Notify** node, set the `channel` or use an incoming webhook.
7. **Run Once for Testing**: Execute the workflow manually to ensure issues and notifications are created as expected.
8. **Activate Workflow**: Toggle **Activate** so the schedule starts running automatically.

## Node Descriptions

### Core Workflow Nodes:

- **stickyNote – Workflow Notes**: Contains a high-level diagram and documentation inside the editor.
- **Schedule Trigger** – Initiates the yearly check.
- **Set (URL List)** – Holds certification body URLs and meta info.
- **SplitInBatches** – Iterates through each URL in manageable chunks.
- **ScrapeGraphAI** – Scrapes each certification page and returns structured JSON.
- **Code (Diff Checker)** – Compares the current scrape with historical data.
- **If – Requirements Changed?** – Switches path based on diff result.
- **GitLab** – Creates or updates issues, attaches JSON diff, sets labels (`certification`, `renewal`).
- **Rocket.Chat** – Posts a summary message with links to the GitLab issue(s).
- **Merge** – Consolidates batch results for final logging.
- **Set (Success)** – Formats a concise success payload.

### Data Flow:

1. **Schedule Trigger** → **Set (URL List)** → **SplitInBatches** → **ScrapeGraphAI** → **Code (Diff Checker)** → **If** → **GitLab / Rocket.Chat** → **Merge**

## Customization Examples

### Add Additional Metadata to GitLab Issue

```javascript
// Inside the GitLab "Create Issue" node ↗️
// $now is interpolated with ${...} because the value is a JavaScript template literal.
{
  "title": `Certification Update: ${$json.domain}`,
  "description": `**What's Changed?**\n${$json.diff}\n\n_Last checked: ${$now}_`,
  "labels": "certification,compliance," + $json.industry
}
```

### Customize Rocket.Chat Message Formatting

```javascript
// Rocket.Chat node → JSON parameters
{
  "text": `:bell: *Certification Update Detected*\n>*${$json.domain}*\n>See the GitLab issue: ${$json.issueUrl}`
}
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "domain": "example-cert-body.org",
  "scrapeDate": "2024-01-01T00:00:00Z",
  "oldRequirements": "Original text …",
  "newRequirements": "Updated text …",
  "diff": "- Continuous education hours increased from 20 to 24\n- Fee changed to $200",
  "issueUrl": "https://gitlab.com/org/compliance/-/issues/42",
  "notification": "sent"
}
```

## Troubleshooting

### Common Issues

1. **No data returned from ScrapeGraphAI** – Confirm the target site is publicly accessible and not blocking bots. Whitelist the domain or add proper headers via ScrapeGraphAI options.
2. **GitLab issue not created** – Check that the PAT has `api` scope and the project ID is correct in the GitLab node.
3. **Rocket.Chat message fails** – Verify webhook URL or credentials and ensure the channel exists.

### Performance Tips

- Limit the batch size in **SplitInBatches** to avoid API rate limits.
- Schedule the workflow during off-peak hours to minimize load.

**Pro Tips:**

- Store last-year scrapes in a dedicated GitLab repository to create a complete change log history.
- Use n8n’s built-in **Execution History Pruning** to keep the database slim.
- Add an **Error Trigger** workflow to notify you if any step fails.

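The Diff Checker step in the certification tracker above is only described in prose, so here is one way it could work. This is a simple set-based line comparison, not a full sequence diff (reordered lines are treated as unchanged), and the function name and return shape are assumptions rather than the template's actual Code node.

```javascript
// Hypothetical sketch of a line-level diff checker; not the template's code.
// Reports lines that exist only in the old text (-) or only in the new text (+).
function diffRequirements(oldText, newText) {
  // Split into trimmed, non-empty lines.
  const toLines = (text) =>
    text.split('\n').map((line) => line.trim()).filter(Boolean);

  const oldLines = toLines(oldText);
  const newLines = toLines(newText);
  const oldSet = new Set(oldLines);
  const newSet = new Set(newLines);

  const removed = oldLines.filter((line) => !newSet.has(line));
  const added = newLines.filter((line) => !oldSet.has(line));

  return {
    changed: removed.length > 0 || added.length > 0,
    diff: [
      ...removed.map((line) => `- ${line}`),
      ...added.map((line) => `+ ${line}`),
    ].join('\n'),
  };
}
```

The `changed` flag is the kind of value the "Requirements Changed?" node could branch on, and `diff` could feed the issue description.
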
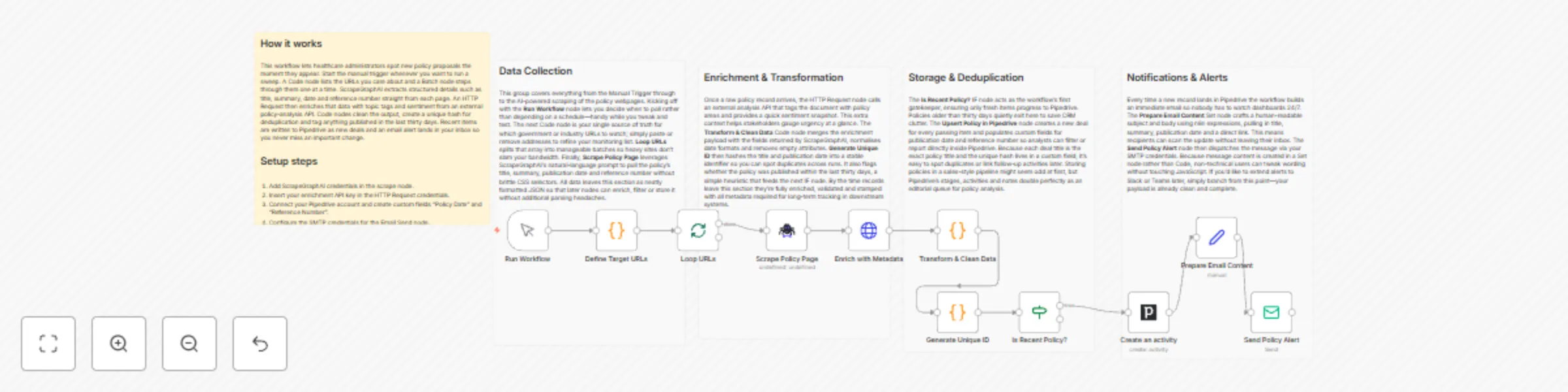

Healthcare policy monitoring with ScrapeGraphAI, Pipedrive and email alerts

# Medical Research Tracker with Email and Pipedrive

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow automatically scans authoritative healthcare policy websites for new research, bills, or regulatory changes, stores relevant findings in Pipedrive, and immediately notifies key stakeholders via email. It is ideal for healthcare administrators and policy analysts who need to stay ahead of emerging legislation or guidance that could impact clinical operations, compliance, and strategy.

## Pre-conditions/Requirements

### Prerequisites

- n8n instance (self-hosted or n8n cloud)
- ScrapeGraphAI community node installed
- Pipedrive account and API token
- SMTP credentials (or native n8n Email credentials) for sending alerts
- List of target URLs or RSS feeds from government or healthcare policy organizations
- Basic familiarity with n8n credential setup

### Required Credentials

| Service                 | Credential Name | Purpose                       |
|-------------------------|-----------------|-------------------------------|
| ScrapeGraphAI           | API Key         | Perform web scraping          |
| Pipedrive               | API Token       | Create / update deals & notes |
| Email (SMTP/Nodemailer) | SMTP creds      | Send alert emails             |

### Environment Variables (optional)

| Variable               | Example Value               | Description                            |
|------------------------|-----------------------------|----------------------------------------|
| N8N_DEFAULT_EMAIL_FROM | [email protected]           | Default sender for Email Send node     |
| POLICY_KEYWORDS        | telehealth, Medicare, HIPAA | Comma-separated keywords for filtering |

## How it works

This workflow automatically scans authoritative healthcare policy websites for new research, bills, or regulatory changes, stores relevant findings in Pipedrive, and immediately notifies key stakeholders via email.
It is ideal for healthcare administrators and policy analysts who need to stay ahead of emerging legislation or guidance that could impact clinical operations, compliance, and strategy.

## Key Steps:

- **Manual Trigger**: Starts the workflow on demand; replace it with a Cron node to run on a schedule.
- **Set → URL List**: Defines the list of healthcare policy pages or RSS feeds to scrape.
- **Split In Batches**: Iterates through each URL so scraping happens sequentially.
- **ScrapeGraphAI**: Extracts headlines, publication dates, and links.
- **Code (Filter & Normalize)**: Removes duplicates, standardizes JSON structure, and applies keyword filters.
- **HTTP Request**: Optionally enriches data with summary content using external APIs (e.g., OpenAI, SummarizeBot).
- **If Node**: Checks if the policy item is new (not already logged in Pipedrive).
- **Pipedrive**: Creates a new deal or note for tracking and collaboration.
- **Email Send**: Sends an alert to compliance or leadership teams with the policy summary.
- **Sticky Note**: Provides inline documentation inside the workflow.

## Set up steps

**Setup Time: 15–20 minutes**

1. **Install ScrapeGraphAI**: In n8n, go to “Settings → Community Nodes” and install `n8n-nodes-scrapegraphai`.
2. **Create Credentials**:
   a. Pipedrive → “API Token” from your Pipedrive settings → add in n8n.
   b. ScrapeGraphAI → obtain API key → add as credential.
   c. Email SMTP → configure sender details in n8n.
3. **Import Workflow**: Copy the JSON template into n8n (“Import from clipboard”).
4. **Update URL List**: Open the initial Set node and replace placeholder URLs with the sites you monitor (e.g., `cms.gov`, `nih.gov`, `who.int`, state health departments).
5. **Define Keywords (optional)**:
   a. Open the Code node “Filter & Normalize”.
   b. Adjust the `const keywords = [...]` array to match topics you care about.
6. **Test Run**: Trigger manually; verify that:
   - Scraped items appear in the execution logs.
   - New deals/notes show up in Pipedrive.
   - Alert email lands in your inbox.
7. **Schedule**: Add a Cron node (e.g., every 6 hours) in place of Manual Trigger for automated execution.

## Node Descriptions

### Core Workflow Nodes:

- **Manual Trigger** – Launches the workflow on demand.
- **Set – URL List** – Holds an array of target policy URLs/RSS feeds.
- **Split In Batches** – Processes each URL one at a time to avoid rate limiting.
- **ScrapeGraphAI** – Scrapes page content and parses structured data.
- **Code – Filter & Normalize** – Cleans results, removes duplicates, applies keyword filter.
- **HTTP Request – Summarize** – Calls a summarization API (optional).
- **If – Duplicate Check** – Queries Pipedrive to see if the policy item already exists.
- **Pipedrive (Deal/Note)** – Logs a new deal or adds a note with policy details.
- **Email Send – Alert** – Notifies subscribed stakeholders.
- **Sticky Note** – Embedded instructions inside the canvas.

### Data Flow:

1. **Manual Trigger** → **Set (URLs)** → **Split In Batches** → **ScrapeGraphAI** → **Code (Filter)** → **If (Duplicate?)** → **Pipedrive** → **Email Send**

## Customization Examples

### 1. Add Slack notifications

```javascript
// Insert after Email Send
{
  "node": "Slack",
  "parameters": {
    "channel": "#policy-alerts",
    "text": `New policy update: ${$json["title"]} - ${$json["url"]}`
  }
}
```

### 2. Use different CRM (HubSpot)

```javascript
// Replace Pipedrive node config
{
  "resource": "deal",
  "operation": "create",
  "title": $json["title"],
  "properties": {
    "dealstage": "appointmentscheduled",
    "description": $json["summary"]
  }
}
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "title": "Telehealth Expansion Act of 2024",
  "date": "2024-05-30",
  "url": "https://www.congress.gov/bill/118th-congress-house-bill/1234",
  "summary": "This bill proposes expanding Medicare reimbursement for telehealth services...",
  "source": "congress.gov",
  "status": "new"
}
```

## Troubleshooting

### Common Issues

1. **Empty Scrape Results** – Check if the target site uses JavaScript rendering; ScrapeGraphAI may need a headless browser option enabled.
2. **Duplicate Deals in Pipedrive** – Ensure the “If – Duplicate Check” node compares a unique field (e.g., URL or title) before creating a new deal.

### Performance Tips

- Limit batch size to avoid API rate limits.
- Cache or store the last scraped timestamp to skip unchanged pages.

**Pro Tips:**

- Combine this workflow with an n8n “Cron” or “Webhook” trigger for fully automated monitoring.
- Use environment variables for keywords and email recipients to avoid editing nodes each time.
- Leverage Pipedrive’s automations to notify additional teams (e.g., legal) when high-priority items are logged.
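
To make the “Filter & Normalize” step more concrete, here is a minimal sketch of what such a Code node might do. The function name `filterAndNormalize`, the choice of the URL as the duplicate key, and matching keywords against the title and summary are assumptions for illustration, not the template's actual code.

```javascript
// Hypothetical sketch of a "Filter & Normalize" step (not the template's real code).
// Assumes each scraped item has at least `title` and an absolute `url`.
function filterAndNormalize(rawItems, keywords = []) {
  const wanted = keywords.map((k) => k.toLowerCase());
  const seenUrls = new Set(); // URL is assumed to be the unique key
  const result = [];

  for (const raw of rawItems) {
    const title = String(raw.title ?? "").trim();
    const url = String(raw.url ?? "").trim();
    if (!title || !url || seenUrls.has(url)) continue; // drop incomplete or duplicate items

    // Keyword filter: with an empty keyword list, every item passes.
    const text = `${title} ${raw.summary ?? ""}`.toLowerCase();
    if (wanted.length > 0 && !wanted.some((k) => text.includes(k))) continue;

    seenUrls.add(url);
    result.push({
      title,
      date: raw.date ?? null,
      url,
      summary: raw.summary ?? "",
      source: new URL(url).hostname.replace(/^www\./, ""),
      status: "new",
    });
  }
  return result;
}
```

Inside an n8n Code node, the same function could be applied to the incoming items and its result mapped back to `{ json: ... }` objects; how the keyword list is supplied is left open here.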

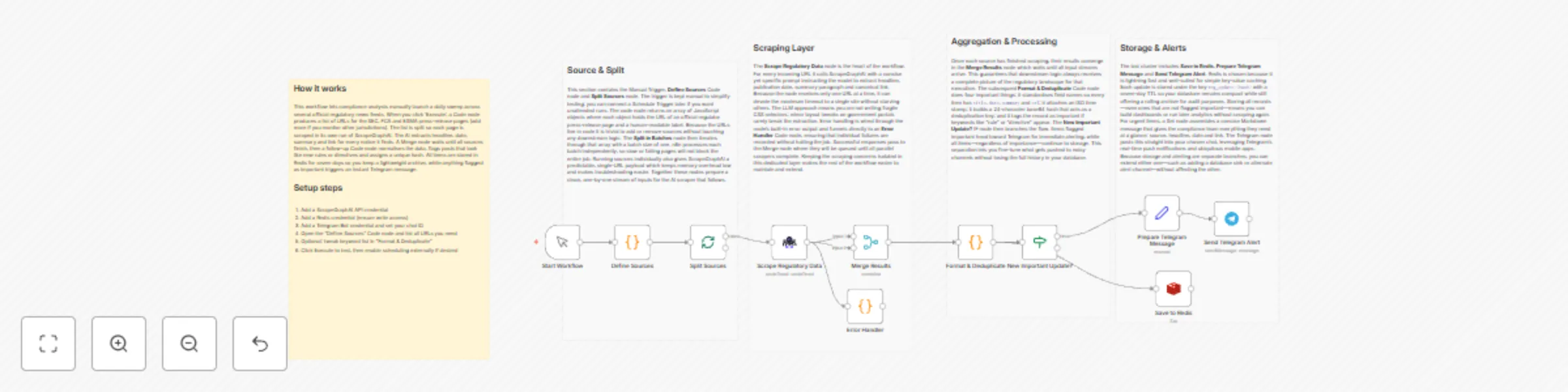

Monitor regulatory updates with ScrapeGraphAI and send alerts via Telegram

# Breaking News Aggregator with Telegram and Redis

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow monitors selected government websites, regulatory bodies, and legal-news portals for new or amended regulations relevant to specific industries. It scrapes the latest headlines, compares them against previously recorded items in Redis, and pushes real-time compliance alerts to a Telegram channel or chat.

## Pre-conditions/Requirements

### Prerequisites

- n8n instance (self-hosted or cloud)
- ScrapeGraphAI community node installed
- Redis server accessible from n8n
- Telegram Bot created via BotFather
- (Optional) Cron node if you want fully automated scheduling instead of manual trigger

### Required Credentials

- **ScrapeGraphAI API Key** – Enables ScrapeGraphAI scraping functionality
- **Telegram Bot Token** – Allows n8n to send messages via your bot
- **Redis Credentials** – Host, port, and (if set) password for your Redis instance

### Redis Setup Requirements

| Key Name | Description | Example |
|----------|-------------|---------|
| `latestRegIds` | Redis Set used to store hashes/IDs of the most recent regulatory articles processed | `latestRegIds` |

> Hint: Use a dedicated Redis DB (e.g., DB 1) to keep workflow data isolated from other applications.

## How it works

This workflow monitors selected government websites, regulatory bodies, and legal-news portals for new or amended regulations relevant to specific industries. It scrapes the latest headlines, compares them against previously recorded items in Redis, and pushes real-time compliance alerts to a Telegram channel or chat.

## Key Steps:

- **Manual Trigger / Cron**: Starts the workflow manually or on a set schedule (e.g., daily at 06:00 UTC).
- **Code (Define Sources)**: Returns an array of URL objects pointing to regulatory pages to monitor.
- **SplitInBatches**: Iterates through each source URL in manageable chunks.
- **ScrapeGraphAI**: Extracts article titles, publication dates, and article URLs from each page.
- **Merge (Combine Results)**: Consolidates scraped items into a single stream.
- **If (Deduplication Check)**: Verifies whether each article ID already exists in Redis.
- **Set (Format Message)**: Creates a human-readable Telegram message string.
- **Telegram**: Sends the formatted compliance alert to your chosen chat/channel.
- **Redis (Add New IDs)**: Stores the article ID so it is not sent again in the future.
- **Sticky Note**: Provides inline documentation inside the workflow canvas.

## Set up steps

**Setup Time: 10-15 minutes**

1. **Install community nodes**: In n8n, go to *Settings → Community Nodes* and install `n8n-nodes-scrapegraphai`.
2. **Create credentials**:
   a. Telegram → *Credentials → Telegram API* → paste your bot token.
   b. Redis → *Credentials → Redis* → fill host, port, password, DB.
   c. ScrapeGraphAI → *Credentials → ScrapeGraphAI API* → enter your key.
3. **Configure the “Define Sources” Code node**: Replace the placeholder URLs with the regulatory pages you need to monitor.
4. **Update Telegram chat ID**: Open any chat with your bot and use `https://api.telegram.org/bot<token>/getUpdates` to find the `chat.id`. Insert this value in the Telegram node.
5. **Adjust frequency**: Replace the Manual Trigger with a Cron node (e.g., daily 06:00 UTC).
6. **Test the workflow**: Execute once manually; confirm messages appear in Telegram and that Redis keys are created.
7. **Activate**: Enable the workflow so it runs automatically according to your schedule.

## Node Descriptions

### Core Workflow Nodes:

- **Manual Trigger** – Allows on-demand execution during development/testing.
- **Code (Define Sources)** – Returns an array of page URLs and meta info to the workflow.
- **SplitInBatches** – Prevents overloading websites by scraping in controlled groups.
- **ScrapeGraphAI** – Performs the actual web scraping using an AI-assisted parser.
- **Merge** – Merges data streams from multiple batches into one.
- **If (Check Redis)** – Filters out already-processed articles using Redis SET membership.
- **Set** – Shapes output into a user-friendly Telegram message.
- **Telegram** – Delivers compliance alerts to stakeholders in real time.
- **Redis** – Persists article IDs to avoid duplicate notifications.
- **Sticky Note** – Contains usage tips directly on the canvas.

### Data Flow:

1. **Manual Trigger** → **Code (Define Sources)** → **SplitInBatches** → **ScrapeGraphAI**
2. **ScrapeGraphAI** → **Merge** → **If (Check Redis)**
3. **If (true: article not yet in Redis)** → **Set** → **Telegram** → **Redis**

## Customization Examples

### Change industries or keywords

```javascript
// Code node snippet
return [
  { url: "https://regulator.gov/energy-updates", industry: "Energy", keywords: ["renewable", "grid", "tariff"] },
  { url: "https://financewatch.gov/financial-rules", industry: "Finance", keywords: ["AML", "KYC", "cryptocurrency"] }
];
```

### Modify Telegram message formatting

```javascript
// Code node placed before the Telegram node: build the message for every item
for (const item of items) {
  const { industry, title, date, url } = item.json;
  item.json.message = `🛡️ *${industry} Regulation Update*\n\n*${title}*\n${date}\n${url}`;
}
return items;
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "title": "EU Proposes New ESG Disclosure Rules",
  "date": "2024-04-18",
  "url": "https://europa.eu/legal/eu-proposes-esg-disclosure",
  "industry": "Finance"
}
```

## Troubleshooting

### Common Issues

1. **Empty scraped data** – Verify CSS selectors/XPath in the ScrapeGraphAI node; website structure may have changed.
2. **Duplicate alerts** – Ensure Redis credentials point to the same DB across nodes; otherwise IDs are not shared.

### Performance Tips

- Limit `SplitInBatches` to 2-3 URLs at a time if sites implement rate limiting.
- Use environment variables for credentials to simplify migration between stages.

**Pro Tips:**

- Combine this workflow with n8n’s **Error Trigger** to log failures to Slack or email.
- Maintain the list of source URLs in Google Sheets and fetch it dynamically via the Google Sheets node.
- Pair with the **Webhook** node to let team members add new sources on the fly.
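
The deduplication step can be sketched as follows. This is an illustration under stated assumptions: the helper names `articleId` and `selectNew` are hypothetical, the ID is derived from the URL's host and path (the template only says "hashes/IDs"), and an in-memory `Set` stands in for the `latestRegIds` Redis set.

```javascript
// Hypothetical helper: derive a stable ID from an article URL.
// Assumption: host + path identifies an article; query string and fragment are ignored.
function articleId(url) {
  const parsed = new URL(url);
  const path = parsed.pathname.replace(/\/+$/, ""); // drop trailing slashes
  return `${parsed.hostname}${path}`;
}

// Hypothetical helper: keep only articles whose ID has not been seen yet,
// and record the new IDs. `seenIds` is a plain Set standing in for Redis.
function selectNew(articles, seenIds) {
  const fresh = [];
  for (const article of articles) {
    const id = articleId(article.url);
    if (seenIds.has(id)) continue; // already alerted on this article
    seenIds.add(id);
    fresh.push({ ...article, id });
  }
  return fresh;
}
```

In the workflow itself, the membership check and the write are handled by the If and Redis nodes; the sketch only shows why a normalized ID matters, since two URLs that differ by a trailing slash or tracking parameter would otherwise produce duplicate alerts.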

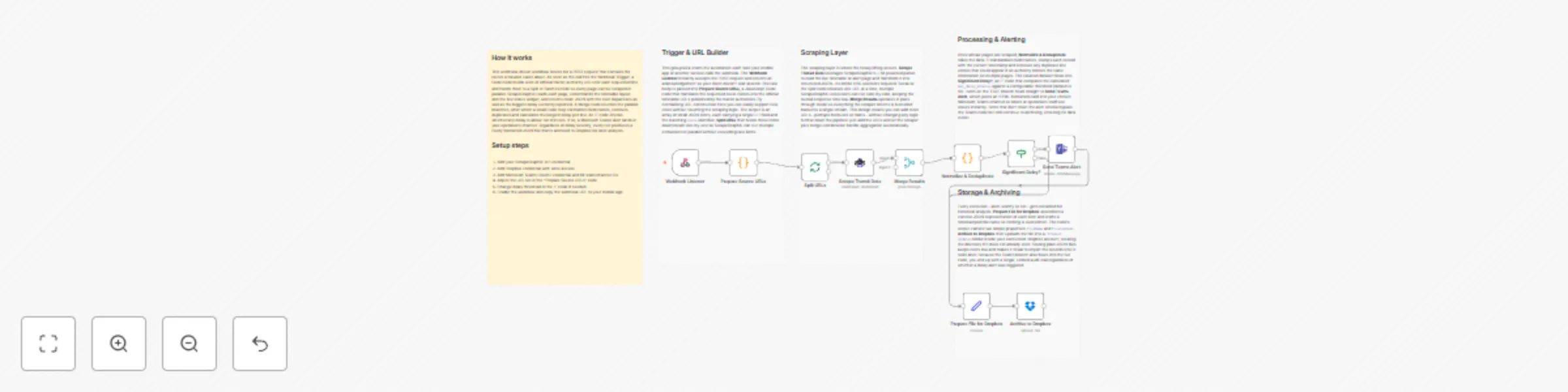

Track & alert public transport delays using ScrapeGraphAI, Teams and Todoist

# Public Transport Delay Tracker with Microsoft Teams and Todoist

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow continuously monitors public-transportation websites and apps for real-time schedule changes and delays, then posts an alert to a Microsoft Teams channel and creates a follow-up task in Todoist. It is ideal for commuters or travel coordinators who need instant, actionable updates about transit disruptions.

## Pre-conditions/Requirements

### Prerequisites

- An n8n instance (self-hosted or n8n cloud)
- ScrapeGraphAI community node installed
- Microsoft Teams account with permission to create an Incoming Webhook
- Todoist account with at least one project
- Access to target transit authority websites or APIs

### Required Credentials

- **ScrapeGraphAI API Key** – Enables scraping of transit data
- **Microsoft Teams Webhook URL** – Sends messages to a specific channel
- **Todoist API Token** – Creates follow-up tasks
- *(Optional)* Transit API key if you are using a protected data source

### Specific Setup Requirements

| Resource | What you need |
|----------|---------------|
| Teams Channel | Create a channel → Add “Incoming Webhook” → copy the URL |
| Todoist Project | Create “Transit Alerts” project and note its Project ID |
| Transit URLs/APIs | Confirm the URLs/pages contain the schedule & delay elements |

## How it works

This workflow continuously monitors public-transportation websites and apps for real-time schedule changes and delays, then posts an alert to a Microsoft Teams channel and creates a follow-up task in Todoist. It is ideal for commuters or travel coordinators who need instant, actionable updates about transit disruptions.

## Key Steps:

- **Webhook (Trigger)**: Starts the workflow via an HTTP call, for example from an external scheduler.
- **Set Node**: Defines target transit URLs and parsing rules.
- **ScrapeGraphAI Node**: Scrapes live schedule and delay data.
- **Code Node**: Normalizes scraped data, converts times, and flags delays.
- **IF Node**: Determines if a delay exceeds the user-defined threshold.
- **Microsoft Teams Node**: Sends formatted alert message to the selected Teams channel.
- **Todoist Node**: Creates a “Check alternate route” task with due date equal to the delayed departure time.
- **Sticky Note Node**: Holds a blueprint-level explanation for future editors.

## Set up steps

**Setup Time: 15–20 minutes**

1. **Install community node**: In n8n, go to “Settings → Community Nodes” → “Install” → search for “ScrapeGraphAI” → install and restart n8n.
2. **Create Teams webhook**: In Microsoft Teams, open target channel → “Connectors” → “Incoming Webhook” → give it a name/icon → copy the URL.
3. **Create Todoist API token**: Todoist → Settings → Integrations → copy your personal API token.
4. **Add credentials in n8n**: Settings → Credentials → create new for ScrapeGraphAI, Microsoft Teams, and Todoist.
5. **Import workflow template**: File → Import Workflow JSON → select this template.
6. **Configure Set node**: Replace example transit URLs with those of your local transit authority.
7. **Adjust delay threshold**: In the Code node, edit `const MAX_DELAY_MINUTES = 5;` as needed.
8. **Activate workflow**: Toggle “Active”. Monitor executions to ensure messages and tasks are created.

## Node Descriptions

### Core Workflow Nodes:

- **Webhook** – Triggers the workflow on an external HTTP request (which a scheduler can send periodically).
- **Set** – Supplies list of URLs and scraping selectors.
- **ScrapeGraphAI** – Scrapes timetable, status, and delay indicators.
- **Code** – Parses results, converts to minutes, and builds payloads.
- **IF** – Compares delay duration to threshold.
- **Microsoft Teams** – Posts formatted adaptive-card-style message.
- **Todoist** – Adds a task with priority and due date.
- **Sticky Note** – Internal documentation inside the workflow canvas.

### Data Flow:

1. **Webhook** → **Set** → **ScrapeGraphAI** → **Code** → **IF**
   a. **IF (true branch)** → **Microsoft Teams** → **Todoist**
   b. **IF (false branch)** → (workflow ends)

## Customization Examples

### Change alert message formatting

```javascript
// In the Code node
const item = $input.first().json; // the delay record to report
const message = `⚠️ Delay Alert:
Route: ${item.route}
Expected: ${item.scheduled}
New Time: ${item.newTime}
Delay: ${item.delay} min
Link: ${item.url}`;
return [{ json: { message } }];
```

### Post to multiple Teams channels

```javascript
// Duplicate the Microsoft Teams node and reference a different credential
items.forEach(item => {
  item.json.webhookUrl = $node["Set"].json["secondaryChannelWebhook"];
});
return items;
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "route": "Blue Line",
  "scheduled": "2024-12-01T14:25:00Z",
  "newTime": "2024-12-01T14:45:00Z",
  "delay": 20,
  "status": "Delayed",
  "url": "https://transit.example.com/blue-line/status"
}
```

## Troubleshooting

### Common Issues

1. **Scraping returns empty data** – Verify CSS selectors/XPath in the Set node and ensure the target site hasn’t changed its markup.
2. **Teams message not sent** – Check that the stored webhook URL is correct and the connector is still active.
3. **Todoist task duplicated** – Add a unique key (e.g., route + timestamp) to avoid inserting duplicates.

### Performance Tips

- Limit the number of URLs per execution when monitoring many routes.
- Cache previous scrape results to avoid hitting site rate limits.

**Pro Tips:**

- Use n8n’s built-in Cron instead of Webhook if you only need periodic polling.
- Add a SplitInBatches node after scraping to process large route lists incrementally.
- Enable execution logging to an external database for detailed audit trails.
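
As an illustration of the delay calculation performed in the Code node, the sketch below derives the `delay` field (in minutes) from the `scheduled` and `newTime` timestamps shown in the output format and compares it to `MAX_DELAY_MINUTES`. The function name `withDelay` and the `"On time"` status label are assumptions; the template only shows `"Delayed"`.

```javascript
// Threshold named in the setup steps; adjust as needed.
const MAX_DELAY_MINUTES = 5;

// Hypothetical helper: compute the delay in whole minutes and flag the record.
// Assumes `scheduled` and `newTime` are ISO 8601 timestamps.
function withDelay(record, maxDelayMinutes = MAX_DELAY_MINUTES) {
  const scheduledMs = Date.parse(record.scheduled);
  const actualMs = Date.parse(record.newTime);
  const delay = Math.round((actualMs - scheduledMs) / 60000); // ms -> minutes
  return {
    ...record,
    delay,
    // "On time" is an assumed label for records at or below the threshold.
    status: delay > maxDelayMinutes ? "Delayed" : "On time",
  };
}
```

The IF node can then branch on `delay` or `status`; keeping the threshold in one constant means the Code node and the alert text cannot drift apart.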

Automate commercial real estate monitoring with ScrapeGraphAI, Notion and Mailchimp

# Property Listing Aggregator with Mailchimp and Notion

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow scrapes multiple commercial-real-estate websites, consolidates new property listings into Notion, and emails weekly availability updates or immediate space alerts to a Mailchimp audience. It automates the end-to-end process so business owners can stay on top of the latest spaces without manual searching.

## Pre-conditions/Requirements

### Prerequisites

- n8n instance (self-hosted or n8n.cloud)
- ScrapeGraphAI community node installed
- Active Notion workspace with permission to create/read databases
- Mailchimp account with at least one Audience list
- Basic understanding of JSON; ability to add API credentials in n8n

### Required Credentials

- **ScrapeGraphAI API Key** – Enables web scraping functionality
- **Notion OAuth2 / Integration Token** – Writes data into Notion database
- **Mailchimp API Key** – Sends campaigns and individual emails
- *(Optional)* **Proxy credentials** – If target real-estate sites block your IP

### Specific Setup Requirements

| Resource | Requirement | Example |
|----------|-------------|---------|
| Notion | Database with property fields (Address, Price, SqFt, URL, Availability) | Database ID: `abcd1234efgh` |
| Mailchimp | Audience list where alerts are sent | Audience ID: `f3a2b6c7d8` |
| ScrapeGraphAI | YAML/JSON config per site | Stored inside the ScrapeGraphAI node |

## How it works

This workflow scrapes multiple commercial-real-estate websites, consolidates new property listings into Notion, and emails weekly availability updates or immediate space alerts to a Mailchimp audience. It automates the end-to-end process so business owners can stay on top of the latest spaces without manual searching.

## Key Steps:

- **Manual Trigger / CRON**: Starts the workflow weekly or on-demand.
- **Code (Site List Builder)**: Generates an array of target URLs for ScrapeGraphAI.
- **Split In Batches**: Processes URLs in manageable groups to avoid rate limits.
- **ScrapeGraphAI**: Extracts property details from each site.
- **IF (New vs Existing)**: Checks whether the listing already exists in Notion.
- **Notion**: Inserts new listings or updates existing records.
- **Set**: Formats email content (HTML & plaintext).
- **Mailchimp**: Sends a campaign or automated alert to subscribers.
- **Sticky Notes**: Provide documentation and future-enhancement pointers.

## Set up steps

**Setup Time: 15-25 minutes**

1. **Install Community Node**
   Navigate to *Settings → Community Nodes* and install “ScrapeGraphAI”.
2. **Create Notion Integration**
   - Go to Notion *Settings → Integrations → Develop your own integration*.
   - Copy the integration token and share your target database with the integration.
3. **Add Mailchimp API Key**
   - In Mailchimp: *Account → Extras → API keys*.
   - Copy an existing key or create a new one, then add it to n8n credentials.
4. **Build Scrape Config**
   In the ScrapeGraphAI node, paste a YAML/JSON selector config for each website (address, price, sqft, url, availability).
5. **Configure the URL List**
   Open the first Code node. Replace the placeholder array with your target listing URLs.
6. **Map Notion Fields**
   Open the Notion node and map scraped fields to your database properties. Save.
7. **Design Email Template**
   In the Set node, tweak the HTML and plaintext blocks to match your brand.
8. **Test the Workflow**
   Trigger manually, check that Notion rows are created and Mailchimp sends the message.
9. **Schedule**
   Add a CRON node (weekly) or leave the Manual Trigger for ad-hoc runs.

## Node Descriptions

### Core Workflow Nodes:

- **Manual Trigger / CRON** – Kicks off the workflow either on demand or on a schedule.
- **Code (Site List Builder)** – Holds an array of commercial real-estate URLs and outputs one item per URL.
- **Split In Batches** – Prevents hitting anti-bot limits by processing URLs in groups (default: 5).
- **ScrapeGraphAI** – Crawls each URL, parses DOM with CSS/XPath selectors, returns structured JSON.
- **IF (New Listing?)** – Compares scraped listing IDs against existing Notion database rows.
- **Notion** – Creates or updates pages representing property listings.
- **Set (Email Composer)** – Builds dynamic email subject, body, and merge tags for Mailchimp.
- **Mailchimp** – Uses the *Send Campaign* endpoint to email your audience.
- **Sticky Note** – Contains inline documentation and customization reminders.

### Data Flow:

1. **Manual Trigger/CRON** → **Code (URLs)** → **Split In Batches** → **ScrapeGraphAI** → **IF (New?)**
2. True path → **Notion (Create)** → **Set (Email)** → **Mailchimp**
3. False path → *(skip)*

## Customization Examples

### Filter Listings by Maximum Budget

```javascript
// Inside the IF node (custom expression)
{{$json["price"] <= 3500}}
```

### Change Email Frequency to Daily Digests

```json
{
  "nodes": [
    {
      "name": "Daily CRON",
      "type": "n8n-nodes-base.cron",
      "parameters": {
        "triggerTimes": [
          { "hour": 8, "minute": 0 }
        ]
      }
    }
  ]
}
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "address": "123 Market St, Suite 400",
  "price": 3200,
  "sqft": 950,
  "url": "https://examplebroker.com/listing/123",
  "availability": "Immediate",
  "new": true
}
```

## Troubleshooting

### Common Issues

1. **Scraper returns empty objects** – Verify selectors in ScrapeGraphAI config; inspect the site’s HTML for changes.
2. **Duplicate entries in Notion** – Ensure the “IF” node checks a unique ID (e.g., listing URL) before creating a page.

### Performance Tips

- Reduce batch size or add delays in ScrapeGraphAI to avoid site blocking.
- Cache previously scraped URLs in an external file or database for faster runs.

**Pro Tips:**

- Rotate proxies in ScrapeGraphAI for heavily protected sites.
- Use Notion rollups to calculate total available square footage automatically.
- Leverage Mailchimp merge tags (`*|FNAME|*`) in the Set node for personalized alerts.
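
The “new vs existing” decision and the budget filter can be combined in one small function. The sketch below is only an illustration: `splitListings` is a hypothetical name, the listing URL is assumed to be the unique ID (as the troubleshooting note suggests), and `existingUrls` stands in for whatever the Notion lookup returns.

```javascript
// Hypothetical helper: separate listings to create from listings to skip.
// Assumes the listing URL uniquely identifies a property.
function splitListings(scraped, existingUrls, maxPrice = Infinity) {
  const known = new Set(existingUrls);
  const toCreate = [];
  const skipped = [];

  for (const listing of scraped) {
    const isNew = !known.has(listing.url);
    const inBudget = Number(listing.price) <= maxPrice;
    if (isNew && inBudget) {
      known.add(listing.url); // guards against duplicates within the same run
      toCreate.push({ ...listing, new: true });
    } else {
      skipped.push(listing);
    }
  }
  return { toCreate, skipped };
}
```

Only `toCreate` would continue to the Notion and Mailchimp nodes, which mirrors the True path in the data flow above.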

Track certification requirement changes with ScrapeGraphAI, GitHub and email

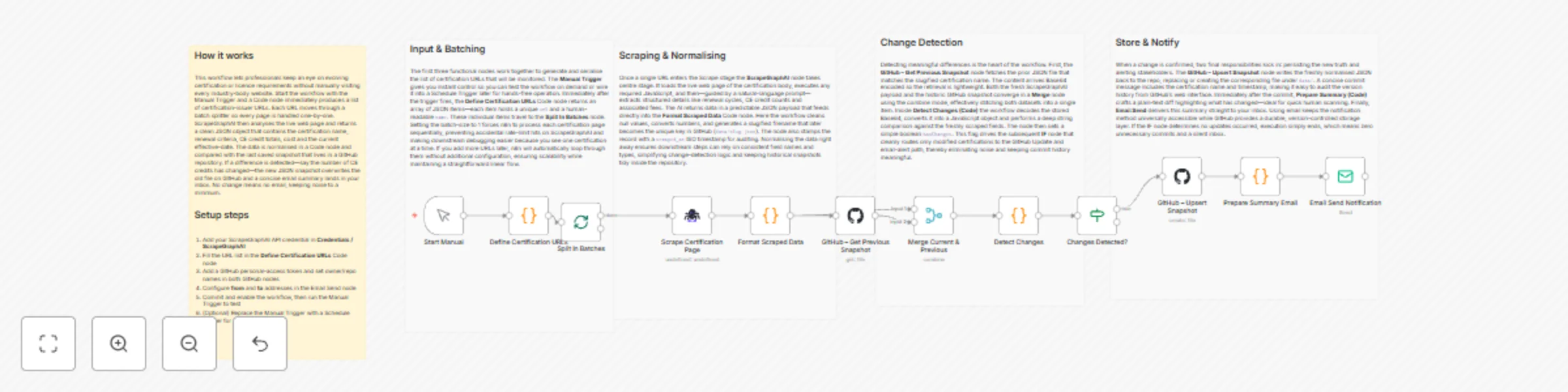

# Job Posting Aggregator with Email and GitHub

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow automatically aggregates certification-related job-posting requirements from multiple industry sources, compares them against last year’s data stored in GitHub, and emails a concise change log to subscribed professionals. It streamlines annual requirement checks and renewal reminders, ensuring users never miss an update.

## Pre-conditions/Requirements

### Prerequisites

- n8n instance (self-hosted or n8n cloud)
- ScrapeGraphAI community node installed
- Git installed (for optional local testing of the repo)
- Working SMTP server or other Email credential supported by n8n

### Required Credentials

- **ScrapeGraphAI API Key** – Enables web scraping of certification pages
- **GitHub Personal Access Token** – Allows the workflow to read/write files in the repo
- **Email / SMTP Credentials** – Sends the summary email to end-users

### Specific Setup Requirements

| Resource | Purpose | Example |
|----------|---------|---------|
| GitHub Repository | Stores `certification_requirements.json` versioned annually | `https://github.com/<you>/cert-requirements.git` |
| Watch List File | List of page URLs & selectors to scrape | Saved in the repo under `/config/watchList.json` |
| Email List | Semicolon-separated list of recipients | `[email protected];[email protected]` |

## How it works

This workflow automatically aggregates certification-related job-posting requirements from multiple industry sources, compares them against last year’s data stored in GitHub, and emails a concise change log to subscribed professionals. It streamlines annual requirement checks and renewal reminders, ensuring users never miss an update.

## Key Steps:

- **Manual Trigger**: Starts the workflow on demand or via scheduled cron.
- **Load Watch List (Code Node)**: Reads the list of certification URLs and CSS selectors.
- **Split In Batches**: Iterates through each URL to avoid rate limits.
- **ScrapeGraphAI**: Scrapes requirement details from each page.
- **Merge (Wait)**: Reassembles individual scrape results into a single JSON array.
- **GitHub (Read File)**: Retrieves last year’s `certification_requirements.json`.
- **IF (Change Detector)**: Compares current vs. previous JSON and decides whether changes exist.
- **Email Send**: Composes and sends a formatted summary of changes.
- **GitHub (Upsert File)**: Commits the new JSON file back to the repo for future comparisons.

## Set up steps

**Setup Time: 15-25 minutes**

1. **Install Community Node**: From n8n UI → Settings → Community Nodes → search and install “ScrapeGraphAI”.
2. **Create/Clone GitHub Repo**: Add an empty `certification_requirements.json` (`{}`) and a `config/watchList.json` with an array of objects like:

   ```json
   [
     { "url": "https://cert-body.org/requirements", "selector": "#requirements" }
   ]
   ```

3. **Generate GitHub PAT**: Scope `repo`, store in n8n Credentials as “GitHub API”.
4. **Add ScrapeGraphAI Credential**: Paste your API key into n8n Credentials.
5. **Configure Email Credentials**: E.g., SMTP with username/password or OAuth2.
6. **Open Workflow**: Import the template JSON into n8n.
7. **Update Environment Variables** (in the Code node or via n8n variables):
   - `GITHUB_REPO` (e.g., `user/cert-requirements`)
   - `EMAIL_RECIPIENTS`
8. **Test Run**: Trigger manually. Verify email content and GitHub commit.
9. **Schedule**: Add a Cron node (optional) for yearly or quarterly automatic runs.

## Node Descriptions

### Core Workflow Nodes:

- **Manual Trigger** – Initiates the workflow manually or via external schedule.
- **Code (Load Watch List)** – Reads and parses `watchList.json` from GitHub or static input.
- **SplitInBatches** – Controls request concurrency to avoid scraping bans.
- **ScrapeGraphAI** – Extracts requirement text using provided CSS selectors or XPath.
- **Merge (Combine)** – Waits for all batches and merges them into one dataset.
- **GitHub (Read/Write File)** – Handles version-controlled storage of JSON data.
- **IF (Change Detector)** – Compares hashes/JSON diff to detect updates.
- **EmailSend** – Sends change log, including renewal reminders and diff summary.
- **Sticky Note** – Provides in-workflow documentation for future editors.

### Data Flow:

1. **Manual Trigger** → **Code (Load Watch List)** → **SplitInBatches**
2. **SplitInBatches** → **ScrapeGraphAI** → **Merge**
3. **Merge** → **GitHub (Read File)** → **IF (Change Detector)**
4. **IF (True)** → **Email Send** → **GitHub (Upsert File)**

## Customization Examples

### Adjusting Scraper Configuration

```javascript
// Inside the Watch List JSON object
{
  "url": "https://new-association.com/cert-update",
  "selector": ".content article:nth-of-type(1) ul"
}
```

### Custom Email Template

```html
<!-- In Email Send node → HTML Content -->
<div>
  <h2>📋 Certification Updates – {{ $json.date }}</h2>
  <p>The following certifications have new requirements:</p>
  <ul>
    {{ $json.diffHtml }}
  </ul>
  <p>For full details visit our GitHub repo.</p>
</div>
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "timestamp": "2024-09-01T12:00:00Z",
  "source": "watchList.json",
  "current": {
    "AWS-SAA": "Version 3.0, requires renewed proctored exam",
    "PMP": "60 PDUs every 3 years"
  },
  "previous": {
    "AWS-SAA": "Version 2.0",
    "PMP": "60 PDUs every 3 years"
  },
  "changes": {
    "AWS-SAA": "Updated to Version 3.0; exam format changed."
  }
}
```

## Troubleshooting

### Common Issues

1. **ScrapeGraphAI returns empty data** – Check CSS/XPath selectors and ensure page is publicly accessible.
2. **GitHub authentication fails** – Verify PAT scope includes `repo` and that the credential is linked in both GitHub nodes.

### Performance Tips

- Limit `SplitInBatches` size to 3-5 URLs when sources are heavy to avoid timeouts.
- Enable n8n execution mode “Queue” for long-running scrapes.

**Pro Tips:**

- Store selector samples next to each watch list entry for future maintenance (JSON does not allow comments, so keep them in an extra field).
- Use a Cron node set to `0 0 1 1 *` for an annual run at midnight on January 1.
- Add a Telegram node after Email Send for instant mobile notifications.
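
The change-detection step compares the `current` and `previous` objects shown in the output format. One possible way to do that is a key-by-key comparison, sketched below; `diffRequirements` and the `type`/`before`/`after` shape are assumptions for illustration, and the template's own detector may instead compare hashes.

```javascript
// Hypothetical helper: key-by-key diff of two flat requirement objects.
// Assumes values are plain strings, as in the sample output.
function diffRequirements(previous, current) {
  const changes = {};
  const keys = new Set([...Object.keys(previous), ...Object.keys(current)]);

  for (const key of keys) {
    const before = previous[key];
    const after = current[key];
    if (before === after) continue; // unchanged requirement
    if (before === undefined) {
      changes[key] = { type: "added", after };
    } else if (after === undefined) {
      changes[key] = { type: "removed", before };
    } else {
      changes[key] = { type: "changed", before, after };
    }
  }
  return changes;
}
```

An empty result means nothing changed, which gives the IF node a simple condition to test (for example, the number of keys in the returned object).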

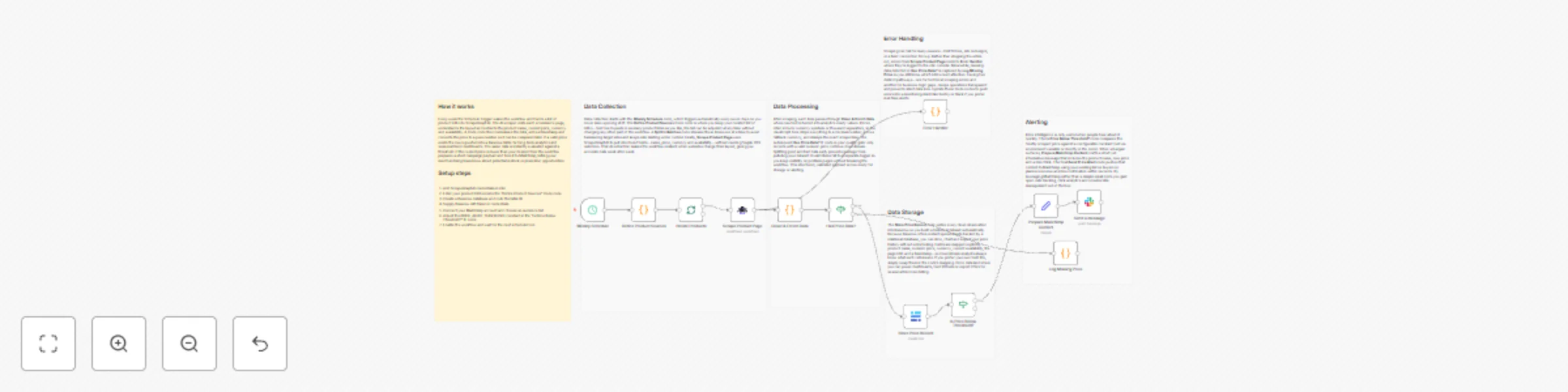

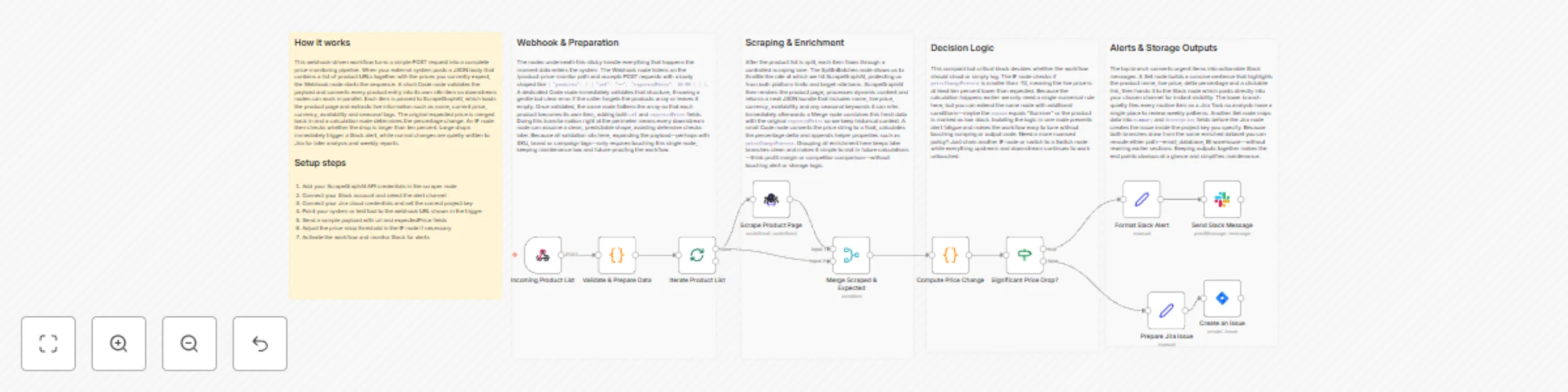

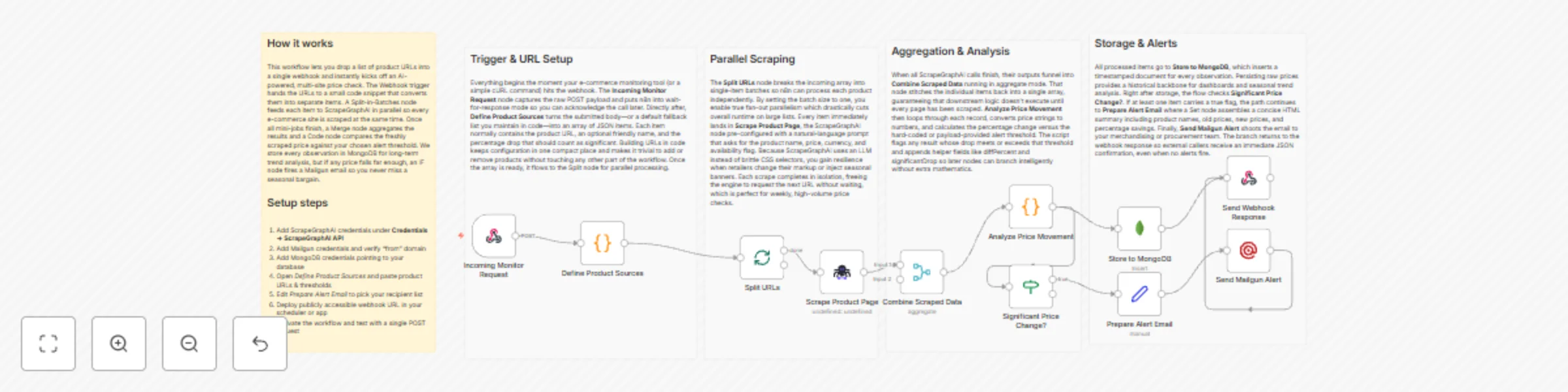

Track e-commerce price changes with ScrapeGraphAI, Baserow & Pushover alerts

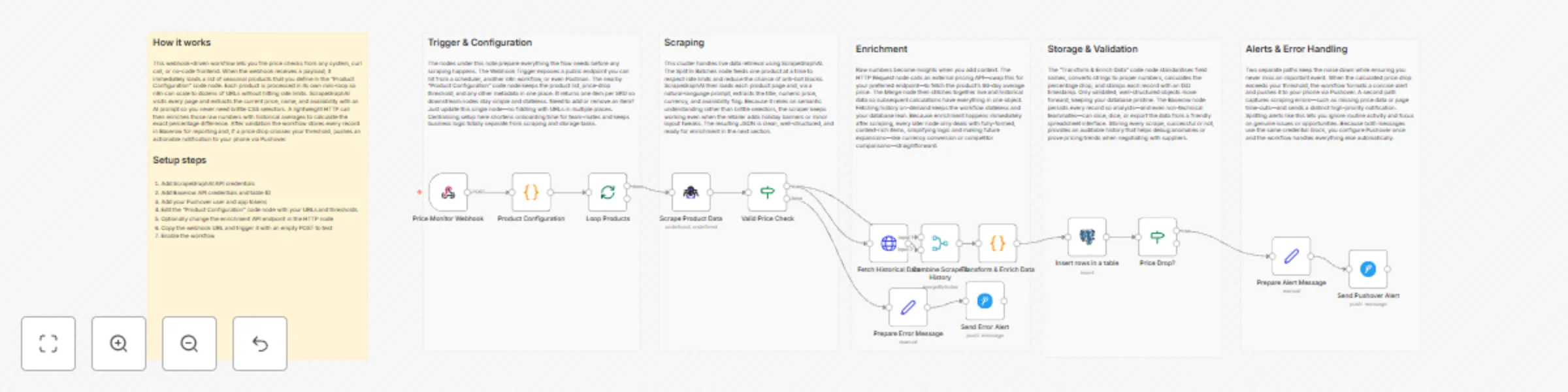

# Product Price Monitor with Pushover and Baserow

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow automatically scrapes multiple e-commerce sites for selected products, analyzes weekly pricing trends, stores historical data in Baserow, and sends an instant Pushover notification when significant price changes occur. It is ideal for retailers who need to track seasonal fluctuations and optimize inventory or pricing strategies.

## Pre-conditions/Requirements

### Prerequisites

- An active n8n instance (self-hosted or n8n.cloud)
- ScrapeGraphAI community node installed
- At least one publicly accessible webhook URL (for on-demand runs)
- A Baserow database with a table prepared for product data
- Pushover account and registered application

### Required Credentials

- **ScrapeGraphAI API Key** – Enables web-scraping capabilities
- **Baserow: Personal API Token** – Allows read/write access to your table
- **Pushover: User Key & API Token** – Sends mobile/desktop push notifications
- (Optional) **HTTP Basic Token or API Keys** for any private e-commerce endpoints you plan to monitor

### Baserow Table Specification

| Field Name | Type | Description |
|------------|------|-------------|
| Product ID | Number | Internal or SKU |
| Name | Text | Product title |
| URL | URL | Product page |
| Price | Number | Current price (float) |
| Currency | Single select | `USD`, `EUR`, etc. |
| Last Seen | Date/Time | Last price check |
| Trend | Number | 7-day % change |

## How it works

This workflow automatically scrapes multiple e-commerce sites for selected products, analyzes weekly pricing trends, stores historical data in Baserow, and sends an instant Pushover notification when significant price changes occur. It is ideal for retailers who need to track seasonal fluctuations and optimize inventory or pricing strategies.

## Key Steps:

- **Webhook Trigger**: Manually or externally trigger the weekly price-check run.
- **Set Node**: Define an array of product URLs and metadata.
- **Split In Batches**: Process products one at a time to avoid rate limits.
- **ScrapeGraphAI Node**: Extract current price, title, and availability from each URL.
- **If Node**: Determine if the price has changed by more than ±5% since the last entry.
- **HTTP Request (Trend API)**: Retrieve seasonal trend scores (optional).
- **Merge Node**: Combine scrape data with trend analysis.
- **Baserow Nodes**: Upsert latest record and fetch historical data for comparison.
- **Pushover Node**: Send an alert when significant price movement is detected.
- **Sticky Notes**: Documentation and inline comments for maintainability.

## Set up steps

**Setup Time: 15-25 minutes**

1. **Install Community Node**: In n8n, go to “Settings → Community Nodes” and install **ScrapeGraphAI**.
2. **Create Baserow Table**: Match the field structure shown above.
3. **Obtain Credentials**:
   - ScrapeGraphAI API key from your dashboard
   - Baserow personal token (`/account/settings`)
   - Pushover user key & API token
4. **Clone Workflow**: Import this template into n8n.
5. **Configure Credentials in Nodes**: Open each ScrapeGraphAI, Baserow, and Pushover node and select/enter the appropriate credential.
6. **Add Product URLs**: Open the first **Set** node and replace the example array with your actual product list.
7. **Adjust Thresholds**: In the **If** node, change the `5` value if you want a higher/lower alert threshold.
8. **Test Run**: Execute the workflow manually; verify Baserow rows and the Pushover notification.
9. **Schedule**: Add a **Cron** trigger or external scheduler to run weekly.

## Node Descriptions

### Core Workflow Nodes:

- **Webhook** – Entry point for manual or API-based triggers.
- **Set** – Holds the array of product URLs and meta fields.
- **SplitInBatches** – Iterates through each product to prevent request spikes.
- **ScrapeGraphAI** – Scrapes price, title, and currency from product pages.
- **If** – Compares new price vs. previous price in Baserow.
- **HTTP Request** – Calls a trend API (e.g., Google Trends) to get seasonal score.
- **Merge** – Combines scraping results with trend data.
- **Baserow (Upsert & Read)** – Writes fresh data and fetches historical price for comparison.
- **Pushover** – Sends formatted push notification with price delta.
- **StickyNote** – Documents purpose and hints within the workflow.

### Data Flow:

1. **Webhook** → **Set** → **SplitInBatches** → **ScrapeGraphAI**
2. **ScrapeGraphAI** → **If**
   - **True** branch → **HTTP Request** → **Merge** → **Baserow Upsert** → **Pushover**
   - **False** branch → **Baserow Upsert**

## Customization Examples

### Change Notification Channel to Slack

```javascript
// Replace the Pushover node with Slack
{
  "channel": "#pricing-alerts",
  "text": `🚨 ${$json["Name"]} changed by ${$json["delta"]}% – now ${$json["Price"]} ${$json["Currency"]}`
}
```

### Additional Data Enrichment (Stock Status)

```javascript
// Add to ScrapeGraphAI's selector map
{
  "stock": {
    "selector": ".availability span",
    "type": "text"
  }
}
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "ProductID": 12345,
  "Name": "Winter Jacket",
  "URL": "https://shop.example.com/winter-jacket",
  "Price": 79.99,
  "Currency": "USD",
  "LastSeen": "2024-11-20T10:34:18.000Z",
  "Trend": 12,
  "delta": -7.5
}
```

## Troubleshooting

### Common Issues

1. **Empty scrape result** – Check if the product page changed its HTML structure; update CSS selectors in ScrapeGraphAI.
2. **Baserow “Row not found” errors** – Ensure `Product ID` or another unique field is set as the primary key for upsert.

### Performance Tips

- Limit batch size to 5-10 URLs to avoid IP blocking.
- Use n8n’s built-in proxy settings if scraping sites with geo-restrictions.

**Pro Tips:**

- Store historical JSON responses in a separate Baserow table for deeper analytics.
- Standardize currency symbols to avoid false change detections.
- Couple this workflow with an **n8n Dashboard** to visualize price trends in real-time.
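
The threshold check in the **If** node boils down to a percentage-change calculation. The sketch below shows one way to compute the `delta` field and compare it to the 5% default; the helper names `priceDelta` and `isSignificant`, and the rounding to one decimal place, are assumptions rather than the template's actual expression.

```javascript
// Hypothetical helper: percentage change from the previous to the current price,
// rounded to one decimal place. Returns null when there is no usable baseline.
function priceDelta(previousPrice, currentPrice) {
  if (!(previousPrice > 0)) return null; // no history yet, or invalid price
  const pct = ((currentPrice - previousPrice) * 100) / previousPrice;
  return Math.round(pct * 10) / 10;
}

// Hypothetical helper: true when the absolute change exceeds the threshold (default 5%).
function isSignificant(delta, thresholdPct = 5) {
  return delta !== null && Math.abs(delta) > thresholdPct;
}
```

For example, a drop from 100 to 92.5 gives a delta of -7.5, which exceeds the default threshold and would follow the True branch; a first-time product with no previous price yields `null` and is treated as not significant.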

Healthcare policy monitoring with ScrapeGraphAI, Pipedrive and Matrix alerts

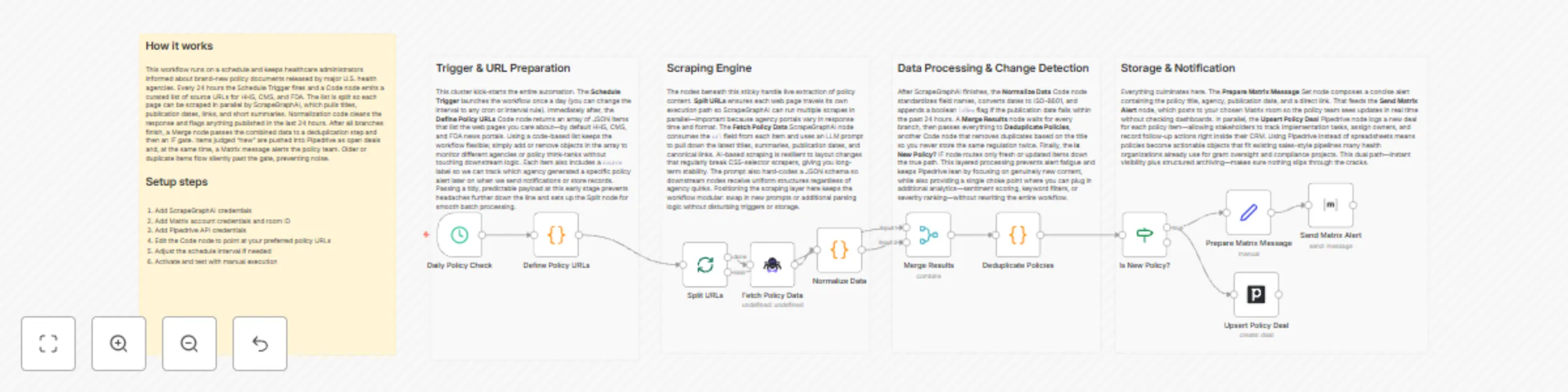

# Medical Research Tracker with Matrix and Pipedrive

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow automatically monitors selected government and healthcare-policy websites, extracts newly published or updated policy documents, logs them as deals in a Pipedrive pipeline, and announces critical changes in a Matrix room. It gives healthcare administrators and policy analysts a near real-time view of policy developments without manual web checks.

## Pre-conditions/Requirements

### Prerequisites

- n8n instance (self-hosted or n8n cloud)
- ScrapeGraphAI community node installed
- Active Pipedrive account with at least one pipeline
- Matrix account & accessible room for notifications
- Basic knowledge of n8n credential setup

### Required Credentials

- **ScrapeGraphAI API Key** – Enables the scraping engine
- **Pipedrive OAuth2 / API Token** – Creates & updates deals
- **Matrix Credentials** – Homeserver URL, user, access token (or password)

### Specific Setup Requirements

| Variable | Description | Example |
|----------|-------------|---------|
| `POLICY_SITES` | Comma-separated list of URLs to scrape | `https://health.gov/policies,https://who.int/proposals` |
| `PD_PIPELINE_ID` | Pipedrive pipeline where deals are created | `5` |
| `PD_STAGE_ID_ALERT` | Stage ID for “Review Needed” | `17` |
| `MATRIX_ROOM_ID` | Room to send alerts (incl. leading `!`) | `!policy:matrix.org` |

Edit the initial *Set* node to provide these values before running.

## How it works

This workflow automatically monitors selected government and healthcare-policy websites, extracts newly published or updated policy documents, logs them as deals in a Pipedrive pipeline, and announces critical changes in a Matrix room. It gives healthcare administrators and policy analysts a near real-time view of policy developments without manual web checks.

## Key Steps:

- **Scheduled Trigger**: Runs every 6 hours (configurable) to start the monitoring cycle.
- **Code (URL List Builder)**: Generates an array from `POLICY_SITES` for downstream batching.
- **SplitInBatches**: Iterates through each policy URL individually.
- **ScrapeGraphAI**: Scrapes page titles, publication dates, and summary paragraphs.
- **If (New vs Existing)**: Compares scraped hash with last run; continues only for fresh content.
- **Merge (Aggregate Results)**: Collects all “new” policies into a single payload.
- **Set (Deal Formatter)**: Maps scraped data to Pipedrive deal fields.
- **Pipedrive Node**: Creates or updates a deal per policy item.
- **Matrix Node**: Posts a formatted alert message in the specified Matrix room.

## Set up steps

**Setup Time: 15-20 minutes**

1. **Install Community Node** – In n8n, go to *Settings → Community Nodes → Install* and search for **ScrapeGraphAI**.
2. **Add Credentials** – *Create New* credentials for ScrapeGraphAI, Pipedrive, and Matrix under *Credentials*.
3. **Configure Environment Variables** – Open the *Set (Initial Config)* node and replace placeholders (`POLICY_SITES`, `PD_PIPELINE_ID`, etc.) with your values.
4. **Review Schedule** – Double-click the *Schedule Trigger* node to adjust the interval if needed.
5. **Activate Workflow** – Click *Activate*. The workflow will run at the next scheduled interval.
6. **Verify Outputs** – Check Pipedrive for new deals and the Matrix room for alert messages after the first run.

## Node Descriptions

### Core Workflow Nodes:

- **stickyNote** – Provides an at-a-glance description of the workflow logic directly on the canvas.
- **scheduleTrigger** – Fires the workflow periodically (default 6 hours).
- **code (URL List Builder)** – Splits the `POLICY_SITES` variable into an array.
- **splitInBatches** – Ensures each URL is processed individually to avoid timeouts.
- **scrapegraphAi** – Parses HTML and extracts policy metadata using XPath/CSS selectors.
- **if (New vs Existing)** – Uses hashing to ignore unchanged pages.
- **merge** – Combines all new items so they can be processed in bulk.
- **set (Deal Formatter)** – Maps scraped fields to Pipedrive deal properties.
- **matrix** – Sends formatted messages to a Matrix room for team visibility.
- **pipedrive** – Creates or updates deals representing each policy update.

### Data Flow:

1. **scheduleTrigger** → **code** → **splitInBatches** → **scrapegraphAi** → **if** → **merge** → **set** → **pipedrive** → **matrix**

## Customization Examples

### 1. Add another data field (e.g., policy author)

```javascript
// Inside ScrapeGraphAI node → Selectors
{
  "title": "//h1/text()",
  "date": "//time/@datetime",
  "summary": "//p[1]/text()",
  "author": "//span[@class='author']/text()" // new line
}
```

### 2. Switch notifications from Matrix to Email

```javascript
// Replace Matrix node with “Send Email”
{
  "to": "[email protected]",
  "subject": "New Healthcare Policy Detected: {{$json.title}}",
  "text": "Summary:\n{{$json.summary}}\n\nRead more at {{$json.url}}"
}
```

## Data Output Format

The workflow outputs structured JSON data for each new policy article:

```json
{
  "title": "Affordable Care Expansion Act – 2024",
  "url": "https://health.gov/policies/acea-2024",
  "date": "2024-06-14T09:00:00Z",
  "summary": "Proposes expansion of coverage to rural areas...",
  "source": "health.gov",
  "hash": "2d6f1c8e3b..."
}
```

## Troubleshooting

### Common Issues

1. **ScrapeGraphAI returns empty objects** – Verify selectors match the current HTML structure; inspect the site with developer tools and update the node configuration.
2. **Duplicate deals appear in Pipedrive** – Ensure the “Find or Create” option is enabled in the Pipedrive node, using the page `hash` or `url` as a unique key.
### Performance Tips

- Limit `POLICY_SITES` to under 50 URLs per run to avoid hitting rate limits.
- Increase the Schedule Trigger interval if you notice ScrapeGraphAI rate-limiting.

**Pro Tips:**

- Store historical scraped data in a database node for long-term audit trails.
- Use the n8n *Workflow Executions* page to replay failed runs without waiting for the next schedule.
- Add an *Error Trigger* node to emit alerts if scraping or API calls fail.
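
The URL list builder and the hash-based "new vs existing" check described in this template can be sketched as follows. This is a minimal standalone Node.js illustration, not the template's actual node code: the function names are hypothetical, SHA-256 is an assumed choice of hash, and hashing `title`, `date`, and `summary` is one plausible fingerprint. On a self-hosted n8n instance, using `require("crypto")` inside a Code node may need to be allowed via `NODE_FUNCTION_ALLOW_BUILTIN`.

```javascript
const crypto = require("crypto");

// Split the comma-separated POLICY_SITES value into a clean URL array.
function buildUrlList(policySites) {
  return policySites
    .split(",")
    .map((url) => url.trim())
    .filter((url) => url.length > 0);
}

// Fingerprint the fields that matter for change detection (assumed set of fields).
function contentHash(policy) {
  const payload = [policy.title, policy.date, policy.summary].join("\n");
  return crypto.createHash("sha256").update(payload, "utf8").digest("hex");
}

// Attach a hash to each item and keep only those not seen on a previous run.
function filterNew(policies, seenHashes) {
  return policies
    .map((policy) => ({ ...policy, hash: contentHash(policy) }))
    .filter((policy) => !seenHashes.has(policy.hash));
}

// Example run with illustrative data.
const urls = buildUrlList("https://health.gov/policies, https://who.int/proposals");
const scraped = [
  {
    title: "Affordable Care Expansion Act – 2024",
    url: "https://health.gov/policies/acea-2024",
    date: "2024-06-14T09:00:00Z",
    summary: "Proposes expansion of coverage to rural areas...",
  },
];
const seenOnLastRun = new Set(); // would be loaded from persistent storage in practice
const freshItems = filterNew(scraped, seenOnLastRun);
console.log(urls.length, freshItems.length);
```

The set of seen hashes has to survive between runs, for example in a database table or in workflow static data, otherwise every page looks new on each execution.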

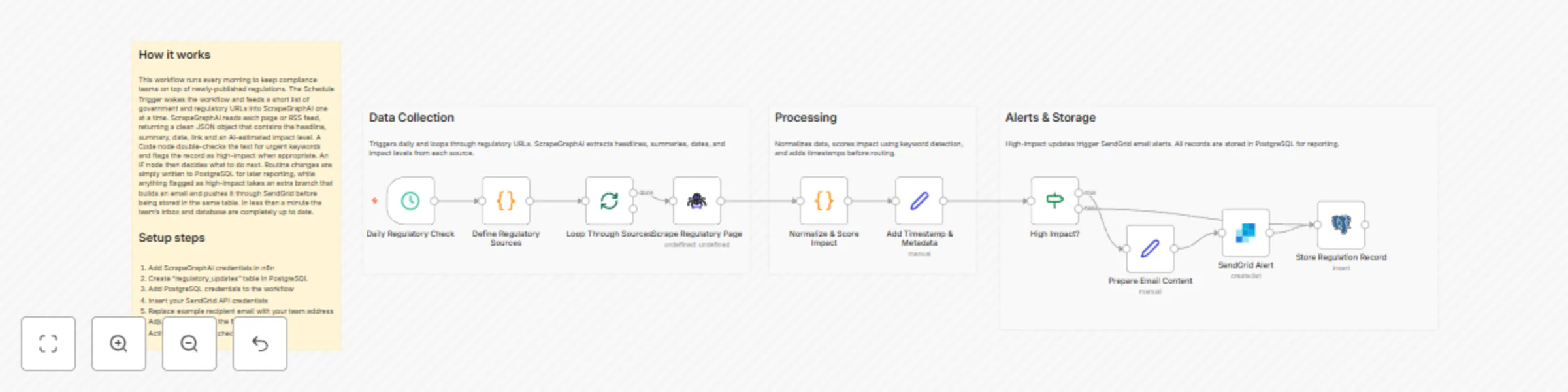

Breaking news aggregator with SendGrid and PostgreSQL

# Breaking News Aggregator with SendGrid and PostgreSQL

**⚠️ COMMUNITY TEMPLATE DISCLAIMER: This is a community-contributed template that uses ScrapeGraphAI (a community node). Please ensure you have the ScrapeGraphAI community node installed in your n8n instance before using this template.**

This workflow automatically scrapes multiple government and regulatory websites, extracts the latest policy or compliance-related news, stores the data in PostgreSQL, and emails daily summaries to your team through SendGrid. It is ideal for compliance professionals and industry analysts who need near real-time awareness of regulatory changes impacting their sector.

## Pre-conditions/Requirements

### Prerequisites

- n8n instance (self-hosted or n8n.cloud)
- ScrapeGraphAI community node installed
- Operational SendGrid account
- PostgreSQL database accessible from n8n
- Basic knowledge of SQL for table creation

### Required Credentials

- **ScrapeGraphAI API Key** – Enables web scraping and parsing
- **SendGrid API Key** – Sends email notifications
- **PostgreSQL Credentials** – Host, port, database, user, and password

### Specific Setup Requirements

| Resource | Requirement | Example Value |
|----------|-------------|---------------|
| PostgreSQL | Table with columns: `id`, `title`, `url`, `source`, `published_at` | `news_updates` |
| Allowed Hosts | Outbound HTTPS access from n8n to target sites & SendGrid endpoint | `https://*.gov`, `https://api.sendgrid.com` |
| Keywords List | Comma-separated compliance terms to filter results | `GDPR, AML, cybersecurity` |

## How it works

This workflow automatically scrapes multiple government and regulatory websites, extracts the latest policy or compliance-related news, stores the data in PostgreSQL, and emails daily summaries to your team through SendGrid. It is ideal for compliance professionals and industry analysts who need near real-time awareness of regulatory changes impacting their sector.

## Key Steps:

- **Schedule Trigger**: Runs once daily (or at any chosen interval).
- **ScrapeGraphAI**: Crawls predefined regulatory URLs and returns structured article data.
- **Code (JS)**: Filters results by keywords and formats them.
- **SplitInBatches**: Processes articles in manageable chunks to avoid timeouts.
- **If Node**: Checks whether each article already exists in the database.
- **PostgreSQL**: Inserts only new articles into the `news_updates` table.
- **Set Node**: Generates an email-friendly HTML summary.
- **SendGrid**: Dispatches the compiled summary to compliance stakeholders.

## Set up steps

**Setup Time: 15-20 minutes**

1. **Install ScrapeGraphAI Node**:
   - From n8n, go to “Settings → Community Nodes → Install”, search “ScrapeGraphAI”, and install.
2. **Create PostgreSQL Table**:

   ```sql
   CREATE TABLE news_updates (
     id SERIAL PRIMARY KEY,
     title TEXT,
     url TEXT UNIQUE,
     source TEXT,
     published_at TIMESTAMP
   );
   ```

3. **Add Credentials**:
   - Navigate to “Credentials”, add ScrapeGraphAI, SendGrid, and PostgreSQL credentials.
4. **Import Workflow**:
   - Copy the JSON workflow, paste into “Import from Clipboard”.
5. **Configure Environment Variables** (optional):
   - `REG_NEWS_KEYWORDS`, `SEND_TO_EMAILS`, `DB_TABLE_NAME`.
6. **Set Schedule**:
   - Open the Schedule Trigger node and define your preferred cron expression.
7. **Activate Workflow**:
   - Toggle “Active”, then click “Execute Workflow” once to validate all connections.

## Node Descriptions

### Core Workflow Nodes:

- **Schedule Trigger** – Initiates the workflow at the defined interval.
- **ScrapeGraphAI** – Scrapes and parses news listings into JSON.
- **Code** – Filters articles by keywords and normalizes timestamps.
- **SplitInBatches** – Prevents database overload by batching inserts.
- **If** – Determines whether an article is already stored.
- **PostgreSQL** – Executes parameterized INSERT statements.
- **Set** – Builds the HTML email body.
- **SendGrid** – Sends the daily digest email.

### Data Flow:

1. **Schedule Trigger** → **ScrapeGraphAI** → **Code** → **SplitInBatches** → **If** → **PostgreSQL** → **Set** → **SendGrid**

## Customization Examples

### Change Keyword Filtering

```javascript
// Code node snippet (assumes the node runs in "Run Once for Each Item" mode)
const keywords = ['GDPR', 'AML', 'SOX']; // Add or remove terms
const item = $input.item;

// Flag the article when its title contains any keyword (case-sensitive)
const title = item.json.title || '';
item.json.filtered = keywords.some((k) => title.includes(k));

return item;
```

### Switch to Weekly Digest

The cron expression below runs every Monday at 09:00:

```json
{
  "trigger": {
    "cronExpression": "0 9 * * 1"
  }
}
```

## Data Output Format

The workflow outputs structured JSON data:

```json
{
  "title": "Data Privacy Act Amendment Passed",
  "url": "https://regulator.gov/news/1234",
  "source": "regulator.gov",
  "published_at": "2024-06-12T14:30:00Z"
}
```

## Troubleshooting

### Common Issues

1. **ScrapeGraphAI node not found** – Install the community node and restart n8n.
2. **Duplicate key error in PostgreSQL** – The `url` column is `UNIQUE`, so inserting an article that is already stored raises this error. Confirm that the If node filters out existing URLs, or add `ON CONFLICT (url) DO NOTHING` to the INSERT statement.
3. **Emails not sending** – Verify the SendGrid API key and check the account’s daily sending limit.

### Performance Tips

- Limit initial scrape URLs to fewer than 20 to reduce run time.
- Increase SplitInBatches size only if your database can handle larger inserts.

**Pro Tips:**

- Use environment variables to manage sensitive credentials securely.
- Add an Error Trigger node to catch and log failures for auditing purposes.
- Combine with Slack or Microsoft Teams nodes to push instant alerts alongside email digests.
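
The "insert only new articles" step can also be made idempotent at the database level. The sketch below builds a parameterized INSERT against the `news_updates` table that skips rows whose `url` already exists. The function name `buildInsertQuery` is hypothetical, and the `{ text, values }` shape is an assumption modeled on the query config accepted by clients such as node-postgres; adapt it to however your PostgreSQL node expects the query and parameters.

```javascript
// Build a parameterized INSERT that silently skips URLs already stored.
function buildInsertQuery(article, tableName = "news_updates") {
  // Table names cannot be bound as parameters, so allow only plain identifiers.
  if (!/^[A-Za-z_][A-Za-z0-9_]*$/.test(tableName)) {
    throw new Error(`Unsafe table name: ${tableName}`);
  }
  return {
    text:
      `INSERT INTO ${tableName} (title, url, source, published_at) ` +
      "VALUES ($1, $2, $3, $4) ON CONFLICT (url) DO NOTHING",
    values: [article.title, article.url, article.source, article.published_at],
  };
}

// Example with the sample article from the output format above.
const query = buildInsertQuery({
  title: "Data Privacy Act Amendment Passed",
  url: "https://regulator.gov/news/1234",
  source: "regulator.gov",
  published_at: "2024-06-12T14:30:00Z",
});
console.log(query.text);
console.log(query.values);
```

`ON CONFLICT (url)` relies on the `UNIQUE` constraint on `url` from the table definition, so re-running the workflow on the same articles does not raise duplicate key errors.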

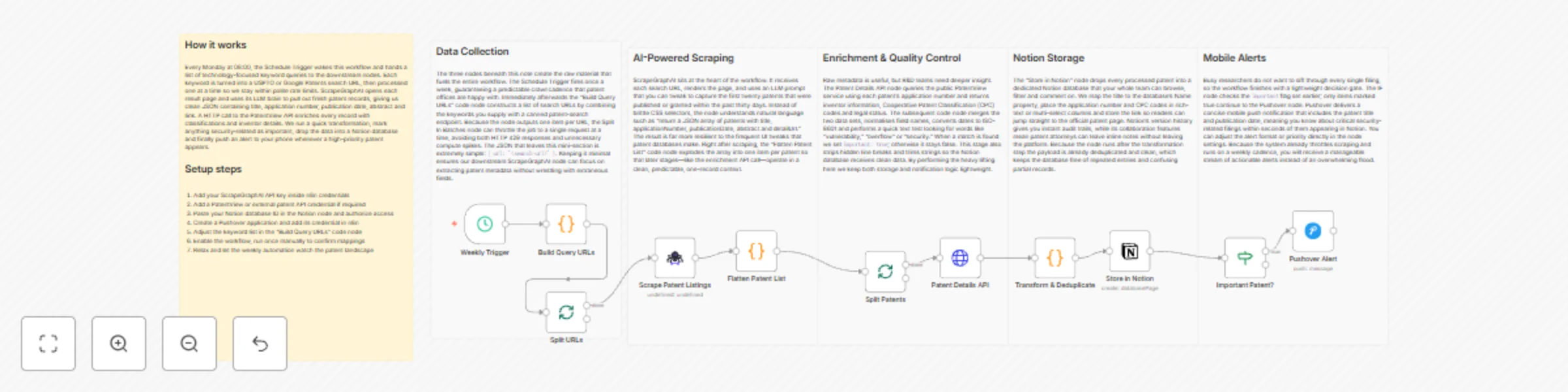

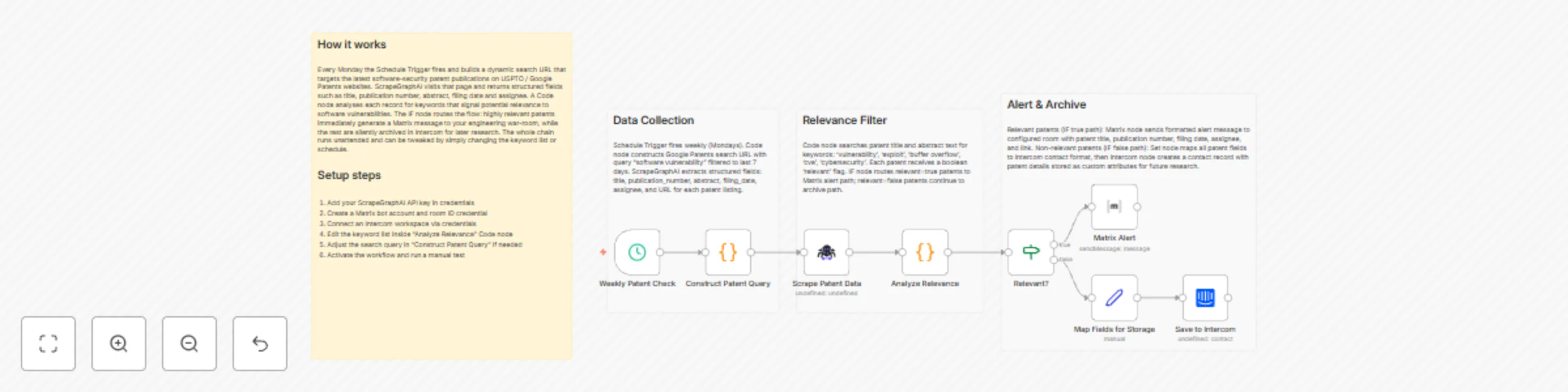

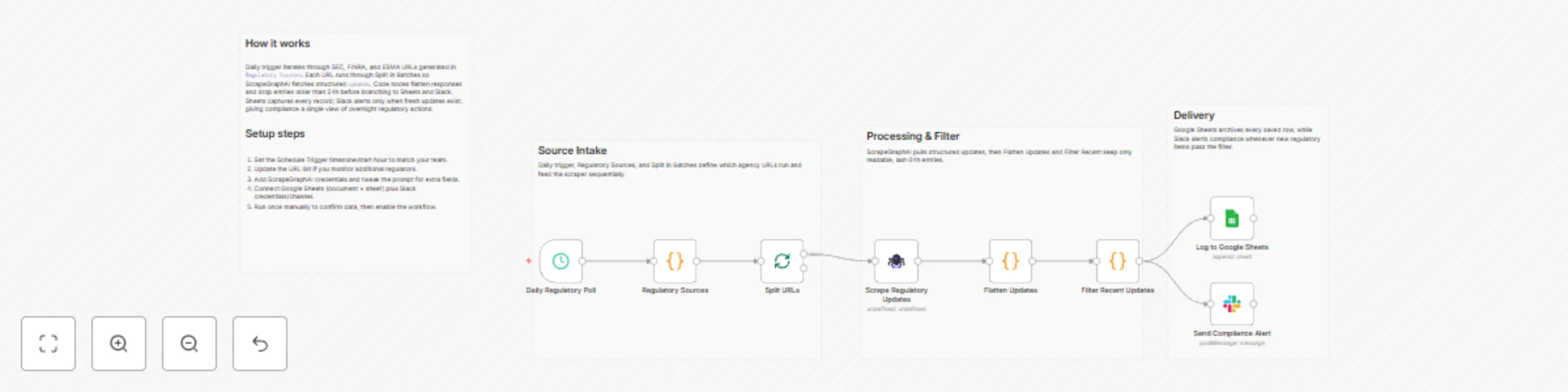

Track software security patents with ScrapeGraphAI, Notion, and Pushover alerts