SIENNA

Workflows by SIENNA

Monitor S3 bucket changes with automated integrity auditing using Mistral AI

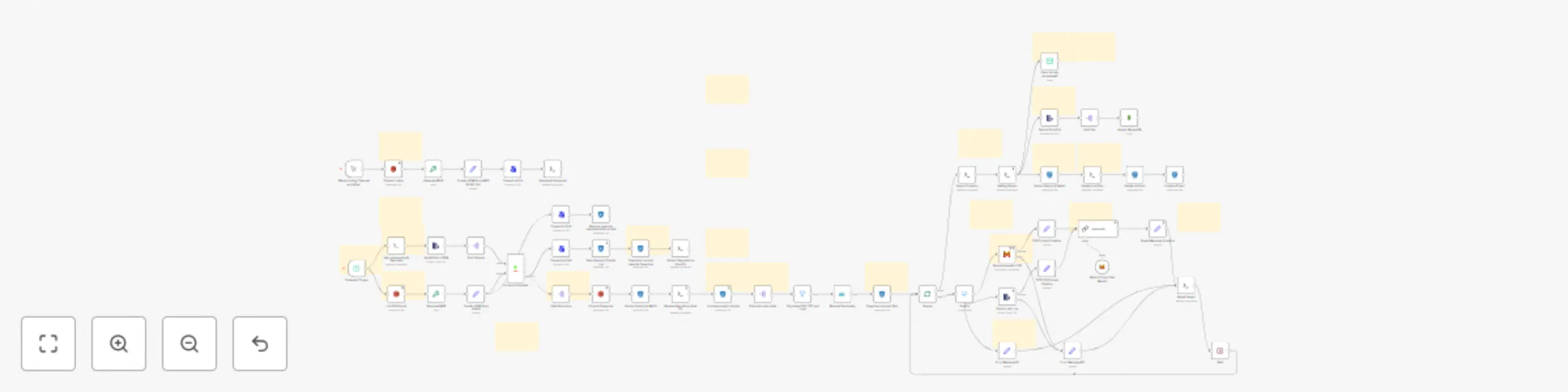

# Automated and Periodic AWS S3 Bucket Object Integrity Audit to detect unexpected changes (by humans, tenants or ransomware)

This workflow targets AWS S3, but the same approach could also work with Microsoft Azure, Google Cloud Storage or any other cloud provider! Interested? Send us an email: [email protected]

## What does this workflow do?

This workflow performs automated, periodic audits of an AWS S3 bucket whose objects (files) can be added, deleted, modified, renamed, or moved at any time by people, tenants, applications, or even ransomware, any of which could corrupt your resources.

Each audit covers a single bucket and uses optical character recognition (OCR) and artificial intelligence (AI) to characterize the changes made to specific files (TXT, PDF, and logs) in order to assess their sensitivity and integrity (is the file sensitive, and is it complete and intact?).

## Who is this intended for?

Storage administrators, cloud architects, or DevOps engineers who need to audit changes on remote cloud storage (such as AWS S3). This helps prevent data loss and supports your compliance requirements!

## How it works

Everything is explained in the workflow. It takes a "picture" of your bucket at a given moment and then, every X hours/days/weeks, takes another one. It then compares the two pictures (like a game of spot the difference) to determine which objects are present in only one of them (added or deleted) and which are present in both but modified.

The workflow then downloads the added and modified files, selects those in a text format (PDF, TXT, and logs), and characterizes the changes made to them to check that their integrity level is sufficient.

You can of course modify this workflow to process only added or only modified files, and change the prompts (e.g. to report whether a file contains specific information).
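
The "spot the difference" comparison described above can be sketched in a few lines of Python. This is only an illustration, not the workflow's actual implementation: it assumes each snapshot is a mapping from object key to some fingerprint (for example the ETag returned when listing the bucket), and the names `diff_snapshots`, `select_text_files` and `TEXT_EXTENSIONS` are hypothetical.

```python
from typing import Dict, List, NamedTuple


class SnapshotDiff(NamedTuple):
    added: List[str]
    deleted: List[str]
    modified: List[str]


def diff_snapshots(before: Dict[str, str], after: Dict[str, str]) -> SnapshotDiff:
    """Compare two {object_key: fingerprint} snapshots of the same bucket."""
    before_keys = set(before)
    after_keys = set(after)
    # Keys that exist only in the newer snapshot were added.
    added = sorted(after_keys - before_keys)
    # Keys that exist only in the older snapshot were deleted.
    deleted = sorted(before_keys - after_keys)
    # Keys in both snapshots with a different fingerprint were modified.
    modified = sorted(
        key for key in before_keys & after_keys if before[key] != after[key]
    )
    return SnapshotDiff(added, deleted, modified)


# Assumption: text-like files are recognized by extension only.
TEXT_EXTENSIONS = (".txt", ".pdf", ".log")


def select_text_files(keys: List[str]) -> List[str]:
    """Keep only the keys that look like TXT, PDF, or log files."""
    return [key for key in keys if key.lower().endswith(TEXT_EXTENSIONS)]


if __name__ == "__main__":
    old = {"report.pdf": "etag-1", "photo.png": "etag-2"}
    new = {"report.pdf": "etag-9", "app.log": "etag-3"}
    diff = diff_snapshots(old, new)
    print(diff)
    print(select_text_files(diff.added + diff.modified))
```

Because the comparison is keyed on object names, a renamed or moved object shows up in this sketch as one deletion plus one addition.
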
## Requirements

- Access to an AWS S3 bucket;
- A MinIO S3 bucket running locally on the host, or on a different VM/LXC/container (Using PVE? Check this: https://community-scripts.github.io/ProxmoxVE/scripts?id=minio);
- Permissions for your n8n Docker container to read and write on the host file system (using SSH nodes with the "execute command" option);
- A Python script (here: https://sienna.dev/bitnami/wordpress/wp-content/uploads/assets/fileFormatter.py) for the final AI report creation;
- A Mistral account with API keys for OCR and the LLM (or an OCR model running locally, with Ollama for example);
- MongoDB running in a separate container to store all reports.

Need help with something? Contact us by email!

## Need a similar workflow to audit files and objects on another cloud storage provider?

Good news, we have what you need! We can work with any cloud provider and audit files and objects on remote storage (Backblaze, Wasabi, Scaleway, OVH, IBM, Oracle, Storadera, etc.) using custom scripts and open-source tools! Simply contact us by email to get in touch with a cloud storage expert and find out today how you can mitigate your cloud storage issues or recover data from the cloud to an on-premises infrastructure.

Scheduled FTP/SFTP to MinIO backup with preserved folder structure

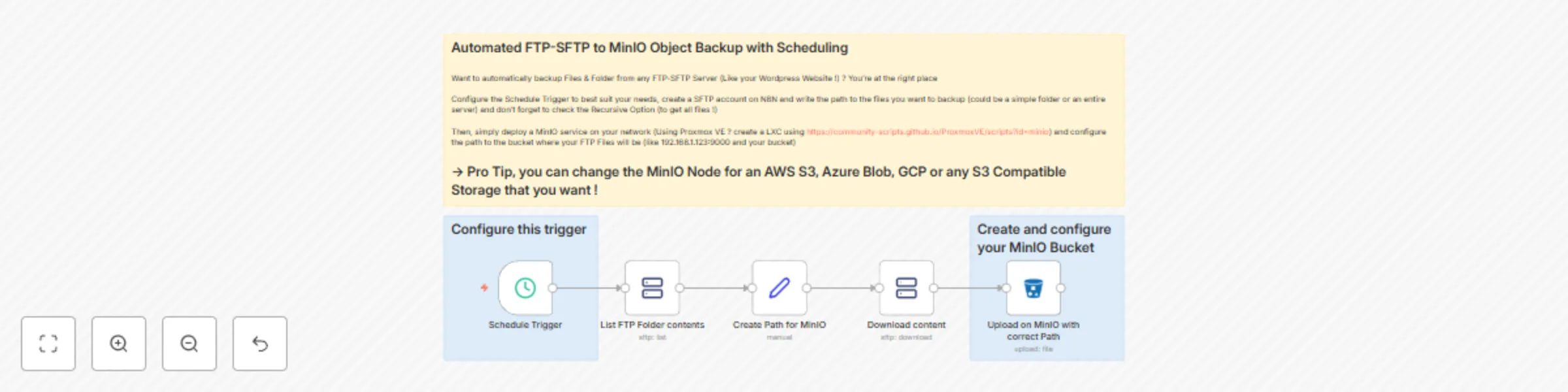

# Automated FTP/SFTP to MinIO Object Backup with Scheduling

Can work with FTP/SFTP servers, such as the one hosting your WordPress website!

## What does this workflow do?

This workflow performs automated, periodic backups of files from an FTP or SFTP server directory to a MinIO S3 bucket running locally or on a dedicated container/VM/server. It also works if the MinIO bucket runs on a remote cloud provider's infrastructure; you just need to change the URL and keys.

## Who is this intended for?

Storage administrators, cloud architects, or DevOps engineers who need a simple and scalable solution for retrieving data from a remote FTP or SFTP server. It is also practical for WordPress developers who need to back up data from a server hosting a WordPress website. In that case, you only need to specify the folder that you want to back up (it could be one under wordpress/uploads or even the root folder).

## How it works

This workflow uses commands to list and download files from a specific directory on an FTP/SFTP server, then sends them to MinIO through its S3-compatible API. The source directory can be a specific one or the entire server (the root directory).

## Requirements

None, just a source folder/directory on an FTP/SFTP server and a destination bucket on MinIO. You will also need to have MinIO running. Using Proxmox VE? Create a MinIO LXC container: https://community-scripts.github.io/ProxmoxVE/scripts?id=minio

## Need a backup from another cloud storage provider?

Check out our templates: we have done it with AWS, Azure, and GCP, and we even have a version for FTP/SFTP servers!

These workflows can be integrated into bigger ones and modified to best suit your needs! You can, for example, replace the MinIO node with a node for an S3 bucket from another cloud storage provider (Backblaze, Wasabi, Scaleway, OVH, ...).
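
Preserving the folder structure, as the workflow title promises, comes down to deriving each object key from the file's path relative to the source directory. The sketch below shows one way to do that in Python; it is an illustration under assumptions, not the workflow's own logic. The function name `object_key_for` and the optional `prefix` parameter are hypothetical.

```python
import posixpath


def object_key_for(remote_path: str, source_root: str, prefix: str = "") -> str:
    """Map a path on the FTP/SFTP server to an object key in the bucket.

    The key is the path relative to `source_root`, so sub-folders on the
    server become key prefixes ("folders") in the bucket.
    """
    root = posixpath.normpath(source_root)
    path = posixpath.normpath(remote_path)
    relative = posixpath.relpath(path, root)
    # Reject the root itself and anything that escapes the source directory.
    if relative in (".", "..") or relative.startswith("../"):
        raise ValueError(f"{remote_path!r} is not a file under {source_root!r}")
    return posixpath.join(prefix, relative) if prefix else relative


if __name__ == "__main__":
    print(object_key_for("/var/www/wp-content/uploads/2024/01/a.png",
                         "/var/www/wp-content/uploads"))
    print(object_key_for("/site/img/x.jpg", "/site/", prefix="backups/site"))
```

Using `posixpath` rather than `os.path` keeps the forward-slash separators that both FTP paths and S3 keys use, whatever operating system runs the script.
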

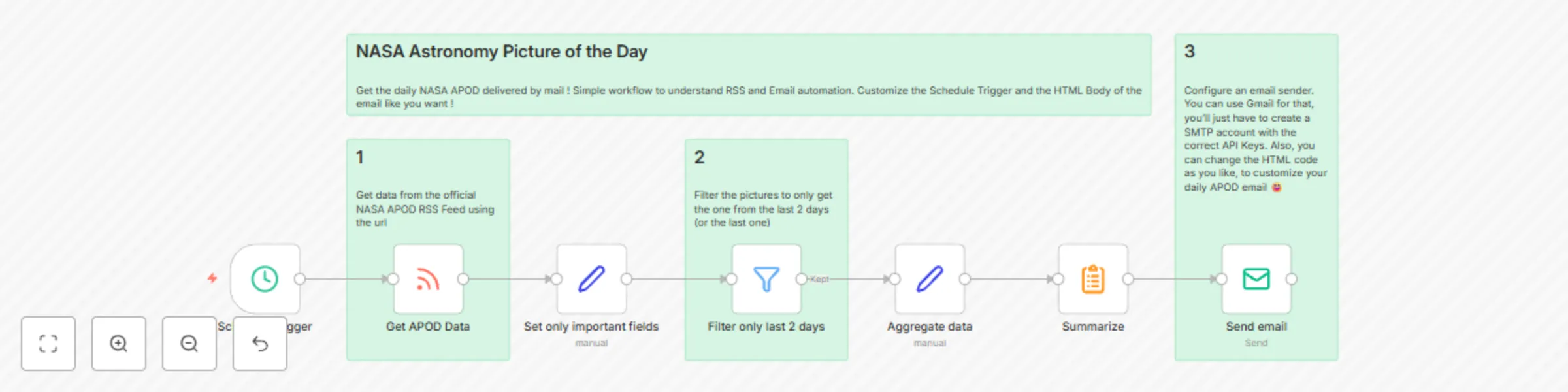

Daily NASA astronomy picture delivered to your inbox via email

A simple workflow that delivers the NASA Astronomy Picture of the Day (APOD) to your inbox each day, using the official RSS feed and an SMTP node so that you can send through the mail server of your choice. We recommend Gmail, as it is free and it is simple to generate the app password that the SMTP node needs. You can switch the RSS feed to another one that you like and customize the HTML of the template as you want!
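
For readers who want to see the same idea outside n8n, here is a minimal Python sketch of the two steps described above: reading the newest item from an RSS 2.0 feed and wrapping it in an HTML email. It is not the template's implementation; the function names `latest_item` and `build_email`, the subject line, and the HTML layout are all assumptions, and the sample feed is invented for the demonstration.

```python
import xml.etree.ElementTree as ET
from email.message import EmailMessage
from html import escape


def latest_item(rss_xml: str) -> dict:
    """Return title, link and description of the first <item> in an RSS 2.0 feed."""
    channel = ET.fromstring(rss_xml).find("channel")
    item = channel.find("item")
    return {
        "title": item.findtext("title", default=""),
        "link": item.findtext("link", default=""),
        "description": item.findtext("description", default=""),
    }


def build_email(item: dict, sender: str, recipient: str) -> EmailMessage:
    """Build a message with a plain-text part and an HTML alternative."""
    message = EmailMessage()
    message["Subject"] = f"Astronomy picture of the day: {item['title']}"
    message["From"] = sender
    message["To"] = recipient
    message.set_content(f"{item['title']}\n{item['link']}")
    # Escape feed values before placing them in the HTML template.
    message.add_alternative(
        f"<h1>{escape(item['title'])}</h1>"
        f"<p><a href=\"{escape(item['link'])}\">Open today's picture</a></p>",
        subtype="html",
    )
    return message


if __name__ == "__main__":
    sample_feed = (
        '<rss version="2.0"><channel><title>Sample</title>'
        "<item><title>Sample picture</title>"
        "<link>https://example.org/picture</link>"
        "<description>Sample description</description></item>"
        "</channel></rss>"
    )
    print(build_email(latest_item(sample_feed), "me@example.org", "you@example.org"))
```

The resulting message could then be handed to `smtplib.SMTP.send_message` with the host and credentials of whichever mail server you use.
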

Automated AWS S3 / Azure / Google to local MinIO object backup with scheduling

# Automated AWS S3 / Azure / Google to local MinIO Object Backup with Scheduling

## What does this workflow do?

This workflow performs automated, periodic backups of objects from an AWS S3 bucket, an Azure container or a Google Cloud Storage bucket to a MinIO S3 bucket running locally or on a dedicated container/VM/server. It also works if the MinIO bucket runs on a remote cloud provider's infrastructure; you just need to change the URL and keys.

## Who is this intended for?

Storage administrators, cloud architects, or DevOps engineers who need a simple and scalable solution for retrieving data from AWS, Azure or GCP.

## How it works

This workflow uses the official AWS S3 API (or the Azure Blob Storage API) to list and download objects from a specific bucket, then sends them to MinIO through its S3-compatible API.

## Requirements

None, just a source bucket on your cloud storage provider and a destination bucket on MinIO. You will also need to have MinIO running. Using Proxmox VE? Create a MinIO LXC container: https://community-scripts.github.io/ProxmoxVE/scripts?id=minio

## Need a backup from another cloud storage provider?

Check out our templates: we have done it with AWS, Azure, and GCP, and we even have a version for FTP/SFTP servers! For a dedicated source cloud storage provider, please contact us!

These workflows can be integrated into bigger ones and modified to best suit your needs! You can, for example, replace the MinIO node with a node for an S3 bucket from another cloud storage provider (Backblaze, Wasabi, Scaleway, OVH, ...).
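
The list, download and send sequence described under "How it works" can be sketched in Python for the AWS S3 case. This is an illustration under assumptions rather than what the n8n nodes do internally: `copy_bucket` is a hypothetical helper, and it expects two objects that behave like boto3 S3 clients (it only relies on `get_paginator("list_objects_v2")`, `get_object` and `upload_fileobj`). Azure and Google sources are not covered here.

```python
from typing import Any, List


def copy_bucket(source: Any, destination: Any,
                source_bucket: str, dest_bucket: str) -> List[str]:
    """Copy every object from source_bucket to dest_bucket, keeping keys unchanged.

    `source` and `destination` are assumed to expose the boto3 S3 client
    methods used below; any compatible object works.
    """
    copied = []
    paginator = source.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=source_bucket):
        # "Contents" is absent from a page that holds no objects.
        for entry in page.get("Contents", []):
            key = entry["Key"]
            # The response body is a stream, so the object is never
            # loaded into memory as a whole by this function.
            body = source.get_object(Bucket=source_bucket, Key=key)["Body"]
            destination.upload_fileobj(body, dest_bucket, key)
            copied.append(key)
    return copied
```

With boto3 installed, the source could be created with `boto3.client("s3")` and the MinIO side with `boto3.client("s3", endpoint_url=..., aws_access_key_id=..., aws_secret_access_key=...)`, where the endpoint URL and keys are those of your own MinIO instance. Since MinIO exposes an S3-compatible API, both ends can use the same client library, and only the endpoint and credentials differ.
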