PiAPI

Workflows by PiAPI

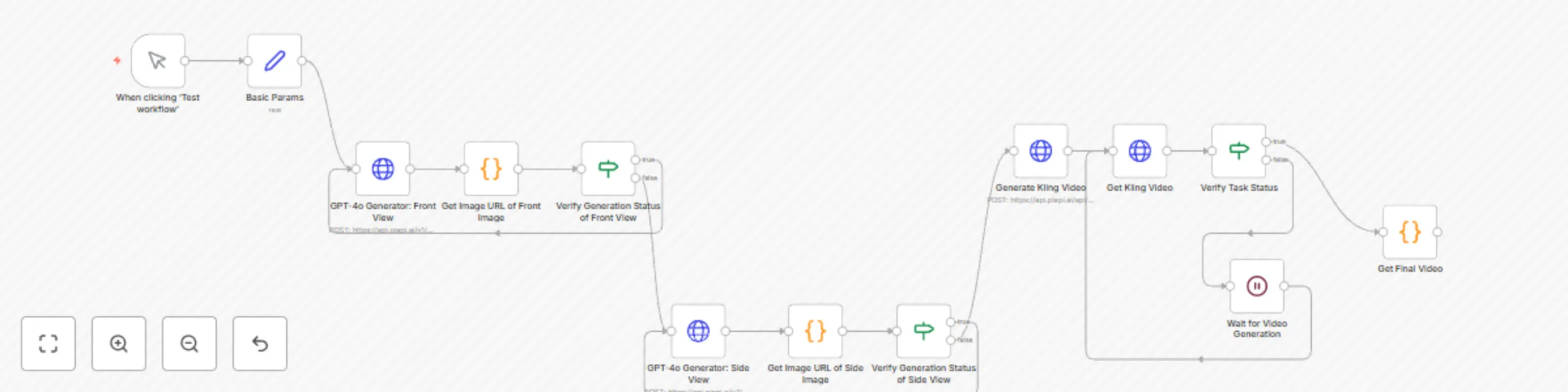

Convert 3-view drawings to 360° videos with GPT-4o-Image and Kling API

What does this workflow do? This workflow converts orthographic three-view drawings into 360° rotation videos through Pi...

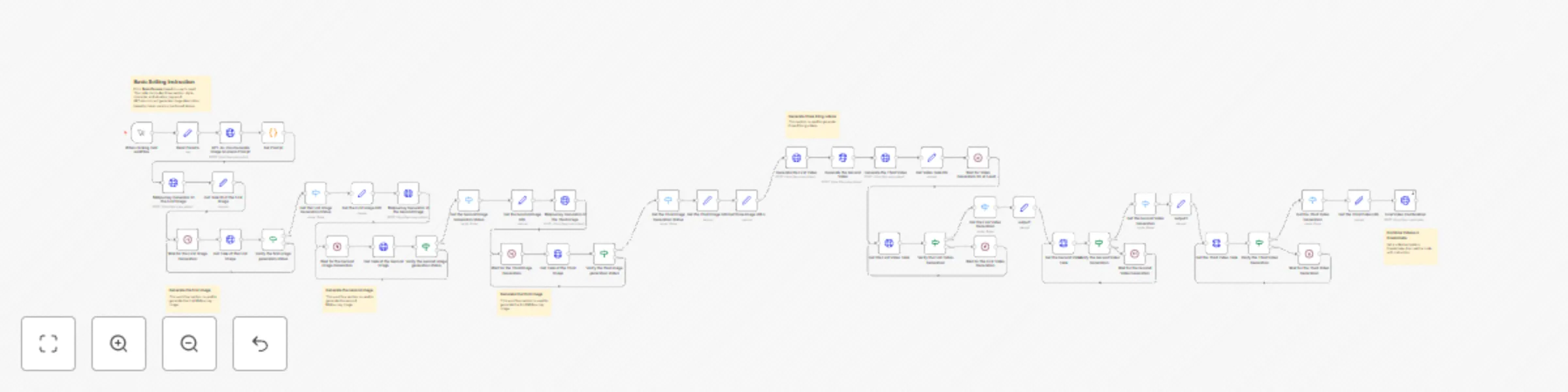

Create animated stories using GPT-4o-mini, Midjourney, Kling and Creatomate API

What does the workflow do? This workflow is designed to generate high-quality short videos, primarily using GPT 4o min...

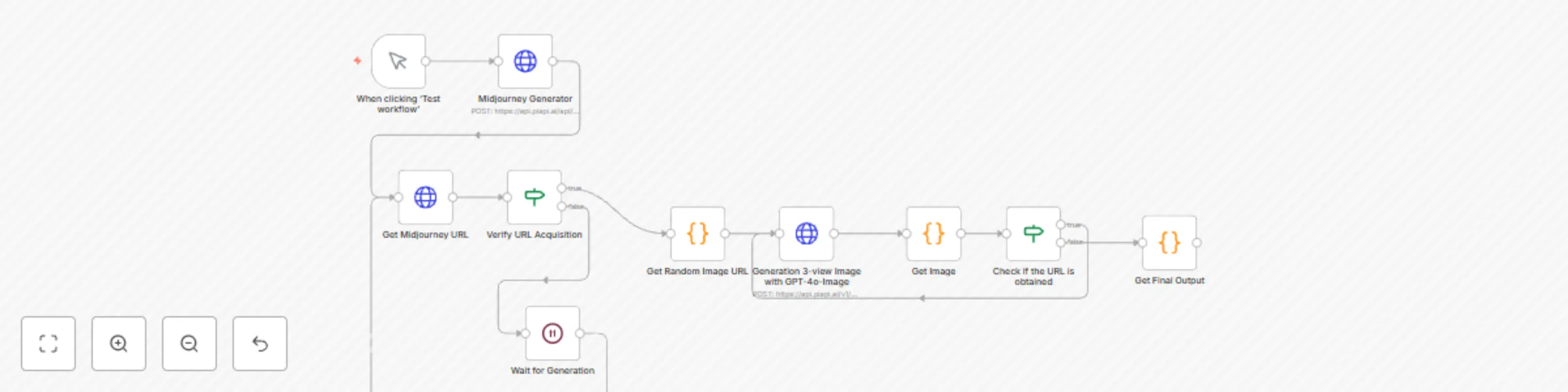

3D figurine orthographic views with Midjourney and GPT-4o-image API

What does this workflow do? This workflow primarily uses the GPT-4o API from PiAPI and automatically creates front/side/...

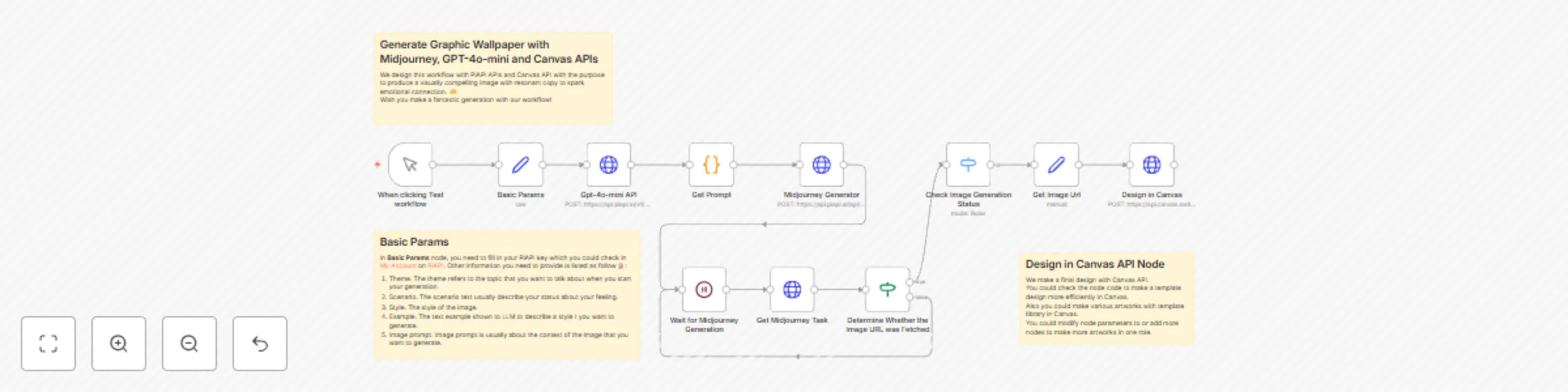

Generate graphic wallpaper with Midjourney, GPT-4o-mini and Canvas APIs

Who is the template for? This workflow is specifically designed for content creators and social media professionals,...

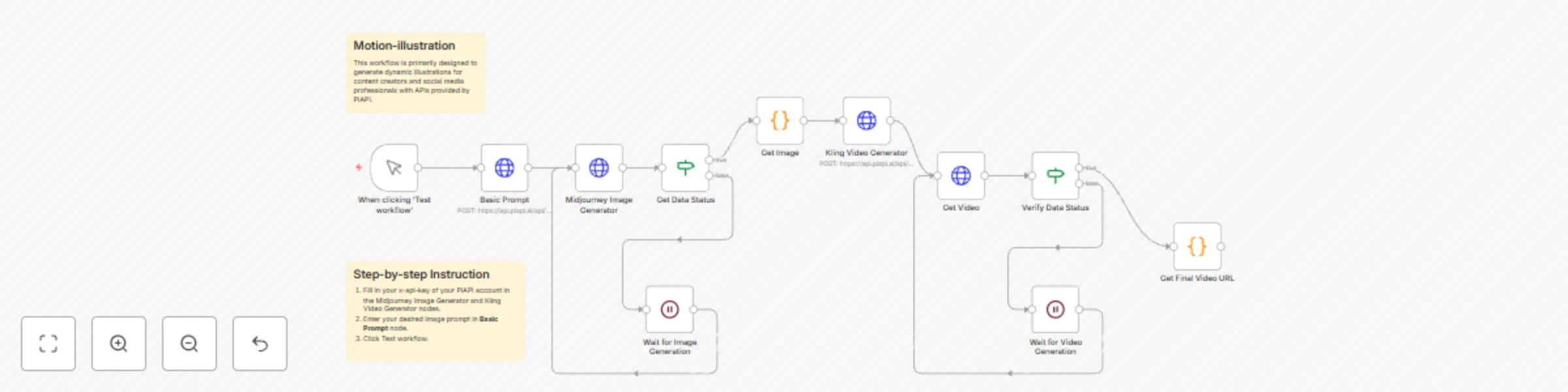

Create animated illustrations from text prompts with Midjourney and Kling API

What does the workflow do? This workflow is primarily designed to generate animated illustrations for content creator...

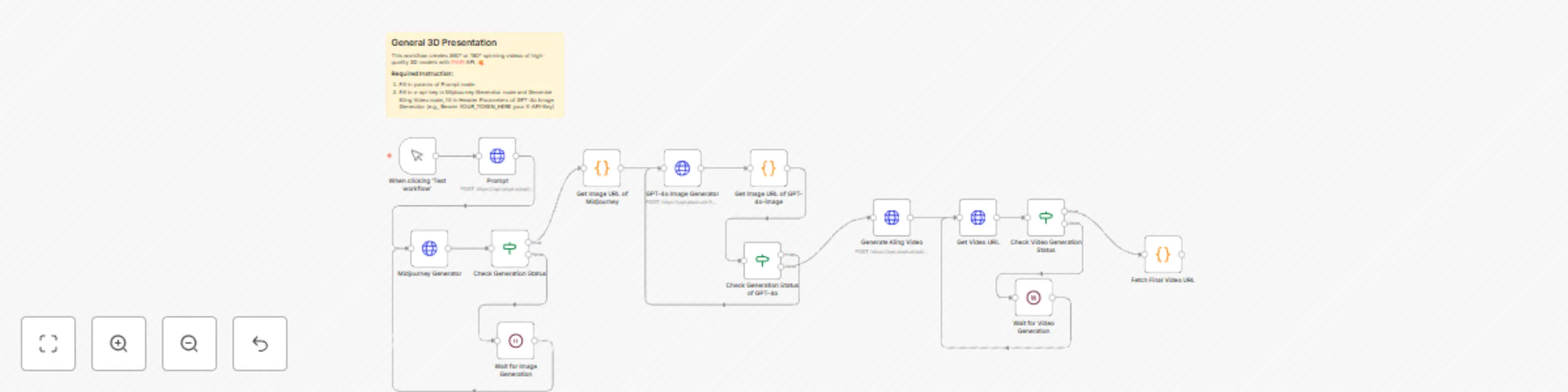

General 3D presentation workflow with Midjourney, GPT-4o-image and Kling APIs

Who is this template for? This workflow creates 360° or 180° spinning videos of high-quality 3D models with PiAPI API...

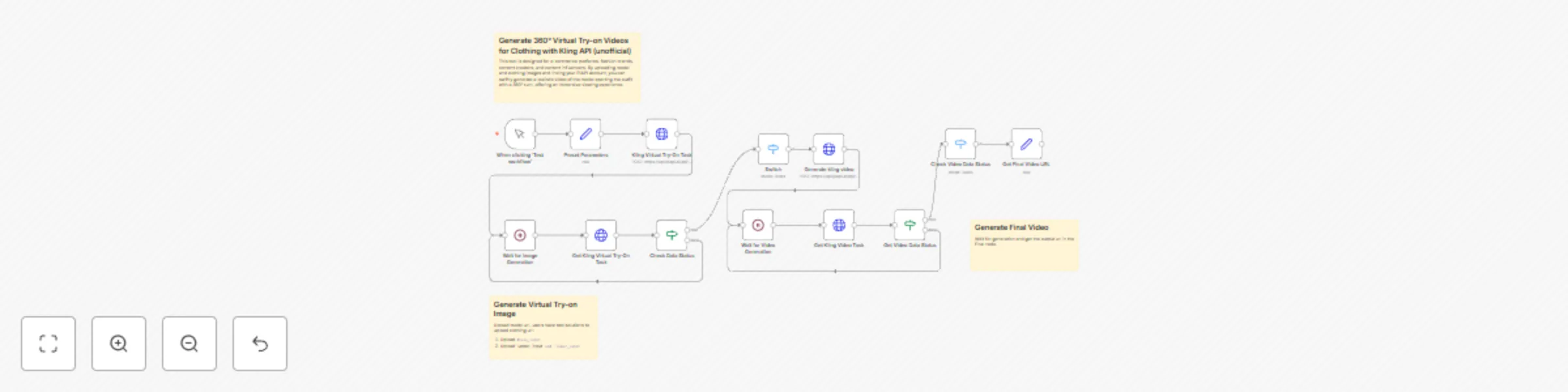

Generate 360° virtual try-on videos for clothing with Kling API (unofficial)

What's the workflow used for? Leverage this workflow, built on the Kling API (unofficial) provided by PiAPI, to streamline virtual t...