Davide

Workflows by Davide

Translate 🎙️and upload dubbed YouTube videos 📺 using ElevenLabs AI Dubbing

This workflow automates the end-to-end process of **video dubbing** with **ElevenLabs**, storage on Google Drive, and publishing on **YouTube**. It is ideal for creators, agencies, and media teams that need to **translate, process, and publish** large volumes of video content consistently.

For this workflow, I started from my [Italian YouTube Short](https://iframe.mediadelivery.net/play/580928/c445daec-e3fe-4019-b035-58ac3bf386dd); running it through the same workflow produced this [English version](https://iframe.mediadelivery.net/play/580928/2179db44-e7e2-43e6-82a1-13b12e18ba8b).

---

### Key Advantages

#### 1. ✅ Full Automation of Video Localization
The entire process, from video download to AI dubbing and publishing, is automated, eliminating manual steps and reducing human error.

#### 2. ✅ Fast Multilingual Content Scaling
With AI-powered dubbing, the same video can be quickly localized into different languages, enabling global audience expansion.

#### 3. ✅ Efficient Time Management
The workflow waits for the dubbing process to finish using a wait time derived from the expected dubbing duration, avoiding unnecessary retries or failures.

#### 4. ✅ Centralized Content Distribution
A single workflow handles storage, social posting, and YouTube uploads, simplifying content operations across platforms.

#### 5. ✅ Reduced Operational Costs
Automating dubbing and publishing significantly lowers costs compared to manual voiceovers, video editing, and uploads.

#### 6. ✅ Easy Customization & Reusability
Parameters such as video URL, language, title, and platform can be easily changed, making the workflow reusable for different projects or clients.

---

### **How It Works**

1. The workflow begins with a manual trigger that sets the input parameters: a video URL and the target language for dubbing (e.g., `en` for English).
2. The video is fetched from the provided URL via an HTTP request.
3. The video file is sent to the **ElevenLabs Dubbing API**, which starts dubbing the audio into the specified target language.
4. The workflow then waits for a calculated duration (`expected_duration_sec` + 120 seconds) to give the dubbing process time to complete.
5. After the wait, it checks the dubbing status using the `dubbing_id` and retrieves the final dubbed file.
6. The dubbed video is then processed in parallel:
   - Uploaded to **Google Drive** in a designated folder.
   - Uploaded to **Postiz** for social media management.
   - Uploaded via the **Upload-Post.com API** for YouTube publishing.
7. Finally, the workflow triggers a **Postiz** node to schedule or publish the content to YouTube with the prepared metadata.

---

### **Set Up Steps**

1. **Configure Input Parameters**
   In the *Set params* node, define:
   - `video_url`: Direct URL to the source video.
   - `target_audio`: Language code (e.g., `en`, `es`, `fr`) for dubbing.
2. **Set Up Credentials**
   Ensure the following credentials are configured in n8n:
   - **[ElevenLabs API](https://try.elevenlabs.io/ahkbf00hocnu)** (for dubbing)
   - **Google Drive OAuth2** (for file upload)
   - **[Postiz API](https://affiliate.postiz.com/n3witalia)** (for social media scheduling)
   - **[Upload-Post.com API](https://www.upload-post.com/?linkId=lp_144414&sourceId=n3witalia&tenantId=upload-post-app)** (for YouTube upload)
3. **Adjust Wait Time**
   Modify the *Wait* node if needed: `expected_duration_sec + 120` leaves a 120-second buffer beyond the expected dubbing time. Adjust it based on video length.
4. **Customize Upload Destinations**
   Update folder IDs (Google Drive) and platform settings (Upload-Post.com) as needed.
5. **Set Post Content**
   In the *Youtube Postiz* and *Youtube Upload-Post* nodes, replace `YOUR_CONTENT` and `YOUR_USERNAME` with actual titles, descriptions, and channel details.
6. **Activate and Test**
   Activate the workflow in n8n, click *Execute workflow*, and monitor the execution for errors. Ensure all API keys and permissions are valid.
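The wait-then-check logic of steps 4 and 5 can be sketched in Python as below. This is a minimal sketch, not the workflow's node configuration: it assumes the job status is read from a `status` field whose final values are `"dubbed"` or `"failed"`, and `get_status` is a hypothetical callback you would implement (for example, an HTTP call to the ElevenLabs dubbing endpoint using the `dubbing_id`). The short retry loop after the initial wait is an added safeguard; the workflow itself waits once and then checks.

```python
import time
from typing import Callable

# Assumed final states of an ElevenLabs dubbing job; verify against the current API docs.
FINAL_STATES = ("dubbed", "failed")


def dubbing_wait_seconds(expected_duration_sec: float, buffer_sec: float = 120) -> float:
    """Initial wait, mirroring the Wait node: expected_duration_sec + 120."""
    return expected_duration_sec + buffer_sec


def wait_for_dubbing(
    dubbing_id: str,
    expected_duration_sec: float,
    get_status: Callable[[str], str],  # hypothetical: returns the job's status string
    sleep: Callable[[float], None] = time.sleep,
    retry_sec: float = 60,
    max_checks: int = 5,
) -> str:
    """Wait once for the computed duration, then re-check until the job reaches a final state."""
    sleep(dubbing_wait_seconds(expected_duration_sec))
    for _ in range(max_checks):
        status = get_status(dubbing_id)
        if status in FINAL_STATES:
            return status
        sleep(retry_sec)  # still processing: give it a little more time
    raise TimeoutError(f"dubbing {dubbing_id} not finished after {max_checks} checks")


if __name__ == "__main__":
    # Simulated job that is still running on the first check and done on the second.
    replies = iter(["dubbing", "dubbed"])
    print(wait_for_dubbing("demo-id", 45, lambda _id: next(replies), sleep=lambda s: None))
```

Injecting `sleep` and `get_status` keeps the timing logic testable without real waits or API calls.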
---

👉 [Subscribe to my new **YouTube channel**](https://youtube.com/@n3witalia). Here I’ll share videos and Shorts with practical tutorials and **FREE templates for n8n**.

---

### **Need help customizing?**
[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).

Scrape Trustpilot reviews 📊 with ScrapegraphAI and OpenAI Reputation analysis

This workflow automates the **collection, analysis, and reporting of Trustpilot reviews** for a specific company, transforming unstructured customer feedback into **structured insights and actionable intelligence**.

---

### Key Advantages

#### 1. ✅ End-to-End Automation
The entire process, from scraping reviews to delivering a polished management report, is fully automated, eliminating manual data collection and analysis.

#### 2. ✅ Structured Insights from Unstructured Data
The workflow transforms raw, unstructured review text into structured fields and standardized sentiment categories, making the analysis reliable and repeatable.

#### 3. ✅ Company-Level Reputation Intelligence
Instead of focusing on individual products, the analysis evaluates the **overall brand, service quality, customer experience, and operational performance**, which is critical for leadership and strategy teams.

#### 4. ✅ Action-Oriented Outputs
The AI-generated report goes beyond summaries by:
* Identifying reputational risks
* Highlighting improvement opportunities
* Proposing concrete actions with priorities, effort estimates, and KPIs

#### 5. ✅ Visual & Executive-Friendly Reporting
Automatic sentiment charts and structured executive summaries make insights immediately understandable for non-technical stakeholders.

#### 6. ✅ Scalable and Configurable
* Easily adaptable to different companies or review volumes
* Page limits and batching protect against rate limits and excessive API usage

#### 7. ✅ Cross-Team Value
The output is tailored for multiple internal teams:
* Management
* Marketing
* Customer Support
* Operations
* Product & UX

---

### Ideal Use Cases
* Brand reputation monitoring
* Voice-of-the-customer programs
* Executive reporting
* Customer experience optimization
* Competitive benchmarking (by reusing the workflow across brands)

---

### **How It Works**

This workflow automates the complete process of scraping Trustpilot reviews, extracting structured data, analyzing sentiment, and generating reports, in this sequence:

1. **Trigger & Configuration**: The workflow starts with a manual trigger, where you set the target company URL and the number of review pages to scrape.
2. **Review Scraping**: An HTTP Request node fetches review pages from Trustpilot with pagination support and extracts the review links from the HTML content.
3. **Review Processing**: Individual review pages are processed in batches (limited to 5 reviews per execution to control cost and run time). Each review page is converted to clean Markdown using ScrapegraphAI.
4. **Data Extraction**: An Information Extractor using OpenAI's GPT-4.1-mini model parses the Markdown and extracts structured review data: author, rating, date, title, text, review count, and country.
5. **Sentiment Analysis**: A second OpenAI model classifies each review text as Positive, Neutral, or Negative.
6. **Data Aggregation**: Processed reviews are collected and compiled into a structured dataset.
7. **Analytics & Visualization**:
   - A pie chart is generated showing the sentiment distribution.
   - An AI agent produces a reputation analysis report that evaluates company-level insights and recurring themes and provides actionable recommendations.
8. **Reporting & Delivery**: The analysis is converted to HTML and sent via email, giving stakeholders immediate insight into customer feedback and company reputation.

### **Set Up Steps**

To configure and run this workflow:

1. **Credential Setup**:
   - Configure OpenAI API credentials for the chat models and information extraction
   - Set up ScrapegraphAI credentials for webpage-to-Markdown conversion
   - Configure Gmail OAuth2 credentials for email notifications
2. **Company Configuration**:
   - In the "Set Parameters" node, set `company_id` to the target Trustpilot company URL
   - Adjust `max_page` to control how many review pages to scrape
3. **Review Processing Limits**:
   - The "Limit" node restricts processing to 5 reviews per execution to manage API costs and processing time
   - Adjust this value based on your needs and OpenAI usage limits
4. **Email Configuration**:
   - Update the "Send a message" node with the recipient email address
   - Customize the email subject and content as needed
5. **Analysis Customization**:
   - Modify the prompt in the "Company Reputation Analyst" node to tailor the report format
   - Adjust the sentiment analysis categories if a different classification is needed
6. **Execution**:
   - Click "Test workflow" to execute the manual trigger
   - Monitor the execution in the n8n editor to ensure all API calls succeed
   - Check the configured email inbox for the generated report

**Note**: Be mindful of API rate limits and of the costs of the OpenAI and ScrapegraphAI services when processing large numbers of reviews. The workflow includes a 5-second delay between paginated requests to comply with Trustpilot's terms of service.
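Steps 2 and 3 (paginated scraping with a pause between requests, link extraction, and the 5-review Limit) can be sketched in Python. This is a minimal illustration under stated assumptions, not the workflow's node configuration: it assumes `company_id` is the company's Trustpilot slug (for example its domain), that listing pages use a `?page=N` query parameter, and that individual review links appear as `href="/reviews/<id>"`. Trustpilot's markup can change, so treat the regular expression as a placeholder. `fetch` is a hypothetical callback that returns a page's HTML.

```python
import re
import time
from typing import Callable, List

BASE = "https://www.trustpilot.com"
# Assumed link pattern for single reviews; adjust it to the markup you actually receive.
REVIEW_LINK_RE = re.compile(r'href="(/reviews/[A-Za-z0-9]+)"')


def page_urls(company_id: str, max_page: int) -> List[str]:
    """One listing URL per page; page 1 has no query string."""
    base = f"{BASE}/review/{company_id}"
    return [base if n == 1 else f"{base}?page={n}" for n in range(1, max_page + 1)]


def review_links(html: str) -> List[str]:
    """Unique absolute review URLs, in the order they appear in the page."""
    links: List[str] = []
    for path in REVIEW_LINK_RE.findall(html):
        url = BASE + path
        if url not in links:
            links.append(url)
    return links


def collect_review_links(
    company_id: str,
    max_page: int,
    fetch: Callable[[str], str],  # hypothetical: returns the HTML of a URL
    sleep: Callable[[float], None] = time.sleep,
    limit: int = 5,
    delay_sec: float = 5,
) -> List[str]:
    """Scrape listing pages with a pause between requests, then apply the Limit."""
    links: List[str] = []
    for i, url in enumerate(page_urls(company_id, max_page)):
        if i > 0:
            sleep(delay_sec)  # 5-second pause between paginated requests
        for link in review_links(fetch(url)):
            if link not in links:
                links.append(link)
    return links[:limit]
```

Deduplicating across pages before applying the limit keeps the 5 processed reviews distinct even when a review appears on more than one listing page.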

WooCommerce 🛒 Product Review Sentiment Analysis and AI Report 🤖 for Improvement

This workflow automates the **end-to-end analysis of WooCommerce product reviews**, transforming raw customer feedback into **actionable product and customer-care insights** and delivering them in a structured, visual, and shareable format.

It starts by retrieving the reviews for a specified product via the WooCommerce REST API. Each review then goes through AI sentiment analysis with the LangChain Sentiment Analysis node. The workflow aggregates the sentiment data, creates a pie chart visualization via QuickChart, and compiles a comprehensive report using an AI Agent. The report includes an executive summary, quantitative data, qualitative analysis, product diagnostics, and operational recommendations. Finally, the **AI-generated report** is converted to HTML and emailed to a designated recipient for review by the customer care and product teams.

---

### Key Advantages

#### 1. ✅ Full Automation of Review Analysis
Eliminates manual work by automating data collection, sentiment analysis, reporting, visualization, and delivery in a single workflow.

#### 2. ✅ Scalable and Reliable
Batch processing lets the workflow handle **dozens or hundreds of reviews** without performance issues.

#### 3. ✅ Action-Oriented Insights (Not Just Sentiment)
Instead of stopping at sentiment scores, the workflow produces:
* Root-cause hypotheses
* Concrete improvement actions
* Prioritized recommendations (P0 / P1 / P2)
* Measurable KPIs

#### 4. ✅ Combines Quantitative and Qualitative Analysis
Merges hard metrics (averages, distributions, outliers) with qualitative insights (themes, risks, opportunities), giving a **360° view of customer feedback**.

#### 5. ✅ Visual + Narrative Output
Stakeholders receive both:
* **Visual sentiment charts** for quick understanding
* **Structured written reports** for strategic decision-making

#### 6. ✅ Ready for Product & Customer Care Teams
The output format is tailored for non-technical teams:
* Clear language
* Masked personal data (GDPR-friendly)
* Immediate usability in meetings, emails, or documentation

#### 7. ✅ Easily Extensible
The workflow can be extended to:
* Run on a schedule
* Analyze multiple products
* Store results in a database or CRM
* Trigger alerts for negative sentiment spikes

#### Ideal Use Cases
* Continuous monitoring of product sentiment
* Supporting product roadmap decisions
* Identifying customer pain points early
* Improving customer support response strategies
* Reporting the voice of the customer to stakeholders automatically

---

### How it works

1. **Manual Trigger & Configuration**
   The workflow starts manually and sets the target **WooCommerce product ID** and **store URL**.
2. **Data Retrieval from WooCommerce**
   * Fetches **all reviews** for the selected product via the WooCommerce REST API.
   * Retrieves **product details** (name, description, categories) to enrich the analysis context.
3. **Batch Processing of Reviews**
   Reviews are processed in batches for scalability and reliability, even with a large number of reviews.
4. **AI-Powered Sentiment Analysis**
   * Each review is analyzed with an OpenAI-based sentiment analysis model.
   * For every review, the workflow extracts:
     * Sentiment category (Positive / Negative / Neutral)
     * Strength (intensity)
     * Confidence (reliability of the classification)
5. **Data Normalization & Aggregation**
   * Review text is cleaned and structured.
   * Sentiment data is aggregated to compute overall distributions and metrics.
6. **Visual Sentiment Distribution**
   * A pie chart is dynamically generated via QuickChart to represent the sentiment distribution.
7. **Advanced AI Insight Generation**
   A specialized AI agent (“Product Insights Analyst”) turns the raw and aggregated data into a **professional, structured report**, including:
   * Executive summary
   * Quantitative statistics
   * Qualitative themes
   * Product diagnosis
   * Operational recommendations
   * Product backlog ideas
   * Next steps
8. **HTML Conversion & Delivery**
   * The report is converted into clean HTML.
   * The final output is automatically emailed to stakeholders (e.g., product or customer care teams).

---

### Set up steps

1. **Configure credentials**:
   - Set up WooCommerce API credentials in the HTTP Request node.
   - Add OpenAI API credentials for both sentiment analysis and reporting.
   - Configure Gmail OAuth2 credentials for sending the final email report.
2. **Set parameters**:
   - In the "Product ID" node, replace `PRODUCT_ID` and `YOUR_WEBSITE` with the actual product ID and WooCommerce site URL.
   - Update the recipient email address in the "Send a message" node.
3. **Optional adjustments**:
   - Modify the pie chart design in the "QuichChart" node if needed.
   - Adjust the report structure or language in the "Product Insights Analyst" system prompt.
4. **Run the workflow**:
   - Click "Execute workflow" on the manual trigger to start the process.
   - Monitor the execution in n8n to ensure all nodes process correctly.

Once configured, the workflow automatically analyzes the product reviews, generates insights, and delivers a formatted report via email.
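The review retrieval (step 2), the aggregation (step 5), and the QuickChart pie (step 6) can be sketched in Python. This is a minimal sketch, not the workflow's exact nodes: it uses the WooCommerce REST API v3 reviews endpoint (`/wp-json/wc/v3/products/reviews` filtered by `product`), assumes the per-review sentiment results carry `sentiment`, `strength`, and `confidence` fields, and builds a deliberately minimal Chart.js pie config in QuickChart's `https://quickchart.io/chart?c=<config>` URL form.

```python
import json
from collections import Counter
from typing import Dict, List
from urllib.parse import quote, urlencode

LABELS = ("Positive", "Neutral", "Negative")


def reviews_url(store_url: str, product_id: int, page: int = 1, per_page: int = 100) -> str:
    """WooCommerce REST API v3 URL for one page of a product's reviews."""
    query = urlencode({"product": product_id, "per_page": per_page, "page": page})
    return f"{store_url.rstrip('/')}/wp-json/wc/v3/products/reviews?{query}"


def sentiment_summary(reviews: List[Dict]) -> Dict:
    """Aggregate per-review sentiment results (assumed field names) into counts and averages."""
    counts = Counter(r["sentiment"] for r in reviews)
    n = len(reviews)

    def avg(key: str) -> float:
        return round(sum(r[key] for r in reviews) / n, 2) if n else 0.0

    return {
        "total": n,
        "counts": {label: counts.get(label, 0) for label in LABELS},
        "avg_strength": avg("strength"),
        "avg_confidence": avg("confidence"),
    }


def quickchart_pie_url(counts: Dict[str, int]) -> str:
    """Encode a minimal Chart.js pie config as a QuickChart image URL."""
    config = {
        "type": "pie",
        "data": {"labels": list(counts), "datasets": [{"data": list(counts.values())}]},
    }
    return "https://quickchart.io/chart?c=" + quote(json.dumps(config, separators=(",", ":")))
```

Keeping all three labels in the counts (even at zero) gives the chart a stable legend across products.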

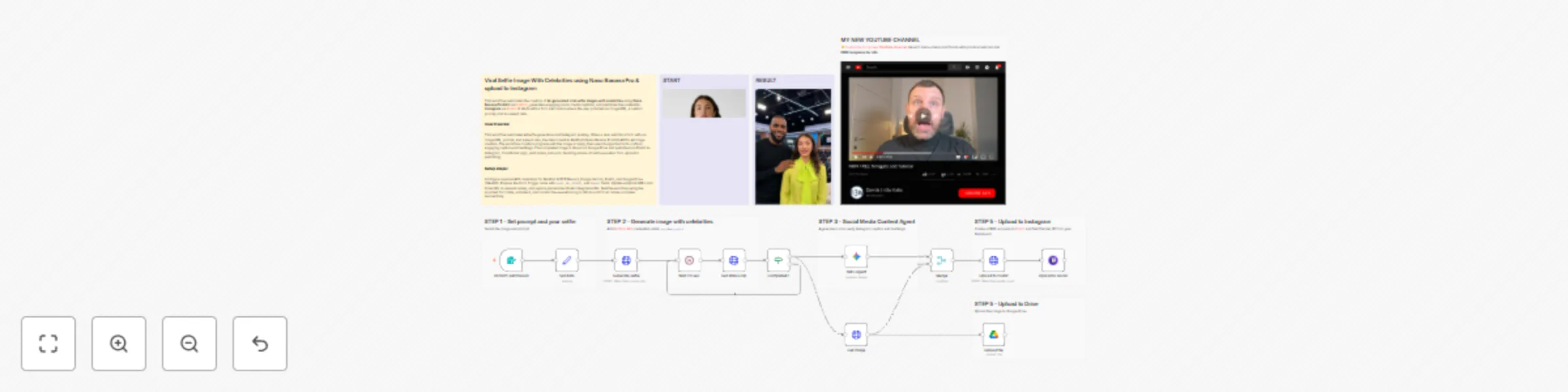

Create Viral 😎 AI celebrity selfies 📸 with Nano Banana Pro & upload to Instagram

This workflow automates the creation of **AI-generated viral selfie images with celebrities** using **Nano Banana Pro Edit** via [RunPod](https://get.runpod.io/n3witalia), generates engaging social media captions, and publishes the content to **Instagram** via [Postiz](https://affiliate.postiz.com/n3witalia). It starts with a form submission where the user provides an image URL, a custom prompt, and an aspect ratio.

---

### Key Advantages

#### 1. ✅ Full Automation, Zero Manual Effort
From image generation to caption writing and publishing, the entire process is automated. This drastically reduces production time and eliminates repetitive manual tasks.

#### 2. ✅ Scalable Content Creation
The workflow can process a continuous stream of form submissions, making it ideal for:
* Creators
* Agencies
* Growth teams
* SaaS products offering AI-generated content

#### 3. ✅ Consistent Viral Quality
By using a dedicated AI content agent with strict guidelines, every post is:
* Optimized for engagement
* Consistent in tone and quality
* Designed to maximize comments, shares, and saves

#### 4. ✅ No Technical Skills Required for End Users
The form-based entry point lets anyone generate high-quality, celebrity-style content without understanding AI, APIs, or automation.

#### 5. ✅ Multi-Tool Integration in One Pipeline
The workflow connects:
* AI image generation (RunPod)
* AI content intelligence (Google Gemini)
* Asset storage (Google Drive)
* Social media distribution (Postiz)

#### 6. ✅ Brand-Safe and Platform-Native Output
The captions are written to feel human and authentic, avoiding:
* Obvious AI language
* Overuse of emojis
* Mentions of AI generation

This increases trust and platform compatibility.

#### 7. ✅ Perfect for Growth and Monetization
This workflow is ideal for:
* Viral growth experiments
* Personal brand scaling
* Automated influencer-style content
* AI-powered SaaS or lead magnets

---

### How it works

After the form submission, the workflow:
1. Sends the image and prompt to RunPod’s Nano Banana Pro Edit API for AI image generation.
2. Periodically checks the generation status until it is completed.
3. Once the image is ready, downloads it and has Google Gemini analyze it to generate a viral-ready Instagram caption and hashtags.
4. Uploads the final image to Google Drive and to Postiz for social media publishing.
5. Combines the caption and image and schedules the post on Instagram through the Postiz integration.

The process includes conditional logic, waiting intervals, and error handling for reliable execution from input to publication.

---

### Set up steps

To use this workflow in n8n:

1. **Configure credentials**:
   - Add [RunPod API](https://get.runpod.io/n3witalia) credentials under `httpBearerAuth` named “Runpods”.
   - Set up Google Gemini (PaLM) API credentials for caption generation.
   - Add [Postiz API](https://affiliate.postiz.com/n3witalia) credentials for social media posting.
   - Configure Google Drive OAuth2 credentials for image backup.
2. **Prepare nodes**:
   - Ensure the Form Trigger node is set up with the required fields: `IMAGE_URL`, `PROMPT`, and `FORMAT`.
   - Update the RunPod API endpoints in the “Generate selfie” and “Get status clip” nodes if needed.
   - Verify the Google Drive folder ID in the “Upload file” node.
   - Replace `XXX` in the “Upload to Social” node with a valid Postiz integration ID.
3. **Test the flow**:
   - Use the pinned test data in the “On form submission” node to simulate a form entry.
   - Activate the workflow and submit the form to trigger the process.
   - Monitor the execution in n8n’s workflow view to ensure all nodes run successfully.
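Step 2 (checking the generation status until it completes) is a standard polling loop. Below is a minimal Python sketch. It assumes a RunPod serverless-style job whose status response carries a `status` field that ends as `COMPLETED`, `FAILED`, `CANCELLED`, or `TIMED_OUT`. `get_status` is a hypothetical callback that would call the endpoint configured in the “Get status clip” node with your bearer token. The interval and attempt cap are illustrative values, not the workflow's settings.

```python
import time
from typing import Callable, Dict

# Assumed terminal states of a RunPod serverless job.
TERMINAL_STATES = {"COMPLETED", "FAILED", "CANCELLED", "TIMED_OUT"}


def poll_job(
    job_id: str,
    get_status: Callable[[str], Dict],  # hypothetical: returns the parsed status JSON
    sleep: Callable[[float], None] = time.sleep,
    interval_sec: float = 10,
    max_attempts: int = 30,
) -> Dict:
    """Check the job status until it reaches a terminal state; return the completed job."""
    for _ in range(max_attempts):
        job = get_status(job_id)
        status = job.get("status")
        if status == "COMPLETED":
            return job
        if status in TERMINAL_STATES:
            raise RuntimeError(f"job {job_id} ended with status {status}")
        sleep(interval_sec)  # IN_QUEUE / IN_PROGRESS: wait before the next check
    raise TimeoutError(f"job {job_id} still running after {max_attempts} checks")
```

Raising on failure states, rather than looping until the cap, surfaces a rejected generation immediately instead of after the full polling budget.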

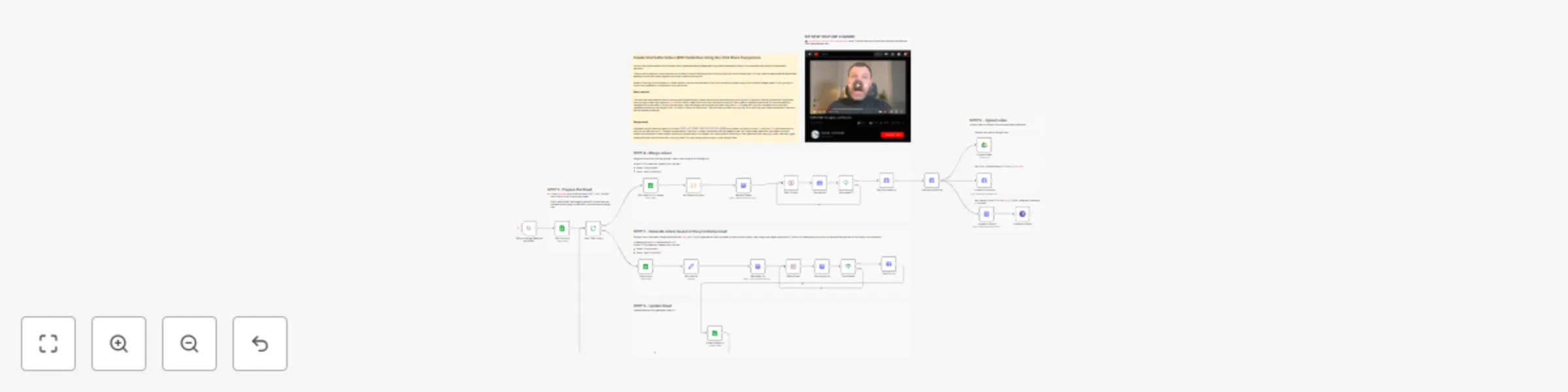

Create AI Viral Selfie videos 🎬 with celebrities 😎 using Google Veo 3.1

This workflow demonstrates how to create **viral AI-generated selfie videos featuring famous characters** with a fully automated, platform-independent approach. It is designed to replicate the celebrity selfie videos currently going viral on social media and YouTube, in which a **realistic selfie-style video** appears to show the creator together with a well-known **public figure**.

Instead of relying on a proprietary or closed platform, the workflow builds the entire pipeline on direct API access to **Google Veo 3.1** (here through fal.ai), giving you full control over generation, orchestration, and distribution.

---

### Key Advantages

#### 1. ✅ Fully automated video pipeline
From prompt to final published video, the entire process runs without manual intervention.

#### 2. ✅ Spreadsheet-driven control
Non-technical users can manage video production simply by editing Google Sheets:
* Add new prompts
* Adjust duration
* Control merge logic

#### 3. ✅ Scalable and modular
* Supports batch processing of many videos
* Easy to extend with new AI models, platforms, or output formats

#### 4. ✅ Reliable async handling
* Built-in wait and status-check logic makes the workflow robust
* Prevents failures caused by long-running AI jobs

#### 5. ✅ Centralized asset management
* Automatically stores video URLs and statuses
* Keeps production data organized and auditable

#### 6. ✅ Multi-platform ready
* One generated video can be reused for:
  * YouTube
  * TikTok
  * Instagram
  * Other social channels

#### 7. ✅ Cost and time efficiency
* Eliminates repetitive manual video editing
* Reduces production time from hours to minutes

#### Ideal Use Cases
* AI-generated storytelling videos
* Social media content automation
* Marketing video campaigns
* Short-form video experiments at scale
* Faceless or semi-automated content channels

---

### **How it Works**

This workflow generates short video clips with AI, merges them into a final video, and optionally uploads the result to multiple platforms.

1. **Trigger & Data Fetching**
   The workflow starts with a manual trigger. It reads a Google Sheet containing prompts, image URLs (first and last frames), and duration settings for each video clip to be generated.
2. **Video Clip Generation**
   For each row in the sheet, the workflow calls the **fal.ai Veo 3.1 API** to generate a video clip from the provided prompt, start image, end image, and duration. Clips are created asynchronously, so the workflow polls the API until each job completes.
3. **Status Polling & URL Retrieval**
   Once a clip is marked as `COMPLETED`, its video URL is fetched and written back to the corresponding row of the Google Sheet.
4. **Video Merging**
   After all clips are generated, the workflow collects the video URLs from the rows marked for merging and sends them to the **fal.ai FFmpeg API** to be combined into a single video.
5. **Final Video Processing**
   The merge job is polled until ready; then the final video URL is retrieved and the file is downloaded via an HTTP request.
6. **Upload & Distribution**
   The final video can be uploaded to:
   - Google Drive
   - YouTube (via the [upload-post.com API](https://www.upload-post.com/?linkId=lp_144414&sourceId=n3witalia&tenantId=upload-post-app))
   - [Postiz](https://affiliate.postiz.com/n3witalia) (for multi-platform social media posting)

   Each upload step is currently disabled and requires configuration (usernames, titles, platform settings).
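Step 4 (collecting the clip URLs from the rows marked for merging) can be sketched in Python. This is a minimal sketch under a few assumptions. Rows are dictionaries keyed by the sheet's column names. A row counts as marked when `MERGE` holds a value such as `x` or `yes`. The request body for fal.ai's FFmpeg merge endpoint is assumed to take a `video_urls` list; check the endpoint's current schema before relying on it.

```python
from typing import Dict, List

# Assumed values that mark a row for merging; use whatever convention your sheet follows.
MERGE_FLAGS = {"x", "yes", "true", "1"}


def merge_payload(rows: List[Dict[str, str]]) -> Dict[str, List[str]]:
    """Build the merge request body from rows flagged in the MERGE column, in sheet order."""
    urls: List[str] = []
    for sheet_row, row in enumerate(rows, start=2):  # row 1 of the sheet holds the headers
        if str(row.get("MERGE", "")).strip().lower() not in MERGE_FLAGS:
            continue
        url = str(row.get("VIDEO URL", "")).strip()
        if not url:
            raise ValueError(f"sheet row {sheet_row} is marked for merging but has no VIDEO URL yet")
        urls.append(url)
    if len(urls) < 2:
        raise ValueError("at least two clips are needed for a merge")
    return {"video_urls": urls}  # assumed field name for the fal.ai merge endpoint
```

Failing loudly on a flagged row without a URL avoids silently merging an incomplete set of clips when a generation job has not finished yet.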
**WARNING**: The workflow may stop at the video generation node with the following message:

> *Your request is invalid or could not be processed by the service [item 0]*
> *The content could not be processed because it contained material flagged by a content checker.*

This happens because images are checked both **before and after** video generation. If it occurs, you can either switch to a **less restrictive video model** while keeping the same workflow structure, or **change the source images** in the Google Sheets file.

---

### **Set Up Steps**

1. **Google Sheets Setup**
   - Prepare a Google Sheet with the columns `START`, `LAST`, `PROMPT`, `DURATION`, `VIDEO URL`, `MERGE`.
   - Connect n8n to Google Sheets using OAuth2 credentials.
2. **fal.ai API Configuration**
   - Obtain an API key from fal.ai.
   - Set up **HTTP Header Auth** credentials in n8n with the key.
3. **Upload Services Configuration**
   - **Google Drive**: Configure OAuth2 credentials and specify the target folder ID.
   - [**YouTube/upload-post.com**](https://www.upload-post.com/?linkId=lp_144414&sourceId=n3witalia&tenantId=upload-post-app): Enter your username and title in the respective node.
   - [**Postiz**](https://affiliate.postiz.com/n3witalia): Set up Postiz API credentials and configure the platform channels.
4. **Enable Required Nodes**
   - Enable the upload nodes (`Upload Video`, `Upload to Youtube`, `Upload to Postiz`, `Upload to Social`) once credentials are configured.
5. **Adjust Polling Intervals**
   - Modify the wait times (`Wait 30 sec.`, `Wait 60 sec.`) based on observed video processing times.
6. **Test Execution**
   - Start the workflow manually via the trigger node.
   - Monitor the execution in n8n’s editor and check the Google Sheet for updated video URLs.

This workflow is designed for batch video creation and merging, making it a good fit for content pipelines built on AI-generated media.

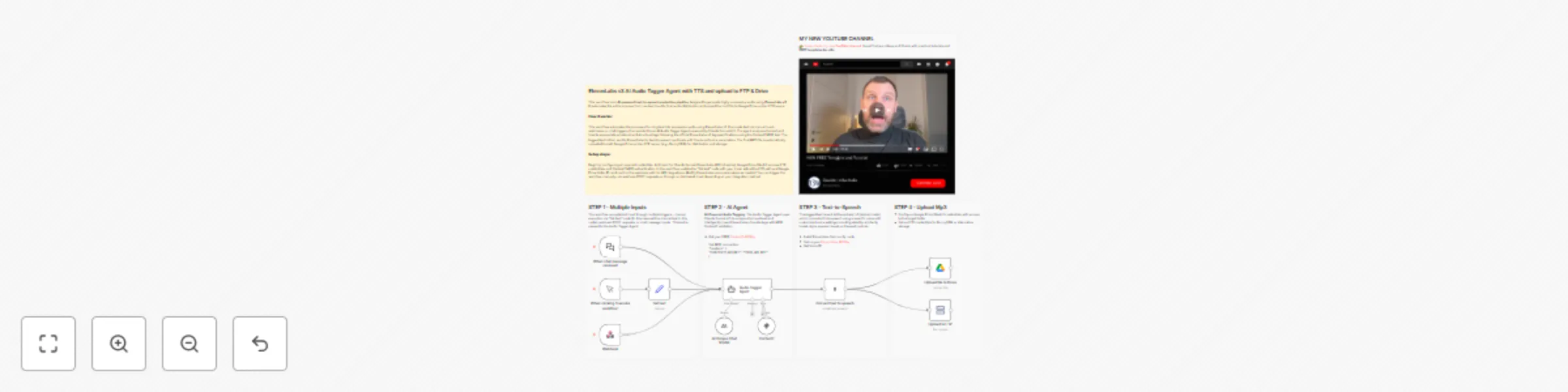

Generate highly expressive audio 🎙️ using ElevenLabs v3 TTS Audio Tags

This workflow is an **AI-powered text-to-speech production pipeline** designed to generate highly expressive audio with **ElevenLabs v3**. It automates the entire process, from raw text input to final audio distribution, and uploads the resulting MP3 file to Google Drive and to an FTP server.

---

### Key Advantages

#### 1. ✅ Cinematic-quality audio output
By combining AI-driven emotional tagging with ElevenLabs v3, the workflow produces audio that sounds **acted**, not simply read.

#### 2. ✅ Fully automated pipeline
From raw text to hosted audio file, everything is handled automatically:
* No manual tagging
* No manual uploads
* No post-processing

#### 3. ✅ Multi-input flexibility
The workflow supports:
* Manual testing
* Chat-based usage
* API/Webhook integrations

This makes it ideal for **apps, CMSs, games, and content platforms**.

#### 4. ✅ Language-agnostic
The agent preserves the **original language** of the input text and applies tags accordingly, making it suitable for **international projects**.

#### 5. ✅ Consistent and correct tagging
The use of **Context7** keeps all audio tags aligned with the **official ElevenLabs v3 specifications**, reducing errors and incompatibilities.

#### 6. ✅ Scalable and production-ready
Automatic uploads to Drive and FTP make this workflow ready for:
* Large content volumes
* CDN delivery
* Team collaboration

#### 7. ✅ Perfect for storytelling and media
The workflow is especially effective for:
* Horror and cinematic storytelling
* Audiobooks and podcasts
* Games and immersive narratives
* Voiceovers with emotional depth

---

### How it Works

1. **Text Input & Processing**: The workflow accepts text through multiple triggers: manual execution via the "Set text" node, webhook POST requests, or chat messages. The text is passed to the Audio Tagger Agent.
2. **AI-Powered Audio Tagging**: The Audio Tagger Agent uses Claude Sonnet 4.5 to analyze the input text and insert ElevenLabs v3 audio tags. The agent follows strict rules: it preserves the original meaning, adds tags for pauses, rhythm, emphasis, emotional tone, breathing, laughter, and delivery variations, and keeps the output in the original language.
3. **Reference Validation**: During tagging, the agent consults the Context7 MCP tool, which provides access to the official ElevenLabs v3 audio tags guide, to ensure correct and consistent tag usage.
4. **Text-to-Speech Conversion**: The tagged text is sent to ElevenLabs' v3 (alpha) model, which converts it into speech using a specific voice with custom voice settings: stability, similarity boost, style, speaker boost, and speed.
5. **Dual Output Distribution**: The generated audio file is uploaded to two destinations at the same time: Google Drive (a folder named "Elevenlabs") and an FTP server (BunnyCDN), so the file is stored in both places.

---

### Set Up Steps

1. **Prerequisite Configuration**:
   - Configure Anthropic API credentials for Claude Sonnet access
   - Set up [ElevenLabs API](https://try.elevenlabs.io/ahkbf00hocnu) credentials with access to the v3 (alpha) models
   - Configure Google Drive OAuth2 credentials with access to the target folder
   - Set up FTP credentials for BunnyCDN or alternative storage
   - Configure the Context7 MCP tool with the appropriate authentication headers
2. **Workflow-Specific Setup**:
   - In the "Set text" node, replace "YOUR TEXT" with the default text you want to process (for manual execution)
   - In the "Upload to FTP" node, change the path "/YOUR_PATH/" to your actual FTP directory
   - Verify that the Google Drive folder ID points to your intended destination folder
   - Make sure the webhook path is correctly configured for external integrations
   - Adjust the voice parameters in the ElevenLabs node if you want different voice characteristics
3. **Execution Options**:
   - For one-time processing: use the manual trigger and set the text in the "Set text" node
   - For API integration: use the webhook endpoint to receive text via POST requests
   - For chat-based interaction: use the chat trigger for conversational text input
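Step 4 can be pictured as a single HTTP request. The Python sketch below only builds that request (URL, headers, and JSON body) and lists the bracketed audio tags the agent inserted; it does not send anything. It is an illustration under assumptions, not the ElevenLabs node's internals. It targets the public `POST /v1/text-to-speech/{voice_id}` endpoint with the `xi-api-key` header, and it assumes `eleven_v3` as the v3 model ID and `mp3_44100_128` as the output format. The voice-setting values are placeholders, not the workflow's settings.

```python
import json
import re
from typing import Dict, List, Tuple

# Audio tags are written in square brackets, e.g. "[whispers]" or "[laughs]".
TAG_RE = re.compile(r"\[([^\[\]]+)\]")


def audio_tags(tagged_text: str) -> List[str]:
    """Return the audio tags found in the tagged text, in order of appearance."""
    return [tag.strip() for tag in TAG_RE.findall(tagged_text)]


def build_tts_request(
    tagged_text: str,
    voice_id: str,
    api_key: str,
    model_id: str = "eleven_v3",  # assumed model ID for ElevenLabs v3 (alpha)
    stability: float = 0.5,
    similarity_boost: float = 0.75,
    style: float = 0.0,
    use_speaker_boost: bool = True,
    speed: float = 1.0,
) -> Tuple[str, Dict[str, str], bytes]:
    """Build URL, headers, and JSON body for a text-to-speech call that returns an MP3."""
    url = f"https://api.elevenlabs.io/v1/text-to-speech/{voice_id}?output_format=mp3_44100_128"
    headers = {"xi-api-key": api_key, "Content-Type": "application/json", "Accept": "audio/mpeg"}
    body = {
        "text": tagged_text,
        "model_id": model_id,
        "voice_settings": {
            "stability": stability,
            "similarity_boost": similarity_boost,
            "style": style,
            "use_speaker_boost": use_speaker_boost,
            "speed": speed,
        },
    }
    return url, headers, json.dumps(body).encode("utf-8")
```

To actually send it, the pieces could be passed to `urllib.request.Request(url, data=body, headers=headers, method="POST")`, and the response bytes written to an `.mp3` file, which is the kind of file the Drive and FTP nodes then upload.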

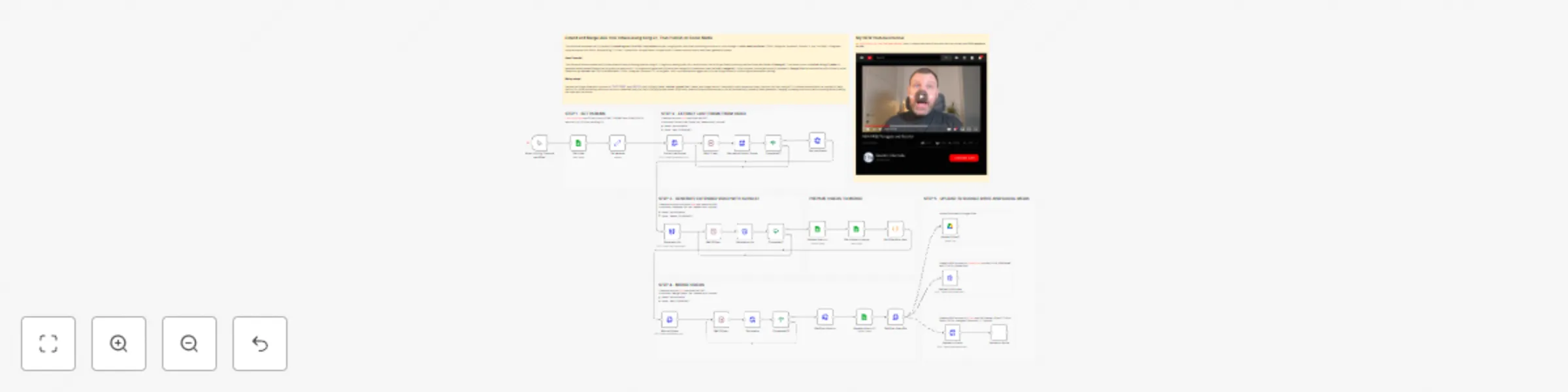

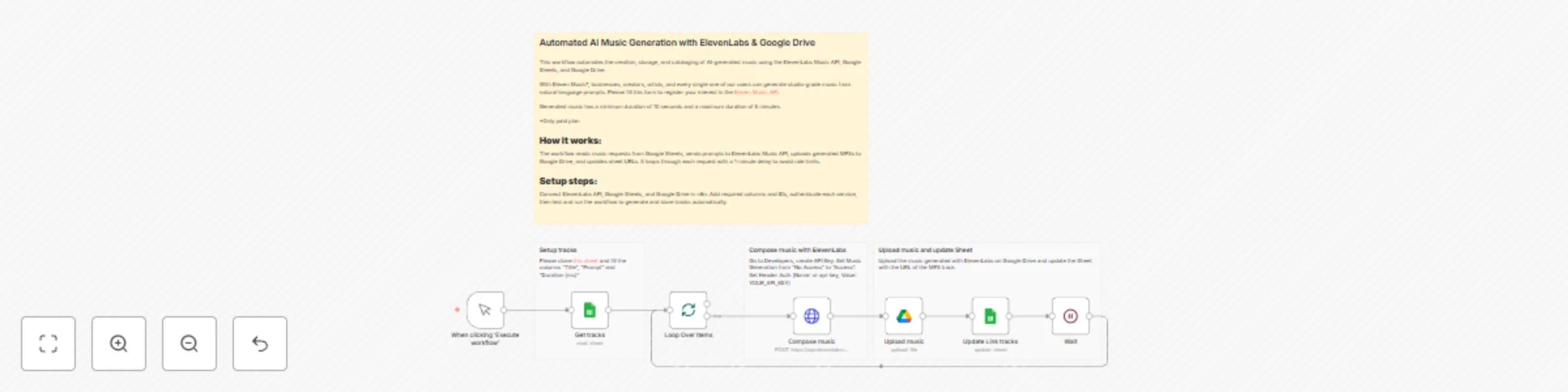

Extend and merge UGC viral videos using Kling 2.1, then publish on social media

This workflow automates the full pipeline for **extending short viral UGC-style videos** using AI, merging them, and finally publishing the output to cloud storage or **social media platforms** (*TikTok, Instagram, Facebook, LinkedIn, X, and YouTube*). It integrates multiple external APIs (Fal.ai, RunPod/Kling 2.1, Postiz, Upload-Post, Google Sheets, Google Drive) to create a smooth end-to-end video-generation system.

---

### **Key Advantages**

#### **1. ✅ Full End-to-End Automation**

The workflow covers the entire process:

1. Read inputs
2. Generate extended clips
3. Merge them
4. Save outputs
5. Publish on social platforms

No manual intervention is required after starting the workflow.

#### **2. ✅ AI-Powered Video Extension (Kling 2.1 or other models like Veo 3.1 or Sora 2)**

The system uses Kling 2.1 (or another model such as Veo 3.1 or Sora 2) to extend short videos realistically, enabling:

* Longer UGC clips
* Consistent cinematic style
* Smooth transitions based on extracted frames

Ideal for viral social media content.

#### **3. ✅ Smart Integration with Google Sheets**

The spreadsheet becomes a **control panel**:

* Add new videos to extend
* Control merging
* Automatically store URLs and results

This makes the system user-friendly even for non-technical operators.

#### **4. ✅ Robust Asynchronous Job Handling**

Every external API call includes:

* Status checks
* Waiting loops
* Error prevention steps

This improves reliability when working with long-running AI processes.

#### **5. ✅ Automatic Merging and Publishing**

Once videos are generated, the workflow:

* Merges them in the correct order
* Uploads them to Google Drive
* Posts them automatically to selected social platforms

This drastically reduces the time required for content production and distribution.

#### **6. ✅ Highly Scalable and Customizable**

Because it is built in n8n:

* You can add more APIs
* You can add editing steps
* You can connect custom triggers (e.g., Airtable, webhooks, Shopify)
* You can fully automate your video-production pipeline

---

### **How It Works**

This workflow extends and merges videos using AI-generated content, then publishes the final result to social media platforms. The process consists of five main stages:

- **Data Input & Frame Extraction**: The workflow starts by reading video and prompt data from a Google Sheet. It extracts the last frame from the input video using Fal.ai’s FFmpeg API.
- **AI Video Generation**: The extracted frame is sent to RunPod’s Kling 2.1 AI model to generate a new video clip based on the provided prompt and desired duration.
- **Video Merging**: Once the AI-generated clip is ready, it is merged with the original video using Fal.ai’s FFmpeg merge functionality to create a seamless extended video.
- **Storage & Publishing**: The final merged video is uploaded to Google Drive and simultaneously distributed to social media platforms via:
  - YouTube (via Upload-Post)
  - TikTok, Instagram, Facebook, X, and YouTube (via Postiz)
- **Progress Tracking**: Throughout the process, the Google Sheet is updated with the status, video URLs, and completion markers to keep track of each step.

---

### **Set Up Steps**

To configure this workflow, follow these steps:

1. **Prepare the Google Sheet**
   - Use the provided template or clone [this sheet](https://docs.google.com/spreadsheets/d/14zlCDJFLrJIhcq7HwFGdKAHIwvjmkwP-FSTHmLTj0ow/edit).
   - Fill in the `START` (video URL), `PROMPT` (AI prompt), and `DURATION` (in seconds) columns.
2. **Configure Fal.ai API for Frame Extraction & Merging**
   - Create an account at [fal.ai](https://fal.ai/).
   - Obtain your API key.
   - In the nodes **“Extract last frame”**, **“Merge Videos”**, and related status nodes, set up **HTTP Header Authentication** with:
     - Name: `Authorization`
     - Value: `Key YOUR_API_KEY`
3. **Set Up RunPod API for AI Video Generation**
   - Sign up at [RunPod](https://get.runpod.io/n3witalia) and get your API key.
   - In the **“Generate clip”** node, configure **HTTP Bearer Authentication** with:
     - Value: `Bearer YOUR_RUNPOD_API_KEY`
4. **Configure Social Media Publishing**
   - **For YouTube**: Create a free account at [Upload-Post](https://www.upload-post.com/?linkId=lp_144414&sourceId=n3witalia&tenantId=upload-post-app) and set your `YOUR_USERNAME` and `TITLE` in the **“Upload to Youtube”** node.
   - **For Multi-Platform Posting**: Sign up at [Postiz](https://postiz.com/?ref=n3witalia) and configure your `Channel_ID` and `TITLE` in the **“Upload to Social”** node.
5. **Connect Google Services**
   - Set up Google Sheets and Google Drive OAuth2 credentials in their respective nodes to allow reading from and writing to the sheet and uploading videos to Drive.
6. **Execute the Workflow**
   - Once all credentials are set, trigger the workflow manually via the **“When clicking ‘Execute workflow’”** node.
   - The process will run autonomously, updating the sheet and publishing the final video upon completion.

---

👉 [Subscribe to my new **YouTube channel**](https://youtube.com/@n3witalia). Here I’ll share videos and Shorts with practical tutorials and **FREE templates for n8n**.

---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).
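The workflow merges clips through Fal.ai's hosted FFmpeg API, whose request format is not shown here. As a rough local analogue of the "merge in the correct order" step, the sketch below builds an input list for FFmpeg's concat demuxer (original video first, then each generated extension) plus the matching command line. This swaps in local FFmpeg for illustration only; the helper names and file names are hypothetical.

```python
def build_concat_list(original: str, extensions: list[str]) -> str:
    """Return the text of an FFmpeg concat-demuxer list file.

    The original clip comes first, followed by each AI-generated
    extension in the order it was produced.
    """
    ordered = [original, *extensions]
    lines = []
    for path in ordered:
        # Inside single quotes, FFmpeg expects a literal ' to be written as '\''
        escaped = path.replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    return "\n".join(lines) + "\n"


def build_ffmpeg_command(list_file: str, output: str) -> list[str]:
    """Return the argv for concatenating the listed clips without re-encoding.

    Stream copy (-c copy) assumes every clip shares codec, resolution and
    frame rate; if they differ, re-encode instead.
    """
    return ["ffmpeg", "-f", "concat", "-safe", "0",
            "-i", list_file, "-c", "copy", output]


if __name__ == "__main__":
    # Hypothetical file names: the start video plus two generated extensions.
    print(build_concat_list("start.mp4", ["ext_1.mp4", "ext_2.mp4"]), end="")
    print(" ".join(build_ffmpeg_command("clips.txt", "merged.mp4")))
```

Keeping the ordering in one list makes it easy to append further extensions generated from each new last frame.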

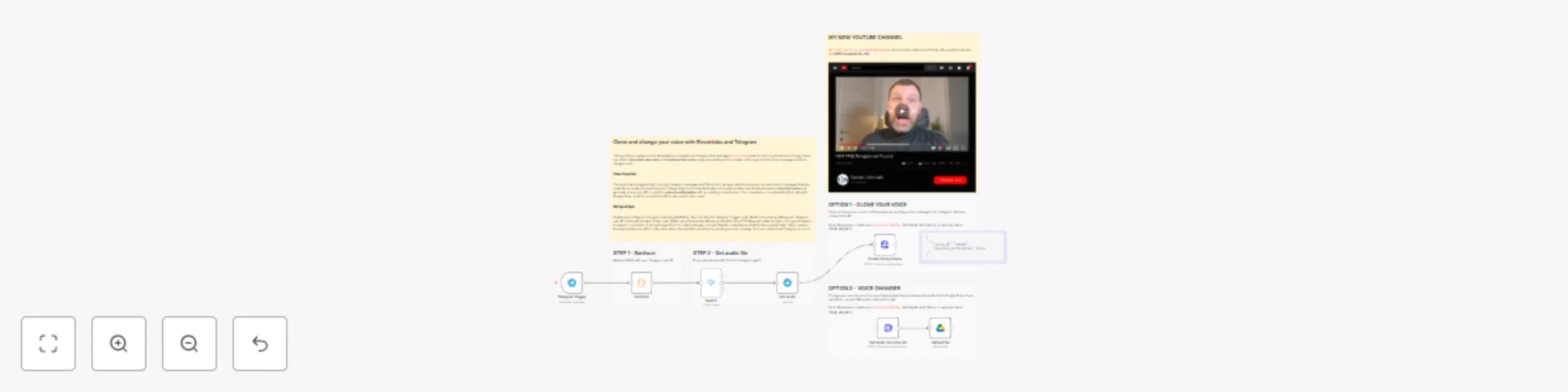

Clone and change your voice 🤖🎙️with Elevenlabs and Telegram

This workflow creates a voice AI assistant, accessible via Telegram, that leverages the powerful voice synthesis technology of [ElevenLabs](https://try.elevenlabs.io/ahkbf00hocnu)*. Users can either **clone their own voice** or **transform their voice** using pre-existing voice models, all through simple voice messages sent to a Telegram bot.

*Only for the Starter, Creator, and Pro plans.

This workflow allows users to:

1. **Clone their voice** by sending a voice message to a Telegram bot (creates a new voice profile on ElevenLabs)
2. **Change their voice** to a cloned voice and save the output to Google Drive

---

### For Best Results

For optimal voice cloning via Telegram voice messages, keep the following in mind.

**1. Recording Quality & Environment**

- Record in a quiet room with minimal echo and background noise
- Keep the microphone in a consistent position (10-15 cm from your mouth)
- Ensure clear audio without distortion or clipping

**2. Content Selection & Variety**

- Send one voice message containing 5-10 minutes of speech in total
- Include diverse vocal sounds, tones, and a natural speaking cadence
- Use complete sentences rather than isolated words

**3. Audio Consistency**

- Maintain consistent volume, tone, and distance from the microphone
- Avoid interruptions, laughter, coughs, or background voices
- Speak naturally without artificial effects or filters

**4. Technical Preparation**

- Make sure Telegram isn't overly compressing the audio (use HQ recording)
- Record all messages in the same session under the same conditions
- Include both neutral speech and varied emotional expressions

---

### **How it works**

1. **Trigger**: The workflow starts with a Telegram trigger that listens for incoming messages (text, voice notes, or photos).
2. **Authorization check**: A Code node checks whether the sender’s Telegram user ID matches your predefined ID. If not, the process stops.
3. **Message routing**: A Switch node routes the message based on its type:
   - **Text** → Not processed further in this flow.
   - **Voice message** → Sent to the “Get audio” node to retrieve the audio file from Telegram.
   - **Photo** → Not processed further in this flow.
4. **Two main options**: From the “Get audio” node, the workflow splits into two possible paths:
   - **Option 1 – Clone voice**: The audio file is sent to ElevenLabs via an HTTP request to create a new cloned voice. The voice ID is returned and can be saved for later use.
   - **Option 2 – Voice changer**: The audio is sent to ElevenLabs for speech-to-speech conversion using a pre-existing cloned voice (the voice ID must be set in the node parameters). The resulting audio is saved to Google Drive.
5. **Output**:
   - Cloned voice ID (for Option 1).
   - Converted audio file uploaded to Google Drive (for Option 2).

---

### **Set up steps**

1. **Telegram bot setup**
   - Create a bot via BotFather and obtain the API token.
   - Set up the Telegram Trigger node with your bot credentials.
2. **Authorization configuration**
   - In the “Sanitaze” Code node, replace `XXX` with your Telegram user ID to restrict access.
3. **ElevenLabs API setup**
   - Get an API key from ElevenLabs.
   - Configure the HTTP Request nodes (“Create Cloned Voice” and “Generate cloned audio”) with:
     - the API key in the `Xi-Api-Key` header;
     - the appropriate endpoint URLs (including the voice ID for speech-to-speech).
4. **Google Drive setup** (for Option 2)
   - Set up Google Drive OAuth2 credentials in n8n.
   - Specify the target folder ID in the “Upload file” node.
5. **Voice ID configuration**
   - For voice cloning: the voice name can be customized in the “Create Cloned Voice” node.
   - For voice changing: replace `XXX` in the “Generate cloned audio” node URL with your ElevenLabs voice ID.
6. **Test the workflow**
   - Activate the workflow.
   - Send a voice note from your authorized Telegram account to trigger cloning or voice conversion.

---

👉 [Subscribe to my new **YouTube channel**](https://youtube.com/@n3witalia). Here I’ll share videos and Shorts with practical tutorials and **FREE templates for n8n**.

---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).
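The authorization check and the Switch routing of this Telegram workflow can be approximated in plain Python as below. This is a sketch that assumes the standard Telegram Bot API message shape (`message.from.id`, and a `voice`, `photo`, or `text` field depending on the message type); `AUTHORIZED_USER_ID` is a hypothetical stand-in for the ID you put in the “Sanitaze” node, and the node's actual code may differ.

```python
from typing import Any

# Hypothetical placeholder: the Telegram user ID you would put in the "Sanitaze" node.
AUTHORIZED_USER_ID = 123456789


def is_authorized(update: dict[str, Any], allowed_id: int = AUTHORIZED_USER_ID) -> bool:
    """Return True only when the message sender matches the allowed user ID."""
    sender = update.get("message", {}).get("from", {})
    return sender.get("id") == allowed_id


def route(update: dict[str, Any]) -> str:
    """Classify a message the way the Switch node does.

    In the Telegram Bot API a voice note carries a `voice` object,
    a picture carries a `photo` array and plain text carries `text`.
    """
    message = update.get("message", {})
    for kind in ("voice", "photo", "text"):
        if kind in message:
            return kind
    return "other"


if __name__ == "__main__":
    sample = {"message": {"from": {"id": 123456789}, "voice": {"file_id": "abc"}}}
    if is_authorized(sample) and route(sample) == "voice":
        print("forward to 'Get audio'")  # only voice notes continue in this flow
```

Checking authorization before routing mirrors the described order: unauthorized senders never reach the ElevenLabs calls.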

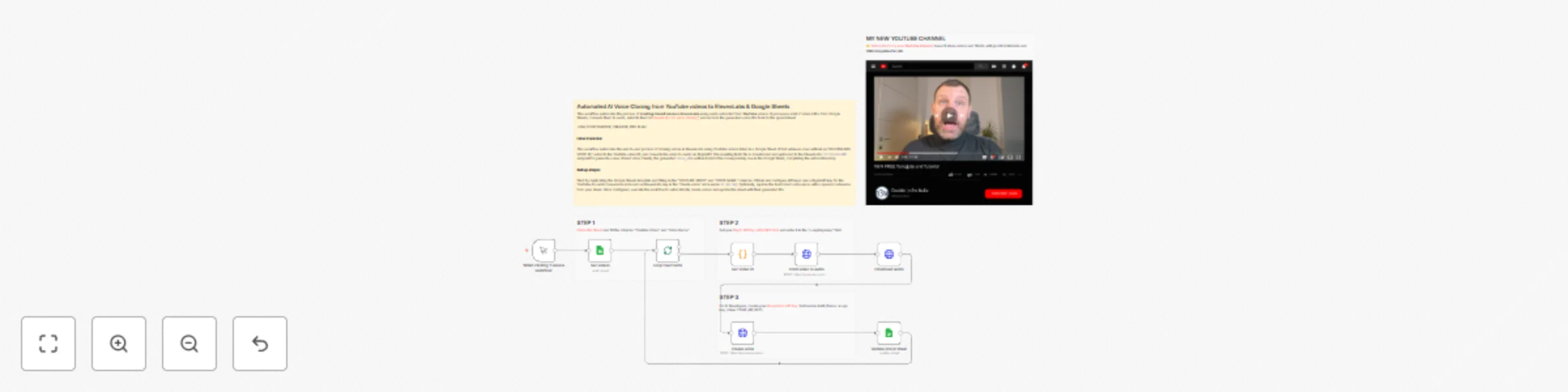

Automated AI voice cloning 🤖🎤 from YouTube videos to ElevenLabs & Google Sheets

This workflow automates the process of **creating cloned voices** in **ElevenLabs** using audio extracted from **YouTube** videos. It processes a list of video URLs from Google Sheets, converts them to audio, submits them to [ElevenLabs for voice cloning](https://try.elevenlabs.io/ahkbf00hocnu)*, and records the generated voice IDs back to the spreadsheet.

*Only for the Starter, Creator, and Pro plans.

**Important Considerations for Best Results:**

For optimal voice cloning quality with ElevenLabs, carefully select your source YouTube videos:

- **Duration**: Choose videos that are sufficiently long (preferably 1-5 minutes of clear speech) to provide enough audio data for accurate voice modeling.
- **Audio Quality**: Select videos with high-quality audio, minimal background noise, and clear vocal recording.
- **Single Speaker**: Use videos featuring only **one primary speaker**. Multiple voices in the same audio will confuse the cloning algorithm and produce poor results.
- **Consistent Voice**: Ensure the speaker maintains a consistent tone and speaking style throughout the clip for the most faithful reproduction.

---

### **Key Features**

#### **1. ✅ Fully Automated Voice Creation Workflow**

* No manual downloading, converting, or uploading is required.
* Just paste the YouTube link and voice name into the sheet; everything else happens automatically.

#### **2. ✅ Seamless Audio Extraction**

Using RapidAPI provides:

* A high success rate in extracting audio
* Support for virtually any YouTube video
* The consistent output format required by ElevenLabs

#### **3. ✅ Hands-Off ElevenLabs Voice Creation**

The workflow handles all the steps required by the ElevenLabs API, including:

* Uploading binary audio
* Naming voices
* Capturing and storing the resulting voice ID

This is much faster than the manual method inside the ElevenLabs dashboard.

#### **4. ✅ Centralized, Reusable Setup**

Once the API keys are added:

* The same workflow can be reused indefinitely
* Users don’t need technical skills
* Updating only requires editing the sheet

---

### **How it works:**

1. **Data Retrieval**: The workflow starts by fetching data from a Google Sheets spreadsheet that contains YouTube video URLs in the "YOUTUBE VIDEO" column and the desired voice names in the "VOICE NAME" column. It only targets rows where the "ELEVENLABS VOICE ID" field is empty, so only unprocessed videos are handled.
2. **Video Processing Pipeline**:
   - **Video ID Extraction**: Each YouTube URL is parsed with a regular expression to extract the unique video identifier.
   - **Audio Conversion**: The video ID is sent to the RapidAPI "YouTube MP3 2025" service, which converts the YouTube video to an audio file (M4A format).
   - **Audio Download**: The resulting audio file is downloaded locally for processing.
3. **Voice Creation**: The downloaded audio file is submitted to the ElevenLabs API via a POST request to the `/v1/voices/add` endpoint, which creates a new voice clone based on the audio sample. The voice name is currently hardcoded as "Teresa Mannino" in the workflow but should be configured to use the value from the "VOICE NAME" spreadsheet column.
4. **Data Update**: The workflow captures the `voice_id` returned by ElevenLabs and writes it back to the "ELEVENLABS VOICE ID" column of the corresponding row, completing the processing cycle for that video.

---

### **Set up steps:**

1. **Prepare the Data Sheet**: Duplicate the provided Google Sheets template. Fill in the "YOUTUBE VIDEO" column with YouTube URLs and the "VOICE NAME" column with your desired names for the cloned voices. Ensure your videos meet the quality criteria mentioned above.
2. **Configure APIs**:
   - **RapidAPI**: Sign up for a free trial API key from the "YouTube MP3 2025" service on RapidAPI. Enter this key into the `x-rapidapi-key` header field in the "From video to audio" node.
   - **ElevenLabs**: Generate an API key from your ElevenLabs account. Configure the "Create voice" node's HTTP Header Authentication with the name `xi-api-key` and your ElevenLabs API key as the value.
3. **Optional Customization**: Modify the "Create voice" node to use the dynamic voice name from your spreadsheet instead of the hardcoded "Teresa Mannino" value.
4. **Execute**: Run the workflow. It will automatically process each qualifying row, create voices in ElevenLabs, and populate the spreadsheet with the new voice IDs. Monitor the execution to confirm that each video is processed successfully.

---

👉 [Subscribe to my new **YouTube channel**](https://youtube.com/@n3witalia). Here I’ll share videos and Shorts with practical tutorials and **FREE templates for n8n**.

---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).
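The video-ID extraction and the "only rows without a voice ID" filter of this voice-cloning workflow can be sketched as follows. The workflow's actual regular expression is not shown, so the pattern below is an assumption that covers common URL shapes (`watch?v=`, `youtu.be/`, `/shorts/`, `/embed/`); it is not exhaustive. Column names are taken from the sheet described above.

```python
import re
from typing import Optional

# Hypothetical pattern: covers watch?v=, youtu.be/, /shorts/ and /embed/ URLs.
# YouTube video IDs are 11 characters drawn from letters, digits, "_" and "-".
YOUTUBE_ID_RE = re.compile(r"(?:[?&]v=|youtu\.be/|/shorts/|/embed/)([A-Za-z0-9_-]{11})")


def extract_video_id(url: str) -> Optional[str]:
    """Return the 11-character video ID, or None if the URL is not recognized."""
    match = YOUTUBE_ID_RE.search(url)
    return match.group(1) if match else None


def pending_rows(rows: list[dict[str, str]]) -> list[dict[str, str]]:
    """Keep only rows whose "ELEVENLABS VOICE ID" cell is still empty."""
    return [row for row in rows if not row.get("ELEVENLABS VOICE ID", "").strip()]


if __name__ == "__main__":
    print(extract_video_id("https://youtu.be/dQw4w9WgXcQ?t=42"))
```

Returning `None` for unrecognized URLs lets you skip or flag bad rows instead of sending an invalid ID to the conversion service.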

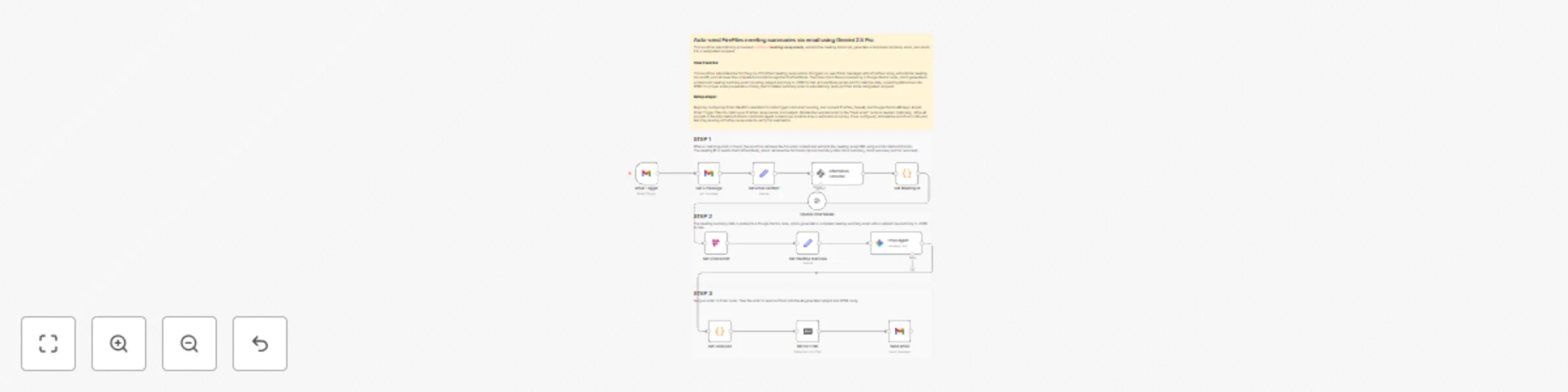

Auto-send Fireflies meeting summaries via email using Gemini 2.5 Pro

This workflow automatically processes [Fireflies.ai](https://app.fireflies.ai/login?referralCode=01K0V2Z1QHY76ZGY9450251C99) **meeting recap emails**, extracts the meeting transcript, generates a structured summary email, and sends it to a designated recipient.

---

### **Key Advantages**

#### **1. ✅ Full Automation of Meeting Summaries**

The workflow eliminates all manual steps between receiving the Fireflies email and sending a polished summary. This ensures:

* No delays
* No forgotten recaps
* No repetitive manual tasks

#### **2. ✅ Accurate Extraction of Meeting Information**

Using AI-based information extraction and custom parsing, the workflow reliably identifies:

* The correct meeting link
* The Fireflies meeting ID
* Relevant transcript data

This avoids human error and ensures consistency.

#### **3. ✅ High-Quality, AI-Generated Email Summaries**

The Gemini-powered summary generator:

* Produces well-structured, readable emails
* Includes decisions, action items, and discussion points
* Automatically crafts a professional subject line
* Uses real meeting content (no placeholders)

This results in clear, usable communication for recipients.

#### **4. ✅ Robust Data Handling**

The workflow integrates custom JavaScript steps to:

* Parse URLs safely
* Convert AI responses into valid JSON
* Ensure correct formatting before email delivery

This helps ensure the message is always properly structured.

#### **5. ✅ Professional Formatting**

By converting Markdown to HTML, the summary:

* Is visually clear
* Displays well in common email clients
* Enhances readability for recipients

#### **6. ✅ Easily Scalable and Adaptable**

The workflow can be expanded to:

* Send summaries to multiple recipients
* Add storage (e.g., Google Drive)
* Trigger based on additional conditions
* Integrate with CRMs or project management tools

---

### **How It Works**

1. **Trigger**: The workflow starts with a Gmail Trigger that checks every hour for new emails with the subject `"Your meeting recap"` from `[email protected]`.
2. **Email Processing**: When a matching email is found, the workflow retrieves the full email content and extracts the meeting recap URL using an **Information Extractor** node powered by OpenAI GPT-4.1-mini.
3. **Meeting ID Extraction**: A **Code Node** extracts the meeting ID from the Fireflies URL (the text between `::` and `?`) for use in the next step.
4. **Transcript Fetching**: The meeting ID is sent to the **Fireflies Node**, which retrieves the full transcript and summary data (short summary, short overview, and full overview).
5. **AI-Powered Email Generation**: The meeting summary data is passed to a **Google Gemini** node, which generates a complete meeting summary email, with a subject line and body, in JSON format.
6. **Data Formatting**: The raw JSON output is parsed in a **Code Node**, and the email body is converted from Markdown to HTML using the **Markdown Node**.
7. **Email Delivery**: Finally, the email is sent via Gmail with the AI-generated subject and HTML body.

---

### **Set Up Steps**

1. **Configure Credentials**
   - Set up Gmail OAuth2 credentials for email triggering and sending.
   - Add Fireflies.ai API credentials for fetching transcripts.
   - Configure OpenAI and Google Gemini API keys for AI processing.
2. **Adjust Email Filters**: Update the Gmail Trigger filters (`subject` and `sender`) if Fireflies.ai uses a different sender or subject format.
3. **Customize Output Email**: Change the recipient in the **Send email** node to the desired address.
4. **Optional: Modify AI Prompts**: Adjust the system prompts in the **Information Extractor** and **Email Agent** nodes to change extraction behavior or email tone.
5. **Activate Workflow**: Ensure the workflow is set to **Active** in n8n, and test it by sending a sample Fireflies recap email to your connected Gmail account.
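The two parsing steps of the Fireflies workflow (meeting ID between `::` and `?`, then turning the Gemini reply into a JSON object) can be sketched in Python. This is an illustration, not the workflow's JavaScript: the sample URL is hypothetical, treating a missing `?` as "take the rest of the URL" is an assumption, and the `subject`/`body` key names are assumed to match whatever your prompt asks the model to return.

```python
import json
import re
from typing import Any, Optional

TICKS = "`" * 3  # a Markdown code fence, built without writing it literally
FENCE_RE = re.compile("^" + TICKS + r"(?:json)?\s*|\s*" + TICKS + "$")


def extract_meeting_id(url: str) -> Optional[str]:
    """Return the text between '::' and the next '?' (or the end of the URL)."""
    start = url.find("::")
    if start == -1:
        return None
    rest = url[start + 2:]
    end = rest.find("?")
    return rest if end == -1 else rest[:end]


def parse_email_json(raw: str) -> dict[str, Any]:
    """Parse the model reply into a dict, tolerating a surrounding code fence.

    The "subject"/"body" key names are assumptions; match your prompt.
    """
    data = json.loads(FENCE_RE.sub("", raw.strip()))
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = {"subject", "body"} - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


if __name__ == "__main__":
    # Hypothetical recap URL shape, for illustration only.
    print(extract_meeting_id("https://app.fireflies.ai/view/Weekly-Sync::01ABCDEF?ref=recap"))
```

Stripping an optional fence before `json.loads` covers the common case where a model wraps its JSON answer in a Markdown code block.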
---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).

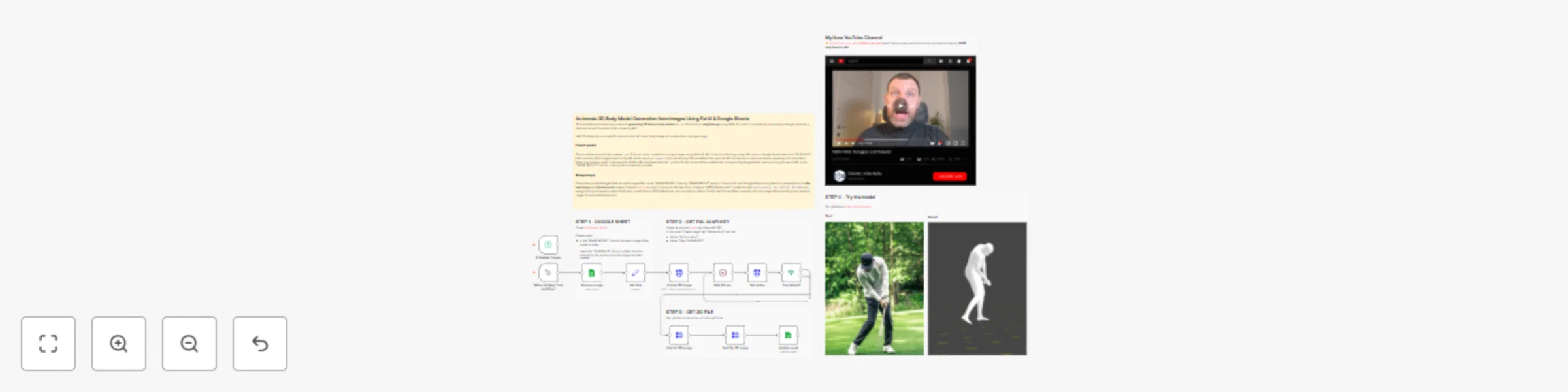

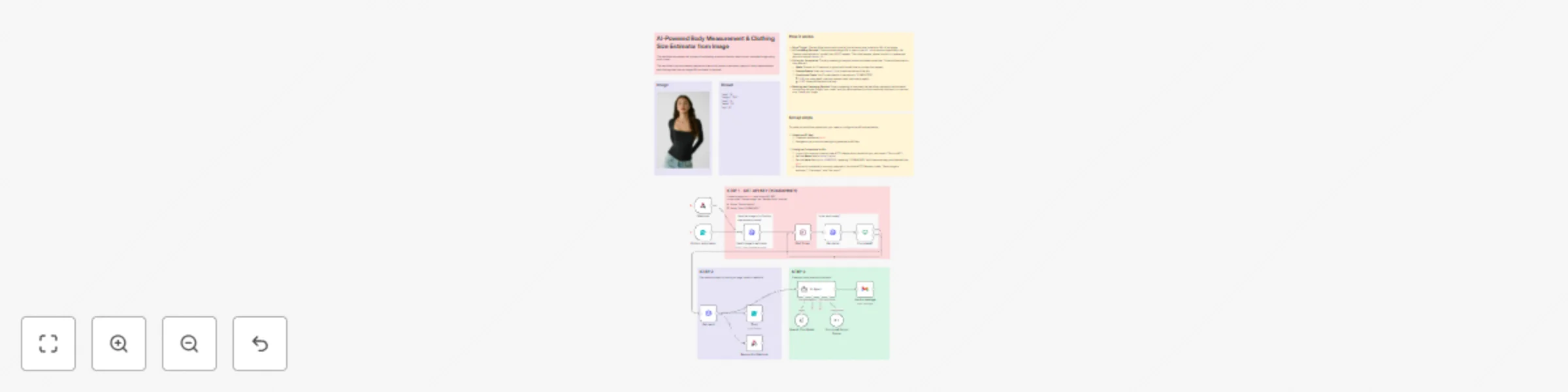

Automate 3D body model generation from images using SAM-3D & Google Sheets

This workflow automates the process of **generating 3D human body models** (in `.glb` format) from a **single image** using the SAM-3D model. It connects a Google Sheet, used as the data source, to the external AI processing API.

---

### **Use Cases**

#### **1. ✅ Sports Analysis & Motion Optimization**

3D models allow precise analysis of posture, angles, and technique. Possible applications:

* **Golf swing analysis**: Identify stance, rotation, shoulder/hip alignment, and follow-through.
* **Tennis serve biomechanics**: Optimize shoulder rotation, racket angle, and leg push-off.
* **Running gait analysis**: Evaluate stride symmetry, foot strike, and body tilt.
* **Cycling posture optimization**: Reduce drag by analyzing torso angle and hand position.
* **Swimming technique evaluation**: Compare ideal vs. actual joint angles.

#### **2. ✅ Fitness, Health & Physiotherapy**

3D models can visually highlight imbalances or incorrect positions.

* **Posture correction assessments**: Identify spinal misalignment or uneven weight distribution.
* **Physical therapy progress tracking**: Compare poses over time to assess recovery.
* **Ergonomics and workplace safety**: Evaluate whether a worker’s posture is safe during lifting or repetitive tasks.
* **Home fitness coaching**: Automated feedback for yoga, pilates, and stretching exercises.

#### **3. ✅ Fashion, Apparel & Virtual Try-On**

Photorealistic body reconstruction helps generate tailored outfits or evaluate fit.

* **Virtual try-on for clothing brands**: Produce accurate 3D avatars to test garments digitally.
* **Custom-made fashion**: Use 3D measurements for bespoke tailoring patterns.
* **Model pose simulation**: Test clothing fit in dynamic or unusual positions (e.g., dance, athletic poses).

#### **4. ✅ Gaming, Animation & Digital Content Creation**

Quick 3D reconstruction reduces production time for digital humans.

* **Character rigging from real people**: Generate 3D avatars ready for animation.
* **Motion capture alternatives**: Recreate specific poses without expensive mocap systems.
* **VR/AR content creation**: Deploy 3D characters into immersive environments.
* **Comics, illustration, and concept art**: Use 3D poses as reference models to speed up drawing.

#### **5. ✅ Medical, Research & Educational Applications**

Human-body 3D models provide insights in scientific or practical contexts.

* **Anthropometric measurements**: Estimate height, limb length, and body proportions from images.
* **Posture and musculoskeletal studies**: Analyze joint angle distribution in different poses.
* **Rehabilitation robotics or exoskeleton design**: Fit devices to a patient’s real body shape.
* **Training materials for anatomy or movement science**: Generate accurate pose examples for students.

#### **6. ✅ Security, Forensics & Reconstruction**

Where ethically and legally permitted, 3D models can support investigations.

* **Reconstruction of accident scenes**: Understand how a person fell, collided, or moved.
* **Analysis of body posture in video frames**: Useful for determining gesture patterns or mobility constraints.

#### **7. ✅ Art, Photography & Creative Industries**

Artists often need unusual or complex human poses.

* **Pose reference creation**: For painting, 3D sculpting, illustration, or storyboarding.
* **Recreating dynamic action scenes**: Parkour, martial arts, ballet, expressive dance.
* **Virtual studio lighting tests**: Apply simulated lighting to a 3D model before shooting.

---

### **How It Works**

The workflow sends single images to the FAL.AI SAM-3D service and reads its inputs from a Google Sheet. Here is the operational flow:

1. **Trigger & Data Fetch**: The workflow begins either manually (via "Test workflow") or on a schedule. It queries a designated Google Sheet to find rows where the "3D RESULT" column is empty, indicating a new image needs processing.
2. **API Request & Queuing**: For each new image, it sends the image URL to the FAL.AI SAM-3D API endpoint (`/fal-ai/sam-3/3d-body`), which queues the job and returns a unique `request_id`.
3. **Status Polling & Waiting**: The workflow enters a polling loop. It waits 60 seconds, then checks the job's status using the `request_id`. If the status is not "COMPLETED", it waits another 60 seconds and checks again.
4. **Result Retrieval & Storage**: Once the status is "COMPLETED", the workflow fetches the final result, which contains the URL of the generated 3D model file (`.glb`). This file is then downloaded via an HTTP request.
5. **Sheet Update**: Finally, the workflow updates the original Google Sheet row, writing the URL of the generated 3D model into the "IMAGE RESULT" column for the corresponding `row_number` and thus marking the task as complete.

---

### **Set Up Steps**

To configure this workflow in your n8n environment, follow these steps:

1. **Prepare the Google Sheet:**
   * Clone the provided Google Sheet template.
   * Insert the URLs of the model images you want to convert into the "IMAGE MODEL" column.
   * Leave the "IMAGE RESULT" column empty; it will be populated automatically.
   * In n8n, set up a "Google Sheets OAuth2 API" credential and connect it to the **Get new image** and **Update result** nodes. Ensure the `documentId` points to your cloned sheet.
2. **Configure the FAL.AI API Connection:**
   * Create an account at [fal.ai](https://fal.ai/) and obtain your API key.
   * In n8n, create an "HTTP Header Auth" credential. Set the **Header Name** to `Authorization` and the **Header Value** to `Key YOUR_API_KEY_HERE` (replace with your actual key).
   * Apply this credential to the following nodes: **Create 3D Image**, **Get status**, and **Get Url 3D image**.
3. **Verify Workflow Logic (Key Nodes):**
   * **Get new image:** Confirm the `filtersUI` is set to look for empty rows in the correct column (e.g., "3D RESULT" or "IMAGE RESULT").
   * **Create 3D Image:** Verify that the JSON body correctly references the image URL from the previous node (`{{ $json.image }}`).
   * **Completed? (If node):** Ensure the condition checks for the string `COMPLETED` from `{{ $json.status }}`.
   * **Update result:** Double-check that the column mapping uses `row_number` to match the row and updates the "IMAGE RESULT" column with the GLB URL.
4. **Activate & Test:**
   * Save the workflow.
   * Use the **When clicking ‘Test workflow’** node for an initial manual test with one image URL in your sheet.
   * Once it is confirmed working, you can enable the **Schedule Trigger** node for automatic, periodic execution.

---

👉 [Subscribe to my new **YouTube channel**](https://youtube.com/@n3witalia). Here I’ll share videos and Shorts with practical tutorials and **FREE templates for n8n**.

---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).
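The SAM-3D status-polling loop (wait 60 seconds, check, repeat until "COMPLETED") can be sketched as a small Python helper. `get_status` is a hypothetical callable standing in for the **Get status** HTTP request, whose exact URL and response shape are not specified here. The `max_checks` cap is an addition that the workflow as described does not have; it keeps a stuck job from looping forever.

```python
import time
from typing import Callable


def wait_until_completed(
    get_status: Callable[[str], str],
    request_id: str,
    interval_s: float = 60.0,
    max_checks: int = 30,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Poll a queued job until it reports "COMPLETED".

    Like the workflow, it waits first and then checks the status. Unlike the
    workflow as described, it gives up after `max_checks` attempts.
    Returns the number of status checks that were needed.
    """
    for check in range(1, max_checks + 1):
        sleep(interval_s)
        if get_status(request_id) == "COMPLETED":
            return check
    raise TimeoutError(f"{request_id} not COMPLETED after {max_checks} checks")
```

Injecting `sleep` and `get_status` keeps the loop testable without network calls or real 60-second waits; in production you would pass a function that performs the authenticated status request.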

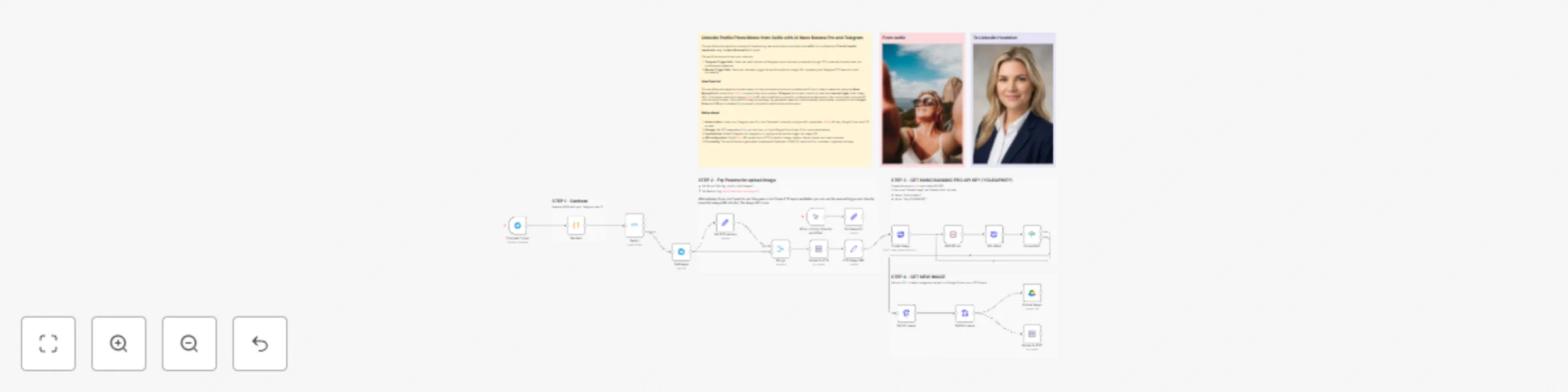

Transform selfies into professional LinkedIn headshots with Nano Banana Pro & Telegram

This workflow automates the process of transforming user-submitted photos (even poor-quality **selfies**) into professional **CV and LinkedIn headshots** using the **Nano Banana Pro** AI model.

---

### **Key Advantages**

#### **1. ✅ Fully Automated Professional Image Enhancement**

From receiving a photo to delivering a polished LinkedIn-style headshot, the workflow requires **zero manual intervention**.

#### **2. ✅ Seamless Telegram Integration**

Users can simply send a picture via Telegram; there is no need to log into dashboards or upload images manually.

#### **3. ✅ Secure Access Control**

Only the authorized Telegram user can trigger the workflow, preventing unauthorized usage.

#### **4. ✅ Reliable API Handling with Auto-Polling**

The workflow includes a status-checking mechanism that:

* Waits for the Fal.ai model to finish
* Automatically retries until the result is ready
* Minimizes the chance of failures or partial results

#### **5. ✅ Flexible Input Options**

You can run the workflow either:

* Via Telegram
* Or manually, by setting the image URL if no FTP space is available

This makes it usable in multiple environments.

#### **6. ✅ Dual Storage Output (Google Drive + FTP)**

Processed images are automatically stored in:

* **Google Drive** (organized and timestamped)
* **FTP** (ideal for websites, CDN delivery, or automated systems)

#### **7. ✅ Clean and Professional Output**

Thanks to detailed prompt engineering, the workflow consistently produces:

* Realistic headshots
* Studio-style lighting
* Clean backgrounds
* Professional attire adjustments

Perfect for LinkedIn, CVs, or corporate profiles.

#### **8. ✅ Modular and Easy to Customize**

Each step is isolated and can be modified:

* Change the prompt
* Replace the storage destination
* Add extra validation
* Modify resolution or output formats

---

### **How It Works**

The workflow supports two input methods:

1. **Telegram Trigger Path**: Users send photos via Telegram; the photos are uploaded via FTP and then transformed into professional headshots.
2. **Manual Trigger Path**: Users trigger the workflow manually with an image URL, bypassing the Telegram/FTP steps for direct processing.

The core process involves:

- Receiving an input image (from Telegram or a manual URL)
- Sending the image to Fal.ai's Nano Banana Pro API with specific prompts for professional headshot transformation
- Polling the API for completion status
- Using a conditional check to ensure processing is complete before downloading results
- Downloading the generated image and uploading it to both **Google Drive** and **FTP storage**

---

### **Set Up Steps**

1. **Authorization Setup**:
   - Replace the placeholder user ID in the "Sanitaze" node with your actual Telegram user ID
   - Configure the Fal.ai API key in the "Create Image" node (Header Auth: `Authorization: Key YOURAPIKEY`)
   - Set up Google Drive and FTP credentials in their respective nodes
2. **Storage Configuration**:
   - In the "Set FTP params" node, configure:
     - `ftp_path`: your server directory path (e.g., `/public_html/images/`)
     - `base_url`: the corresponding base URL (e.g., `https://website.com/images/`)
   - Configure the Google Drive folder ID in the "Upload Image" node
3. **Input Method Selection**:
   - For Telegram usage: ensure the Telegram bot is properly configured
   - For manual usage: set the image URL in the "Fix Image Url" node or use the manual trigger
4. **API Endpoints**:
   - Ensure all Fal.ai API endpoints are correctly configured in the HTTP Request nodes for creating images, checking status, and retrieving results
5. **File Naming**:
   - Generated files use timestamp-based naming: `yyyyLLddHHmmss-filename.ext`
   - The output format is set to PNG at 1K resolution

The workflow handles the complete pipeline, from image submission through AI processing to storage distribution, with error handling and status checking throughout.

---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).
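The headshot workflow's naming pattern `yyyyLLddHHmmss-filename.ext` uses Luxon tokens (the date library behind n8n expressions), where `LL` is the zero-padded month. A Python equivalent is sketched below, together with joining the result to the `base_url` from "Set FTP params". Taking the file name from the result URL is an assumption for illustration; the workflow may derive it differently.

```python
from datetime import datetime
from pathlib import PurePosixPath
from typing import Optional
from urllib.parse import urlparse


def timestamped_name(result_url: str, now: Optional[datetime] = None) -> str:
    """Build "yyyyLLddHHmmss-filename.ext" from the file name in a URL.

    Luxon's yyyy/LL/dd/HH/mm/ss map to strftime's %Y/%m/%d/%H/%M/%S.
    """
    now = now or datetime.now()
    filename = PurePosixPath(urlparse(result_url).path).name
    return f"{now:%Y%m%d%H%M%S}-{filename}"


def public_url(base_url: str, name: str) -> str:
    """Join a base_url such as https://website.com/images/ with a file name."""
    return base_url.rstrip("/") + "/" + name
```

Parsing the URL before taking the file name drops any query string (such as a signed-URL token) from the stored name.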

Automate email tracking & generate pixel for lead nurturing with Google Sheets

This workflow automates sending personalized lead-nurturing emails and tracks when each recipient opens them. It integrates **Google Sheets**, **Gmail**, **OpenAI**, and **webhooks**. Each email contains a unique, invisible tracking pixel. When the recipient's email client loads that pixel, the open is logged back to a Google Sheets CRM.

---

### Key Features

#### ✅ **1. Fully Automated Lead Nurturing**

Once leads are added to the Google Sheet, the workflow handles everything:

* Generating email content
* Creating tracking pixels
* Sending emails
* Updating CRM fields

No manual actions are required.

#### ✅ **2. Real-Time Email Open Tracking**

Thanks to the pixel + webhook integration:

* You know as soon as a lead opens an email, provided their email client loads images
* Data is written back to the CRM automatically
* No external email marketing platform is needed

#### ✅ **3. Scalable at Low Cost**

You can send emails and track performance using:

* n8n (self-hosted or cloud)
* Gmail
* Google Sheets
* AI-generated content

This replicates core features of paid tools like HubSpot or Mailchimp without their pricing tiers. Only Gmail's sending limits and your OpenAI API usage apply.

#### ✅ **4. Clean and Organized CRM Updates**

The system keeps your CRM spreadsheet structured by automatically updating:

* Send dates
* Pixel IDs
* Open status

This keeps your engagement data accurate and up to date.

#### ✅ **5. Easy to Customize and Expand**

You can easily add:

* Multi-step email sequences
* Click tracking
* Lead scoring
* Zapier/Make integrations
* CRM synchronization

The workflow is modular, so each step can be modified or extended.

---

### **How it Works**

1. **Load Lead Data from Google Sheets**

   The workflow reads your CRM-like Google Sheet. The sheet holds lead information: name, email, and status fields such as *EMAIL 1 SEND* and *PIXEL EMAIL 1*. The system uses these fields to fetch only the leads that haven't received Email 1 yet.

2. **Generate a Unique Tracking Pixel**

   For each lead, the workflow creates a unique identifier (the "pixel ID"). This ID is appended to the source URL of a small, invisible 1×1 image that points at the n8n webhook. This image is your tracking pixel. Example pixel structure used in emails:

   ```html
   <img src width="1" height="1">
   ```

   When the email client loads this image, n8n detects the open event via the webhook.

3. **Use AI to Generate a Personalized HTML Email**

   An OpenAI node writes the email body in HTML and inserts the tracking pixel directly into the content. Every email is therefore personalized, consistent, and automatically includes the tracking mechanism.

4. **Send the Email via Gmail**

   The Gmail node sends the generated HTML email to the lead. After sending, the workflow updates the Google Sheet to log:

   * Email sent flag
   * Pixel ID generated
   * Sending date

5. **Detect Email Opens with Webhook + Pixel Image**

   When the recipient opens the email, their client loads the hidden pixel. That triggers your webhook, which:

   * Extracts the pixel ID and email address from the query parameters
   * Matches them with the lead in Google Sheets

6. **Update CRM When Email Is Opened**

   The workflow updates the CRM by marking *OPEN EMAIL 1* as "yes" for the corresponding pixel ID. This turns your sheet into a live tracking dashboard of lead engagement.

---

### **Set up Steps**

To configure this workflow, follow these steps:

1. **Prepare the CRM**:
   * Make a copy of the provided Google Sheet template.
   * In your copy, fill in the "DATE", "FIRST NAME", "LAST NAME", and "EMAIL" columns with your lead data.
2. **Configure the Workflow**:
   * In the "Get CRM", "Update CRM", and "Update Open email 1" nodes, update the `documentId` field to point to your new Google Sheet copy.
   * In the "Generate Pixel" node, locate the `webhook_url` assignment. Replace the placeholder text `https://YOUR_N8N_WEBHOOK_URL` with the production webhook URL generated by the "Webhook" node in your n8n environment. **Important:** After setting this, you must activate the workflow. Until you do, the webhook is not live and cannot receive requests.
3. **Configure Credentials**:
   * Make sure the following credentials are set up in your n8n instance:
     * **Google Sheets OAuth2 API**: For reading from and updating the CRM sheet.
     * **Gmail OAuth2**: For sending emails.
     * **OpenAI API**: For generating the email content.
4. **Test and Activate**:
   * Execute the workflow once manually to send test emails. Check that the "EMAIL 1 SEND", "PIXEL EMAIL 1", and "EMAIL 1 DATE" columns in the Google Sheet are populated.
   * Open one of the sent test emails to trigger the tracking pixel.
   * Verify in the Google Sheet that the corresponding lead's "OPEN EMAIL 1" field is updated to "yes".
   * Once testing is successful, activate the workflow.

---

### **Summary**

This workflow provides a low-cost automation system that:

* Sends personalized AI-generated emails
* Tracks email opens via a unique pixel
* Logs all actions in Google Sheets
* Automatically updates lead engagement data

---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).
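Steps 2, 5 and 6 of "How it Works" describe a pixel mechanism, sketched outside n8n below. This is a minimal Python illustration, not the workflow's actual node code. Several details are assumptions:

* The query-parameter names `pixel_id` and `email` are hypothetical.
* The helper names `make_pixel` and `mark_open` are hypothetical.
* The CRM rows are modeled as plain dicts keyed by the sheet's column names.

`https://YOUR_N8N_WEBHOOK_URL` is the same placeholder you replace with your production webhook URL.

```python
import html
import uuid
from urllib.parse import parse_qs, urlencode, urlparse

# Placeholder from the "Generate Pixel" node; replace with your production webhook URL.
WEBHOOK_URL = "https://YOUR_N8N_WEBHOOK_URL"


def make_pixel(email: str, webhook_url: str = WEBHOOK_URL) -> tuple[str, str, str]:
    """Create a unique pixel ID and the 1x1 <img> tag that reports it.

    The query parameter names `pixel_id` and `email` are hypothetical.
    Returns (pixel_id, src_url, img_tag).
    """
    pixel_id = uuid.uuid4().hex  # 32 hex characters, unique per lead and email
    src = f"{webhook_url}?{urlencode({'pixel_id': pixel_id, 'email': email})}"
    # Escape the URL for use inside an HTML attribute ("&" becomes "&amp;").
    img = f'<img src="{html.escape(src)}" width="1" height="1" alt="">'
    return pixel_id, src, img


def mark_open(rows: list[dict], request_url: str) -> bool:
    """Webhook side: set OPEN EMAIL 1 to "yes" on the row whose pixel ID matches."""
    params = parse_qs(urlparse(request_url).query)
    pixel_id = params.get("pixel_id", [None])[0]
    for row in rows:
        if pixel_id is not None and row.get("PIXEL EMAIL 1") == pixel_id:
            row["OPEN EMAIL 1"] = "yes"
            return True
    return False  # unknown or missing pixel ID: nothing to update
```

In the workflow, `make_pixel` corresponds to the "Generate Pixel" node. The `img` string is what the OpenAI node would embed in the HTML body, and the pixel ID is what gets written to *PIXEL EMAIL 1*. `mark_open` corresponds to the webhook branch that updates *OPEN EMAIL 1*.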

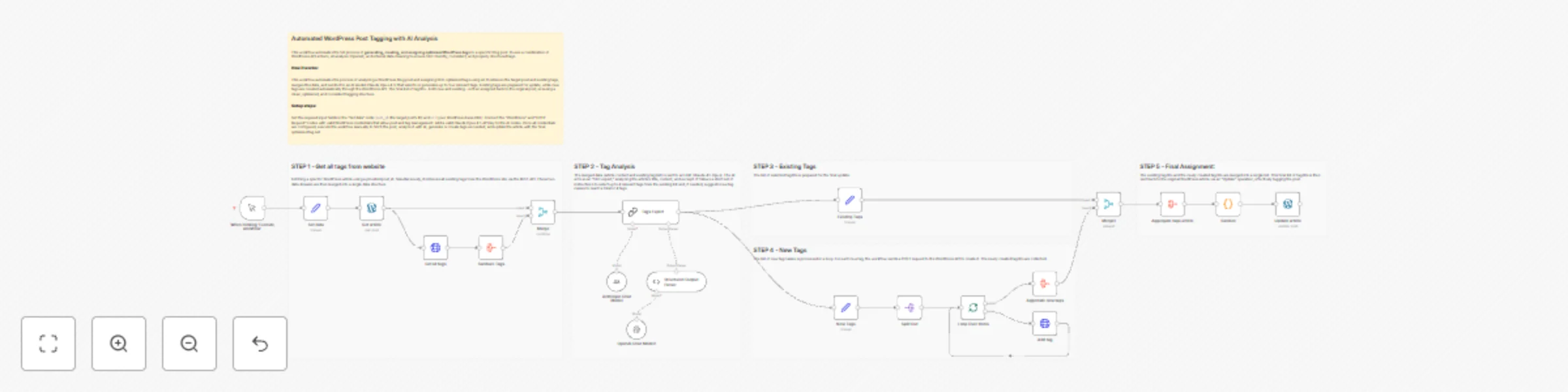

Automated WordPress post tagging with AI analysis and Claude Opus 4.5

This workflow automates the full process of **generating, creating, and assigning optimized WordPress tags** to a specific blog post. It combines WordPress API actions, AI analysis (Claude Opus 4.5), and internal data cleaning to produce SEO-friendly, consistent, and properly structured tags.

---

### **Key Features**

#### ✅ **1. Full Tag Automation**

The workflow removes the need for manual tag selection or creation. It automatically:

* Reads the article content
* Chooses relevant existing tags
* Creates new SEO-optimized ones
* Assigns them to the article

This reduces human error and saves significant editorial time.

#### ✅ **2. AI-Optimized SEO**

Thanks to the integrated Claude analysis, tags are:

* Semantically relevant
* Optimized for search intent
* Designed to improve discoverability and CTR
* Adapted to the specific content structure

This typically yields more consistent, SEO-focused tagging than manual selection.

#### ✅ **3. Intelligent Tag Management**

The system ensures:

* A maximum of 4 tags in total
* No irrelevant or duplicate tags
* Tags follow naming conventions (e.g., multi-word phrases or acronyms)

This creates a clean, consistent tag taxonomy across the WordPress site.

#### ✅ **4. Automated Tag Creation in WordPress**

New tags are created directly in WordPress via the REST API, ensuring:

* Tags stay in sync with your CMS
* No need to add new tags manually from the WordPress backend
* Immediate availability for future posts

#### ✅ **5. Clean and Reliable Data Handling**

Custom Code nodes and aggregation steps:

* Merge tag arrays safely
* Remove duplicates
* Produce clean, valid JSON output

This keeps the workflow stable even with large or complex tag lists.

#### ✅ **6. Modular and Scalable Architecture**

Every step (fetching, AI analysis, creation, merge, update) is separated into independent nodes, making it easy to:

* Extend the workflow (e.g., add categories, multilingual tags, taxonomy validation)
* Plug in different AI models
* Reuse the structure for other WordPress automations

#### ✅ **7. Consistent Output Validation**

The Structured Output Parser ensures:

* A correct JSON schema
* Safe handling of AI output
* No malformed data is sent to WordPress

This makes the automation robust and production-ready.

---

### How it works

This workflow is an AI-powered tag suggestion and assignment system for WordPress. It analyzes a blog post's content and assigns the most relevant tags, creating new ones if necessary.

1. **Data Retrieval & Preparation:** The workflow starts by fetching a specific WordPress article using the provided `post_id`. Simultaneously, it retrieves all existing tags from the WordPress site via the REST API. These two data streams are then merged into a single data structure.
2. **AI-Powered Tag Analysis:** The merged data is sent to an LLM (Claude Opus 4.5). It contains the article content and the existing tag list. The AI acts as an "SEO expert" and analyzes the article's title, content, and excerpt. It follows a strict set of instructions to select up to 4 relevant tags from the existing list. If needed, it also suggests new tag names to reach a total of 4 tags.
3. **Tag Processing & Creation:** The workflow splits the AI's output into two paths:
   * **Existing Tags:** The list of selected tag IDs is prepared for the final update.
   * **New Tags:** The list of new tag names is processed in a loop. For each new tag, the workflow sends a `POST` request to the WordPress API to create it. The newly created tag IDs are collected.
4. **Final Assignment:** The existing tag IDs and the newly created tag IDs are merged into a single list. This list is sent back to the original WordPress article via an "Update" operation, which tags the post.

---

### Set up steps

To configure and run this workflow, follow these steps:

1. **Provide Input Data:** In the "Set data" node, configure the two required assignment fields:
   * `post_id`: Set this to the numerical ID of the WordPress post you want to analyze and tag.
   * `url`: Set this to the base URL of your WordPress site (e.g., `https://yourwebsite.com/`).
2. **Configure WordPress Credentials:** Make sure the "Wordpress" and "HTTP Request" nodes are linked to a valid set of WordPress credentials in n8n. These credentials need permission to read and update posts and to create new tags.
3. **Configure Claude Opus 4.5 Credentials:** Verify that the "Claude Chat Model" nodes are linked to a valid Anthropic (Claude) API key credential in n8n.
4. **Execute:** Once the credentials and input data are set, click "Execute Workflow" on the manual trigger node. The workflow fetches the article and analyzes it with AI. It then creates any new tags and updates the post with the final selection of tags.

---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).
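Step 4 of "How it works" (and the "Clean and Reliable Data Handling" feature) merges and deduplicates tag IDs. That logic might look roughly like the Python sketch below. It is an illustration, not the workflow's Code node. The function names are hypothetical. The 4-tag cap is applied here as an extra safety net, even though the AI prompt already asks for at most 4. The `/wp-json/wp/v2/tags` endpoint (which takes a `name` when creating a tag) and the post's `tags` field (a list of term IDs) are part of the standard WordPress REST API. The sketch only builds request data and sends nothing.

```python
import json

MAX_TAGS = 4  # same limit the AI prompt enforces


def merge_tag_ids(existing_ids, created_ids, max_tags=MAX_TAGS):
    """Combine selected and newly created tag IDs, drop duplicates, keep order, cap length."""
    seen = set()
    merged = []
    for raw_id in [*existing_ids, *created_ids]:
        tag_id = int(raw_id)  # IDs may arrive as strings from upstream JSON
        if tag_id not in seen:
            seen.add(tag_id)
            merged.append(tag_id)
    return merged[:max_tags]


def create_tag_request(base_url, name):
    """Build (not send) the POST that creates one new tag in WordPress."""
    endpoint = base_url.rstrip("/") + "/wp-json/wp/v2/tags"
    return endpoint, json.dumps({"name": name.strip()})


def update_post_payload(existing_ids, created_ids):
    """JSON body for updating the post's tags (WordPress expects a list of term IDs)."""
    return json.dumps({"tags": merge_tag_ids(existing_ids, created_ids)})
```

For example, existing IDs `[12, "7", 12]` and created IDs `[31, 7, 44]` merge to `[12, 7, 31, 44]`. In the workflow itself, the credentialed HTTP Request and WordPress nodes do the actual sending.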

Build a RAG system by uploading PDFs to the Google Gemini File Search Store

This workflow implements a **Retrieval-Augmented Generation (RAG)** system using **Google Gemini's File Search API**. Users upload files to a dedicated search store and then ask questions about their content in a chat interface. The system automatically retrieves relevant information from the uploaded files to provide accurate, context-aware answers.

---

### **Key Advantages**

**1. ✅ Seamless Integration of File Upload + AI Context**

The workflow automates the entire lifecycle:

* Upload file
* Index file
* Retrieve content for AI chat

Everything happens inside one n8n automation, without manual steps.

**2. ✅ Automatic Retrieval for Every User Query**

The AI agent is instructed to always query the Search Store. This ensures:

* More accurate answers
* Context-aware responses
* The ability to reference the exact content the user has uploaded

This makes it well suited to knowledge bases, documentation Q&A, internal tools, and support.

**3. ✅ Reusable Search Store for Multiple Sessions**

Once created, the Search Store can be reused:

* Multiple files can be imported
* Many queries can draw on the same indexed data

This provides a sustainable foundation for scalable RAG operations.

**4. ✅ Visual and Modular Workflow Design**

Thanks to n8n's node-based flow:

* Each step is clearly separated
* The workflow is easy to debug
* The workflow is easy to expand (e.g., adding authentication, connecting to a database, notifications)

**5. ✅ Supports Both Form Submission and Chat Messages**

The workflow has two entry points:

* A form for uploading files
* A chat trigger for RAG conversations

As a result, the system can be embedded in multiple user interfaces.

**6. ✅ Compliant and Efficient Interaction With Gemini APIs**

The workflow follows the structure of Gemini's File Search API:

* `/fileSearchStores` (create store)
* `upload` endpoint
* `importFile` endpoint
* `generateContent` with the file search tool

This keeps the workflow compatible with the API and leaves room for future extensions.

**7. ✅ Memory-Aware Conversations**

With the **Memory Buffer** node, the chat session preserves context across messages, which makes conversations more natural.

---

### **How it Works**

#### **STEP 1 - Create a new Search Store**

This step is triggered manually via the *"Execute workflow"* node. It sends a request to the Gemini API to create a **FileSearch Store**, which acts as a private vector index for your documents.

* The store name is then saved using a *Set* node.
* This store is later used for file import and retrieval.

#### **STEP 2 - Upload and import a file into the Search Store**

When the form is submitted (through the *Form Trigger*), the workflow:

1. **Accepts a file upload** via the form.
2. **Uploads the file** to Gemini using the `/upload` endpoint.
3. **Imports the uploaded file into the Search Store**, making it searchable.

This step stores, chunks, and indexes the content so the AI model can retrieve relevant sections later.

#### **STEP 3 - RAG-enabled Chat with Google Gemini**

When a chat message is received:

* The workflow loads the Search Store identifier.
* A *LangChain Agent* is used together with the **Google Gemini Chat Model**.
* The model is configured to **always use the SearchStore tool**, so every user query is enriched by a search inside the indexed files.
* The system retrieves relevant chunks from your documents and uses them as context to generate more accurate responses.

This creates a fully functioning **RAG chatbot** powered by Gemini.

---

### **Set up Steps**

Before activating this workflow, complete the following configuration:

1. **Google Gemini API Credentials:** Make sure you have a valid Google AI Studio API key. Enter it in all HTTP Request nodes (`Create Store`, `Upload File`, `Import to Store`, and `SearchStore`).
2. **Configure the Search Store:**
   * Manually trigger the "Create Store" node once via the "Execute Workflow" button. This calls the Gemini API to create a new File Search Store and returns its resource name (e.g., `fileSearchStores/my-store-12345`).
   * Copy this resource name into the **"Get Store"** and **"Get Store1"** Set nodes. In both nodes, replace the placeholder value `fileSearchStores/my-store-XXX` with the name of your newly created store.
3. **Deploy Triggers:** For production use, activate the workflow. Activation generates public URLs for two nodes: **"On form submission"** (for file uploads) and **"When chat message received"** (for the chat interface). You can embed these URLs in your applications (e.g., a website or dashboard).

Once these steps are complete, the workflow is ready. Users can upload files via the form and then ask questions about them in the chat.

---

### **Need help customizing?**

[Contact me](mailto:[email protected]) for consulting and support or add me on [LinkedIn](https://www.linkedin.com/in/davideboizza/).
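The HTTP Request nodes from STEP 1 to STEP 3 send request bodies that can be sketched in Python as below. The sketch assumes the request shapes of the Gemini REST API (`v1beta`) as documented for File Search, so verify it against the official reference before relying on it. Several details are assumptions:

* The helper names are hypothetical.
* The model name `gemini-2.5-flash` is hypothetical.
* The example file name `files/abc123` is hypothetical.
* The upload step is omitted, because it goes through a separate upload endpoint.

The code only builds URLs and bodies; nothing is sent.

```python
BASE_URL = "https://generativelanguage.googleapis.com/v1beta"


def headers(api_key: str) -> dict:
    """Gemini API key header used by every HTTP Request node."""
    return {"x-goog-api-key": api_key, "Content-Type": "application/json"}


def create_store_request(display_name: str) -> dict:
    """STEP 1 (POST): create a File Search Store; the response carries its resource name."""
    return {"url": f"{BASE_URL}/fileSearchStores", "body": {"displayName": display_name}}


def import_file_request(store_name: str, file_name: str) -> dict:
    """STEP 2 (POST): import an already uploaded file (e.g. "files/...") into the store."""
    return {"url": f"{BASE_URL}/{store_name}:importFile", "body": {"fileName": file_name}}


def ask_request(store_name: str, question: str, model: str = "gemini-2.5-flash") -> dict:
    """STEP 3 (POST): generateContent with the file search tool pointed at the store."""
    body = {
        "contents": [{"role": "user", "parts": [{"text": question}]}],
        "tools": [{"fileSearch": {"fileSearchStoreNames": [store_name]}}],
    }
    return {"url": f"{BASE_URL}/models/{model}:generateContent", "body": body}
```

The store name passed to `import_file_request` and `ask_request` is the same value (e.g. `fileSearchStores/my-store-12345`) that you copy into the "Get Store" and "Get Store1" nodes.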

Automate Calendly user onboarding & offboarding with Google Sheets and human approval