Maxim Osipovs

Workflows by Maxim Osipovs

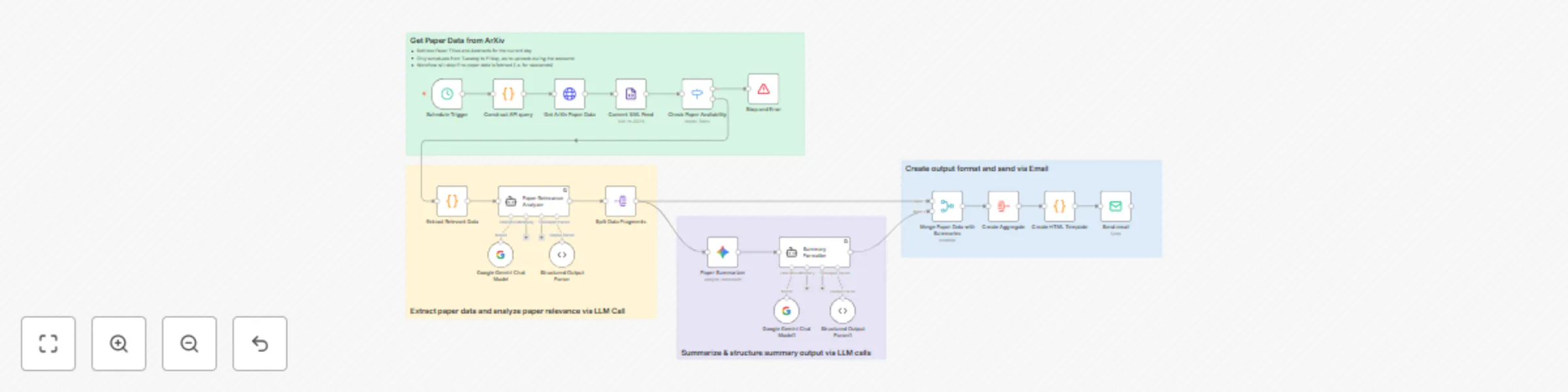

Monitor AI research papers with Gemini-powered filtering and email summaries

This n8n workflow template implements a research paper monitoring system that automatically tracks new publications in ArXiv's Artificial Intelligence category, filters them for relevance using LLM-based analysis, generates structured summaries, and delivers a formatted email digest.

The system uses a three-stage pipeline architecture:

1. Automated paper retrieval from ArXiv's API
2. AI-powered relevance filtering and analysis via Google Gemini
3. Summarization with HTML formatting for clean email delivery

This eliminates the need to manually browse ArXiv daily while ensuring you only receive summaries of papers genuinely relevant to your research interests.

## What This Template Does (Step-by-Step)

1. Runs on a configurable schedule (Tuesday-Friday) to fetch new papers from ArXiv's cs.AI category via their API.
2. Uses Google Gemini with structured output parsing to analyze each paper's relevance based on your defined criteria for "applicable AI research."
3. Generates concise, structured summaries for the three selected papers using a separate LLM call.
4. Aggregates the three selected papers' data and summaries into a single, readable digest.

## Important Notes

- The workflow only runs Tuesday through Friday, as ArXiv typically doesn't publish new papers on weekends.
- Customize the "Paper Relevance Analyzer" criteria to match your specific research interests.
- Adjust the similarity threshold and selection logic to control how many papers are included in each digest.

## Required Integrations:

- ArXiv API (no authentication required)
- Google Gemini API (for relevance analysis and summarization)
- Email service (SMTP or an email provider such as Gmail or SendGrid)
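For readers who want to see the retrieval stage outside n8n, the scheduled fetch from ArXiv's cs.AI category could be sketched roughly as follows. This is a minimal Python illustration, not the template's actual node configuration: the function names (`should_run`, `build_query_url`, `parse_feed`) and the `max_results` default are assumptions made for this example. The query parameters follow the public ArXiv API, which returns an Atom feed.

```python
from datetime import date
from urllib.parse import urlencode
import xml.etree.ElementTree as ET

ARXIV_API = "http://export.arxiv.org/api/query"
ATOM = "{http://www.w3.org/2005/Atom}"  # Atom namespace used by the feed


def should_run(today: date) -> bool:
    """Return True on Tuesday through Friday (Monday is weekday 0)."""
    return 1 <= today.weekday() <= 4


def build_query_url(category: str = "cs.AI", max_results: int = 20) -> str:
    """Build a query URL for the newest submissions in one category.

    The default of 20 results is an assumption for this sketch.
    """
    params = {
        "search_query": f"cat:{category}",
        "sortBy": "submittedDate",
        "sortOrder": "descending",
        "max_results": max_results,
    }
    return f"{ARXIV_API}?{urlencode(params)}"


def parse_feed(xml_text: str) -> list[dict]:
    """Extract title, abstract, and link from each Atom <entry>."""
    root = ET.fromstring(xml_text)
    papers = []
    for entry in root.iter(f"{ATOM}entry"):
        papers.append({
            # Collapse the line breaks and runs of spaces found in feed text.
            "title": " ".join(entry.findtext(f"{ATOM}title", "").split()),
            "summary": " ".join(entry.findtext(f"{ATOM}summary", "").split()),
            "link": entry.findtext(f"{ATOM}id", ""),
        })
    return papers
```

In a real run, the URL from `build_query_url()` would be fetched with any HTTP client and the response body passed to `parse_feed()`; the resulting list of dictionaries is what the relevance-filtering stage would then consume.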
## Best For:

- 🎓 Academic researchers tracking AI developments in their field
- 💼 ML practitioners and data scientists staying current with new techniques
- 🧠 AI enthusiasts who want curated, digestible research updates
- 🏢 Technical teams needing regular competitive intelligence on emerging approaches

## Key Benefits:

- ✅ Automates daily ArXiv monitoring, saving 60+ minutes of manual research time
- ✅ Uses AI to pre-filter papers, reducing information overload by 80-90%
- ✅ Delivers structured, readable summaries instead of raw abstracts
- ✅ Fully customizable relevance criteria to match your specific interests
- ✅ Professional HTML formatting makes digests easy to scan and share
- ✅ Eliminates the risk of missing important papers in your field

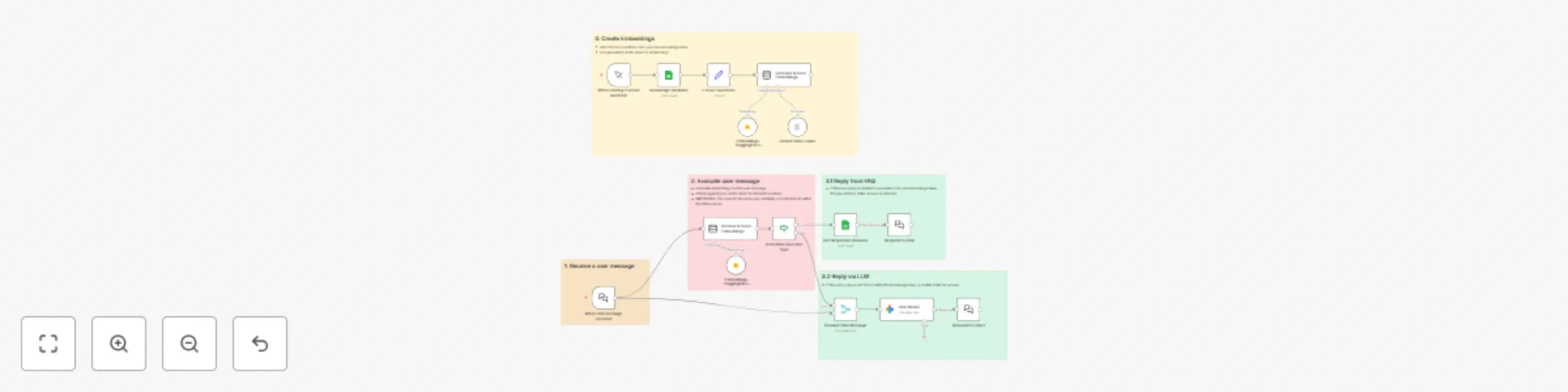

Dual-path customer support system with Google Sheets, vectors & Gemini

This n8n workflow template implements a dual-path architecture for AI customer support, based on the principles outlined in the research paper "[A Locally Executable AI System for Improving Preoperative Patient Communication: A Multi-Domain Clinical Evaluation](https://arxiv.org/abs/2510.01671)" (Sato et al.).

The system, named **LENOHA** (Low Energy, No Hallucination, Leave No One Behind Architecture), uses a high-precision classifier to differentiate between high-stakes queries and casual conversation. Queries matching a known FAQ are answered with a pre-approved, verbatim response, structurally eliminating hallucination risk. All other queries are routed to a standard generative LLM for conversational flexibility.

This template provides a **practical blueprint** for building safer, more reliable, and cost-efficient AI agents, particularly in **regulated** or **high-stakes domains** where factual accuracy is critical.

### What This Template Does (Step-by-Step)

- Loads an expert-curated FAQ from Google Sheets and creates a searchable vector store from the questions during a one-time setup flow.
- Receives incoming user queries in real-time via a chat trigger.
- Classifies user intent by converting the query to an embedding and searching the vector store for the most semantically similar FAQ question.
- Routes the query down one of two paths based on a configurable similarity score threshold.
- Responds with a verbatim, pre-approved answer if a match is found (safe path), or generates a conversational reply via an LLM if no match is found (casual path).

### Important Note for Production Use

This template uses an in-memory Simple Vector Store for demonstration purposes. For a production application, this should be replaced with a persistent vector database (e.g., Pinecone, Chroma, Weaviate, Supabase) to store your embeddings permanently.
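The classify-and-route step described in this template can be illustrated with a small Python sketch. It is not the workflow's actual implementation: the function names, the in-memory list standing in for the vector store, the use of cosine similarity as the score, and the `0.8` threshold are all assumptions chosen for the example. In the template, embeddings come from the Hugging Face API and the threshold is whatever you configure.

```python
import math
from typing import Callable, Sequence

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def route_query(
    query_text: str,
    query_vec: Vector,
    faq: Sequence[tuple[Vector, str]],
    generate_reply: Callable[[str], str],
    threshold: float = 0.8,  # hypothetical value; tune against your own FAQ
) -> tuple[str, str]:
    """Return (path, response) for one incoming query.

    faq holds (question_embedding, approved_answer) pairs. If the closest
    FAQ question scores at or above the threshold, the approved answer is
    returned verbatim (safe path). Otherwise the query is handed to the
    generative model callback (casual path).
    """
    best_score, best_answer = max(
        ((cosine_similarity(query_vec, vec), answer) for vec, answer in faq),
        key=lambda pair: pair[0],
        default=(0.0, ""),
    )
    if best_score >= threshold:
        return "safe", best_answer
    return "casual", generate_reply(query_text)
```

Because the safe path returns the stored answer unchanged and never calls the LLM, its output is deterministic for a given FAQ and threshold. Raising the threshold sends more queries to the casual path; lowering it makes FAQ matches more permissive.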
### Required Integrations:

- Google Sheets (for the FAQ knowledge base)
- Hugging Face API (for creating embeddings)
- An LLM provider (e.g., OpenAI, Anthropic, Mistral)
- (Recommended) A persistent Vector Store integration

### Best For:

- 🏦 Organizations in regulated industries (finance, healthcare) requiring high accuracy.
- 💰 Applications where reducing LLM operational costs is a priority.
- ⚙️ Technical support agents that must provide precise, unchanging information.
- 🔒 Systems where auditability and deterministic responses for known issues are required.

### Key Benefits:

- ✅ Structurally eliminates hallucination risk for known topics.
- ✅ Reduces reliance on expensive generative models for common queries.
- ✅ Ensures deterministic, accurate, and consistent answers for your FAQ.
- ✅ Provides high-speed classification via vector search.
- ✅ Implements a research-backed architecture for building safer AI systems.