Jaruphat J.

Workflows by Jaruphat J.

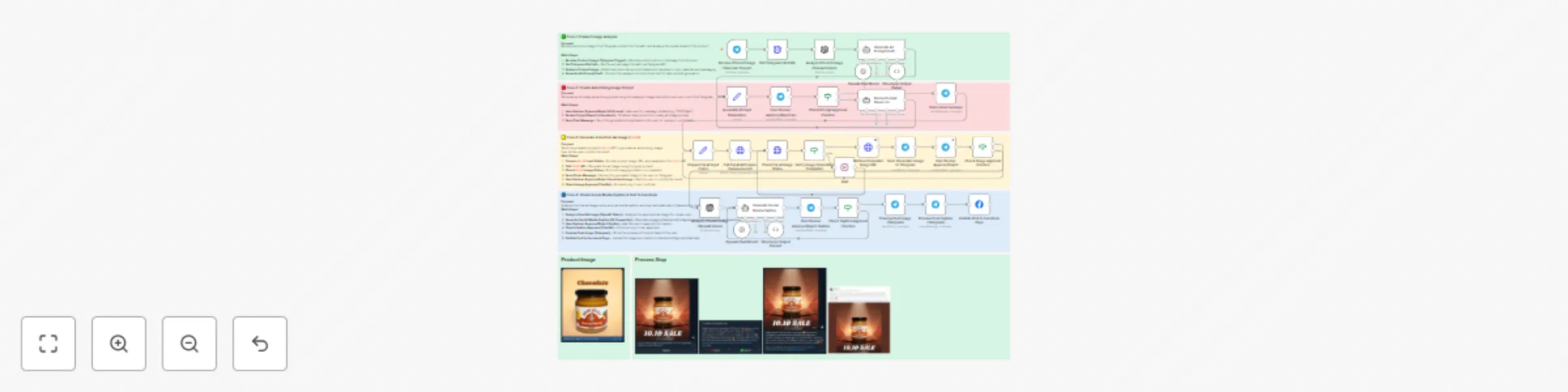

Automate product ad creation with Telegram, Fal.AI & Facebook posting

This workflow automates the entire process of creating and publishing social media ads, directly from Telegram. By s...

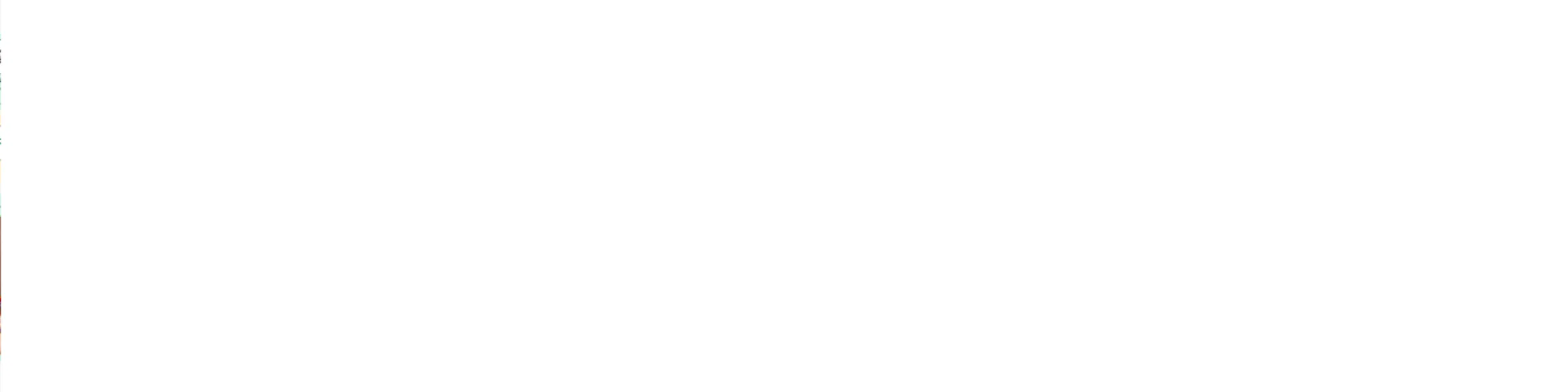

Generate AI product ad images from Google Sheets using Fal.ai and OpenAI

⚠️ Note: All sensitive credentials should be set via n8n Credentials or environment variables. Do not hardcode API ke...

Generate UGC ads from Google Sheets with Fal.ai models (nano-banana, WAN2.2, Veo3)

⚠️ Note: All sensitive credentials should be set via n8n Credentials or environment variables. Do not hardcode API ke...

Process Thai documents with TyphoonOCR & AI to Google Sheets (multi-page PDF)

⚠️ Note: This template requires a community node and works only on self-hosted n8n installations. It uses the Typhoon...

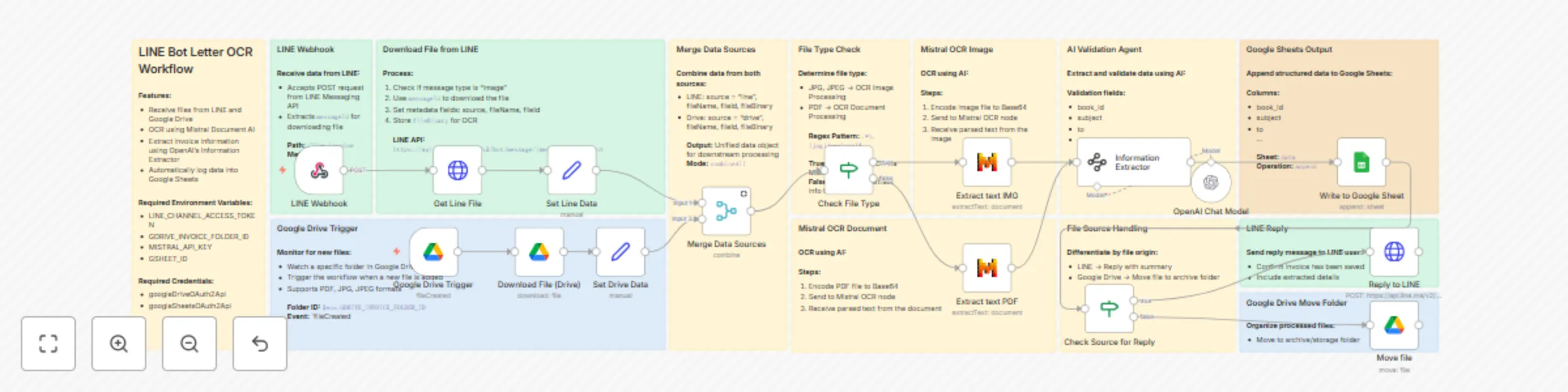

Extract data from Thai Government letters with Mistral OCR and store in Google Sheets

LINE OCR Workflow to Extract and Save Thai Government Letters to Google Sheets: This template automates the extraction...

Generate cinematic videos from text prompts with GPT-4o, Fal.AI Seedance & Audio

Who’s it for? This workflow is built for AI storytellers, content creators, YouTubers, and short-form video marke...

Generate video from prompt using Vertex AI Veo 3 and upload to Google Drive

Who’s it for? This template is perfect for content creators, AI enthusiasts, marketers, and developers who want to aut...

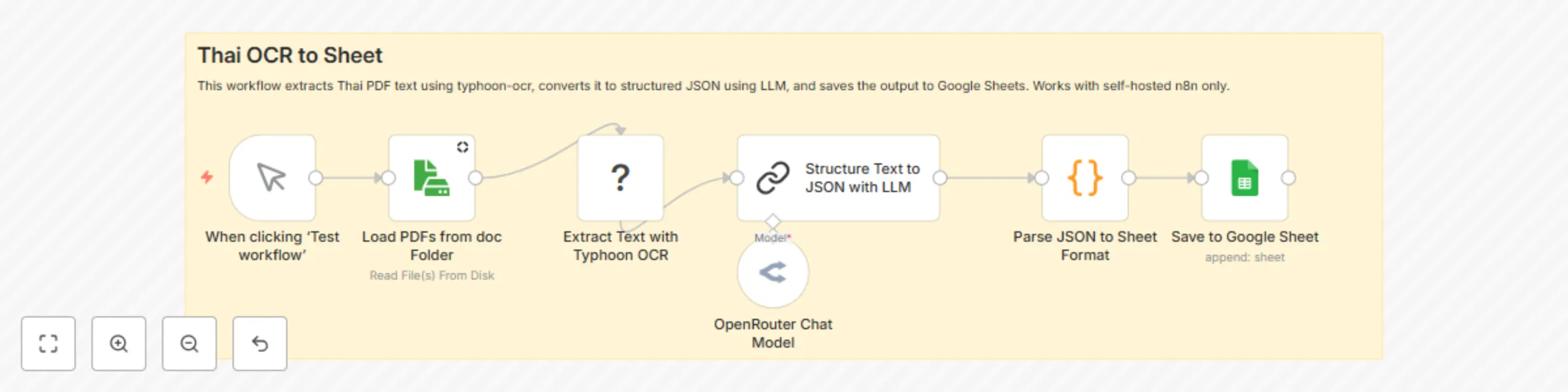

Extract and structure Thai documents to Google Sheets using Typhoon OCR and Llama 3.1

⚠️ Note: This template requires a community node and works only on self-hosted n8n installations. It uses the Typhoon...

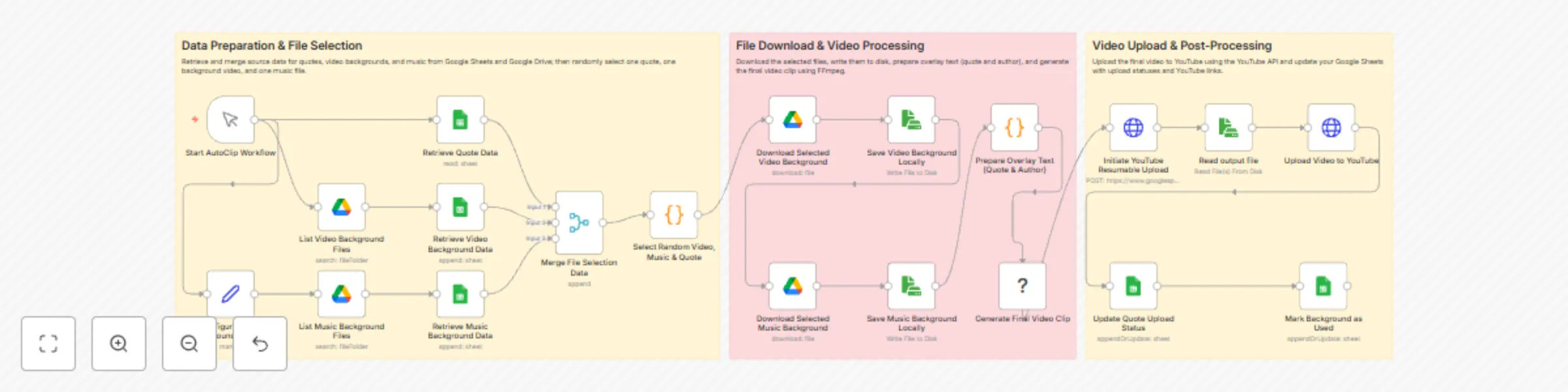

Automatically create cinematic quote videos with AI and upload to YouTube

⚠️ Important Disclaimer: This template is only compatible with a self-hosted n8n instance using a community node. Who...

Automatically create and upload YouTube videos with quotes in Thai using FFmpeg

Who is this for? This workflow is perfect for digital content creators, marketers, and social media managers who regu...

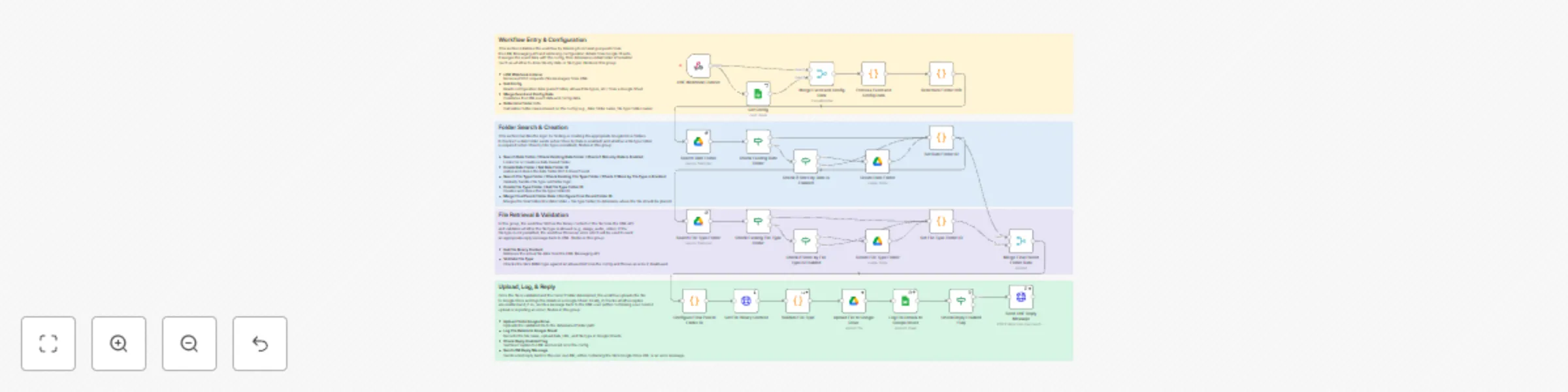

Automatically save & organize LINE message files in Google Drive with Sheets logging

Overview: This workflow automatically saves files received via the LINE Messaging API into Google Drive and logs the file...

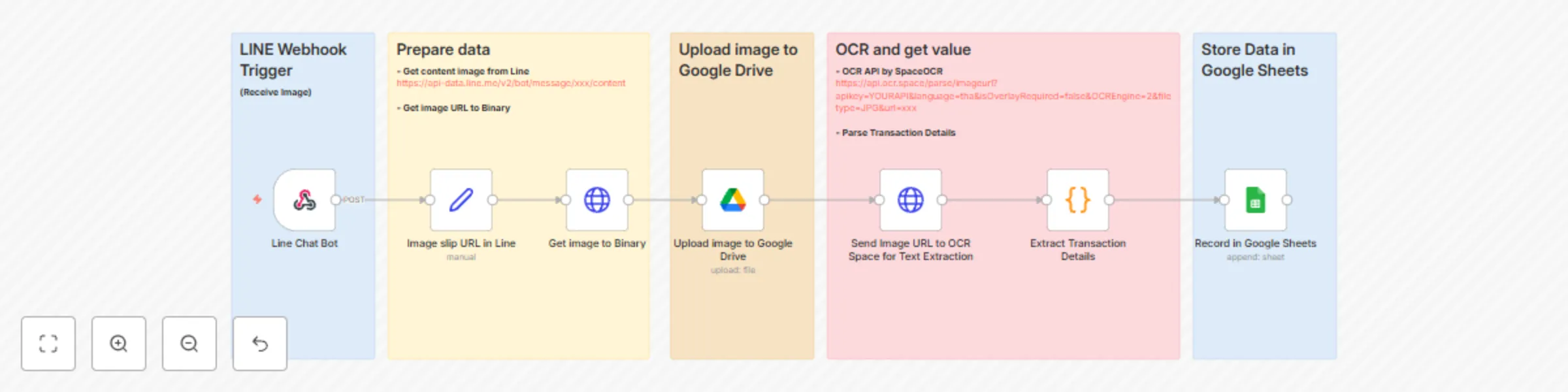

Extract Thai bank slip data from LINE using SpaceOCR and save to Google Sheets

Who is this for? This workflow is ideal for businesses, accountants, and finance teams who receive bank slip images v...

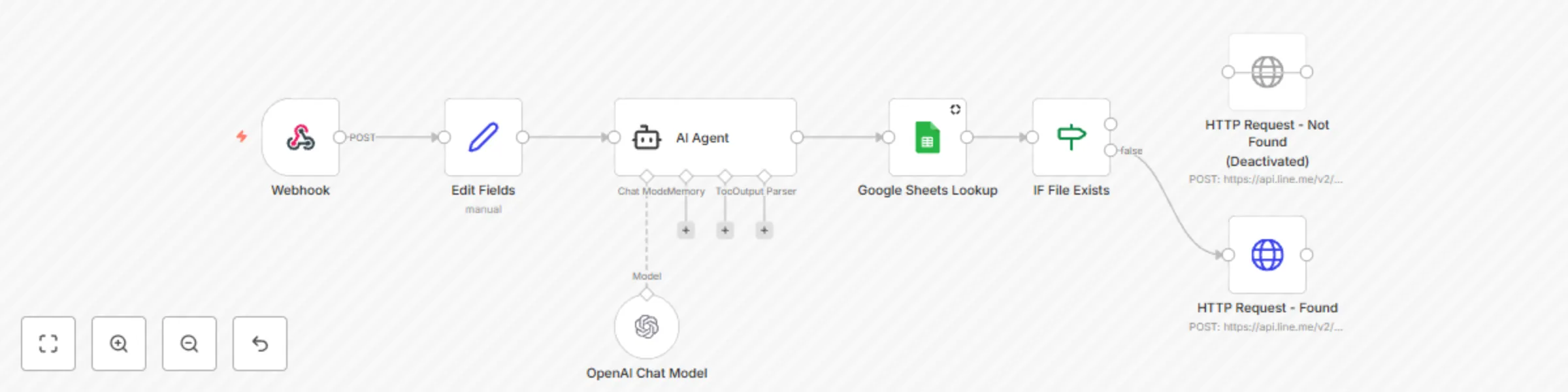

LINE BOT - Google Sheets file lookup with AI agent

This workflow integrates LINE BOT, AI Agent (GPT), Google Sheets, and Google Drive to enable users to search for file...