
Jainik Sheth

2 Workflows

Workflows by Jainik Sheth

Free advanced

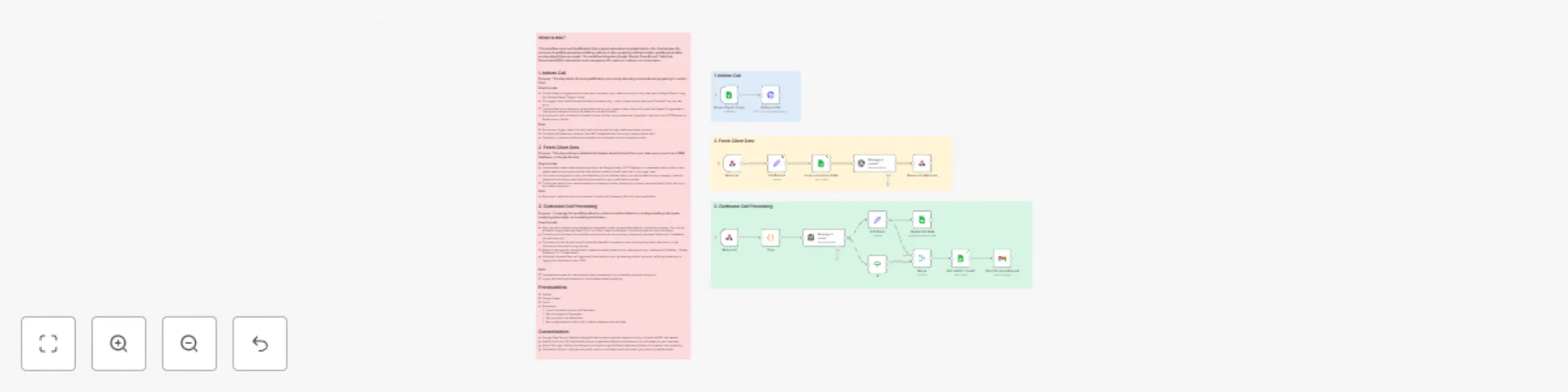

Automated lead qualification voice agent with OpenAI GPT, Twilio, Elevenlabs and Gmail

What is this? This workflow is a Lead Qualification Voice Agent automation template built in n8n. It automates the pr...


Jainik Sheth · Lead Nurturing

18 Oct 2025

802

0

Free advanced

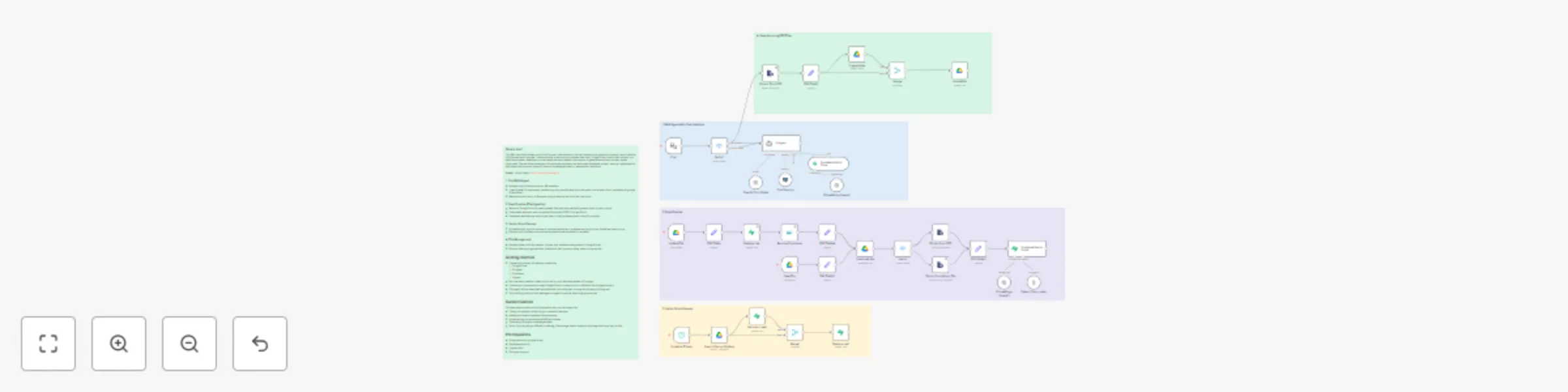

Document-based RAG chat assistant with Google Drive, Supabase & OpenAI

What is this? This RAG workflow allows you to build a smart chat assistant that can answer user questions based on an...


Jainik Sheth · Internal Wiki

9 Oct 2025

367

0