Guido X Jansen

2 Workflows

Workflows by Guido X Jansen

Free advanced

Generate consensus answers with multiple AI models & peer review system

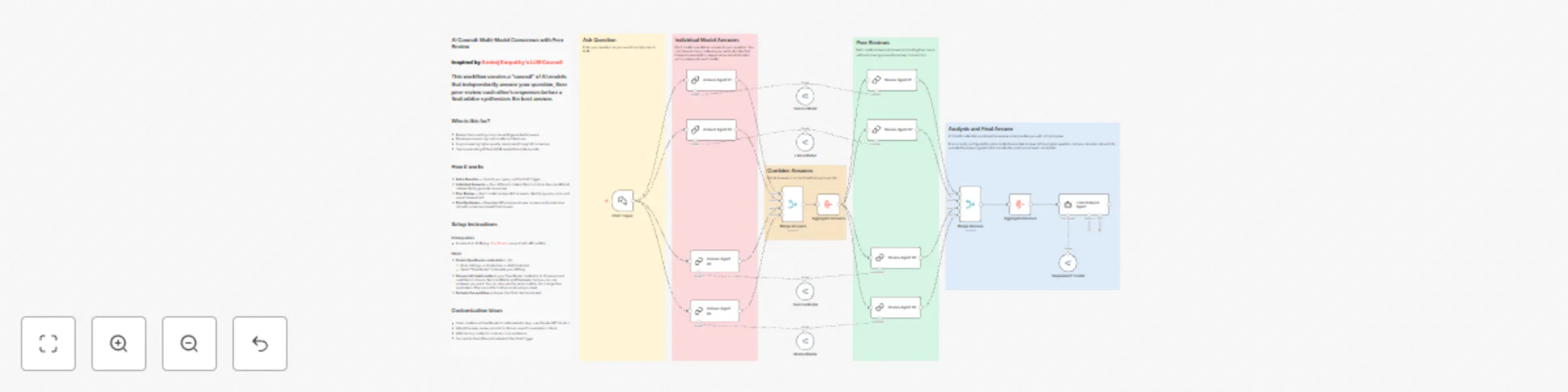

AI Council: Multi Model Consensus with Peer Review. Inspired by Andrej Karpathy's LLM Council, but rebuilt in n8n. Th...

Guido X Jansen Engineering

10 Dec 2025

415

0

Free advanced

Enrich LinkedIn profiles in NocoDB CRM with Apify scraper

Introduction: Manual LinkedIn data collection is time-consuming, error-prone, and results in inconsistent data quality...

Guido X Jansen Lead Generation

25 Jun 2025

934

0