Growth AI

Workflows by Growth AI

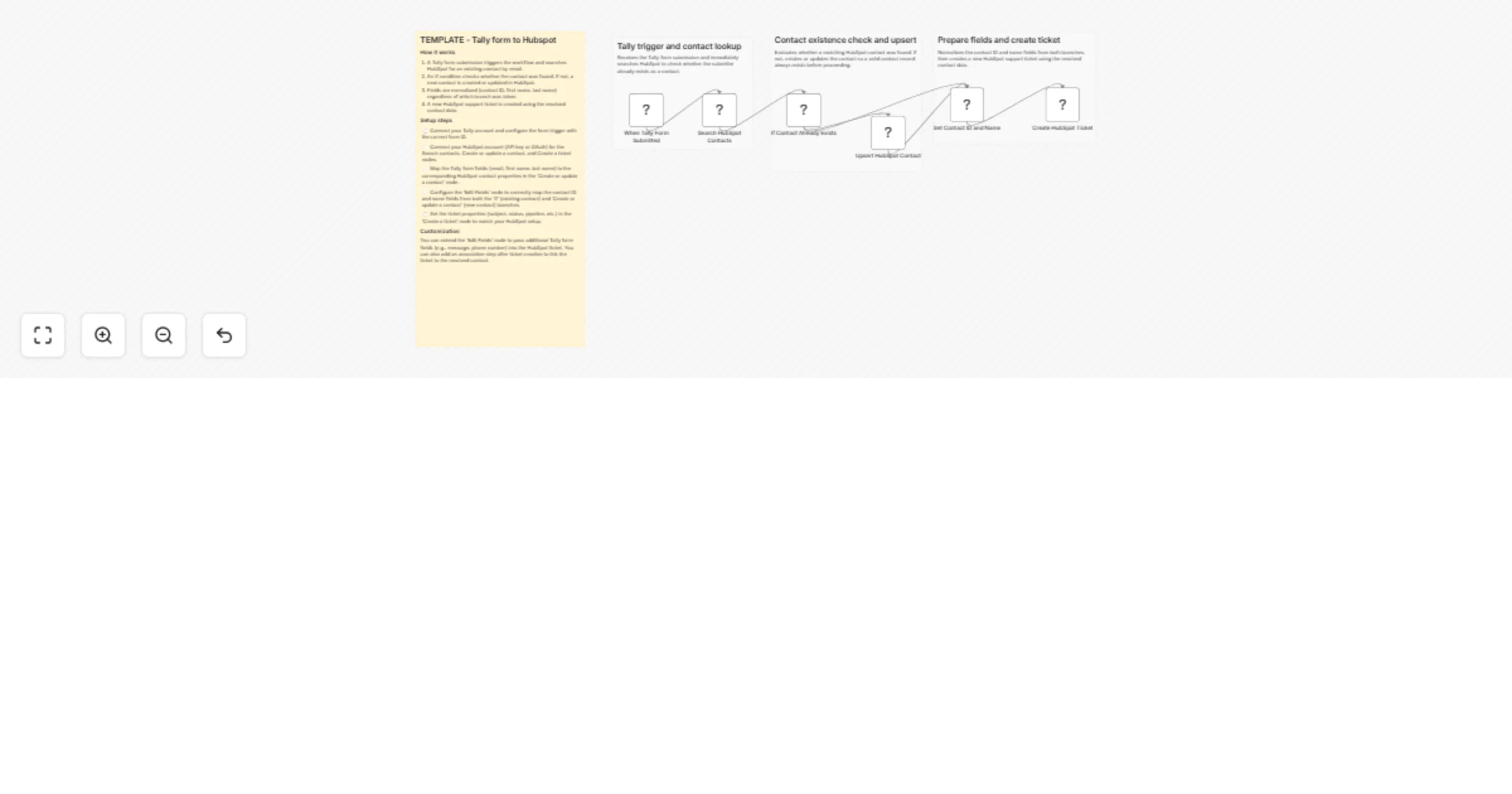

Create HubSpot support tickets from Tally form submissions

> ⚠️ Self-hosted only: this template uses a community node and cannot run on n8n Cloud. Who it's for: This wor...

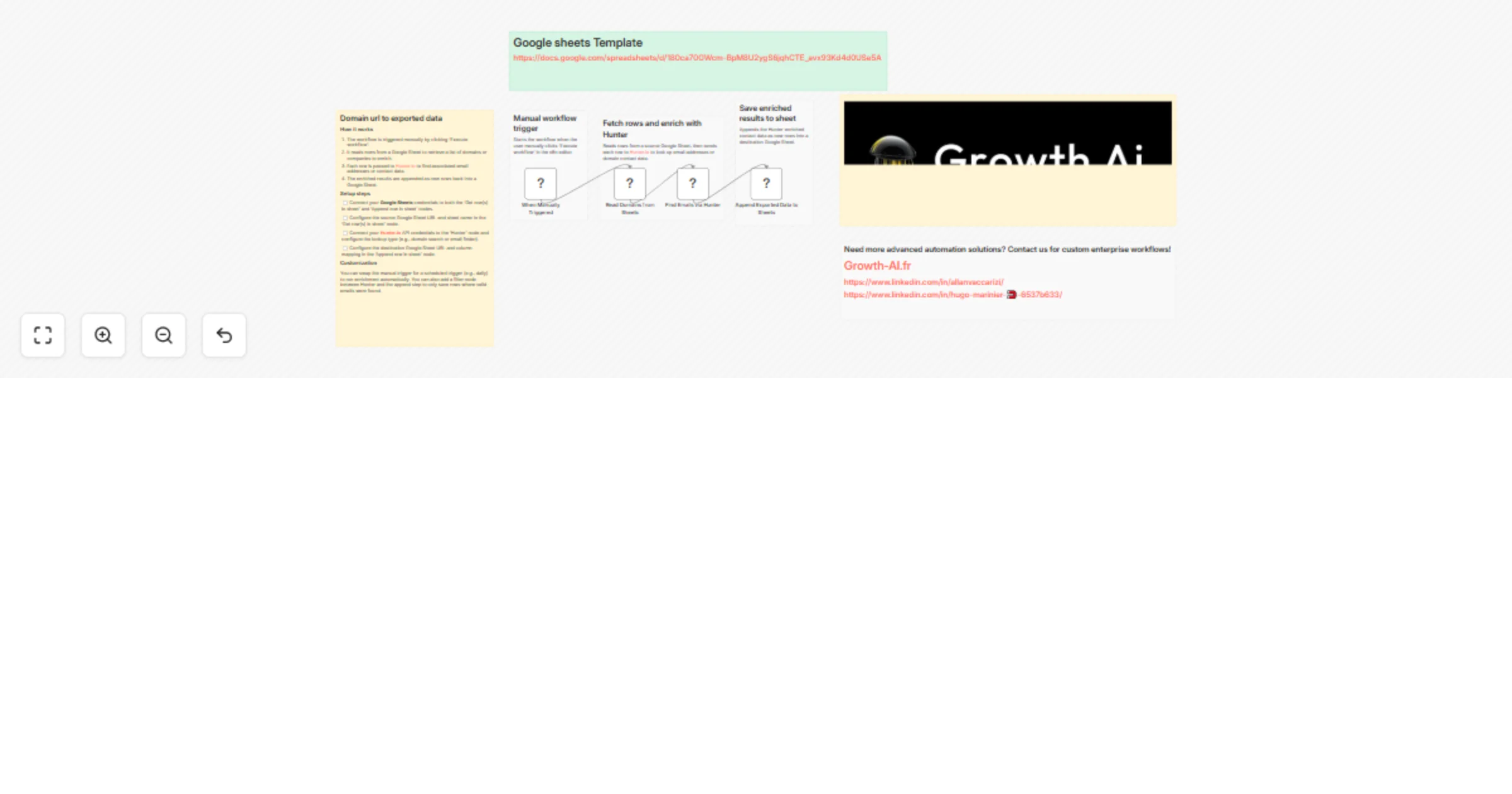

Enrich domain URLs with Hunter.io and export contacts to Google Sheets

📺 Full walkthrough video: https://youtu.be/uk mJRgYnfs Who it's for: This workflow is for sales teams, marketers, and...

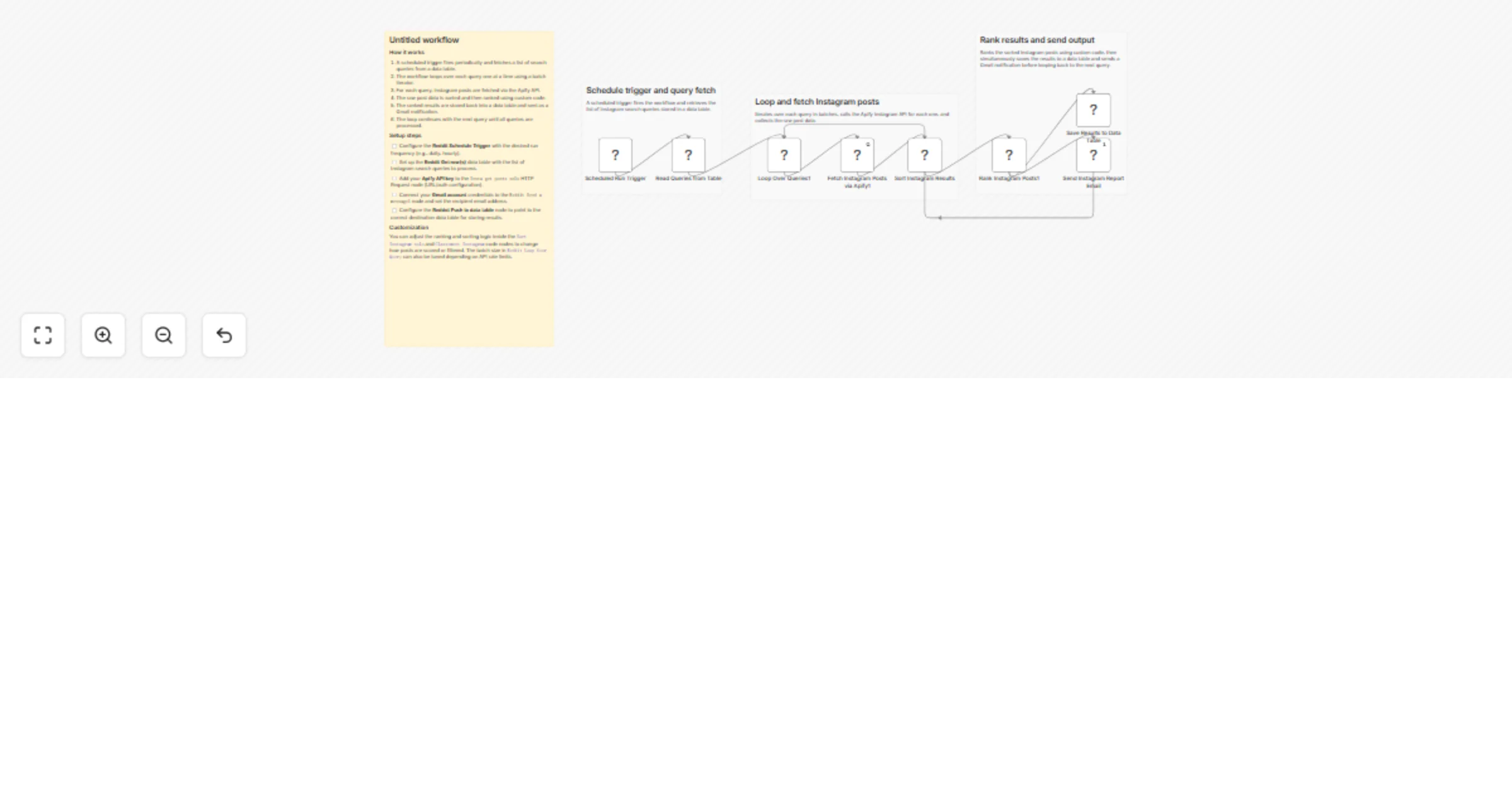

Monitor Instagram hashtag trends and email reports with Apify and Gmail

📺 Full walkthrough video: https://www.youtube.com/watch?v=Me4d4BILvHk What it does: This workflow automatically monit...

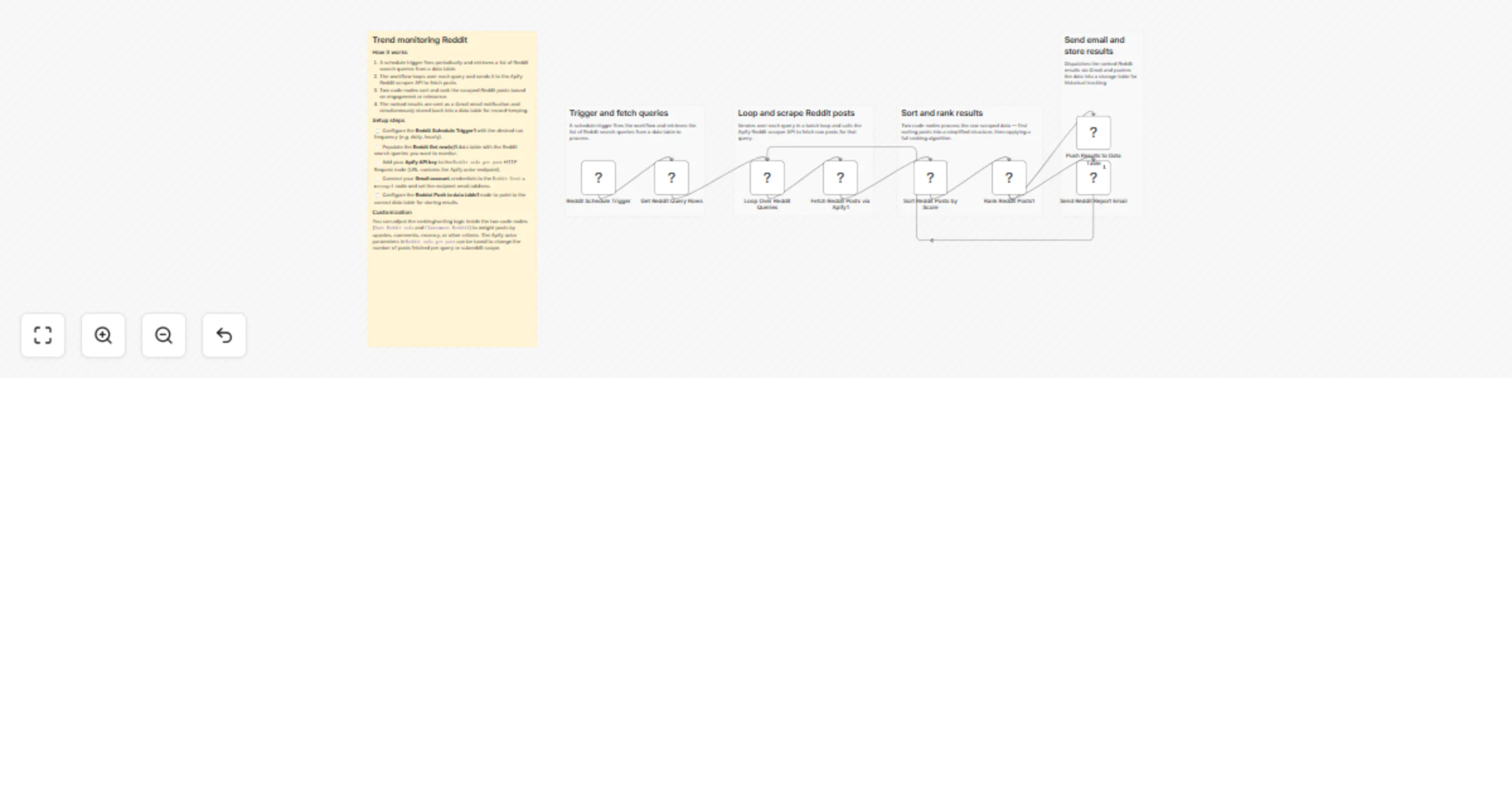

Monitor Reddit keyword trends and email reports with Apify

📺 Full walkthrough video: https://www.youtube.com/watch?v=Me4d4BILvHk What it does: This workflow automatically monit...

Monitor & filter French procurement tenders with BOAMP API and Google Sheets

French Public Procurement Tender Monitoring Workflow. Overview: This n8n workflow automates the monitoring and filterin...

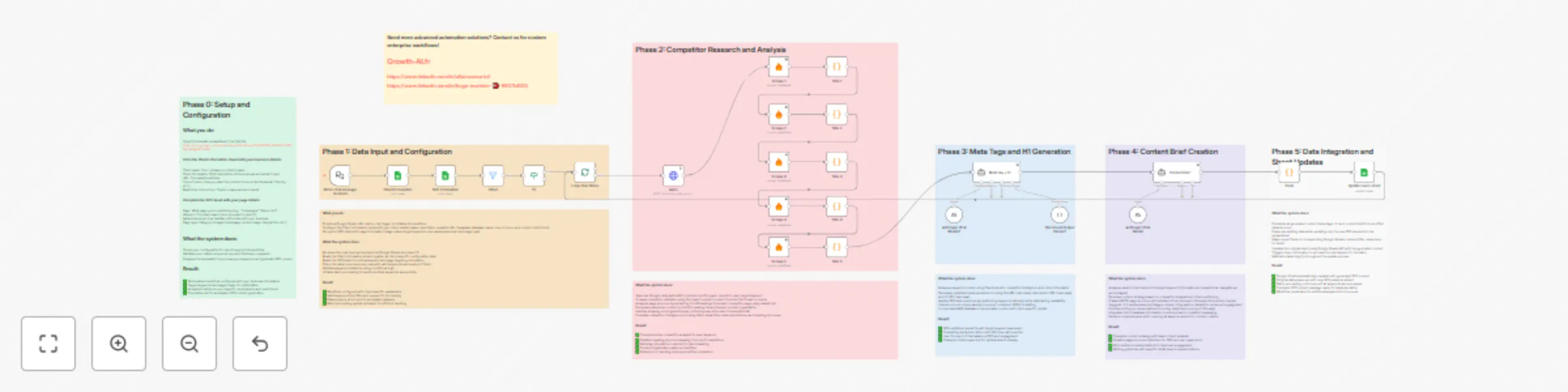

Generate SEO content with Claude AI & competitor analysis using Apify

SEO Content Generation Workflow (Basic Version): n8n Template Instructions. Who's it for: This workflow is designed for...

Generate SEO content with Claude AI, competitor analysis & Supabase RAG

SEO Content Generation Workflow: n8n Template Instructions. Who's it for: This workflow is designed for SEO professional...

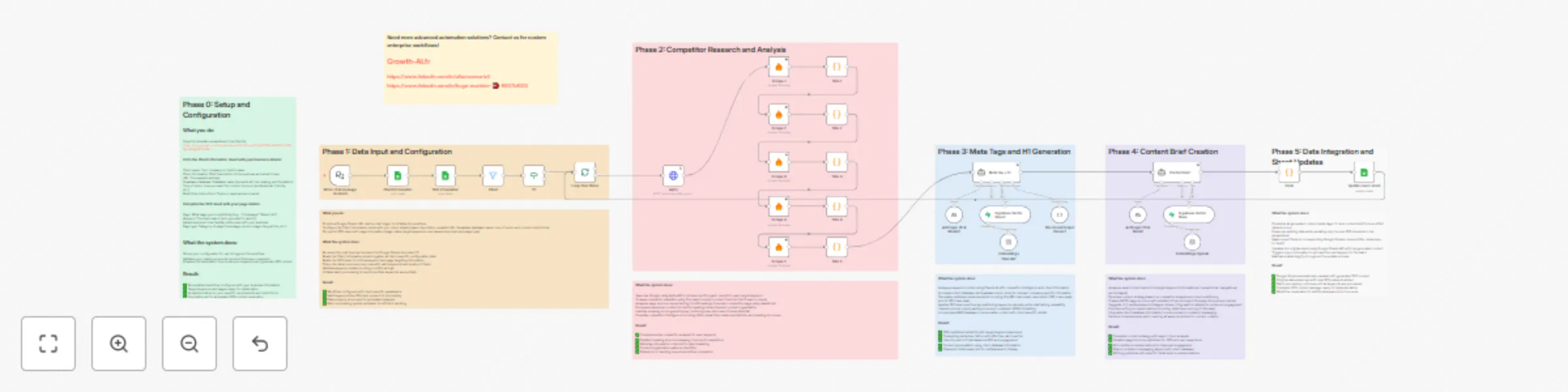

Generate SEO anchor texts from Google Sheets with Claude 4 Sonnet

SEO Anchor Text Generator with n8n and Claude AI. Generate optimized SEO anchor texts for internal linking using AI au...

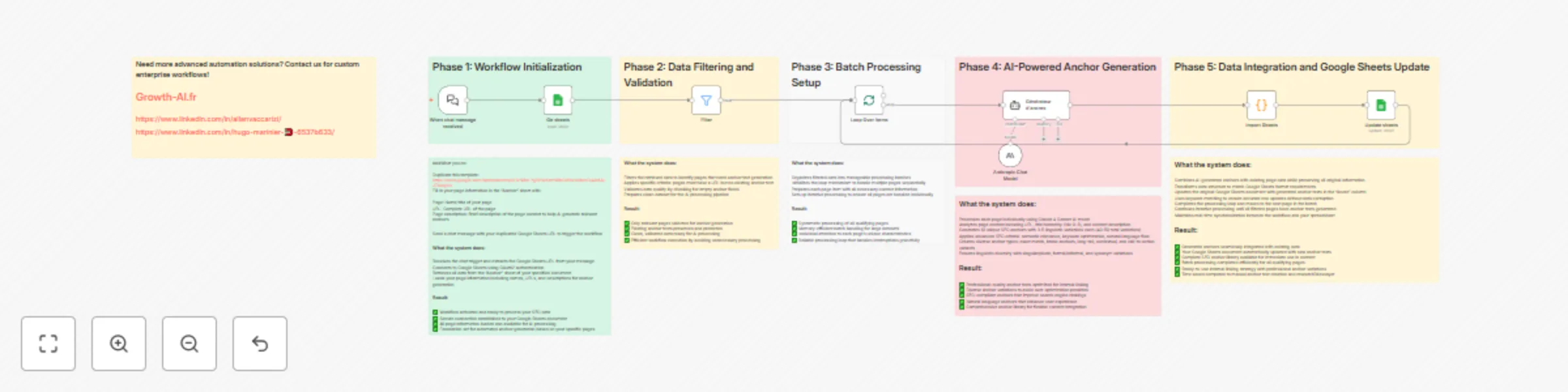

Generate UGC videos from product images with Gemini and VEO3

n8n UGC Video Generator: Setup Instructions. Transform Product Images into Professional UGC Videos with AI. This powerfu...

WhatsApp AI assistant with Claude & GPT4O: multi-format processing & productivity suite

WhatsApp AI Personal Assistant: n8n Workflow Instructions. Who's it for: This workflow is designed for business professi...

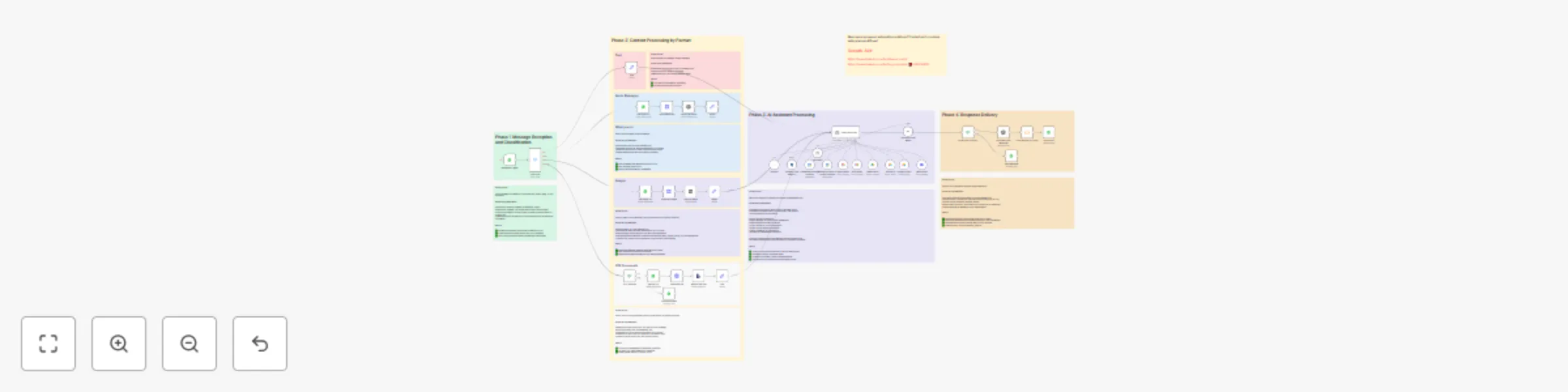

Build multi-client agentic RAG document processing pipeline with Supabase Vector DB

Ultimate n8n Agentic RAG Template. Author: Cole Medin. What is this? This template provides a complete implementation o...

Automated news monitoring with Claude 4 AI analysis for Discord & Google News

Who's it for: Marketing teams, business intelligence professionals, competitive analysts, and executives who need cons...

Monitor social media trends across Reddit, Instagram & TikTok with Apify

Who's it for: Social media managers, content creators, brand managers, and marketing teams who need to track keyword p...

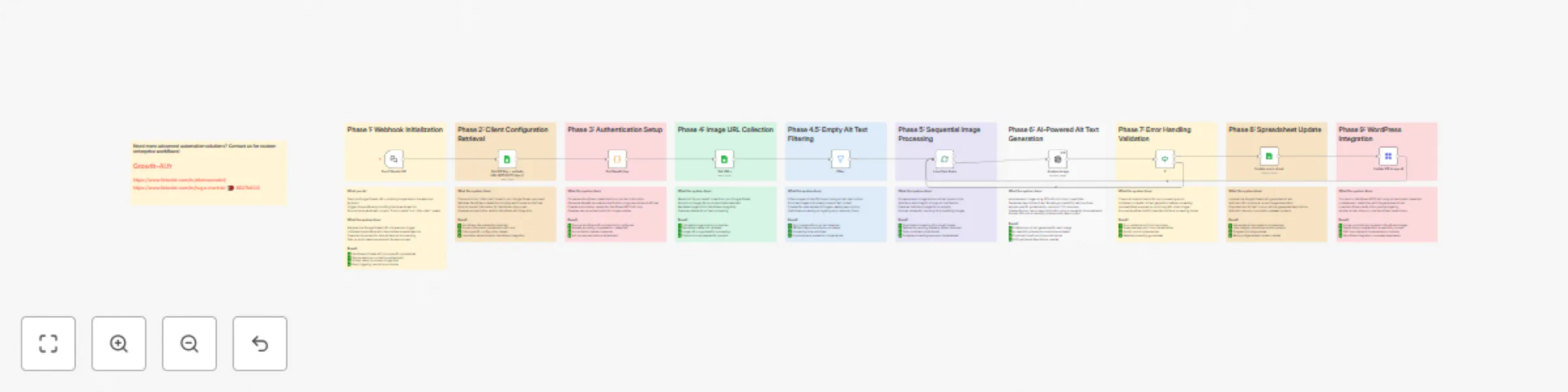

Generate accessible alt text with AI from Google Sheets to WordPress

AI-powered alt text generation from Google Sheets to WordPress media. Who's it for: WordPress site owners, content mana...

Automated Google Ads campaign reporting to Google Sheets with Airtable

Google Ads automated reporting to spreadsheets with Airtable. Who's it for: Digital marketing agencies, PPC managers, a...

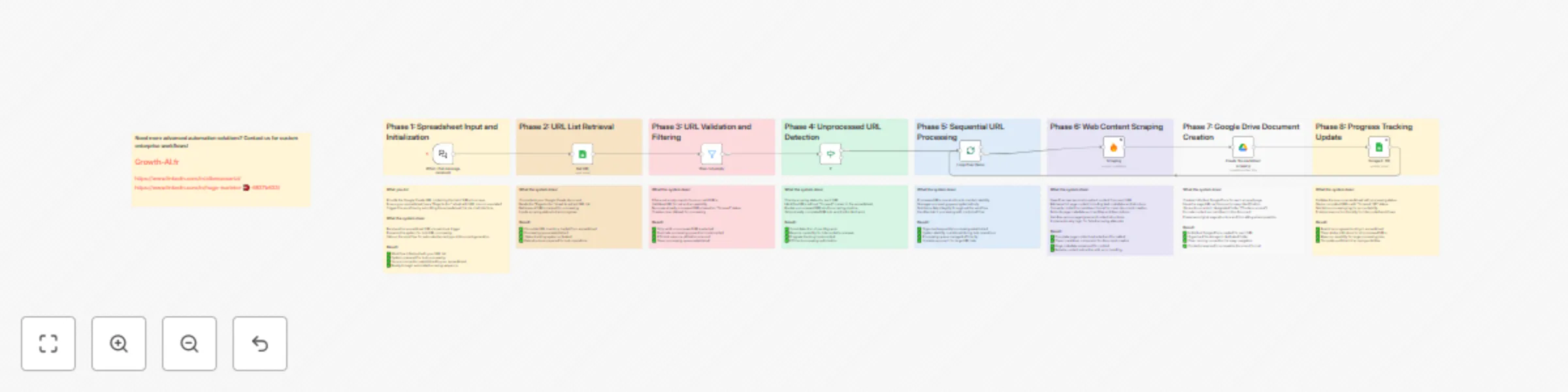

Batch scrape website URLs from Google Sheets to Google Docs with Firecrawl

This workflow contains community nodes that are only compatible with the self-hosted version of n8n. Firecrawl batch...

Automated task tracking & notifications with Motion and Airtable

Automated project status tracking with Airtable and Motion. Who's it for: Project managers, team leads, and agencies wh...

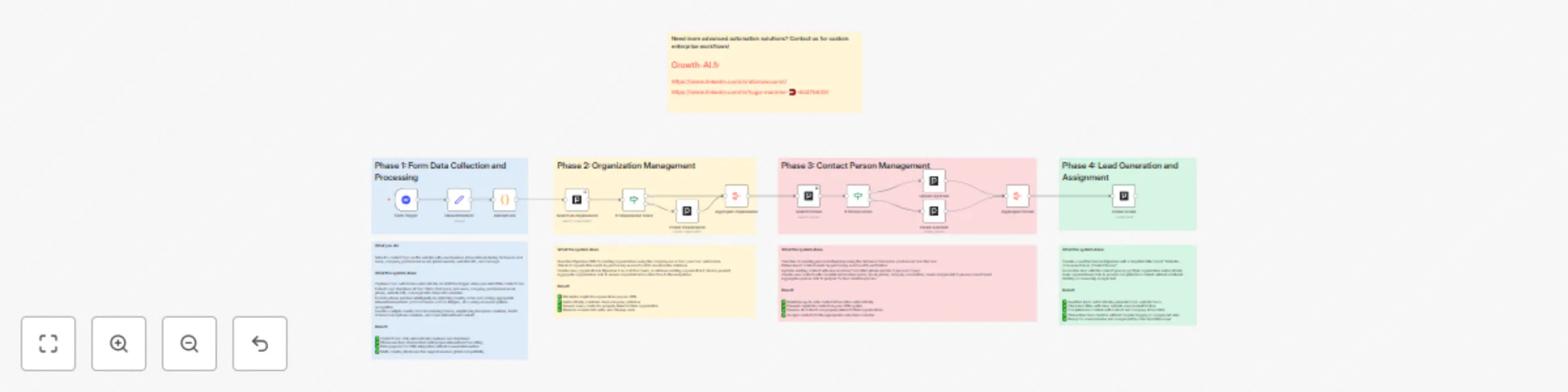

Complete Webflow to Pipedrive integration with smart phone formatting

Advanced Form Submission to CRM Automation with International Phone Support. Who's it for: Sales teams, marketing profe...

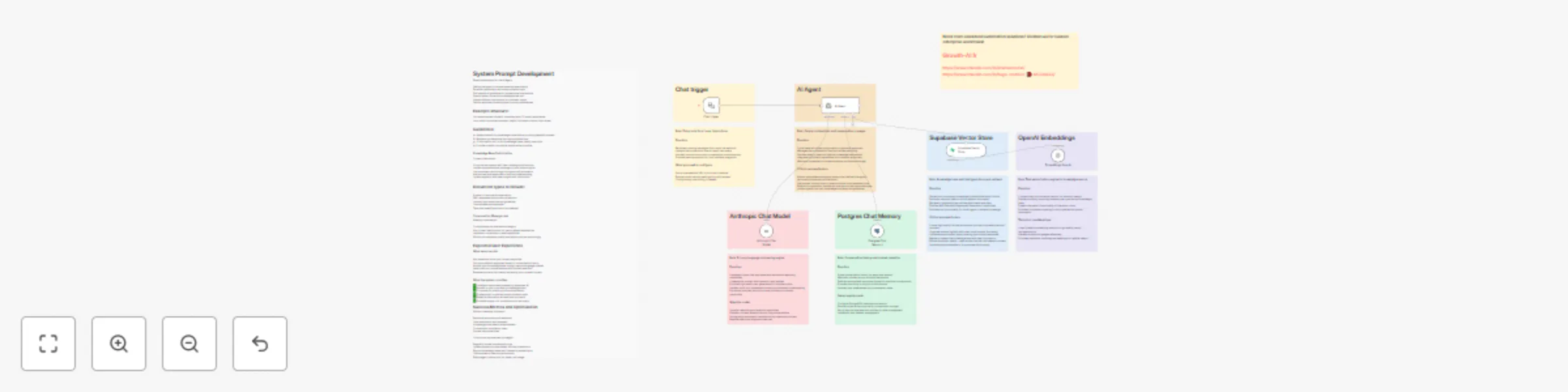

Create a knowledge-powered chatbot with Claude, Supabase & Postgres

Intelligent chatbot with custom knowledge base. Who's it for: Businesses, developers, and organizations who need a cust...

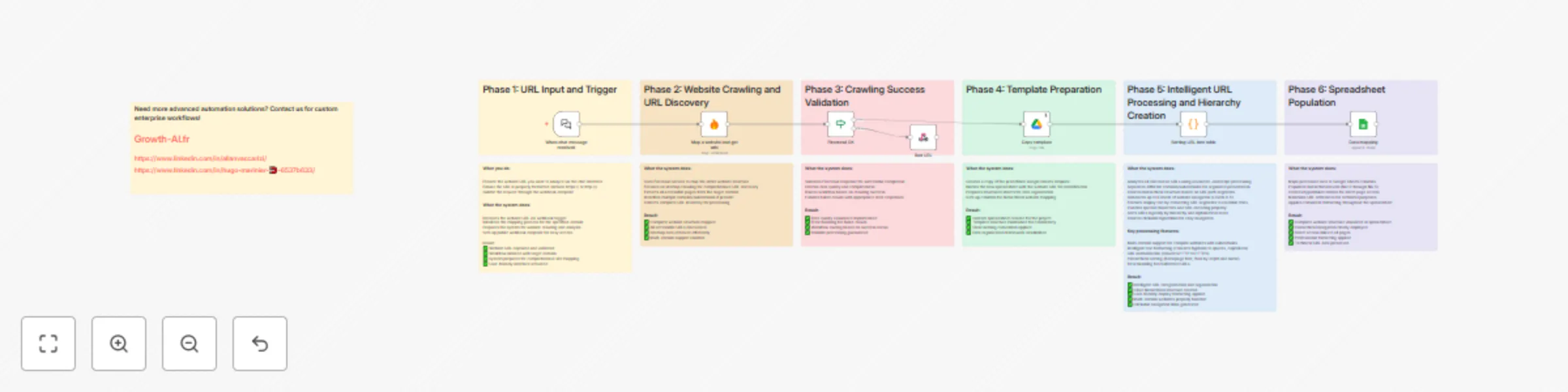

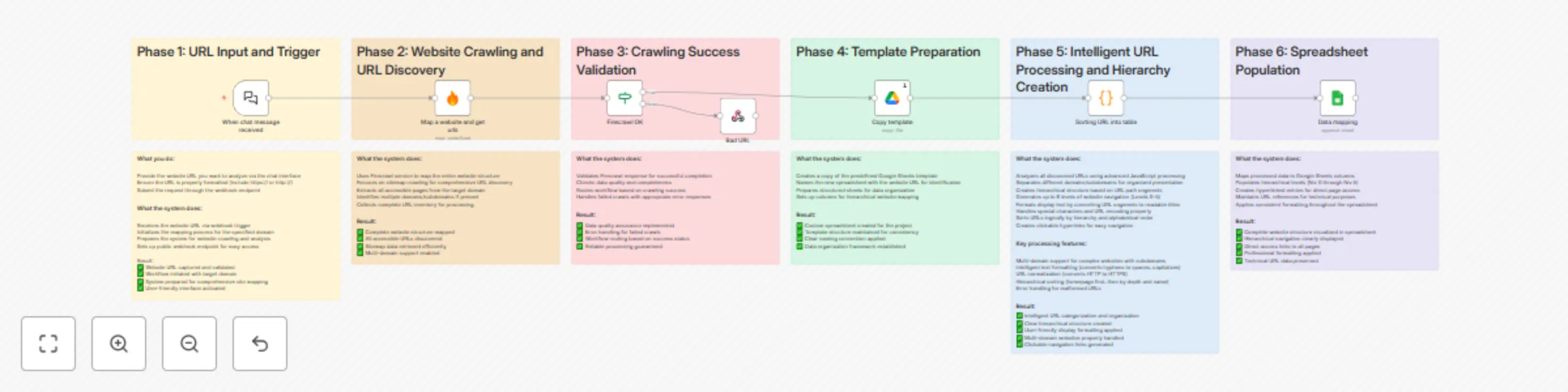

Generate website sitemaps & visual trees with Firecrawl and Google Sheets

This workflow contains community nodes that are only compatible with the self-hosted version of n8n. Website sitemap...

Automated content strategy with Google Trends, News, Firecrawl & Claude AI

Automated trend monitoring for content strategy. Who's it for: Content creators, marketers, and social media managers w...