Raphael De Carvalho Florencio

2

Workflows by Raphael De Carvalho Florencio

Free advanced

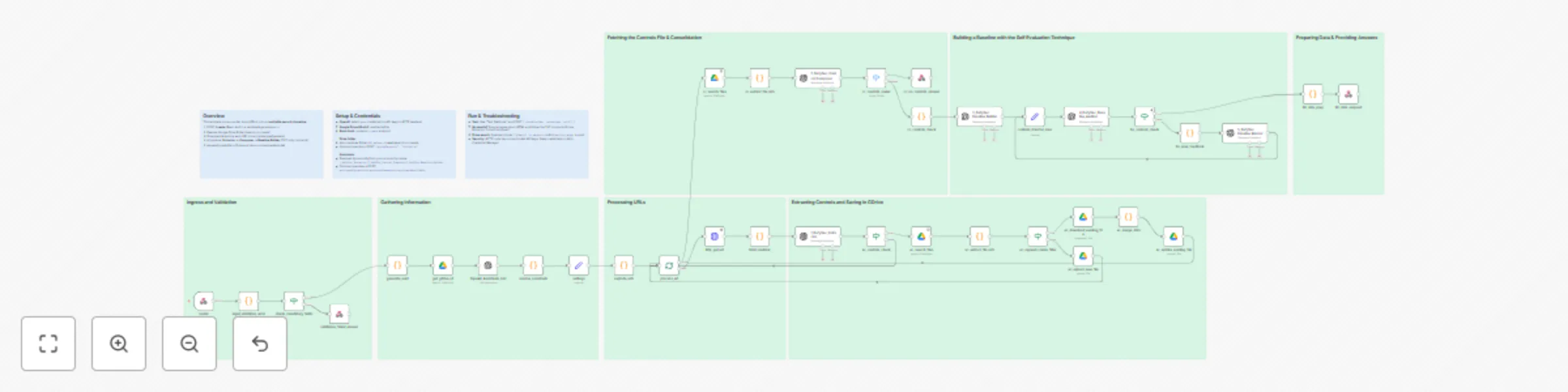

Transform cloud documentation into security baselines with OpenAI and GDrive

What this template does: Transforms provider documentation (URLs) into an auditable, enforceable multicloud security c...

Raphael De Carvalho Florencio AI Summarization

18 Aug 2025

414

0

Free advanced

AI lyrics study bot for Telegram — Translation, summary, vocabulary

What this workflow is (About): This workflow turns a Telegram bot into an AI-powered lyrics assistant. Users send a c...

Raphael De Carvalho Florencio Content Creation

9 Aug 2025

337

0