Fahmi Fahreza

Workflows by Fahmi Fahreza

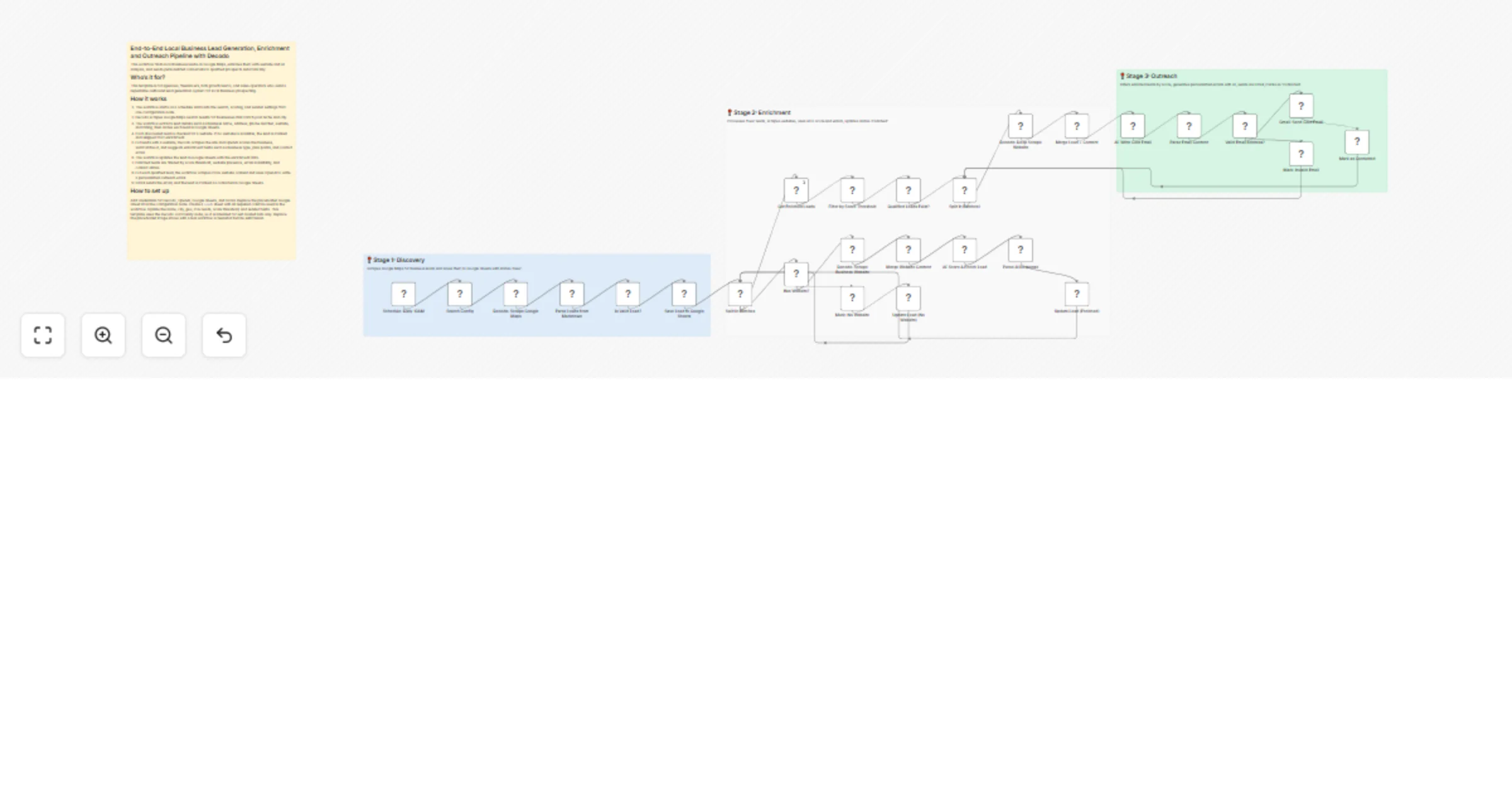

Generate and enrich local business leads with Decodo, OpenAI and Gmail

End to End Local Business Lead Generation, Enrichment and Outreach Pipeline with Decodo. This workflow finds local bus...

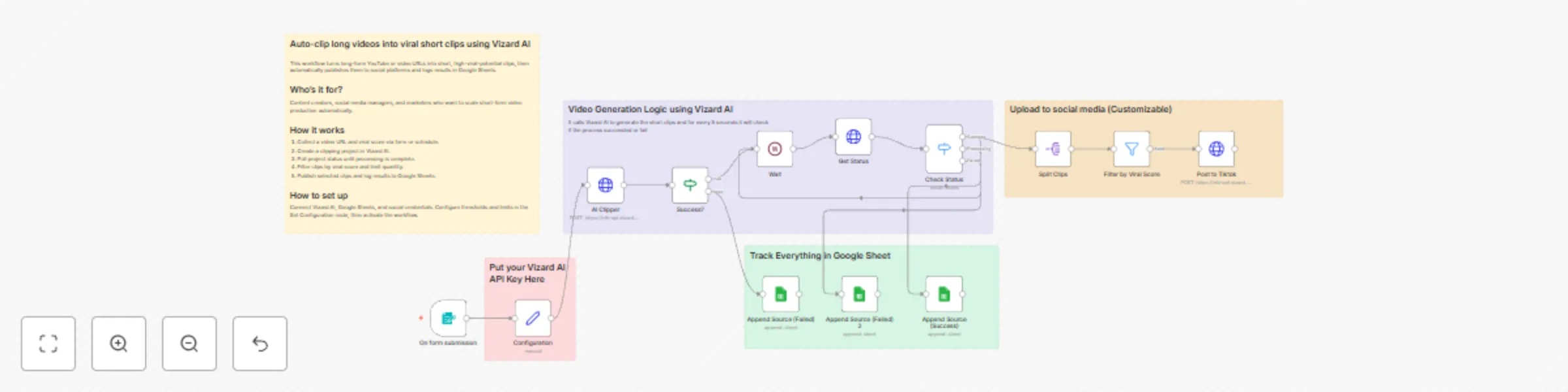

Auto-clip long videos into viral short clips with Vizard AI and social publishing

Auto clip long videos into viral short clips using Vizard AI. This workflow turns long-form YouTube or video URLs into...

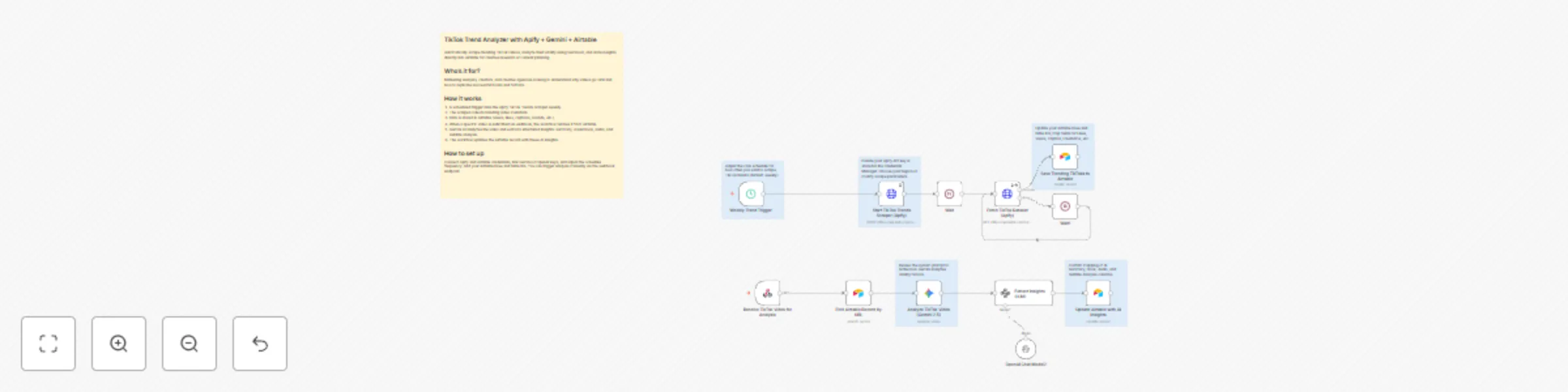

TikTok trend analyzer with Apify + Gemini + Airtable

Automatically scrape trending TikTok videos, analyze their viral...

Sync multi-bank balance data to BigQuery using Plaid

Automated Multi Bank Balance Sync to BigQuery. This workflow automatically fetches balances from multiple financial in...

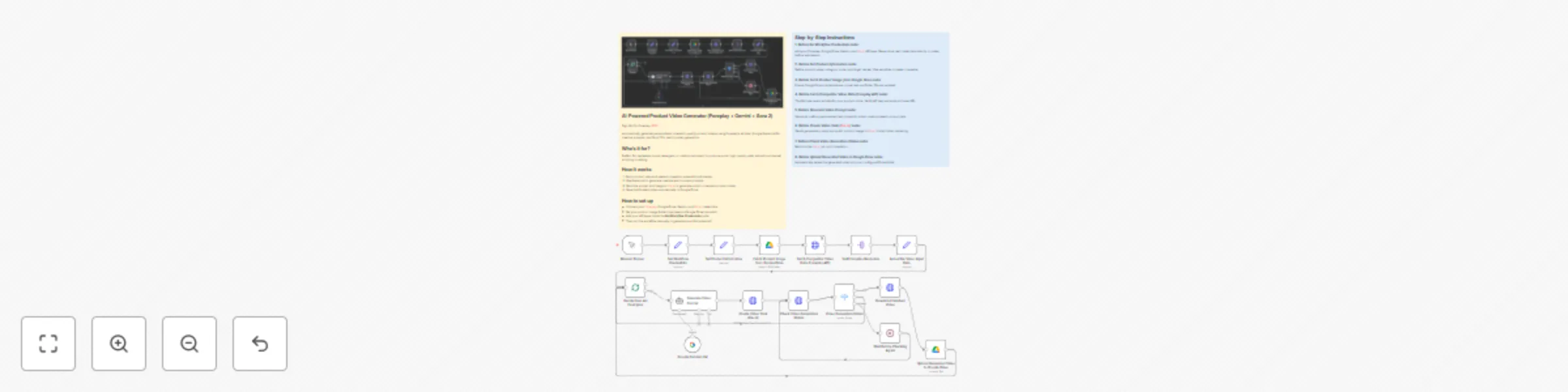

AI-powered product video generator (Foreplay + Gemini + Sora 2)

Automatically generate pers...

Analyze Trustpilot & Sitejabber sentiment with Decodo + Gemini to sheets

This te...

Weekly SEO watchlist audit to Google Sheets with Gemini and Decodo

Weekly SEO Watchlist Audit to Google Sheets (Gemini + Decodo). Automatically fetc...

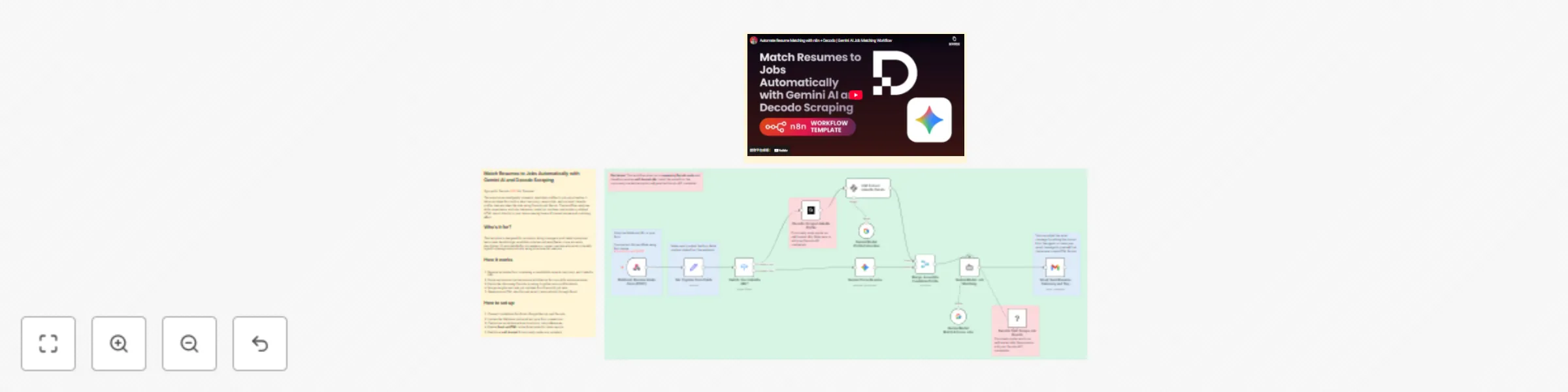

Match resumes to jobs automatically with Gemini AI and Decodo Scraping

This auto...

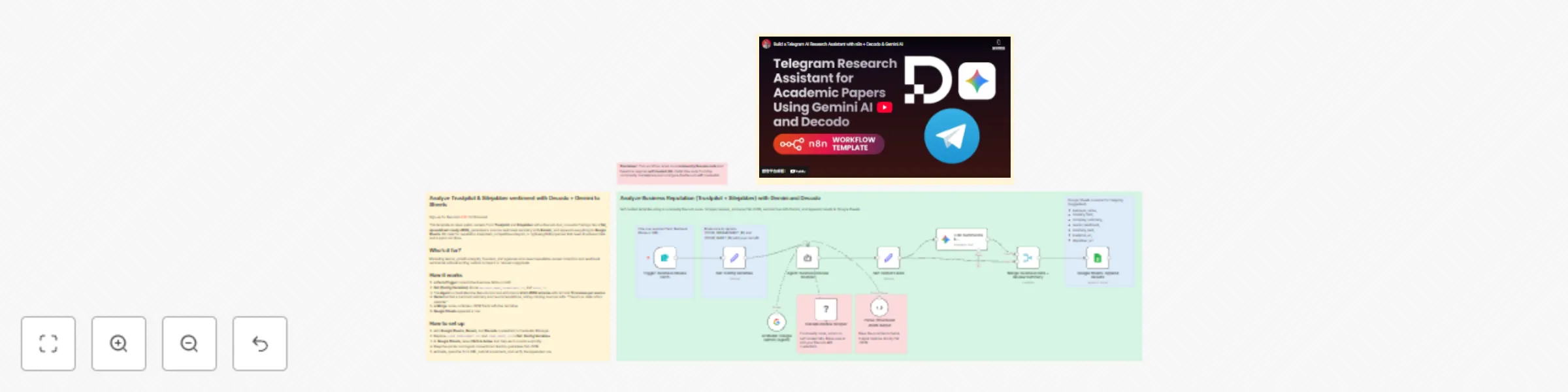

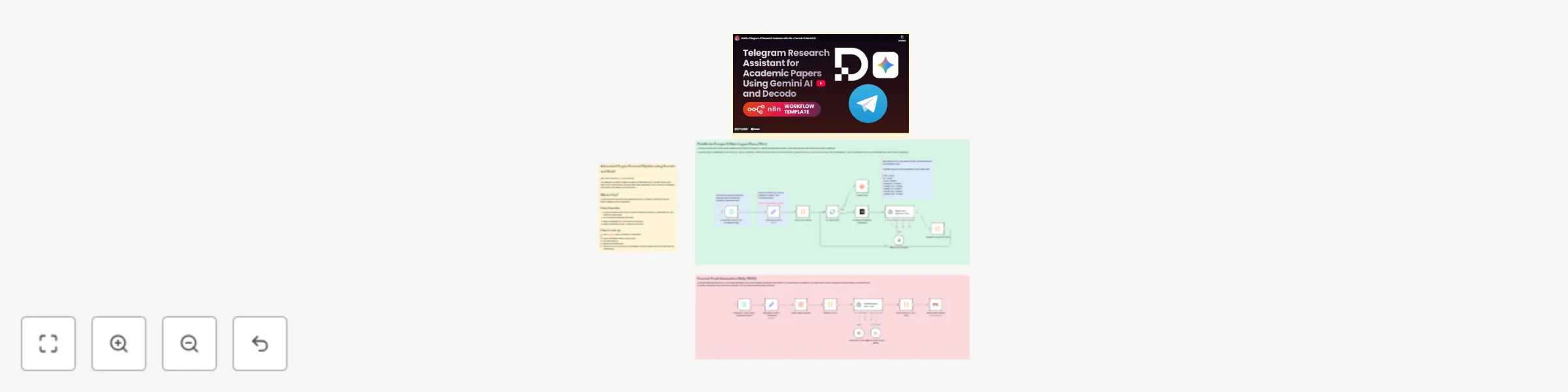

Telegram research assistant for academic papers using Gemini AI and Decodo

AI Research Assistant Using Gemini AI and Decodo. This workflow transforms your T...

Scrape, structure, and store news data using Decodo, Gemini AI and Google Sheets

Automatically scrape, structure, and log forum or news content using Decodo and...

CoinGecko crypto price forecasting pipeline with Gemini AI, Decodo, and Gmail

Automated Crypto Forecast Pipeline using Decodo and Gmail. This template scrapes...

Send Personalized B2B/B2C Welcome Emails with Jotform, GPT-4o & Perplexity AI

Send smart, personalized welcome emails to any Jotform lead. This workflow intelligently qualifies new Jotform leads a...

Automated content migration from ClickUp Docs to Airtable records

Create Airtable records from new ClickUp Doc pages. This workflow automates the process of turning content from ClickU...

Generate AI videos from text prompts with Google Veo

This n8n workflow uses the Google Gemini node to generate AI videos via the Veo model. It replaces complex manual API...

Analyze any video and generate text summaries with Google Gemini 2.5 Pro

This workflow contains community nodes that are only compatible with the self-hosted version of n8n. Analyze Any Vide...

Sync QuickBooks chart of accounts to Google BigQuery

Keep a historical, structured copy of your QuickBooks Chart of A...

Archive trending TikTok hashtags using TikTok, Airtable, and Apify

Archive Trending TikTok Hashtags to Airtable with Apify. This template uses a community node ( ). It will not work wit...

Weekly ETL pipeline: QuickBooks financial data to Google BigQuery

This template sets up a weekly ETL (Extract, Transform, Load) pipeline that pulls financial data from QuickBooks Onli...

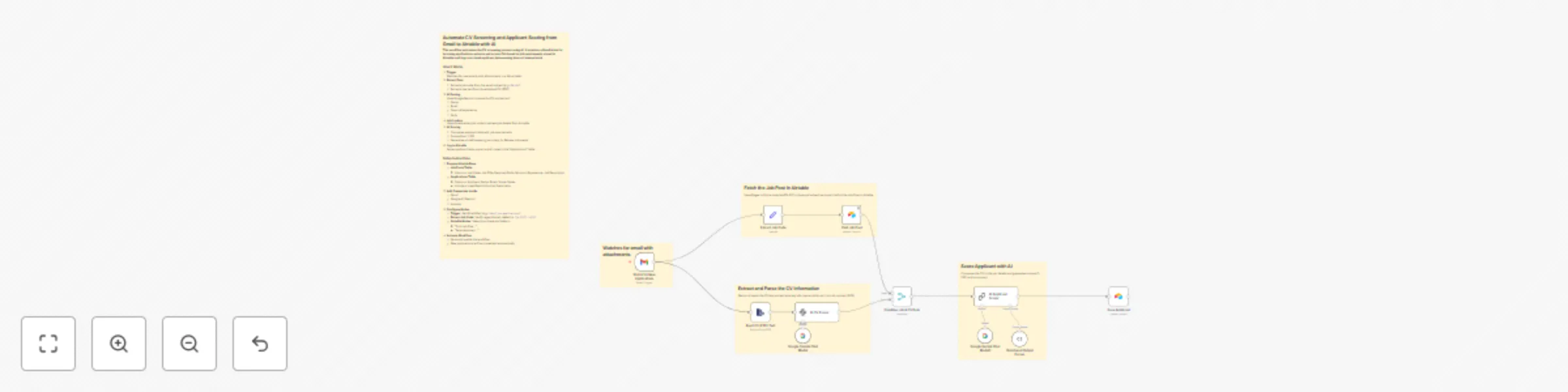

Automate CV screening and applicant scoring from Gmail to Airtable with AI

How It Works: 1. Trigger: Watches for new emails with attachments in a Gmail label. 2. Extract Data: Extracts job code f...