Edisson Garcia

2 Workflows

Workflows by Edisson Garcia

Free advanced

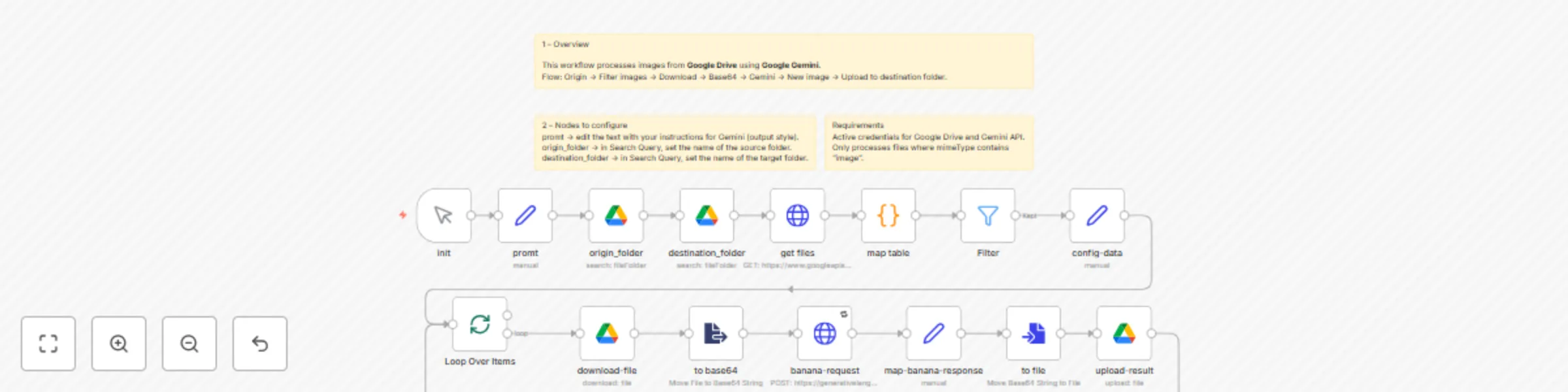

Enhance Google Drive images with Gemini 2.5 Flash AI

🚀 Google Drive Image Enhancement with Gemini nano banana. This workflow automates image enhancement by integrating Go...

Edisson Garcia Content Creation

10 Oct 2025

54

0

Free advanced

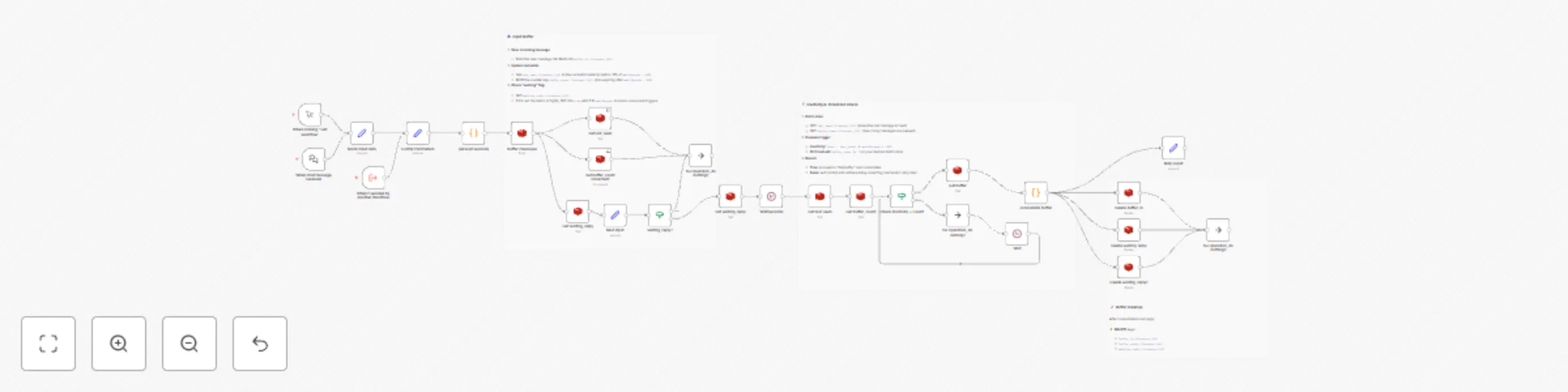

Message buffer system with Redis for efficient processing

🚀 Message Batching Buffer Workflow (n8n). This workflow implements a lightweight message batching buffer using Redis...

Edisson Garcia Support Chatbot

2 May 2025

2018

0