Chandan Singh

3 Workflows

Workflows by Chandan Singh

Free advanced

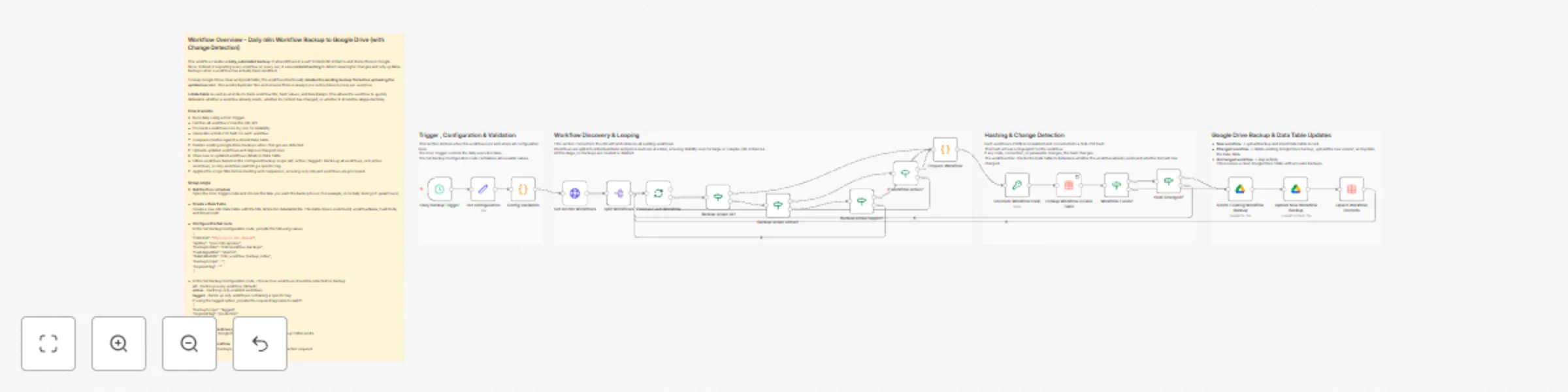

Back up self-hosted workflows to Google Drive daily with change detection

This workflow creates a daily, automated backup of all workflows in a self-hosted n8n instance and stores them in Goo...

Chandan Singh DevOps

29 Dec 2025

4

0

Free advanced

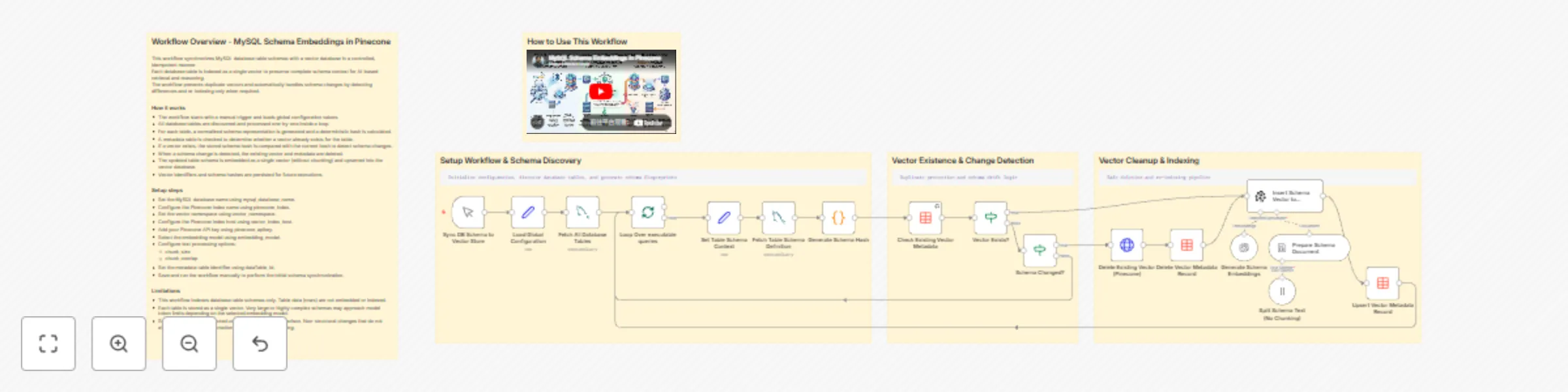

Synchronize MySQL database schemas to Pinecone with OpenAI embeddings

This workflow synchronizes MySQL database table schemas with a vector database in a controlled, idempotent manner. Ea...

Chandan Singh Document Extraction

20 Dec 2025

60

0

Free intermediate

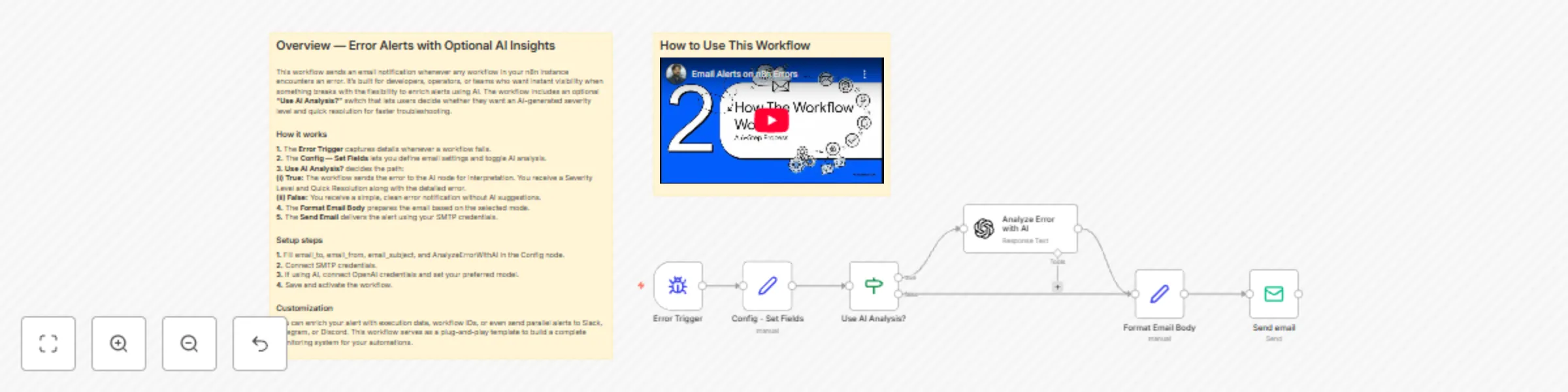

Automated error notifications with optional GPT-4o diagnostics via email

Who’s it for: This template is ideal for anyone who needs reliable, real-time visibility into failed executions in...

Chandan Singh DevOps

5 Dec 2025

368

0