Baptiste Fort

Workflows by Baptiste Fort

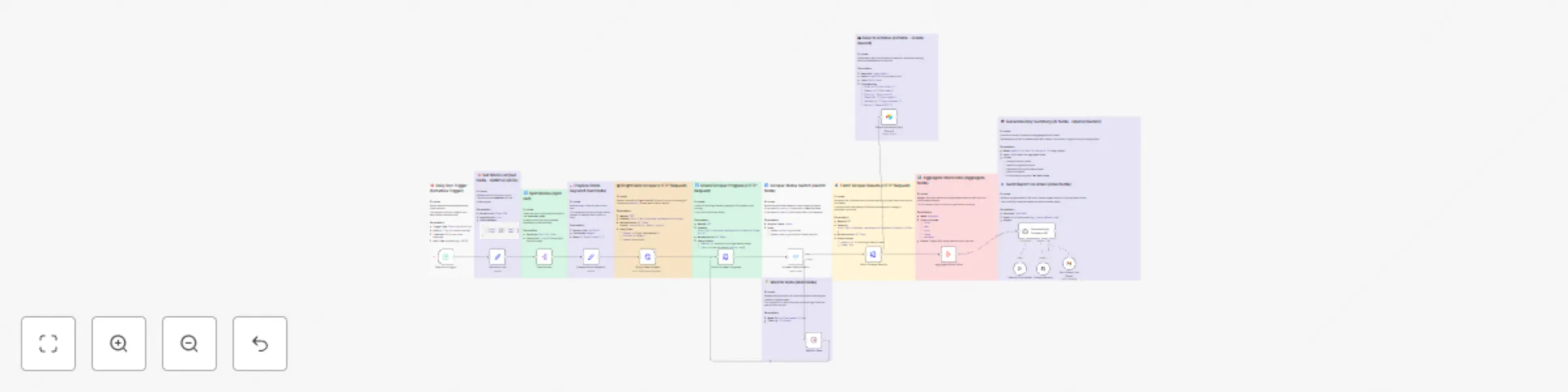

Automated daily stock market report with Bright Data, GPT-4.1, Airtable/Gmail

📘 Workflow Documentation – Stock Market Daily Digest 👋 Introduction Wake up to a clean, analyst-style stock digest...

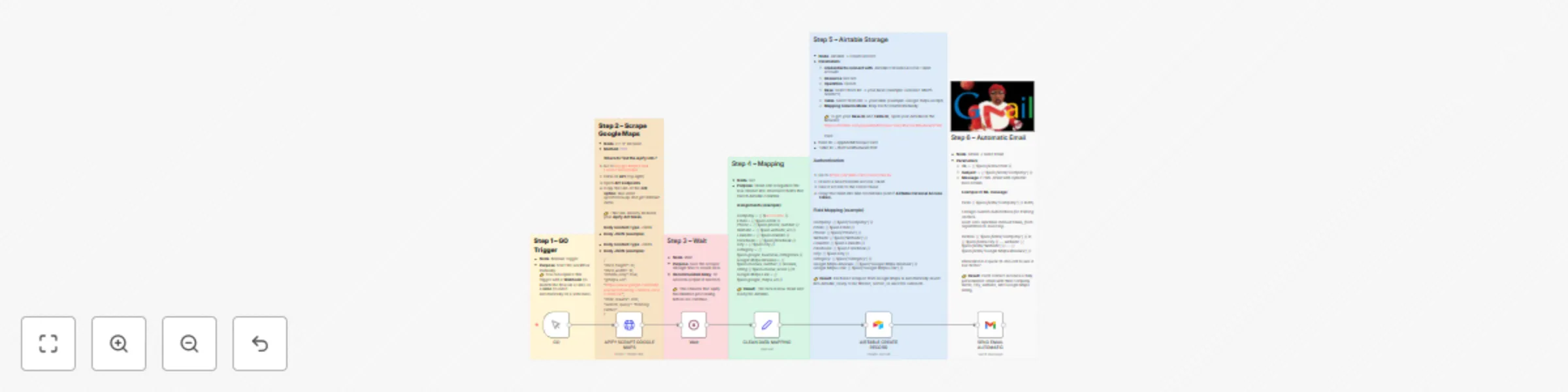

Export Google Search Console data to Airtable automatically

If you’ve ever downloaded CSV files from Google Search Co...

Automatically reply to customer emails with Airtable, Gmail, and GPT-4.1 Mini

Automatically Reply to Customer Emails with Airtable, Gmail, and OpenAI Introduction This guide walks you step by ste...

Google Maps leads (names, emails, phones...) Apify + Airtable + custom emails

Who is it for? This workflow is perfect for anyone who wants to: Automatically collect contacts from Google Maps: em...

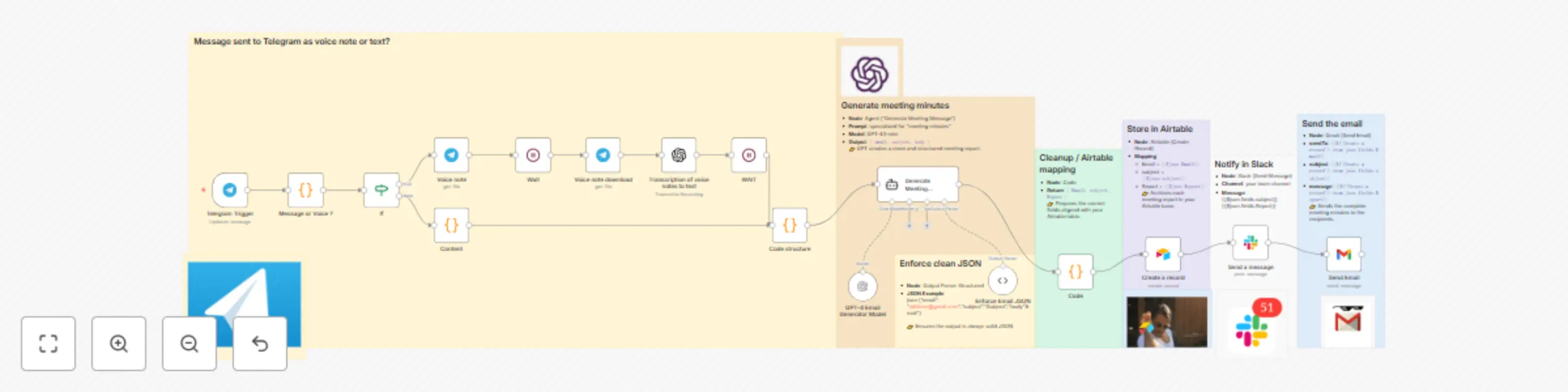

Create meeting minutes from Telegram messages with GPT-5 to Airtable Slack Gmail

How does it work? 1. Send a message or a voice note on Telegram right after the meeting. 2. n8n transcribes (if it's a vo...

Send a voice note on Telegram to generate a professional email with ChatGPT

Telegram Voice Message → Automatic Email Imagine: What if you could turn a simple Telegram voice message into a profe...

Find leads on Google Maps and reach out automatically (GPT-4 + Airtable + Gmail)

Who is it for? This workflow is perfect for marketers, sales teams, agencies, and local businesses who want to save t...

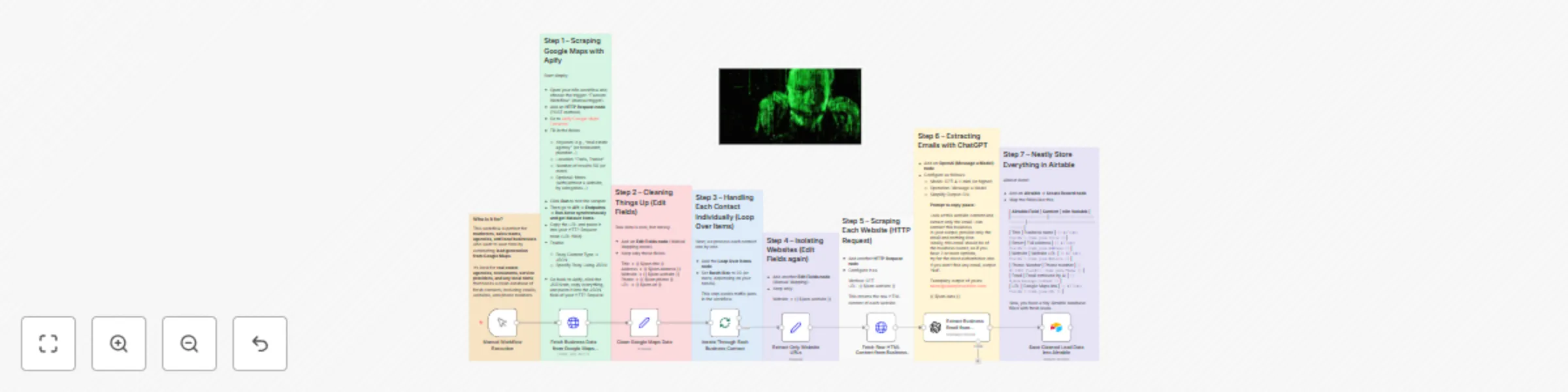

Scrape Google Maps leads (email, phone, website) using Apify + GPT + Airtable

Who is it for? This workflow is for marketers, sales teams, and local businesses who want to quickly collect leads (b...

Daily task reminder system: Airtable to Slack automated notifications

Still reminding people about their tasks manually every morning? Let’s be honest — who wants to start the day chasing...

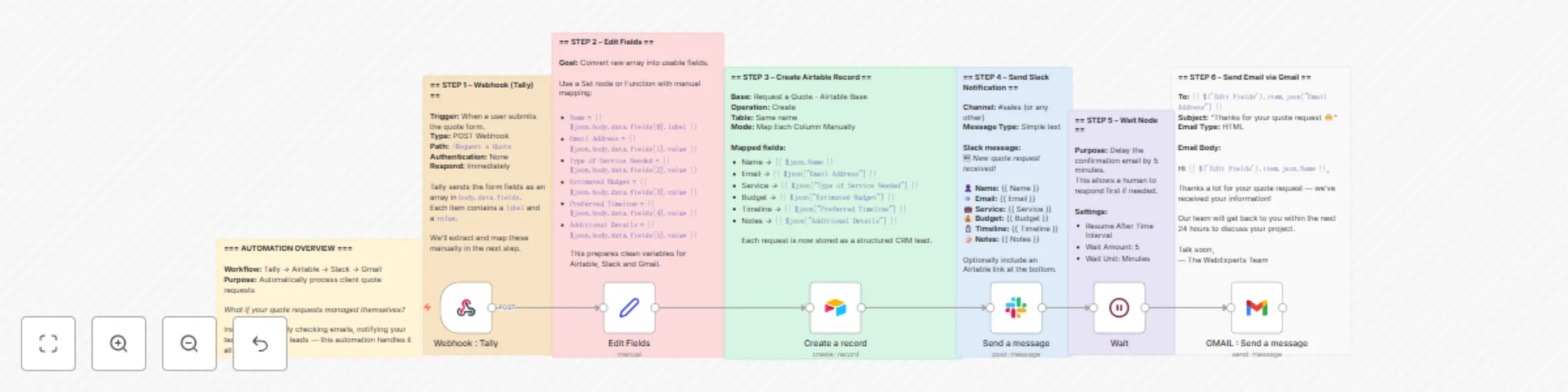

Automate quote request processing with Tally, Airtable, Slack, and Gmail

What if your quote requests managed themselves? Every quote request is a potential deal — but only if it's handled qu...

Automate a Tally form: store with Airtable, notify via Slack

🎯 Workflow Goal Still manually checking form responses in your inbox? What if every submission landed neatly in Airt...

Centralize your forms and reply automatically with Tally + Airtable + Gmail

Still manually copy-pasting your Tally form responses? What if every submission went straight into Airtable, and the...