Artur

Workflows by Artur

Connect Pipedrive deal outcomes to GA4 & Google Ads via Measurement Protocol

Who’s it for Problem: Your ads and GA4 often optimize to shallow web events (form fills), while the real value sits i...

Facebook / Meta ads performance monitoring with Slack alerts (CTR, CPC, ROAS)

Who’s it for: This workflow is for marketing teams, performance marketers, and media buyers running Facebook (Meta) Ad...

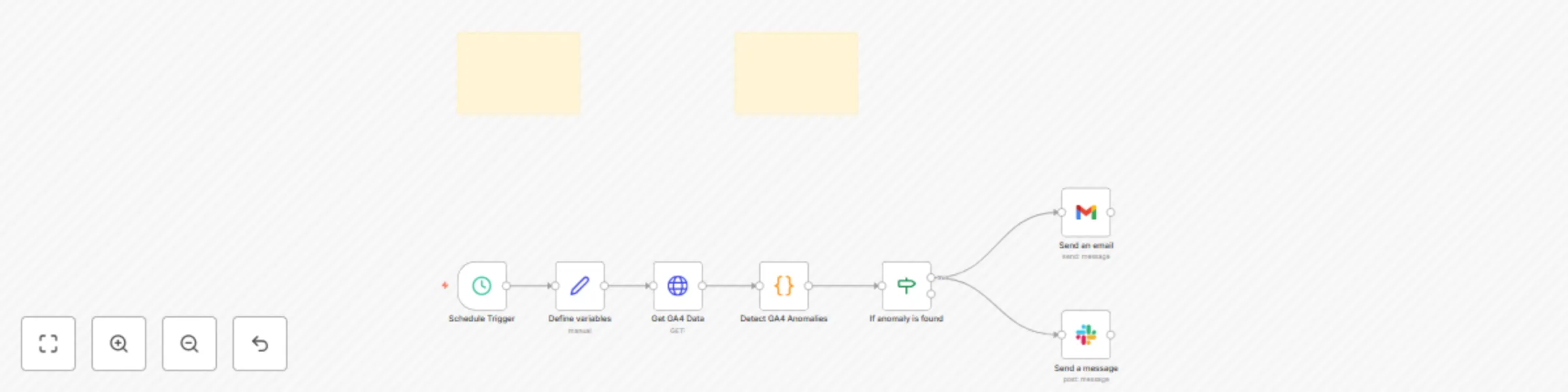

GA4 anomaly detection with automated Slack & email alerts

Who’s it for: Teams that monitor traffic, signups, or conversions in Google Analytics 4 and want automatic Slack/email...

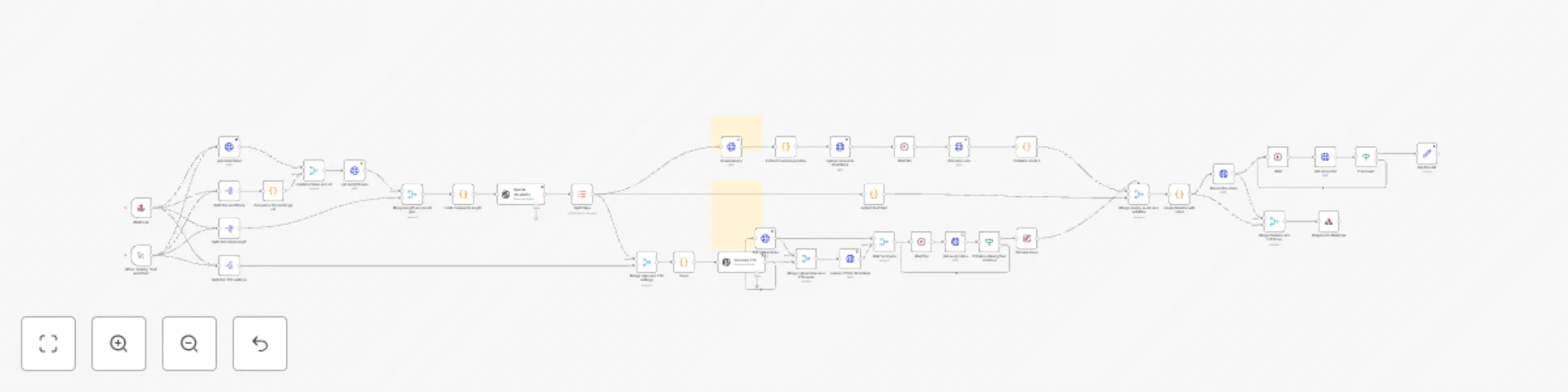

Convert Reddit threads into short vertical videos with AI

Who is this for? This workflow is ideal for: Content creato...

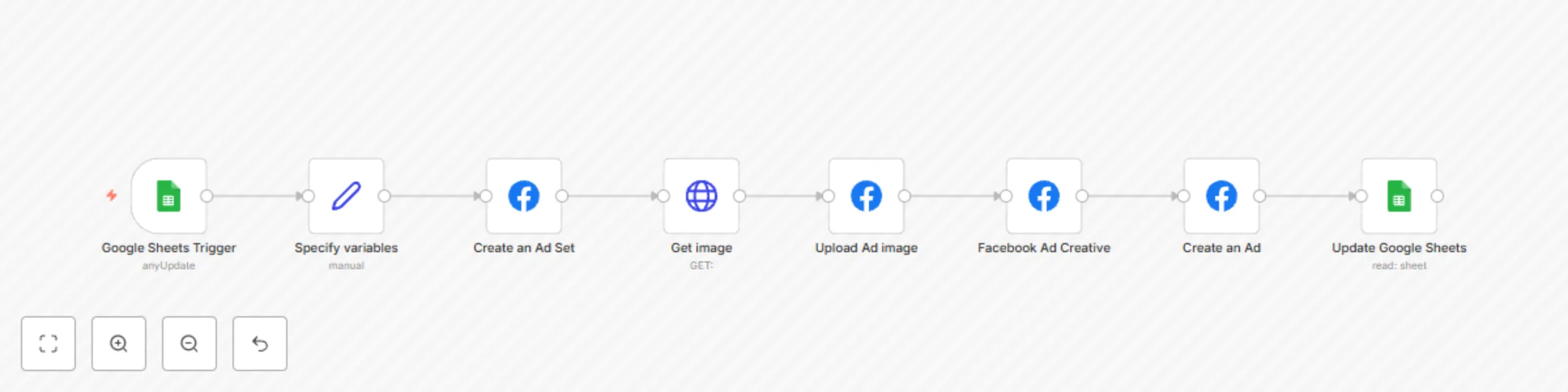

Automatically create Facebook ads from Google Sheets

Who is this for? This template is designed for Marketing Managers, Performance Marketers, and Ad Ops professionals...

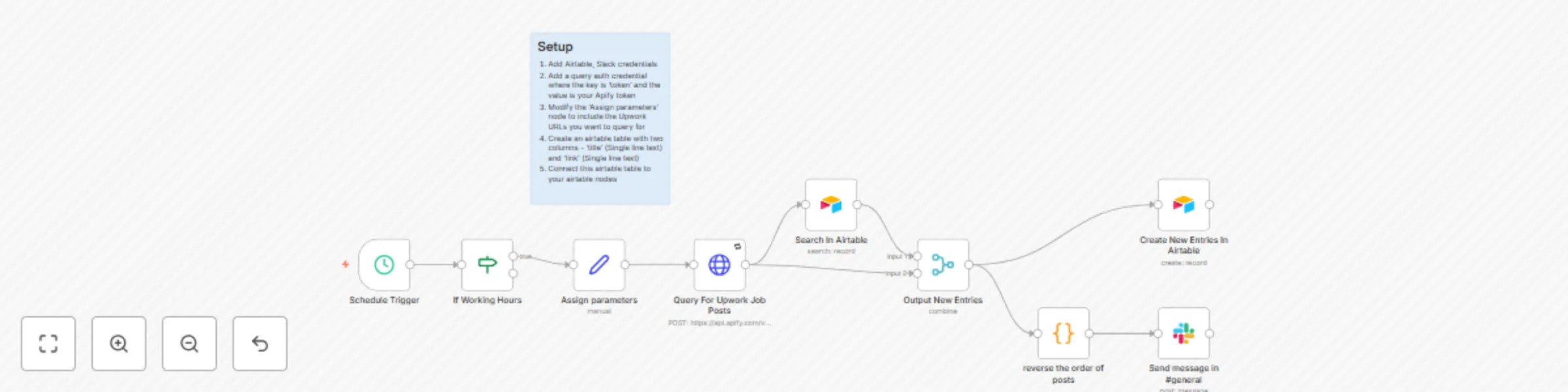

Automated Upwork job alerts with Airtable & Slack

This automated workflow fetches Upwork job postings using Apify, removes duplicate job listings via Airtable, and s...

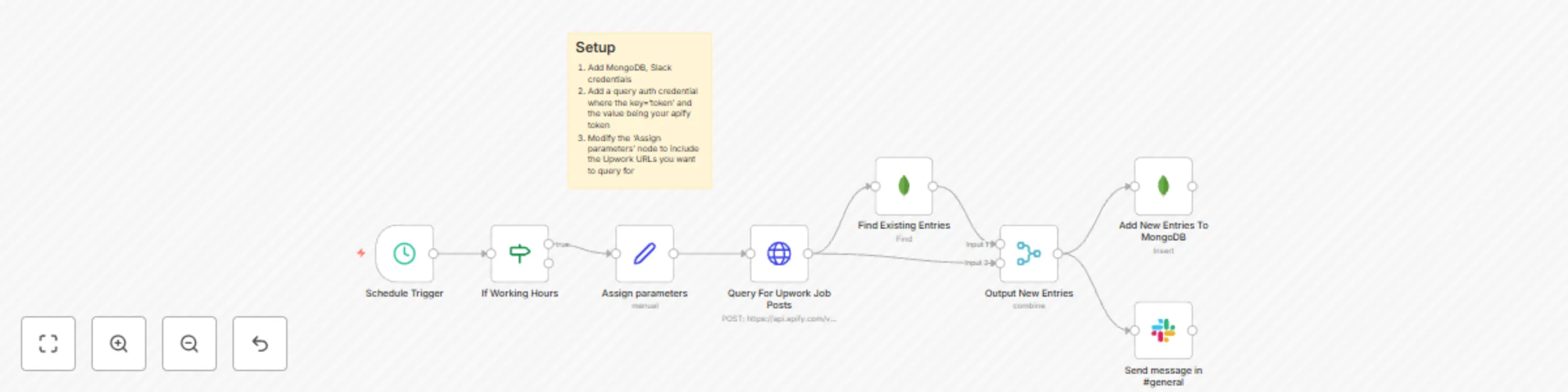

Automated Upwork job alerts with MongoDB & Slack

This automated workflow fetches Upwork job postings using Apify, removes duplicate job listings via MongoDB, and se...

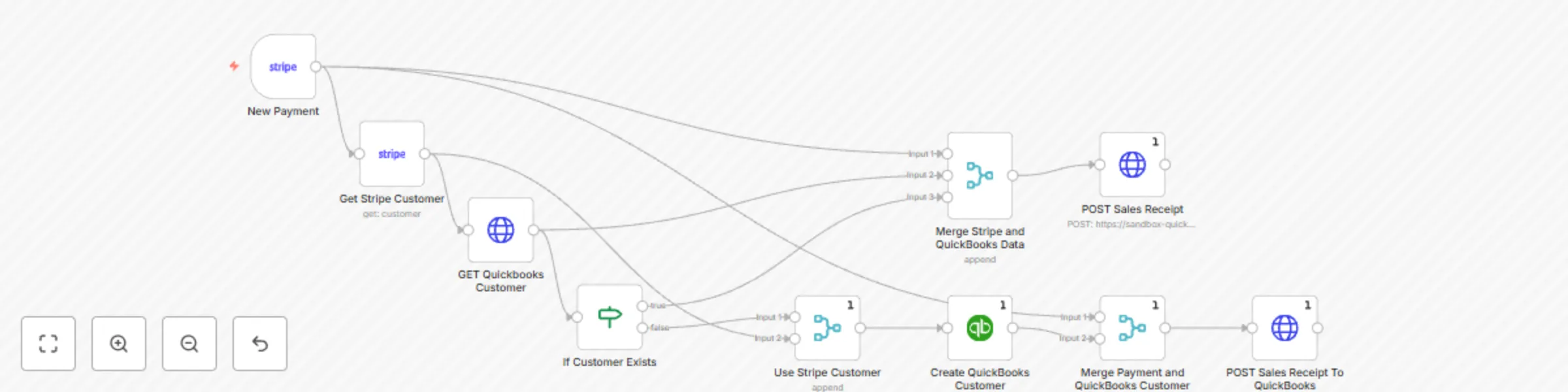

Create QuickBooks Online customers with sales receipts for new Stripe payments

Streamline your accounting by automatically creating QuickBooks Online customers and sales receipts whenever a succes...

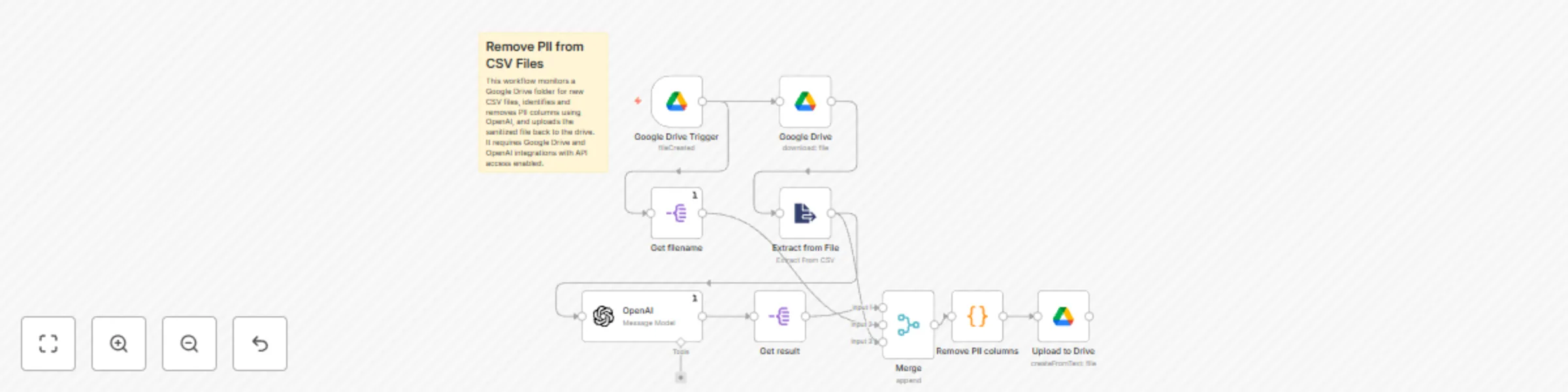

Remove personally identifiable information (PII) from CSV files with OpenAI

What this workflow does Monitors Google Drive: The workflow triggers whenever a new CSV file is uploaded. Uses AI to...