
Piotr Sikora

Workflows by Piotr Sikora (3)

Free advanced

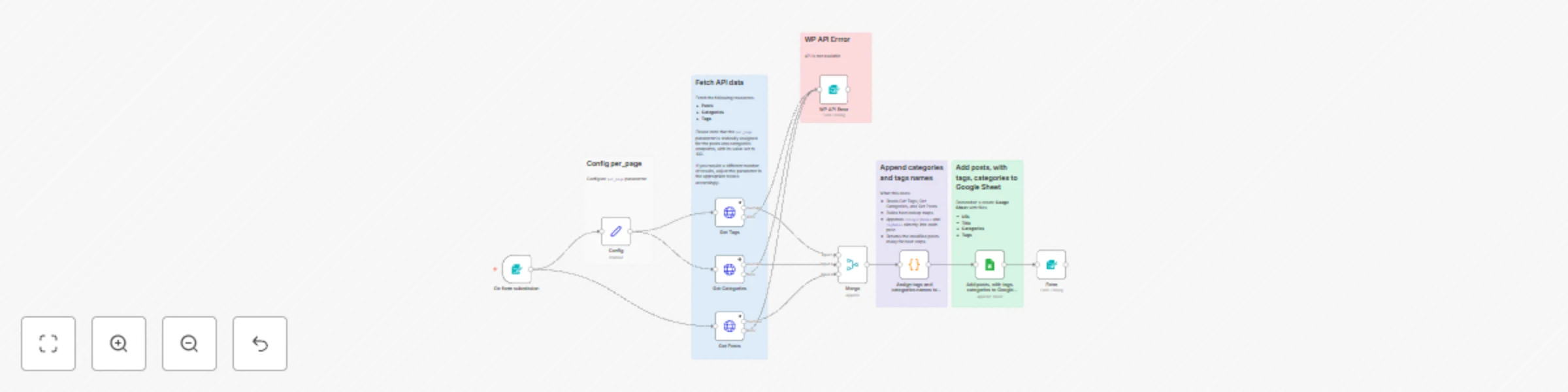

Export WordPress posts with categories and tags to Google Sheets for SEO audits

Who’s it for: This workflow is perfect for content managers, SEO specialists, and website owners who want to easily a...


Piotr Sikora · Market Research

22 Oct 2025

83

0

Free advanced

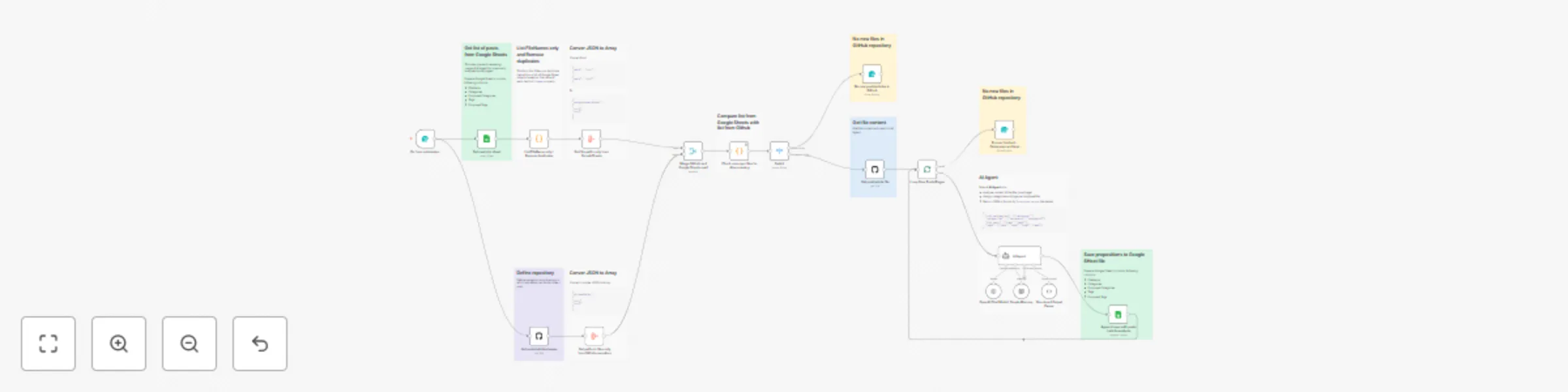

Auto-categorize blog posts with OpenAI GPT-4, GitHub, and Google Sheets for Astro/Next.js

Automatically Assign Categories and Tags to Blog Posts with AI: This workflow streamlines your content organization pr...


Piotr Sikora · Content Creation

21 Oct 2025

193

0

Free intermediate

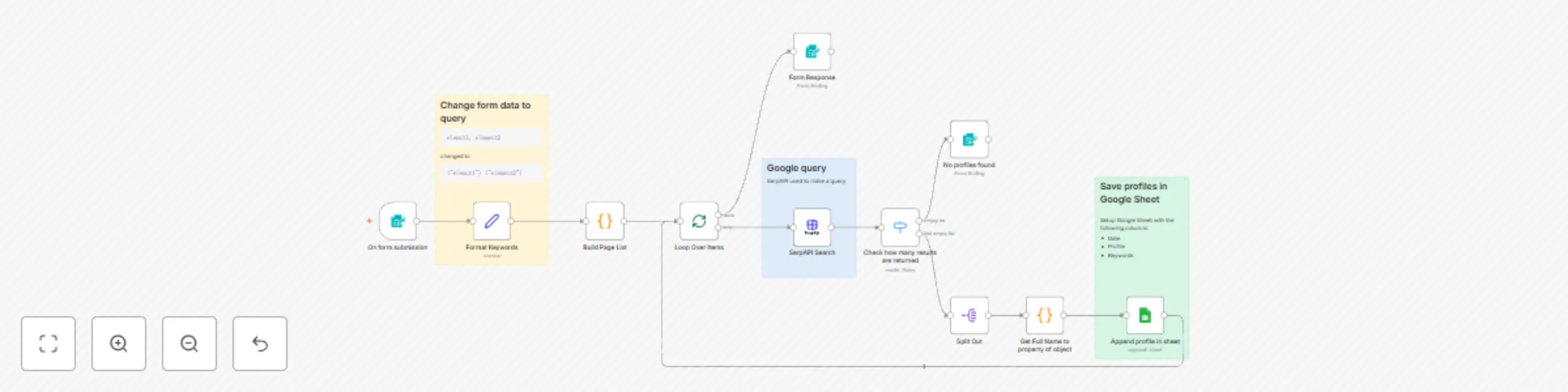

Collect LinkedIn profiles with SerpAPI Google Search and Sheets

[LI] – Search Profiles

> ⚠️ Self-hosted disclaimer: This workflow uses the SerpAPI community node, which is...


Piotr Sikora · Lead Generation

1 Oct 2025

163

0