Alex Huy

4 Workflows

Workflows by Alex Huy

Free advanced

Create structured Notion workspaces from notes & voice using Gemini & GPT

AI Assistant Workflow: Create Notion Workspaces from Notes & Voice Records. 👤 Who is this for? This workflow is desig...

Alex Huy Multimodal AI

28 Aug 2025

85

0

Free intermediate

Daily tech news curation with RSS, GPT-4o-Mini, and Gmail delivery

How it works: This workflow automatically curates and sends a daily AI/Tech news digest by aggregating articles from p...

Alex Huy Multimodal AI

26 Aug 2025

888

0

Free advanced

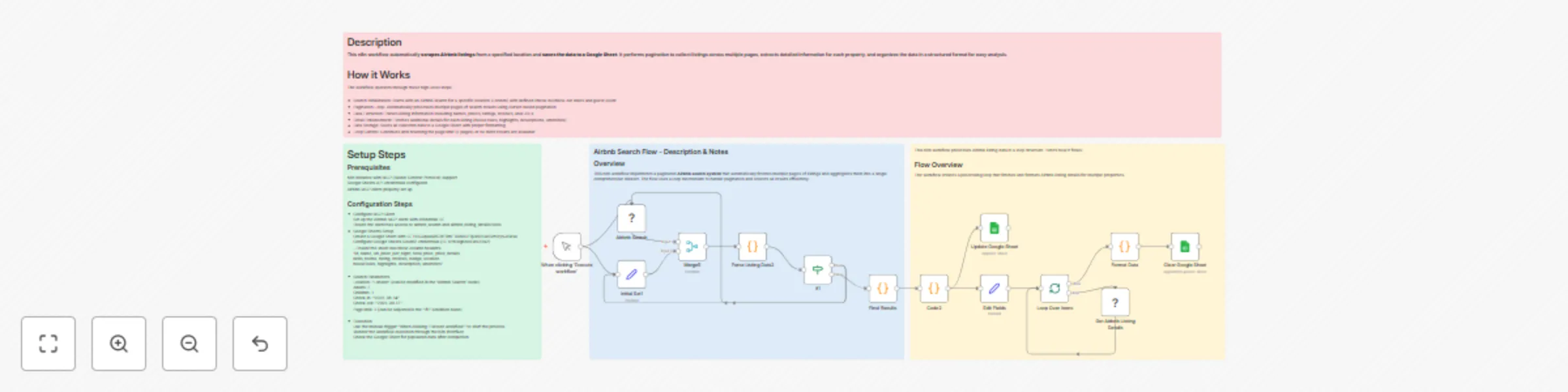

Scrape Airbnb listings with pagination & store in Google Sheets

This workflow contains community nodes that are only compatible with the self-hosted version of n8n. Description: This...

Alex Huy Market Research

14 Jul 2025

1162

0

Free advanced

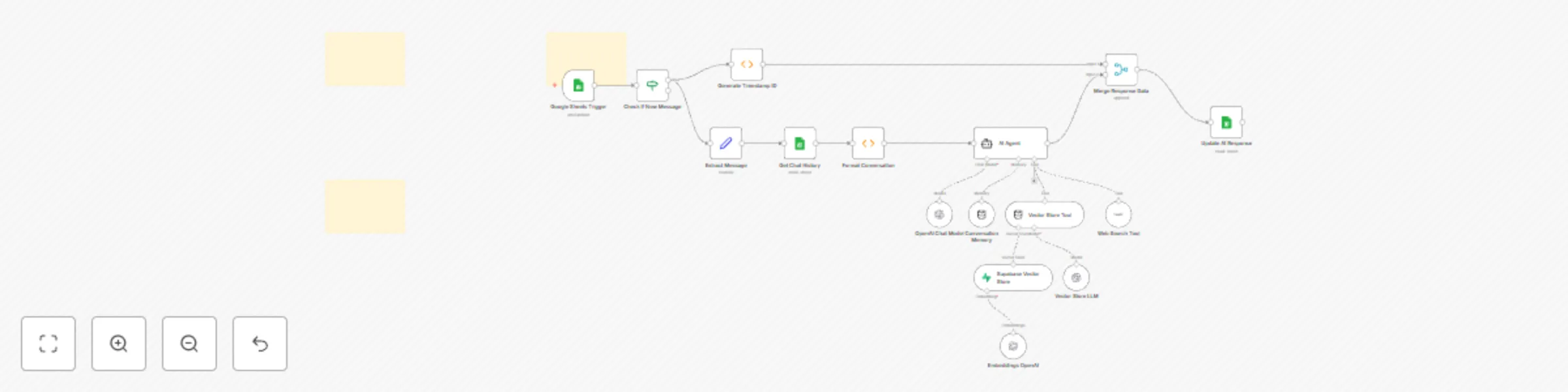

AI-powered knowledge assistant using Google Sheets, OpenAI, and Supabase Vector Search

Description: An intelligent conversational AI system that provides contextual responses by combining chat history, vec...

Alex Huy Internal Wiki

29 May 2025

528

0