Adam Bertram

3 Workflows

Workflows by Adam Bertram

Free advanced

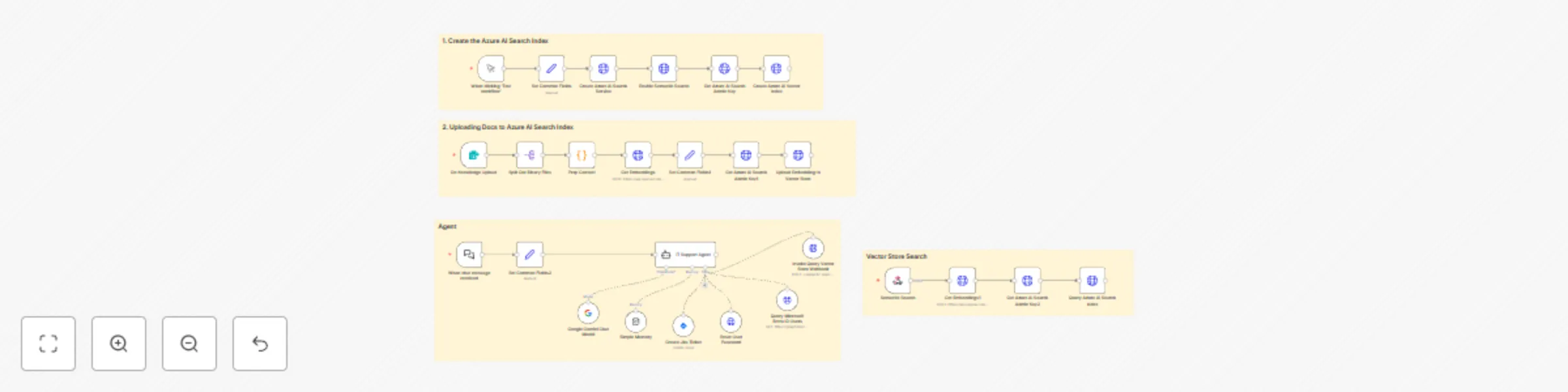

Build an AI IT support agent with Azure Search, Entra ID & Jira

An intelligent IT support agent that uses Azure AI Search for knowledge retrieval, Microsoft Entra ID integration for...

Adam Bertram Support Chatbot

1 Jun 2025

1846

0

Free intermediate

Generate Azure VM timeline reports with Google Gemini AI chat assistant

An AI-powered chat assistant that analyzes Azure virtual machine activity and generates detailed timeline reports sho...

Adam Bertram DevOps

30 May 2025

652

0

Free advanced

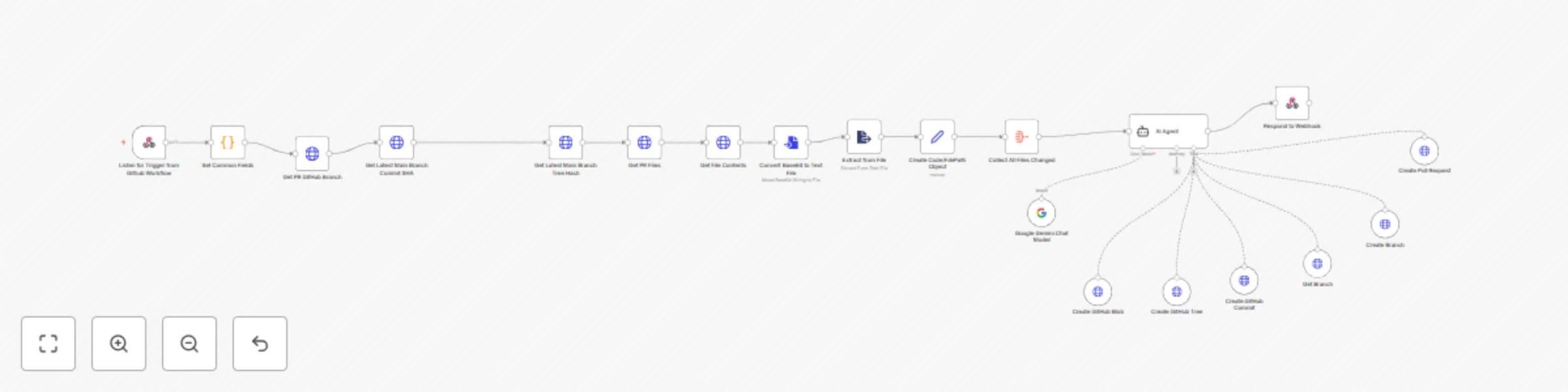

Automate GitHub PR linting with Google Gemini AI and auto-fix PRs

LintGuardian: Automated PR Linting with n8n & AI. What It Does: LintGuardian is an n8n workflow template that automates...

Adam Bertram DevOps

15 May 2025

371

0