
Abdullahi Ahmed

3 workflows

Workflows by Abdullahi Ahmed

Free · Advanced

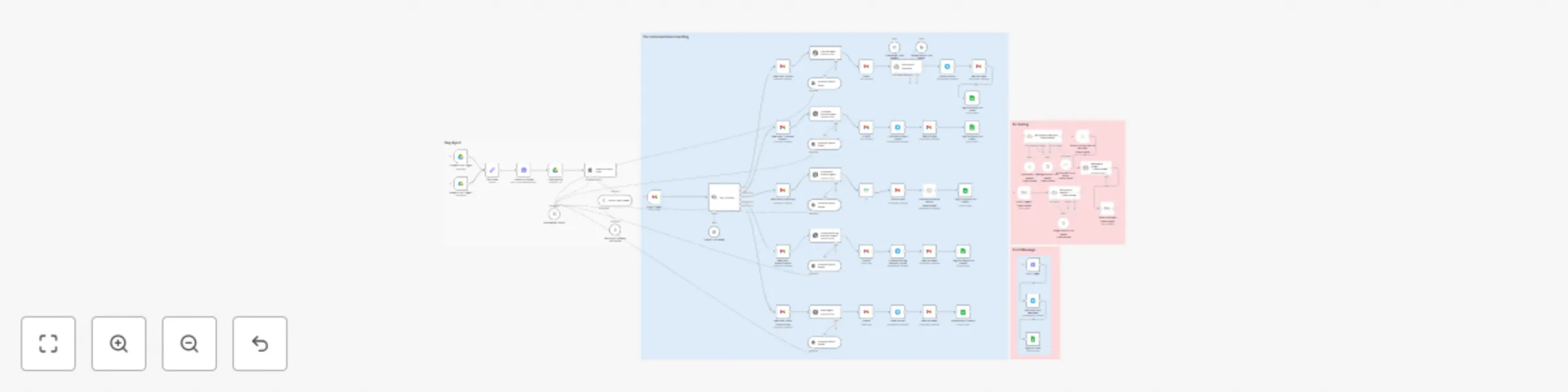

AI-powered email triage & auto-response system with OpenAI agents & Gmail

AI Email Dispatcher: Classify, Process, and Route Emails with Multiple Agents. This n8n template automatically classif...


Abdullahi Ahmed · Ticket Management

1 Oct 2025

556

0

Free · Advanced

Build a document QA system with Google Drive, Pinecone, and OpenAI RAG

Title: RAG AI Agent for Documents in Google Drive → Pinecone → OpenAI Chat (n8n workflow). Short Description: This n8n w...


Abdullahi Ahmed · Internal Wiki

28 Sep 2025

325

0

Free · Advanced

Client onboarding with form

AI-Powered Lead Triage and Response System 🤖 This advanced workflow creates a customized, embedded lead capture form...


Abdullahi Ahmed · Lead Nurturing

27 Sep 2025

564

0