Aadarsh Jain

2 Workflows

Workflows by Aadarsh Jain

Free intermediate

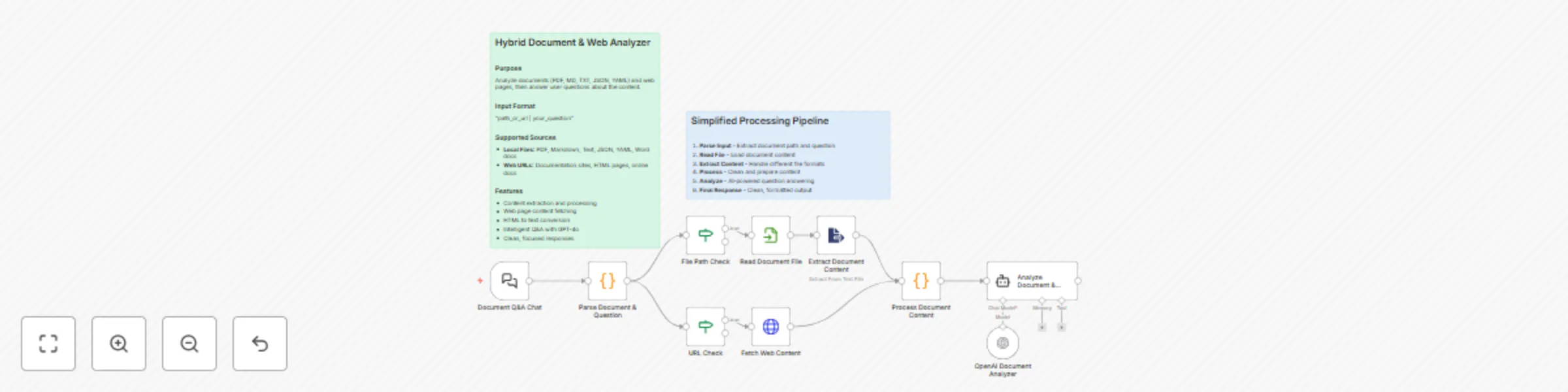

Analyze documents & web content with GPT-4o Q&A assistant

Document Analyzer and Q&A Workflow: AI-powered document and web page analysis using n8n and a GPT model. Ask questions a...

Aadarsh Jain Document Extraction

15 Oct 2025

360

0

Free intermediate

Kubernetes management with natural language using GPT-4o and MCP tools

Who is this for? This workflow is designed for DevOps engineers, platform engineers, and Kubernetes administrators wh...

Aadarsh Jain DevOps

11 Aug 2025

728

0